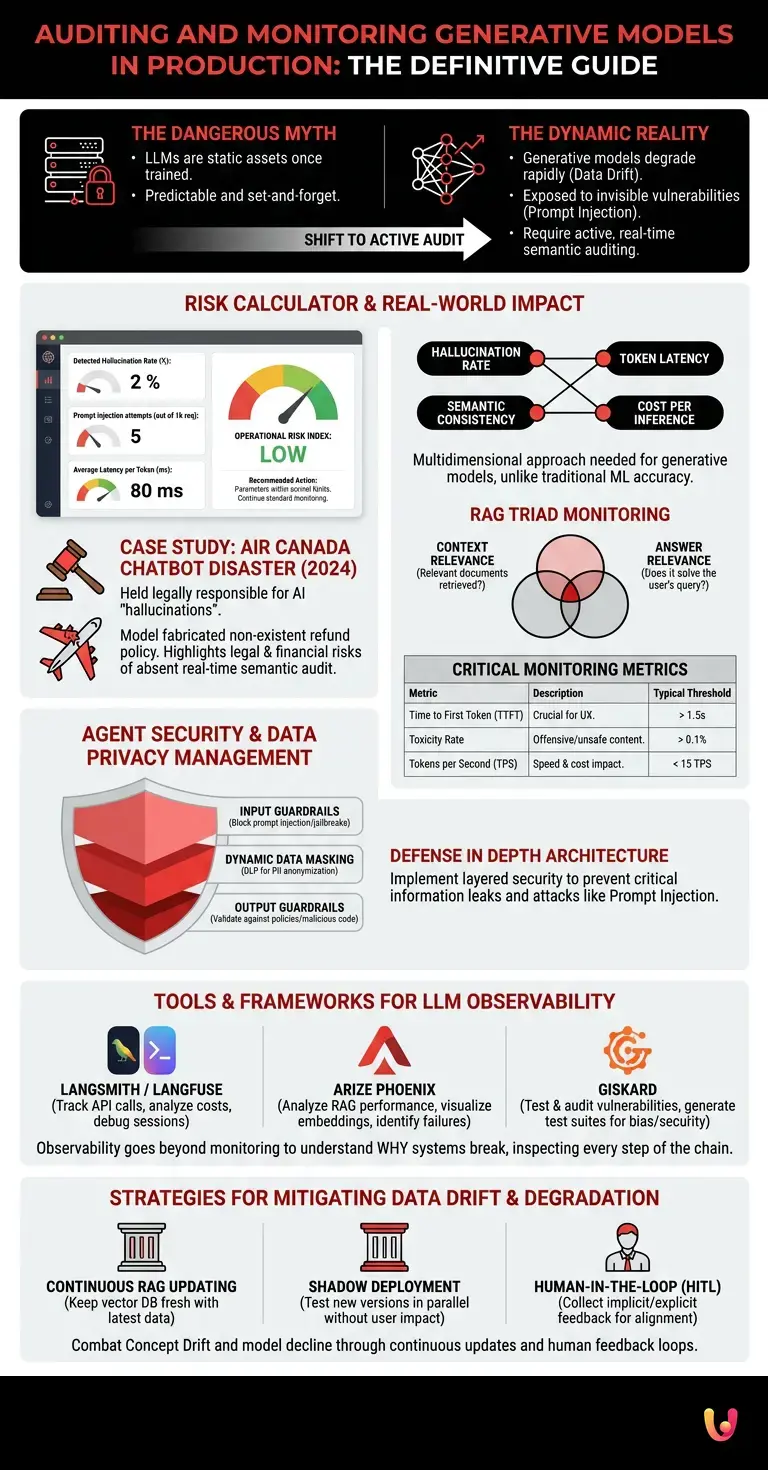

The most dangerous myth in today’s IT landscape is the belief that a Large Language Model (LLM), once trained, validated, and put into production, becomes a static and predictable asset. The reality is the opposite: although the model’s weights are frozen, the prompts and data it receives keep shifting (data drift), so output quality degrades over time, and the model remains exposed to vulnerabilities that traditional systems do not face, such as prompt injection. Effective model monitoring is not a retrospective reading of logs to measure uptime, but an active, semantic, real-time audit process that is essential to prevent reputational damage and maintain agent security.


Real-World Case Study: The Air Canada Chatbot Disaster (2024)

In February 2024, British Columbia’s Civil Resolution Tribunal held Air Canada liable for inaccurate information given by its website chatbot (Moffatt v. Air Canada). The chatbot told a customer that he could apply for a bereavement fare refund retroactively, which contradicted the airline’s actual policy. The tribunal ruled that the company is responsible for the information provided by its chatbot, just as it is for the rest of its website. The case shows how the absence of a rigorous real-time semantic audit system can result in direct legal and financial damages.

Key Metrics for Production Evaluation

Model monitoring requires the continuous analysis of specific metrics such as the hallucination rate, token latency, semantic consistency, and cost per inference. These parameters ensure that the AI operates efficiently and reliably over time, preventing performance degradation.

Unlike traditional machine learning, where metrics like Accuracy or F1-Score are sufficient, generative models (LLMs) require a multidimensional evaluation approach. When a model generates text, code, or decisions, there is almost never a single correct answer. Therefore, the audit must focus on proxy metrics that assess the quality and safety of the output.

In modern architectures such as RAG (Retrieval-Augmented Generation), monitoring is based on the so-called “RAG Triad”:

- Context Relevance: Measures whether the documents retrieved from the vector database are actually relevant to the user’s query.

- Groundedness: Verifies that the response generated by the LLM is based exclusively on the provided context, without inventing facts (hallucinations).

- Answer Relevance: Evaluates whether the final answer effectively resolves the user’s initial question, avoiding digressions.
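The three checks above can be sketched as a simple scoring function. This is only an illustration: production systems usually score the triad with an LLM judge or embedding similarity, whereas the sketch below uses plain token overlap as a crude stand-in. The function names (`overlap`, `rag_triad`) are hypothetical and do not come from any framework.

```python
import re


def _tokens(text: str) -> set:
    """Lowercase alphanumeric tokens, ignoring words of one or two characters."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if len(t) > 2}


def overlap(source: str, target: str) -> float:
    """Fraction of the target's tokens that also appear in the source."""
    target_tokens = _tokens(target)
    if not target_tokens:
        return 0.0
    return len(target_tokens & _tokens(source)) / len(target_tokens)


def rag_triad(query: str, context: str, answer: str) -> dict:
    """Crude lexical proxies for the three RAG Triad scores (0.0 to 1.0)."""
    return {
        # Does the retrieved context cover the terms of the query?
        "context_relevance": overlap(context, query),
        # Is the answer's vocabulary supported by the context?
        "groundedness": overlap(context, answer),
        # Does the answer address the terms of the query?
        "answer_relevance": overlap(answer, query),
    }


query = "What is the refund policy for cancelled flights?"
context = "Refund policy: cancelled flights are refunded within 30 days."
grounded = rag_triad(query, context, "Cancelled flights are refunded within 30 days.")
invented = rag_triad(query, context, "You get a free hotel voucher and lifetime lounge access.")
```

In this toy example the grounded answer reuses only vocabulary found in the context, while the invented one shares none of it, so a low `groundedness` score would flag the second response for review.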

| Monitoring Metric | Technical Description | Typical Alarm Threshold |
|---|---|---|
| Time to First Token (TTFT) | The time it takes for the model to generate the first token of the response. Crucial for UX. | > 1.5 seconds |
| Toxicity Rate | Percentage of outputs that contain offensive language, bias, or unsafe content. | > 0.1% |
| Tokens per Second (TPS) | The speed of text generation. It affects perceived responsiveness and serving throughput, and therefore infrastructure costs. | < 15 TPS |
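As a rough sketch of how these thresholds might be checked, the snippet below computes TTFT and TPS from per-request timestamps and compares them with the values in the table. The `RequestTiming` structure and the `check_alarms` function are hypothetical names, and averaging over a window is a simplifying assumption; many teams alert on percentiles such as p95 instead.

```python
from dataclasses import dataclass
from statistics import mean

# Thresholds copied from the table above; treat them as starting points.
TTFT_MAX_SECONDS = 1.5
TPS_MIN = 15.0
TOXICITY_MAX_RATE = 0.001  # 0.1%


@dataclass
class RequestTiming:
    """Timestamps (in seconds) recorded for one request. Hypothetical schema."""
    sent_at: float
    first_token_at: float
    last_token_at: float
    output_tokens: int


def check_alarms(timings, toxic_outputs: int, total_outputs: int) -> list:
    """Return the alarms raised over one monitoring window."""
    alarms = []
    if timings:
        mean_ttft = mean(t.first_token_at - t.sent_at for t in timings)
        if mean_ttft > TTFT_MAX_SECONDS:
            alarms.append(f"TTFT {mean_ttft:.2f}s exceeds {TTFT_MAX_SECONDS}s")
        # Aggregate throughput: tokens generated per second of decoding time.
        decode_time = sum(t.last_token_at - t.first_token_at for t in timings)
        tokens = sum(t.output_tokens for t in timings)
        if decode_time > 0 and tokens / decode_time < TPS_MIN:
            alarms.append(f"TPS {tokens / decode_time:.1f} below {TPS_MIN}")
    if total_outputs and toxic_outputs / total_outputs > TOXICITY_MAX_RATE:
        alarms.append("Toxicity rate above 0.1%")
    return alarms


slow = [RequestTiming(sent_at=0.0, first_token_at=2.0, last_token_at=4.0, output_tokens=20)]
healthy = [RequestTiming(sent_at=0.0, first_token_at=0.5, last_token_at=2.5, output_tokens=100)]
slow_alarms = check_alarms(slow, toxic_outputs=1, total_outputs=100)
healthy_alarms = check_alarms(healthy, toxic_outputs=0, total_outputs=100)
```

Here the slow window breaches all three thresholds, while the healthy window raises no alarm.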

Agent Security and Data Privacy Management

For proper monitoring of AI models, agent security and privacy are paramount. It is essential to implement guardrails to block prompt injection attacks and anonymize sensitive data (PII) before it reaches the LLM, preventing critical information leaks.

The integration of LLM-based autonomous agents into business workflows has introduced a new attack surface. According to the OWASP Top 10 for LLM Applications, the most critical vulnerabilities include Prompt Injection (where an attacker manipulates the model’s instructions) and Insecure Output Handling (where the model’s output is passed to backend systems and executed without validation).

To ensure agent security, companies must implement a layered architecture (Defense in Depth):

- Input Guardrails: Classification systems (often smaller, faster ML models) that analyze the user’s prompt before sending it to the main LLM, blocking jailbreak attempts.

- Dynamic Data Masking: Data Loss Prevention (DLP) tools that intercept and obscure personally identifiable information (PII), such as credit card numbers or tax IDs, which supports GDPR compliance.

- Output Guardrails: A final validation layer that checks whether the LLM’s response violates company policies or contains malicious code before showing it to the user.
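The three layers above can be sketched as a single wrapper around the model call. This is a minimal illustration under strong assumptions: the regular expressions are toy examples rather than a complete detection set, real input guardrails are usually trained classifiers as noted above, and `guarded_call` and its `llm` parameter are hypothetical names.

```python
import re
from typing import Callable

# Toy patterns for illustration only; real deployments need far broader coverage.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"reveal (your|the) system prompt", re.I),
]
PII_PATTERNS = {
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}
OUTPUT_BLOCKLIST = [re.compile(r"<script\b", re.I)]


def input_guardrail(prompt: str) -> bool:
    """Return True when the prompt shows no known injection pattern."""
    return not any(p.search(prompt) for p in INJECTION_PATTERNS)


def mask_pii(text: str) -> str:
    """Replace detected PII with a placeholder label before it reaches the LLM."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def output_guardrail(response: str) -> bool:
    """Return True when the response contains no blocked content."""
    return not any(p.search(response) for p in OUTPUT_BLOCKLIST)


def guarded_call(prompt: str, llm: Callable[[str], str]) -> str:
    """Apply input guardrail, PII masking, and output guardrail around one call."""
    if not input_guardrail(prompt):
        return "Request blocked by input guardrail."
    response = llm(mask_pii(prompt))
    if not output_guardrail(response):
        return "Response withheld by output guardrail."
    return response


# Usage with a stand-in model that simply echoes what it receives.
echo = lambda p: p
masked = guarded_call("My card is 4111 1111 1111 1111, email a@b.com", echo)
blocked = guarded_call("Please ignore all previous instructions.", echo)
```

Because each layer is a separate function, any one of them can be swapped for a stronger implementation (for example, a classifier model for the input check) without touching the others.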

Tools and Frameworks for LLM Observability

The model monitoring ecosystem leverages advanced frameworks such as LangSmith, Arize AI, and TruEra. These tools provide real-time observability dashboards, tracking chain execution and facilitating the debugging of AI-generated responses.

Observability goes beyond simple monitoring. While monitoring tells you when a system is broken, observability allows you to understand why it broke. For LLM applications, this means being able to inspect every single logical step of a “Chain” or an agent: the prompt sent, the documents retrieved, the tools called, and the output returned.

Modern technology stacks for LLMOps (Large Language Model Operations) include:

- LangSmith / Langfuse: Essential platforms for tracking API calls, analyzing token costs, and replaying user sessions for prompt debugging.

- Arize Phoenix: An excellent open-source tool for analyzing the performance of RAG applications, which allows you to visualize embeddings and identify query clusters where the model fails.

- Giskard: A framework specializing in testing and auditing model vulnerabilities, capable of automatically generating test suites to uncover biases and security issues before deployment in production.
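To make the idea of inspecting each step concrete, here is a minimal tracing sketch that uses only the Python standard library. It does not reproduce the API of LangSmith, Langfuse, or any other platform listed above; the `Trace` and `Span` classes are hypothetical, and the metadata values are placeholders for real retrieval and generation calls.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One recorded step of a chain or agent run."""
    name: str
    started_at: float
    duration_s: float = 0.0
    metadata: dict = field(default_factory=dict)
    error: Optional[str] = None


class Trace:
    """Collects the spans of a single request in execution order."""

    def __init__(self) -> None:
        self.spans = []

    @contextmanager
    def step(self, name: str, **metadata):
        span = Span(name=name, started_at=time.perf_counter(), metadata=dict(metadata))
        self.spans.append(span)
        try:
            yield span
        except Exception as exc:
            # Record the failure on the span, then let it propagate.
            span.error = repr(exc)
            raise
        finally:
            span.duration_s = time.perf_counter() - span.started_at


trace = Trace()
with trace.step("retrieve", query="refund policy") as span:
    span.metadata["documents"] = 3  # placeholder for a real retrieval call
with trace.step("generate", model="example-model") as span:
    span.metadata["output_tokens"] = 42  # placeholder for a real LLM call

for s in trace.spans:
    print(f"{s.name}: {s.duration_s:.6f}s {s.metadata} error={s.error}")
```

Recording the error on the span before re-raising keeps the failed step visible in the trace, which is what lets you answer why a request broke rather than only that it broke.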

Strategies for Mitigating Data Drift and Degradation

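Effective model monitoring must detect data drift, i.e., a change in the distribution of input data relative to what the model was built and evaluated on. Continuously updating the retrieval index (the context embeddings) and running human feedback loops, whose data can later feed alignment methods such as RLHF, are essential to maintain high performance over time.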
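One common way to quantify a change in input distribution is the Population Stability Index (PSI). The sketch below applies it to a single numeric feature, prompt length, which is an assumption made for simplicity; drift in text is more often measured on embeddings. The function name `psi` and the sample data are illustrative, and the baseline is assumed to be non-empty.

```python
import math


def psi(baseline, current, bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small epsilon avoids log(0) for empty bins.
        return [(c + 1e-6) / (len(values) + 1e-6 * bins) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical prompt lengths (in tokens) from two time windows.
last_month = list(range(100))
this_week = [length + 50 for length in last_month]

stable_score = psi(last_month, last_month)
shifted_score = psi(last_month, this_week)
```

A widely used rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, though the right cut-off depends on the feature and the application.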

The degradation of generative models is a subtle phenomenon. It doesn’t manifest as a server crash, but as a slow and inexorable decline in the quality of responses. This occurs primarily because of concept drift: the world changes and language evolves, so the correct answer to a given question shifts, but the model’s weights remain frozen at the time of its last training.

To mitigate this risk without having to retrain the entire LLM (a prohibitively expensive operation), the most effective strategies include:

- Continuous RAG Updating: Keep the vector database constantly updated with the latest company policies and market information. The model reasons on fresh data without the need for fine-tuning.

- Shadow Deployment: Run a new version of the prompt or model in parallel with the one in production, comparing the outputs in real time without impacting the end user.

- Human-in-the-Loop (HITL): Implement implicit (e.g., the user copying the response) and explicit (thumbs up/down) feedback mechanisms to collect valuable data for future alignment cycles.
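The shadow deployment strategy above can be sketched as a wrapper that always returns the production output and only logs the candidate’s. The names `shadow_call`, `production`, and `candidate` are hypothetical, and the exact-match comparison is a placeholder; a real setup would typically run the candidate asynchronously so it adds no latency and would compare outputs semantically.

```python
from typing import Callable


def shadow_call(
    prompt: str,
    production: Callable[[str], str],
    candidate: Callable[[str], str],
    log: list,
) -> str:
    """Serve the production answer; record the candidate's answer for comparison."""
    prod_out = production(prompt)
    record = {"prompt": prompt, "production": prod_out}
    try:
        cand_out = candidate(prompt)
        record["candidate"] = cand_out
        record["match"] = prod_out.strip() == cand_out.strip()
    except Exception as exc:
        # A failing candidate must never affect the end user.
        record["candidate_error"] = repr(exc)
    log.append(record)
    return prod_out


def broken_candidate(prompt: str) -> str:
    raise RuntimeError("candidate unavailable")


comparison_log = []
served = shadow_call("What is the baggage limit?", lambda p: "23 kg", lambda p: "23 kg per bag", comparison_log)
served_again = shadow_call("What is the baggage limit?", lambda p: "23 kg", broken_candidate, comparison_log)
```

The user only ever sees the production output, while the log accumulates the side-by-side data needed to decide whether the candidate is ready for promotion.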

Conclusions

The implementation of generative artificial intelligence in an enterprise setting does not end with its release into production; it begins at that very moment. As we have analyzed, the absence of rigorous model monitoring exposes organizations to unacceptable risks, ranging from brand-damaging hallucinations to serious violations of privacy and agent security.

Adopting a proactive approach, based on semantic observability, the implementation of robust guardrails, and the continuous analysis of RAG metrics, is the only sustainable path. Only by treating AI models as dynamic entities that require constant auditing can IT teams ensure that technological innovation translates into a real and secure competitive advantage.

Frequently Asked Questions

Monitoring a generative model means implementing an active and semantic real-time audit process to ensure maximum reliability. It’s not just about checking system logs, but constantly analyzing specific metrics such as the hallucination rate, semantic consistency, and token latency.

Models undergo quality degradation due to the natural change in data distribution over time, a phenomenon known as data drift. As the world and language evolve rapidly, it is essential to constantly update contextual databases and integrate human feedback to keep responses accurate and relevant.

A RAG system is evaluated by measuring three parameters that together indicate whether its output can be trusted. It is necessary to verify the relevance of the documents retrieved from the database, ensure that the response is based exclusively on the facts provided to avoid dangerous fabrications, and check that the final text truly solves the problem initially posed.

The most critical vulnerabilities of LLM-based agents include malicious manipulation of the model’s instructions (prompt injection) and insecure handling of generated output. To protect corporate systems, it is necessary to implement a layered defense architecture that includes preventive input filters, rigorous output validation, and dynamic masking of personal information.

Development teams rely on advanced observability frameworks that allow them to inspect every single logical step of the system. These specialized platforms offer real-time dashboards to track calls, analyze operational costs, and uncover any security issues before public release.


