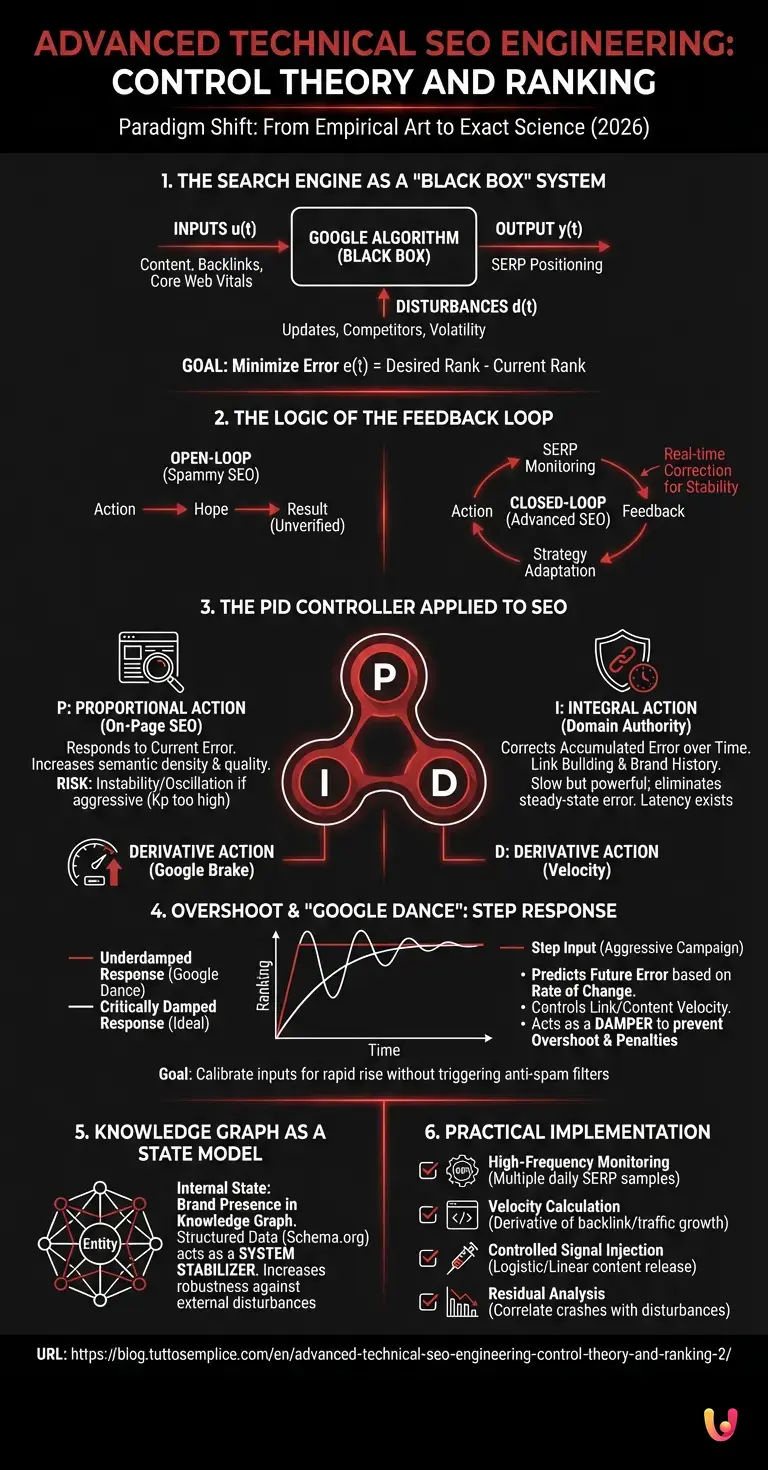

It is 2026, and the empirical approach to organic ranking is no longer sufficient. For CTOs and specialists managing high-complexity portals, advanced technical SEO must evolve from a divinatory art to an exact science. In this context, the main entity we interface with, Google, should be seen not as an arbitrary judge but as a dynamic system with both deterministic and stochastic components. This article proposes a radical paradigm shift: the application of Automatic Control Theory and Systems Engineering to decode, predict, and stabilize SERP fluctuations.

1. The Search Engine as a “Black Box” System

In electronic engineering, a system whose internal structure is unknown but whose inputs and outputs are observable is defined as a Black Box. Google’s ranking algorithm fits this definition perfectly.

We can model the SEO process through a transfer function H(s), where:

- Input u(t): The set of our actions (content publication, backlink acquisition, Core Web Vitals optimization).

- Output y(t): The positioning in the SERP for a given query.

- Disturbance d(t): External factors (algorithm updates, competitor actions, query volatility).

The goal of advanced technical SEO is not simply to maximize the input, but to design a control system that minimizes the error e(t), i.e., the difference between the desired position (Rank 1) and the current position, while keeping the system stable over time.
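As a rough formalization of the definitions above, the relationships can be written out explicitly. This is only a sketch: it assumes the system behaves approximately linearly around its operating point and that the disturbance adds at the output, neither of which is established for a real search engine. The symbol r(t), the reference (desired position), is notation introduced here for illustration.

$$
Y(s) = H(s)\,U(s) + D(s), \qquad e(t) = r(t) - y(t)
$$

Under these assumptions, Y(s), U(s), and D(s) are the Laplace transforms of y(t), u(t), and d(t), and the control objective is to drive e(t) toward zero without making the loop unstable.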

2. The Logic of the Feedback Loop

An open-loop system acts without verifying the result. This is the classic error of “spammy” SEO: launching thousands of links and hoping. A closed-loop system, on the other hand, uses feedback to correct the action in real time.

To stabilize ranking, we must implement a feedback loop that constantly monitors SERPs and adapts the input strategy. This is where the concept of the PID controller comes into play.

3. The PID Controller Applied to SEO

The PID (Proportional-Integral-Derivative) controller is the most common feedback mechanism in industry for controlling variables such as temperature or speed. We can map the three PID components onto ranking dynamics:

A. Proportional Action (P): On-Page SEO

Proportional action responds to the current error. In SEO terms, it corresponds to On-Page optimization and content relevance.

- Operation: If the ranking is low, I increase semantic density and technical quality.

- Risk (Kp gain too high): If the proportional action is pushed too far (e.g., keyword stuffing or aggressive over-optimization), the system becomes unstable. Google detects the anomaly, and the ranking begins to oscillate violently.

B. Integral Action (I): Domain Authority

Integral action corrects the accumulation of error over time. In SEO, this is represented by Link Building and historical Brand Authority.

- Operation: Even if On-Page is perfect, without “history” (the integral over time of trust signals), the ranking does not reach the target. Integral action is slow but powerful: it eliminates steady-state error.

- Dynamics: Backlinks do not act instantly; they have a latency (system inertia). A good SEO engineer knows to wait for the integral action to reach steady state before modifying inputs again.

C. Derivative Action (D): Velocity

This is the most critical component for modern advanced technical SEO. Derivative action predicts future error based on the rate of change.

- Application: Google analyzes the first derivative of your signals (Link Velocity, Content Velocity).

- The role of the damper: If you acquire 1000 links in a day, the derivative spikes. A well-designed algorithm (Google Penguin or modern SpamBrain) will interpret this spike as an attempt at manipulation.

- Strategy: Use derivative logic to “brake” link acquisition when growth is too rapid, thus avoiding Overshoot (surpassing the target followed by a crash or penalty).
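The three actions described above can be sketched as a textbook discrete PID controller. This is a minimal illustration, not a tuned SEO tool: the class name `PIDController`, the gain values, and the idea of feeding it a "visibility score" error are all hypothetical assumptions introduced for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PIDController:
    """Textbook discrete PID controller (illustrative sketch only)."""
    kp: float  # proportional gain: reaction to the current error
    ki: float  # integral gain: reaction to the accumulated error
    kd: float  # derivative gain: reaction to the rate of change of the error
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, error: float, dt: float) -> float:
        """Return the control effort for the latest error sample."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        # Integral term: accumulate the error over time.
        self.integral += error * dt
        # Derivative term: finite difference; zero on the first sample.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


if __name__ == "__main__":
    # Hypothetical gains and error samples (target minus measured score).
    pid = PIDController(kp=0.5, ki=0.1, kd=0.2)
    for error in (7.0, 5.0, 4.0):
        effort = pid.update(error, dt=1.0)
        print(f"error={error:.1f} -> control effort={effort:.2f}")
```

In this sketch the output is an abstract "effort" figure; how it maps to concrete actions (pages revised, links pursued) is left open, and a falling error makes the derivative term negative, which is the braking behavior described in the strategy bullet.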

4. Overshoot and “Google Dance”: Step Response Analysis

When we launch a new site or an aggressive campaign, we are applying a step input to the system. Google’s response is never immediate or linear; it often shows a damped oscillatory behavior, empirically known as the “Google Dance”.

From the perspective of Systems Theory, this is a transient. If the system is underdamped (too much aggression, too many links in a short time), the ranking will rise rapidly (overshoot) only to crash below the equilibrium position (undershoot) and oscillate. The goal is to calibrate inputs to achieve a critically damped response: a rapid rise to the first page without oscillations that trigger anti-spam filters.
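To make the difference between underdamped and critically damped responses concrete, the sketch below simulates the unit step response of a standard second-order system and compares the measured peak with the textbook overshoot formula. The second-order model, the damping ratio `zeta`, and the natural frequency `wn` are modeling assumptions chosen for illustration; nothing here is derived from real ranking data.

```python
import math
from typing import List


def theoretical_overshoot(zeta: float) -> float:
    """Fractional overshoot of a standard second-order step response."""
    if zeta <= 0.0:
        raise ValueError("zeta must be positive")
    if zeta >= 1.0:
        return 0.0  # critically damped or overdamped: no overshoot
    return math.exp(-math.pi * zeta / math.sqrt(1.0 - zeta ** 2))


def simulate_step(zeta: float, wn: float = 1.0,
                  dt: float = 0.001, t_end: float = 20.0) -> List[float]:
    """Integrate y'' + 2*zeta*wn*y' + wn^2*y = wn^2 for a unit step input."""
    y, v = 0.0, 0.0
    samples = []
    for _ in range(int(t_end / dt)):
        a = wn * wn * (1.0 - y) - 2.0 * zeta * wn * v
        v += a * dt  # semi-implicit Euler: update velocity first
        y += v * dt  # then position, using the new velocity
        samples.append(y)
    return samples


if __name__ == "__main__":
    for zeta in (0.2, 0.7, 1.0):
        peak = max(simulate_step(zeta))
        print(f"zeta={zeta}: simulated peak={peak:.3f}, "
              f"theoretical overshoot={theoretical_overshoot(zeta):.3f}")
```

A low damping ratio produces a peak well above the final value, which corresponds to the rise-then-crash pattern described above, while a damping ratio of 1 approaches the target without exceeding it.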

5. Knowledge Graph as a State Model

Beyond the transfer function, complex systems are analyzed via state space. For a brand, the “internal state” is represented by its presence in the Knowledge Graph.

Integrating structured data (Schema.org) and consolidating the entity in the Knowledge Graph acts as a system stabilizer. A well-defined entity in Google’s graph reduces output variance (ranking) in the face of external disturbances (algorithm updates). Mathematically, a solid Knowledge Graph increases system robustness, making positioning less sensitive to SERP background noise.
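As one possible illustration of the structured-data step, the sketch below builds a Schema.org `Organization` block as JSON-LD. The function name `build_organization_jsonld` and all brand values are hypothetical placeholders; `@context`, `@type`, `name`, `url`, and `sameAs` are standard Schema.org vocabulary, but whether this markup actually consolidates an entity in the Knowledge Graph is not something the code can guarantee.

```python
import json
from typing import List


def build_organization_jsonld(name: str, url: str, same_as: List[str]) -> str:
    """Return a JSON-LD string describing an organization entity."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists other profiles that refer to the same entity.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values; replace with the brand's real profiles.
    snippet = build_organization_jsonld(
        name="Example Brand",
        url="https://www.example.com",
        same_as=["https://www.linkedin.com/company/example-brand"],
    )
    print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```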

6. Practical Implementation: From Model to Strategy

How do we translate this theory into operations for an advanced technical SEO strategy?

- High-Frequency Monitoring: Checking ranking once a day is not enough. Use APIs to sample SERPs multiple times a day to reconstruct the system response curve.

- Velocity Calculation (Derivative): Create scripts (Python/R) that calculate the derivative of backlink and traffic growth. If the slope exceeds a safety threshold (determined by analyzing the top 3 competitors), stop acquisition (negative feedback).

- Controlled Signal Injection: Do not publish 50 articles in a day. Release content following a logistic or constant linear curve to keep derivative action under control.

- Residual Analysis: If ranking crashes for no apparent reason, analyze the correlation with external disturbances (updates). If the crash is systemic, your model (site) has incorrect internal parameters (e.g., technical debt, poor UX).
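The velocity check and the controlled release curve from the list above could be sketched as follows. All names (`velocity`, `should_pause`, `logistic_schedule`), the threshold value, and the sample counts are hypothetical; in practice the threshold would come from your own competitor analysis, and the counts from whatever backlink data source you use.

```python
import math
from typing import List, Sequence


def velocity(counts: Sequence[float], dt_days: float = 1.0) -> List[float]:
    """First difference of a cumulative series (e.g. total backlinks per day)."""
    return [(b - a) / dt_days for a, b in zip(counts, counts[1:])]


def should_pause(counts: Sequence[float], threshold: float) -> bool:
    """Negative feedback: pause acquisition if the latest slope is too steep."""
    v = velocity(counts)
    return bool(v) and v[-1] > threshold


def logistic_schedule(total: int, days: int, steepness: float = 0.8) -> List[int]:
    """Split `total` items over `days` so the cumulative count follows a logistic curve."""
    if total < 0 or days <= 0:
        raise ValueError("total must be >= 0 and days must be > 0")

    def logistic(t: float) -> float:
        return 1.0 / (1.0 + math.exp(-steepness * (t - days / 2.0)))

    lo, hi = logistic(0), logistic(days)
    # Normalize so the curve starts at 0 and ends exactly at `total`.
    cumulative = [round(total * (logistic(t) - lo) / (hi - lo))
                  for t in range(days + 1)]
    return [b - a for a, b in zip(cumulative, cumulative[1:])]


if __name__ == "__main__":
    backlinks = [100, 110, 125, 1125]  # hypothetical daily totals
    print("velocity:", velocity(backlinks))
    print("pause acquisition:", should_pause(backlinks, threshold=50.0))
    print("release plan:", logistic_schedule(total=50, days=10))
```

The schedule releases few items at the start and end and more in the middle, so the daily rate changes gradually instead of jumping; a constant linear plan would simply divide the total evenly across the days.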

In Brief (TL;DR)

Managing complex portals requires abandoning divinatory art to embrace Systems Engineering and Automatic Control Theory.

Google must be interpreted as a dynamic black box system where SEO actions act as inputs to stabilize SERP fluctuations.

Applying the PID controller helps calibrate content, authority, and velocity to reduce the risk of penalties and support steady growth.

Conclusions

Treating SEO as a humanities discipline is obsolete. By applying Control Theory principles, we transform optimization into a measurable and predictable engineering process. By modeling Google as a dynamic system and using logic controllers to manage our inputs, we can minimize the risk of penalties and maximize long-term positioning stability, transforming volatility into a manageable parameter.

Frequently Asked Questions

Treating the search engine as a dynamic system allows moving from an empirical approach to an exact science. By modeling the process with transfer functions and feedback loops, it is possible to predict SERP fluctuations and minimize positioning error, ensuring stability that traditional strategies cannot offer.

The PID method balances three forces: Proportional action manages On-Page SEO based on current error; Integral action handles historical domain authority; Derivative action controls growth speed. This mix prevents violent oscillations and penalties due to overly aggressive optimization.

This oscillatory behavior is a transient system response to a sudden input, such as a massive link launch. To avoid it, inputs must be calibrated to obtain a critically damped response, rising towards the first page without exceeding the target and without triggering anti-spam filters due to excessive speed.

The Knowledge Graph acts as a state model defining brand identity. Consolidating one's entity in Google's graph increases site robustness against external disturbances, such as algorithmic updates, reducing ranking variance and making online presence more solid in the long term.

Monitoring the first derivative of backlink growth allows detection of anomalous peaks that Google would interpret as manipulation. By keeping acquisition speed below a safety threshold calculated on competitors, overshoot is avoided and natural growth is simulated, protecting the site from sudden crashes.
