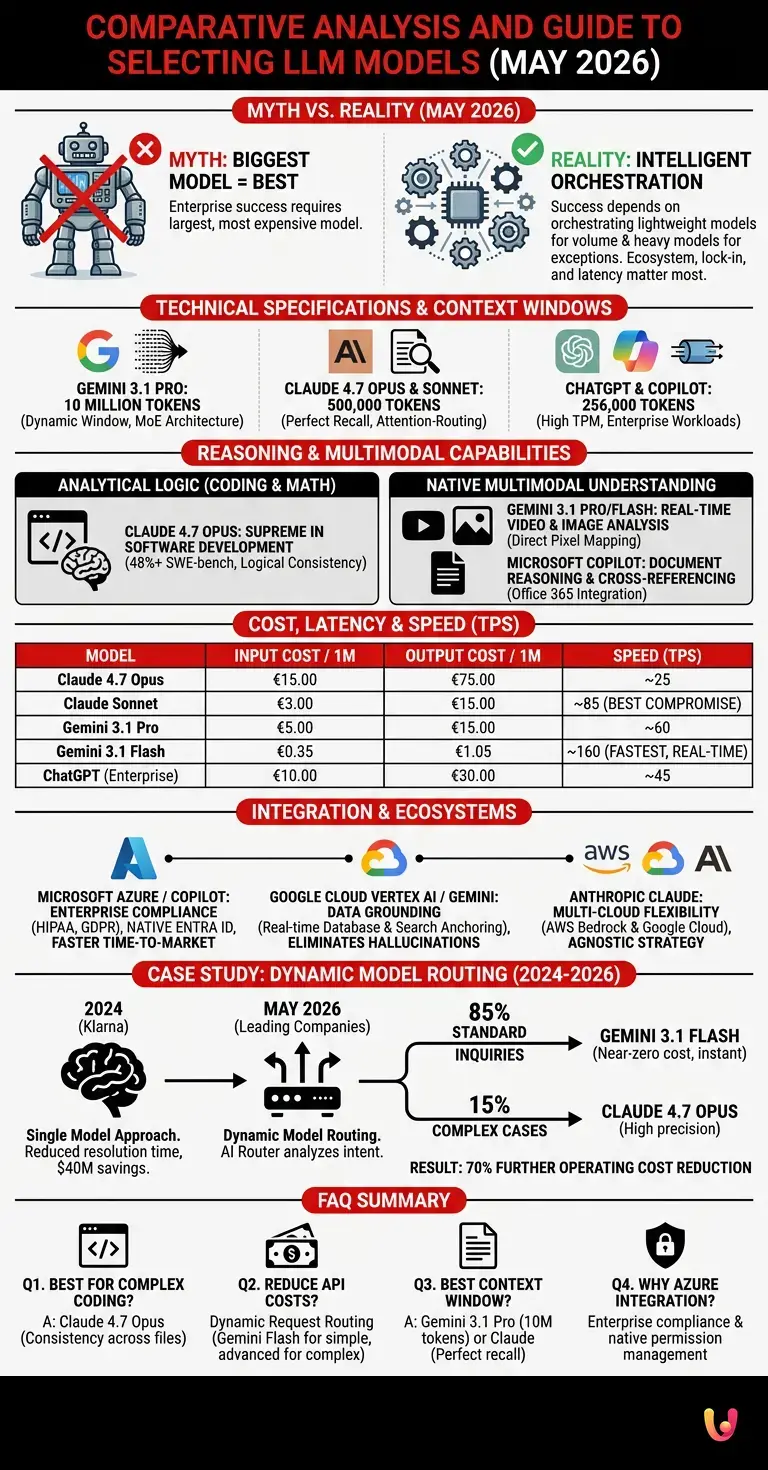

The most deeply rooted myth in today’s artificial intelligence landscape is that enterprise-grade performance requires the largest and most expensive model available. As of May 2026, the opposite is true: success in production depends less on reasoning-benchmark scores than on intelligently orchestrating lightweight models for high-volume tasks and heavy models for exceptions. This comparison of LLMs shows that ecosystem, vendor lock-in, and latency now matter far more than raw parameter counts, which forces CTOs to rethink how they design AI architectures.

Technical Specifications and Basic Architectures

In this comparison of LLMs, technical specifications reveal crucial divergences. We analyze context window sizes, rate limits (RPM/TPM), and the architectural features that define the capabilities of Claude, Gemini, ChatGPT, and Copilot in high-intensity production scenarios.

As of May 2026, the race to expand the context window has reached a functional plateau, shifting focus toward the efficiency of information retrieval (native Retrieval-Augmented Generation). According to official Google Cloud documentation, Gemini 3.1 Pro maintains the absolute lead with a dynamic context window of up to 10 million tokens, supported by a highly parallelized Mixture-of-Experts (MoE) architecture. This enables the ingestion of entire code repositories or video archives without fragmentation.

On the other hand, Claude 4.7 Opus and the latest iteration of Claude Sonnet feature a 500,000-token window. However, Anthropic has implemented an attention-routing mechanism that ensures perfect recall (100% in the Needle-in-a-Haystack test) even at the extreme limits of the context, thereby reducing structural hallucinations. ChatGPT (in its enterprise version based on the GPT-4.5/5 architecture) and Microsoft Copilot offer standardized 256,000-token windows, prioritizing extremely high rate limits (TPM – Tokens Per Minute) to accommodate simultaneous enterprise workloads.
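As a rough illustration of how these window sizes translate into a selection step, the sketch below filters models by whether a prompt fits. The figures are the ones quoted in this article; the function name `models_that_fit`, the default output reservation, and the assumption that output tokens count against the same window are illustrative choices, not vendor-documented behavior.

```python
# Context window sizes in tokens, as quoted in this article (not independently verified).
CONTEXT_WINDOWS = {
    "Gemini 3.1 Pro": 10_000_000,
    "Claude 4.7 Opus": 500_000,
    "Claude Sonnet": 500_000,
    "ChatGPT (Enterprise)": 256_000,
    "Microsoft Copilot": 256_000,
}


def models_that_fit(prompt_tokens: int, reserved_output_tokens: int = 4_096) -> list[str]:
    """Return the models whose window holds the prompt plus a reserved output budget.

    Assumption: the output budget is counted against the same window as the prompt.
    """
    needed = prompt_tokens + reserved_output_tokens
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]


# A 300k-token repository dump rules out the 256k-token models.
print(models_that_fit(300_000))
# A 2M-token archive only fits the largest window.
print(models_that_fit(2_000_000))
```

In practice the token count would come from the vendor's own tokenizer, since the same text tokenizes to different lengths across model families.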

Multimodal Capabilities and Complex Reasoning

Evaluating complex reasoning is fundamental in an up-to-date comparison of LLMs. We examine performance across the latest benchmarks for advanced coding, zero-shot mathematical logic, and the native analysis of images and complex documents.

Reasoning capabilities have diverged into two distinct categories: analytical logic (coding and mathematics) and native multimodal understanding. In the domain of software development, Claude 4.7 Opus reigns supreme. In the 2026 SWE-bench benchmarks, Opus autonomously resolves over 48% of complex GitHub issues, outperforming ChatGPT thanks to its superior ability to maintain logical consistency across multiple files.

Regarding multimodality, Gemini 3.1 Pro and Gemini 3.1 Flash operate on an architecture that is natively multimodal from the pre-training stage. This means they do not translate images or audio into text prior to processing; instead, they map pixels and frequencies directly into the latent space. The result is overwhelming superiority in real-time video analysis and in interpreting floor plans or complex industrial diagrams. Microsoft Copilot, integrated with the Office 365 ecosystem, excels in document reasoning, cross-referencing data across Excel, Word, and Teams with semantic precision unmatched for administrative tasks.

Latency, Inference Speed, and Operational Costs

Budget optimization requires a careful comparison of LLM models based on costs per million tokens and latency. Let's explore which models offer the best ratio of Tokens Per Second (TPS) to infrastructure spending for businesses.

The true battleground of 2026 is economic efficiency. "Frontier" models (Opus, top-tier GPT) are unsustainable for high-volume tasks such as log classification or first-level customer care. This is where optimized models come into play.

| LLM | Input cost (€ per 1M tokens) | Output cost (€ per 1M tokens) | Speed (TPS) |
|---|---|---|---|
| Claude 4.7 Opus | 15.00 | 75.00 | ~25 |
| Claude Sonnet | 3.00 | 15.00 | ~85 |
| Gemini 3.1 Pro | 5.00 | 15.00 | ~60 |
| Gemini 3.1 Flash | 0.35 | 1.05 | ~160 |
| ChatGPT (Enterprise) | 10.00 | 30.00 | ~45 |

According to Google's official documentation, Gemini 3.1 Flash offers an inference speed of approximately 160 Tokens Per Second (TPS), making it ideal for real-time applications and voice agents. Claude Sonnet positions itself as the best compromise on the market: it offers reasoning capabilities close to those of the top-tier models of 2025, but at one-fifth the cost of Opus and with latency that is imperceptible to the end user.
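To make the price gap concrete, here is a minimal sketch that turns the per-million prices from the table above into a per-request cost. The request size (2,000 input tokens, 500 output tokens) and the function name `request_cost_eur` are assumptions chosen for illustration.

```python
# List prices in EUR per 1 million tokens (input, output), copied from the table above.
PRICES_EUR_PER_1M = {
    "Claude 4.7 Opus": (15.00, 75.00),
    "Claude Sonnet": (3.00, 15.00),
    "Gemini 3.1 Pro": (5.00, 15.00),
    "Gemini 3.1 Flash": (0.35, 1.05),
    "ChatGPT (Enterprise)": (10.00, 30.00),
}


def request_cost_eur(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request in EUR, given separate input and output token counts."""
    input_price, output_price = PRICES_EUR_PER_1M[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical request: 2,000 input tokens and 500 output tokens.
for model in PRICES_EUR_PER_1M:
    cost = request_cost_eur(model, input_tokens=2_000, output_tokens=500)
    print(f"{model:<22} €{cost:.6f} per request")
```

Because both Sonnet prices are one-fifth of the corresponding Opus prices, the ratio between the two holds for any mix of input and output tokens.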

Integration, Ecosystem, and Cloud Platforms

No comparison of LLM models is complete without analyzing vendor lock-in and infrastructure. We compare the advantages of Anthropic and OpenAI APIs with integrated enterprise platforms such as Google Cloud Vertex AI and Microsoft Azure.

The choice of model is intrinsically linked to the company's existing cloud infrastructure. Microsoft Copilot and OpenAI models via Azure offer the insurmountable advantage of enterprise-grade compliance (HIPAA, strict GDPR) and native integration with Entra ID (formerly Azure AD) for permission management at the individual document level. If a company already utilizes the Microsoft ecosystem, adopting Azure OpenAI reduces time-to-market by 60%.

Gemini 3.1 on Google Cloud Vertex AI excels at Data Grounding. It enables the model's responses to be anchored directly to enterprise databases (BigQuery, AlloyDB) and Google Search in real time, effectively eliminating hallucinations regarding proprietary data. Anthropic, despite not having a proprietary cloud, has adopted an agnostic strategy: Claude's APIs are available on AWS Bedrock and Google Cloud, offering maximum flexibility for multi-cloud architectures.

Case Study: The Evolution of Customer Service (2024–2026)

In 2024, Klarna made headlines by handling 2.3 million conversations (two-thirds of the total) using an OpenAI-powered AI assistant, reducing resolution times from 11 to 2 minutes and estimating savings of $40 million. By May 2026, leading companies had evolved this approach by implementing Dynamic Model Routing. Instead of using a single, heavy model, an AI router analyzes user intent in milliseconds: 85% of standard inquiries are handled by Gemini 3.1 Flash (near-zero cost, instant latency), while only 15% of complex cases (e.g., legal disputes or anomalous refunds) are escalated to Claude 4.7 Opus. This hybrid approach has further slashed operating costs by 70% compared to 2024, while maintaining customer satisfaction levels.
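The routing step described above can be sketched in a few lines. This is a deliberately simplified stand-in: a real router would more likely use a trained intent classifier or a small model, whereas the keyword list, the model identifiers, and the names `route` and `RoutingDecision` below are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; real API names may differ.
LIGHT_MODEL = "gemini-3.1-flash"
HEAVY_MODEL = "claude-4.7-opus"

# Illustrative escalation triggers standing in for a proper intent classifier.
ESCALATION_KEYWORDS = ("dispute", "legal", "lawyer", "chargeback", "fraud")


@dataclass(frozen=True)
class RoutingDecision:
    model: str
    reason: str


def route(message: str) -> RoutingDecision:
    """Send standard inquiries to the light model and escalate flagged ones."""
    text = message.lower()
    for keyword in ESCALATION_KEYWORDS:
        if keyword in text:
            return RoutingDecision(HEAVY_MODEL, f"matched escalation keyword '{keyword}'")
    return RoutingDecision(LIGHT_MODEL, "standard inquiry")


for message in ("Where is my parcel?", "I want to open a legal dispute about this refund."):
    decision = route(message)
    print(f"{decision.model:<18} <- {message!r} ({decision.reason})")
```

Keeping the decision in a small, pure function makes it easy to log every routing choice and to swap the keyword check for a classifier later without touching the calling code.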

Conclusions

To conclude this comparison of LLMs, we present the definitive decision matrix. Choosing the right model depends on balancing call volume, the need for zero-shot reasoning, and enterprise integration requirements.

There is no absolute winner, but there are optimal choices depending on the usage scenario:

- High-volume, low-latency tasks (Chatbots, Triage, Basic Data Extraction): The undisputed winner is Gemini 3.1 Flash. Its negligible cost and extreme speed make it the only logical candidate for large-scale operations.

- Extreme Zero-Shot Reasoning and Complex Coding: Claude 4.7 Opus remains the gold standard. It is the necessary investment when logical accuracy is critical and human or machine error would entail high costs.

- Quality-to-Price Balance (The "Daily Driver"): Claude Sonnet strikes the perfect balance for 80% of enterprise applications that require high intelligence without draining the API budget.

- Enterprise Integration and Document Security: Microsoft Copilot and the ChatGPT ecosystem on Azure stand out for their ease of deployment in highly regulated corporate environments.

The winning strategy for 2026 is not to choose a single model, but to build a routing architecture that dynamically directs each prompt to the most efficient model for that specific task.
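A back-of-the-envelope calculation shows why a routing architecture pays off. The sketch below blends the input prices quoted in this article for Gemini 3.1 Flash and Claude 4.7 Opus using the 85/15 traffic split from the case study. It assumes every request consumes the same number of tokens regardless of which model handles it, and it compares against sending all traffic to Opus, so the result is not the same measure as the 70% reduction versus 2024 cited in the case study.

```python
def blended_price(light_price: float, heavy_price: float, light_share: float) -> float:
    """Traffic-weighted price per 1M tokens for a two-tier routing setup."""
    return light_share * light_price + (1 - light_share) * heavy_price


# Input prices in EUR per 1M tokens, as quoted in this article.
FLASH_INPUT = 0.35
OPUS_INPUT = 15.00

# 85% of requests go to the light model, 15% are escalated (case-study split).
blended = blended_price(FLASH_INPUT, OPUS_INPUT, light_share=0.85)
saving_vs_all_opus = 1 - blended / OPUS_INPUT

print(f"Blended input price: €{blended:.4f} per 1M tokens")
print(f"Saving vs. routing everything to Opus: {saving_vs_all_opus:.1%}")
```

Under these assumptions the heavy tier still accounts for most of the blended price, which is why the escalation rate is usually the first number worth tuning.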

Frequently Asked Questions

For software development and advanced logical reasoning, Claude 4.7 Opus sets the benchmark. Thanks to its exceptional ability to maintain consistency across multiple files, it autonomously solves complex problems, outperforming alternatives on the market. It is the ideal tool when code precision is critical to the project.

The most effective strategy involves creating a dynamic request routing system. Instead of using a single, expensive model for every operation, an intelligent router analyzes the intent of the prompt and assigns simple tasks to cost-effective solutions like Gemini 3.1 Flash. Complex operations are routed to advanced models, drastically reducing corporate expenditure.

Gemini 3.1 Pro dominates this sector thanks to a dynamic window that reaches ten million tokens, allowing for the analysis of entire archives without fragmentation. However, Claude 4.7 Opus and Sonnet ensure perfect information retrieval even at the limits of their context, minimizing structural hallucinations when processing extensive texts.

Choosing the Microsoft system offers significant advantages in terms of regulatory compliance and corporate data security. This solution ensures native integration for managing permissions on individual documents. It is, therefore, the optimal choice for highly regulated corporations requiring rigorous control over access and sensitive information.

The Pro and Flash versions of Gemini operate on a natively multimodal architecture from the initial training phase. This means they do not need to translate visual or audio content into text before processing it, but instead map the data directly. The result is extremely high precision in understanding real-time video and complex industrial diagrams.


