You pull your car into the drive-thru lane, the tires rolling over the familiar asphalt. The glowing digital menu board illuminates the dusk, displaying an array of burgers, fries, and milkshakes. It seems like a perfectly ordinary, everyday transaction. However, what you are looking at is not a static sign, but a highly sophisticated, dynamically shifting interface. Behind this seemingly mundane interaction lies a massive, invisible deployment of Artificial Intelligence. The menu you see is not the same menu the car ahead of you saw, nor is it the one the car behind you will see.

Welcome to the fast food anomaly. For decades, the drive-thru experience was uniform: a printed board, a crackling speaker, and a human taking your order. Today, the drive-thru is one of the most advanced technological testing grounds on the planet. But the most fascinating aspect isn’t just that computers are taking orders. It is the bizarre, highly psychological reason why the menu physically changes its layout, hides certain items, and highlights others before you even utter a single word.

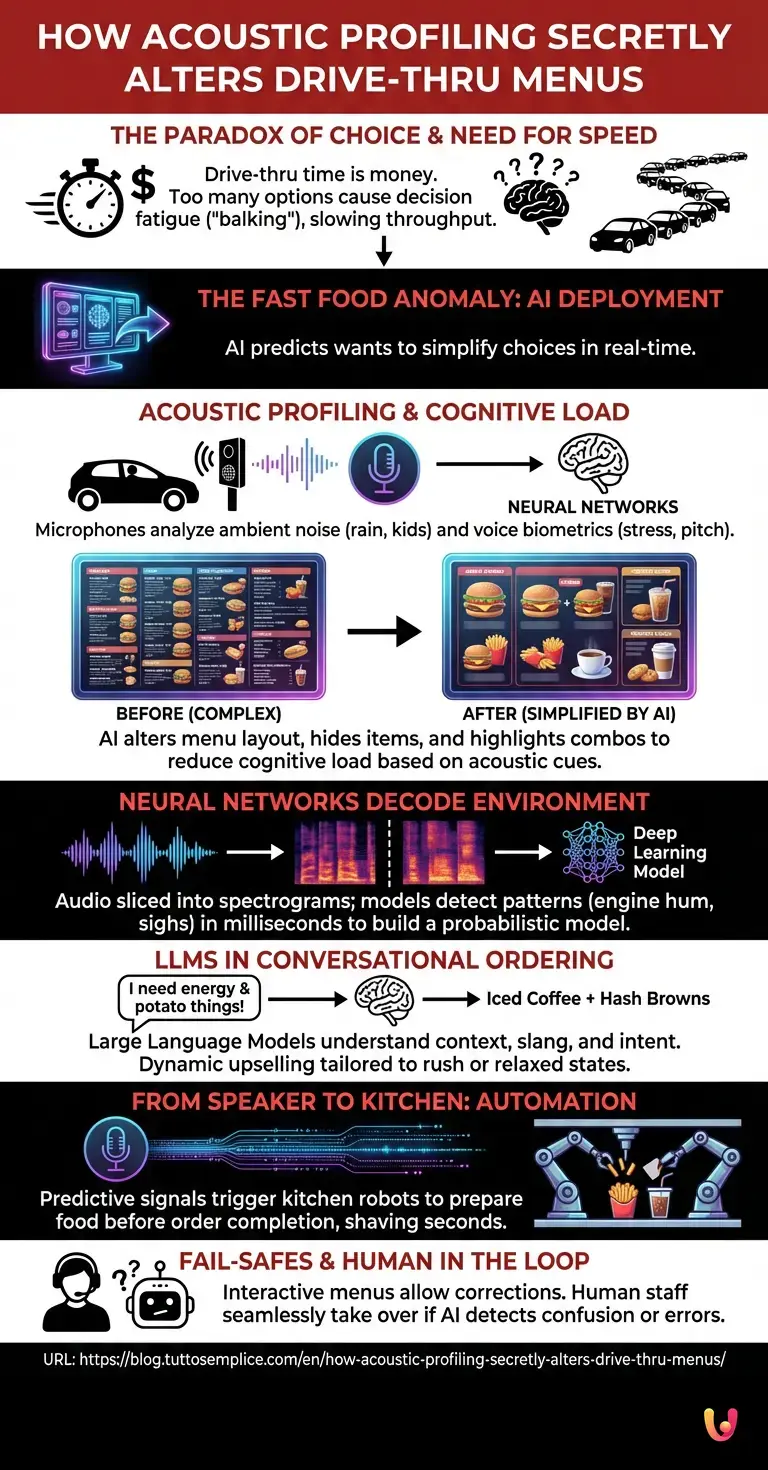

The Paradox of Choice and the Need for Speed

To understand the secret behind the shifting digital menu, we must first understand the fundamental economics of the fast-food industry. In the drive-thru lane, time is literally money. Every additional second a customer spends staring at the menu costs the restaurant revenue. Industry studies have long shown that when drive-thru times exceed a certain threshold, a phenomenon known as “balking” occurs: potential customers see a long line, assume the wait will be unbearable, and drive away.

Historically, restaurants tried to solve this by offering more options, hoping to satisfy every possible craving. However, this triggered the psychological “paradox of choice.” When presented with too many options, the human brain experiences decision fatigue. Customers freeze, uttering the dreaded, “Uhhhh, let me get a…” while scanning dozens of combo meals. This hesitation is the enemy of throughput.

This is where the anomaly begins. Engineers realized that to speed up the line, they couldn’t just train employees to work faster; they had to fundamentally alter the customer’s decision-making process. They needed a way to predict what you wanted and simplify your choices in real-time. To do this, they turned to advanced machine learning.

The Bizarre Trigger: Acoustic Profiling and Cognitive Load

The secret behind your changing menu is a concept known as predictive cognitive load management, powered by acoustic profiling. When you pull up to the speaker box, the microphone is no longer just a conduit to a human cashier’s headset. It is an advanced sensor feeding live audio streams into sophisticated neural networks.

These systems are not just listening for the words “cheeseburger” or “large fries.” They are analyzing the ambient noise of your environment and the biometric markers of your voice. Are your windshield wipers slapping against the glass? The system detects the rain and instantly alters the digital board to prominently feature hot coffee and warm comfort foods. Is there the distinct sound of children arguing in the backseat? The AI immediately shrinks the complex à la carte options and enlarges the family bundles and kids’ meals, anticipating the needs of a stressed parent.
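The behavior in this paragraph amounts to a mapping from detected sounds to layout changes. The sketch below is illustrative only: the cue labels (`rain`, `children`), the category names, and the 2x boost and 0.5x shrink factors are hypothetical, and it assumes an upstream sound classifier has already turned the audio into a set of labels.

```python
# Hypothetical rules: which menu categories each detected sound promotes or demotes.
CUE_RULES = {
    "rain": {"promote": ["hot_coffee", "comfort_food"], "demote": []},
    "children": {"promote": ["family_bundles", "kids_meals"], "demote": ["a_la_carte"]},
}


def apply_cues(base_weights: dict[str, float], detected: set[str],
               boost: float = 2.0, shrink: float = 0.5) -> dict[str, float]:
    """Return new display weights (larger = more prominent) after applying cue rules.

    `detected` is assumed to come from an upstream sound classifier; unknown
    labels are ignored, and the input dictionary is not modified.
    """
    weights = dict(base_weights)
    for cue in sorted(detected):
        rule = CUE_RULES.get(cue)
        if rule is None:
            continue  # a sound with no rule leaves the layout unchanged
        for category in rule["promote"]:
            if category in weights:
                weights[category] *= boost
        for category in rule["demote"]:
            if category in weights:
                weights[category] *= shrink
    return weights


base = {"burgers": 1.0, "hot_coffee": 1.0, "comfort_food": 1.0,
        "family_bundles": 1.0, "kids_meals": 1.0, "a_la_carte": 1.0}
print(apply_cues(base, {"rain", "children"}))
```

Keeping the rules in a plain table means the promotion logic can be audited or adjusted without retraining the sound classifier that feeds it.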

Even more bizarre is how the system reacts to your voice. If the AI detects a high pitch, rapid cadence, or frequent hesitations (classic signs of stress or cognitive overload), it actively removes items from the screen. By temporarily hiding less frequently ordered items and highlighting the most popular, easy-to-order combos, the AI artificially reduces your cognitive load. The menu looks simpler, guiding your brain to a faster decision without you ever realizing that an algorithm has just curated your choices.
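Hiding the less frequently ordered items can be expressed as a popularity cutoff that only applies above a stress threshold. This is a minimal sketch, assuming a stress score between 0 and 1 and historical order counts per item; the function name `visible_items`, the 0.7 threshold, and the top-3 cutoff are all assumptions for illustration.

```python
def visible_items(order_counts: dict[str, int], stress: float,
                  threshold: float = 0.7, keep: int = 3) -> list[str]:
    """List items to display, most frequently ordered first.

    Below the stress threshold every item is shown; at or above it, only the
    `keep` best sellers remain and the rest are temporarily hidden.
    """
    # Sort by descending order count, breaking ties alphabetically for stability.
    ranked = sorted(order_counts, key=lambda item: (-order_counts[item], item))
    if stress < threshold:
        return ranked
    return ranked[:keep]


counts = {"combo_1": 900, "combo_2": 750, "nuggets": 600, "salad": 120, "fish": 80}
print(visible_items(counts, stress=0.2))  # calm driver: full menu
print(visible_items(counts, stress=0.9))  # stressed driver: simplified menu
```

Nothing is deleted from the menu itself; the function only decides what is drawn on the board.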

How Neural Networks Decode Your Environment

How does a computer know what a stressed driver sounds like? The answer lies in the architecture of modern neural networks. When the drive-thru microphone captures sound, the system doesn’t treat it as one continuous stream. Instead, the audio is sliced into short frames, each only a few milliseconds long, and converted into spectrograms: visual representations of how the energy at each sound frequency changes over time.
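The slicing-and-converting step is a short-time Fourier transform. The sketch below computes a magnitude spectrogram in pure Python, assuming 25 ms frames taken every 10 ms (a common choice in speech processing) and a Hann window. Production code would use an FFT routine such as NumPy's `numpy.fft.rfft` instead of this naive O(N²) DFT, which is kept only so the example has no dependencies.

```python
import cmath
import math


def frames(signal: list[float], frame_len: int, hop: int) -> list[list[float]]:
    """Slice a signal into overlapping frames of frame_len samples, hop samples apart."""
    return [signal[start:start + frame_len]
            for start in range(0, len(signal) - frame_len + 1, hop)]


def magnitude_spectrum(frame: list[float]) -> list[float]:
    """Naive DFT of one Hann-windowed frame; returns |X[k]| for k = 0..N/2."""
    n = len(frame)
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]
    x = [s * w for s, w in zip(frame, window)]
    return [abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
            for k in range(n // 2 + 1)]


def spectrogram(signal: list[float], frame_len: int, hop: int) -> list[list[float]]:
    """Rows are time frames, columns are frequency bins."""
    return [magnitude_spectrum(f) for f in frames(signal, frame_len, hop)]


SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(800)]  # 0.1 s at 1 kHz
spec = spectrogram(tone, frame_len=200, hop=80)  # 25 ms frames, 10 ms hop
print(len(spec), len(spec[0]))
```

For this 1 kHz test tone sampled at 8 kHz, 200-sample frames give 40 Hz per frequency bin, so every row of the spectrogram peaks at bin 25. A neural network would then look for patterns across these rows and columns rather than in the raw waveform.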

Deep learning models, trained on millions of hours of drive-thru audio, scan these spectrograms for specific patterns. They perform feature extraction, isolating the hum of a specific type of engine, the frequency of a sigh, or the acoustic signature of a dog barking in the passenger seat. Because these networks process data in parallel, this entire analysis happens in a fraction of a second. By the time you roll down your window, the system has already built a probabilistic model of your current state of mind and adjusted the digital pixels on the screen accordingly.
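Turning extracted features into a probabilistic estimate of your state of mind can, in its simplest form, be a logistic function over a few features. This is a toy stand-in for the deep networks the paragraph describes: the feature names (`pitch_z`, `speech_rate`, `pause_ratio`), the weights, and the bias are invented for illustration, not learned from data.

```python
import math

# Invented weights for illustration only; a real model would learn its
# parameters (and use far richer features) from labelled audio.
WEIGHTS = {"pitch_z": 0.8, "speech_rate": 0.6, "pause_ratio": 3.0}
BIAS = -4.0


def stress_probability(features: dict[str, float]) -> float:
    """Combine acoustic features into P(stressed) via a logistic (sigmoid) function.

    pitch_z: pitch relative to a typical speaker, in standard deviations
    speech_rate: syllables per second
    pause_ratio: fraction of the utterance spent in hesitations
    Missing features default to 0.
    """
    score = BIAS + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-score))


calm = {"pitch_z": 0.0, "speech_rate": 3.5, "pause_ratio": 0.1}
rushed = {"pitch_z": 2.0, "speech_rate": 6.0, "pause_ratio": 0.3}
print(round(stress_probability(calm), 2), round(stress_probability(rushed), 2))
```

With these made-up weights, the calm speaker scores about 0.17 and the fast, high-pitched, hesitant speaker about 0.89. The output is a probability rather than a yes/no label, which lets later stages choose their own thresholds.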

The Role of LLMs in Conversational Ordering

Once you actually begin to speak, another layer of technology takes over. In recent years, the integration of large language models (LLMs) has revolutionized how these systems interact with humans. Older voice recognition software relied on rigid decision trees and fixed phrases; if you didn’t say the exact phrase “Number Two Combo,” the system would fail.
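To see why rigid systems broke so easily, here is a hypothetical phrase table of the kind a rule-based system might consult at one branch of its decision tree. The table contents and the `rigid_parse` name are illustrative assumptions; the point is that anything not in the table, however close in meaning, falls through.

```python
from typing import Optional

# Hypothetical phrase table: each accepted utterance maps to one menu code.
PHRASE_TABLE = {
    "number two combo": "COMBO_2",
    "large fries": "FRIES_L",
}


def rigid_parse(utterance: str) -> Optional[str]:
    """Exact-match lookup after trimming and lowercasing; anything else fails."""
    return PHRASE_TABLE.get(utterance.strip().lower())


print(rigid_parse("Number Two Combo"))        # recognized
print(rigid_parse("the number two, please"))  # same intent, but not recognized
```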

Today’s LLMs understand context, nuance, and even regional slang. If you say, “I need something to wake me up, and throw in some of those little potato things,” the LLM instantly translates this intent into an iced coffee and hash browns. But the LLM’s true power lies in its ability to dynamically upsell. Because it is connected to the acoustic profiling system, the LLM tailors its responses. If it knows you are in a rush (based on your rapid speech and engine revving), it won’t bother asking if you want to add a dessert. If the environment sounds relaxed, it might gently suggest a limited-time offer that pairs perfectly with your current order.
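The rush-aware upselling described here is a policy decision that can sit outside the language model itself. The sketch below shows only that policy layer, assuming a rush probability supplied by an upstream acoustic model and a hypothetical table pairing ordered items with add-ons; it does not call any real LLM API, and the 0.6 cutoff is an arbitrary example value.

```python
from typing import Optional


def upsell_suggestion(order: list[str], rush_probability: float,
                      pairings: dict[str, str],
                      rush_cutoff: float = 0.6) -> Optional[str]:
    """Pick one add-on to suggest, or None to skip the upsell entirely.

    rush_probability is assumed to come from an upstream acoustic model;
    pairings is a hypothetical table mapping ordered items to add-ons.
    """
    if rush_probability >= rush_cutoff:
        return None  # the driver sounds rushed: don't slow the line down
    for item in order:
        offer = pairings.get(item)
        if offer is not None and offer not in order:
            return offer  # first pairing the customer hasn't already ordered
    return None


PAIRINGS = {"iced_coffee": "limited_time_pastry", "cheeseburger": "apple_pie"}
order = ["iced_coffee", "hash_browns"]
print(upsell_suggestion(order, rush_probability=0.85, pairings=PAIRINGS))
print(upsell_suggestion(order, rush_probability=0.20, pairings=PAIRINGS))
```

In this arrangement the LLM would only phrase the suggestion; whether to make one at all is decided by simple, testable rules.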

From Speaker Box to Kitchen: Robotics and Automation

The fast food anomaly doesn’t stop at the digital menu board; it extends deep into the kitchen. The AI at the drive-thru is the central nervous system of a highly synchronized operation involving advanced robotics and automation.

When the acoustic profiling system predicts that you are highly likely to order a specific family meal based on the noise in your car, it doesn’t wait for you to finish speaking. It sends a predictive signal to the kitchen’s automated systems. Robotic fry dispensers might drop a basket of fries into the oil seconds before you actually order them. Automated beverage systems drop a cup of the exact size, fill it with ice, and pour the drink, timing it so the carbonation is still fresh when you reach the window.
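Pre-starting food is a threshold-and-timing problem: start an item only when the prediction is confident enough, and delay the start so it finishes as the car arrives. This sketch assumes per-item prep times, a predicted arrival time, and a 0.8 confidence threshold, all hypothetical values chosen for illustration.

```python
def schedule_prestart(predictions: dict[str, float], prep_seconds: dict[str, float],
                      eta_seconds: float, threshold: float = 0.8) -> list[tuple[str, float]]:
    """Return (item, start_delay_seconds) pairs, earliest start first.

    An item is pre-started only when its predicted order probability reaches
    the threshold and the kitchen knows its prep time. The start is delayed so
    that it finishes as the car reaches the window (never negative).
    """
    plan = []
    for item, probability in predictions.items():
        if probability < threshold or item not in prep_seconds:
            continue
        delay = max(0.0, eta_seconds - prep_seconds[item])
        plan.append((item, delay))
    return sorted(plan, key=lambda entry: entry[1])


prep = {"fries": 180.0, "cola": 20.0}
predicted = {"fries": 0.92, "cola": 0.85, "nuggets": 0.40}
print(schedule_prestart(predicted, prep, eta_seconds=200.0))
```

For a car 200 seconds from the window, the fries start after 20 seconds and the drink waits 180 seconds, so both finish together; the nuggets are not started because the prediction is too uncertain.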

This seamless integration between the predictive AI at the speaker box and the physical automation in the kitchen shaves crucial seconds off the transaction. It is a choreographed dance of data and machinery, all triggered by the subtle cues you unknowingly provided when you pulled up to the microphone.

What Happens If the System Misreads You?

A natural question arises: what happens if the AI gets it wrong? What if you are coughing, and the system misinterprets it as hesitation, hiding the exact menu item you wanted to order? Or what if the background noise is too chaotic for the neural networks to parse accurately?

Engineers have built in robust fail-safes to handle these edge cases. First, the digital menu board is designed to be interactive. If you ask for an item that has been dynamically hidden, the LLM instantly recognizes the request, adds it to your order, and the visual interface updates to reflect your choice. The system is designed to be a gentle guide, not an absolute dictator of your meal.
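The fail-safe for hidden items rests on one design rule: visibility controls what is displayed, never what can be ordered. A minimal sketch, with a hypothetical `request_item` function and plain Python collections standing in for the real menu state.

```python
def request_item(item: str, menu: set[str], visible: list[str], order: list[str]) -> bool:
    """Add `item` to the order even if the display had hidden it, then re-show it.

    Returns False only if the item isn't sold at all, leaving the order unchanged.
    """
    if item not in menu:
        return False
    order.append(item)
    if item not in visible:
        visible.append(item)  # the board updates to reflect the customer's choice
    return True


menu = {"combo_1", "combo_2", "salad"}
visible = ["combo_1", "combo_2"]  # "salad" was hidden to simplify the board
order: list[str] = []
request_item("salad", menu, visible, order)
print(order, visible)
```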

Furthermore, there is always a human in the loop. If the AI detects a high level of confusion, a drop in conversational confidence, or a request it cannot fulfill, it seamlessly hands the interaction over to a human employee inside the restaurant. The customer rarely notices the transition; the human simply takes over the headset, resolving the issue before the drive-thru line stalls.
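A confidence-based hand-off could look like the monitor below. It is a sketch under stated assumptions: per-turn confidence scores between 0 and 1, a three-turn rolling average with a 0.6 floor, and a limit of two requests to repeat before a human takes over. None of these values come from a real deployment.

```python
from collections import deque


class HandoffMonitor:
    """Decide when a human should take over the lane (assumed policy)."""

    def __init__(self, floor: float = 0.6, window: int = 3, max_repeats: int = 2):
        self.floor = floor
        self.recent = deque(maxlen=window)  # rolling window of turn confidences
        self.max_repeats = max_repeats
        self.repeats = 0

    def observe(self, confidence: float, asked_to_repeat: bool = False,
                can_fulfill: bool = True) -> bool:
        """Record one conversational turn; return True if a human should take over."""
        self.recent.append(confidence)
        if asked_to_repeat:
            self.repeats += 1
        if not can_fulfill or self.repeats >= self.max_repeats:
            return True
        window_full = len(self.recent) == self.recent.maxlen
        return window_full and sum(self.recent) / len(self.recent) < self.floor


monitor = HandoffMonitor()
for score in (0.9, 0.5, 0.3):
    print(monitor.observe(score))
```

Averaging over a few turns keeps a single cough or misheard word from triggering a hand-off, while a request the system cannot fulfill escalates immediately.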

In Brief (TL;DR)

Fast food restaurants deploy advanced artificial intelligence to transform traditional static menu boards into dynamically shifting digital interfaces.

By utilizing acoustic profiling, hidden neural networks instantly analyze ambient sounds like rain or crying children through the microphone.

These predictive algorithms secretly adjust the displayed options to reduce cognitive load, guiding customers toward faster purchasing decisions.

Conclusion

The next time you visit a drive-thru, take a moment to observe the digital menu board before you speak. The subtle shifts in its layout, the sudden appearance of a warm beverage on a chilly night, or the simplification of combo meals when your car is loud are not random occurrences. They are the result of a profound technological evolution.

The fast food anomaly reveals how artificial intelligence is moving beyond our smartphones and computers, quietly embedding itself into the physical infrastructure of our daily lives. By analyzing our acoustic environments and managing our cognitive load, these systems are fundamentally altering how we make choices. The drive-thru is no longer just a place to buy a quick meal; it is a sophisticated, AI-driven environment that knows what you want—and how you feel—often before you even say a word.

Frequently Asked Questions

Artificial intelligence uses acoustic profiling to analyze background noise and voice patterns in real time while you wait in your car. Based on these environmental sounds, the system dynamically updates the digital board to highlight specific items and hide others. This personalized approach helps simplify your choices and significantly speeds up the entire ordering process.

Acoustic profiling is an advanced technology that captures audio through the speaker box to detect environmental sounds and biometric voice markers. Neural networks process this audio to identify factors like heavy rain, specific engine types, or even stressed voices. The restaurant system then uses this data to predict what food you might want and adjusts the display accordingly.

Restaurants hide specific options to reduce cognitive load and prevent decision fatigue when they detect a customer might be stressed or in a hurry. By displaying fewer choices and highlighting popular combo meals, the algorithm helps customers make faster and easier decisions. This strategy ultimately prevents long lines and increases the overall revenue and efficiency of the restaurant.

Yes, modern ordering systems use large language models that easily understand context, regional slang, and highly nuanced requests. Instead of relying on rigid phrases, these advanced models can translate casual speech into precise food orders without missing a beat. They also adjust their upselling strategies based on how rushed or relaxed your voice sounds during the interaction.

If the automated system misinterprets your request or detects high levels of confusion, it relies on robust built-in fail-safes to prevent delays. A human employee monitoring the system will seamlessly take over the headset to complete the transaction without you noticing. Furthermore, you can always ask for hidden items, and the interface will instantly update to include your exact choices.


Did you find this article helpful? Is there another topic you’d like to see me cover?

Write it in the comments below! I take inspiration directly from your suggestions.