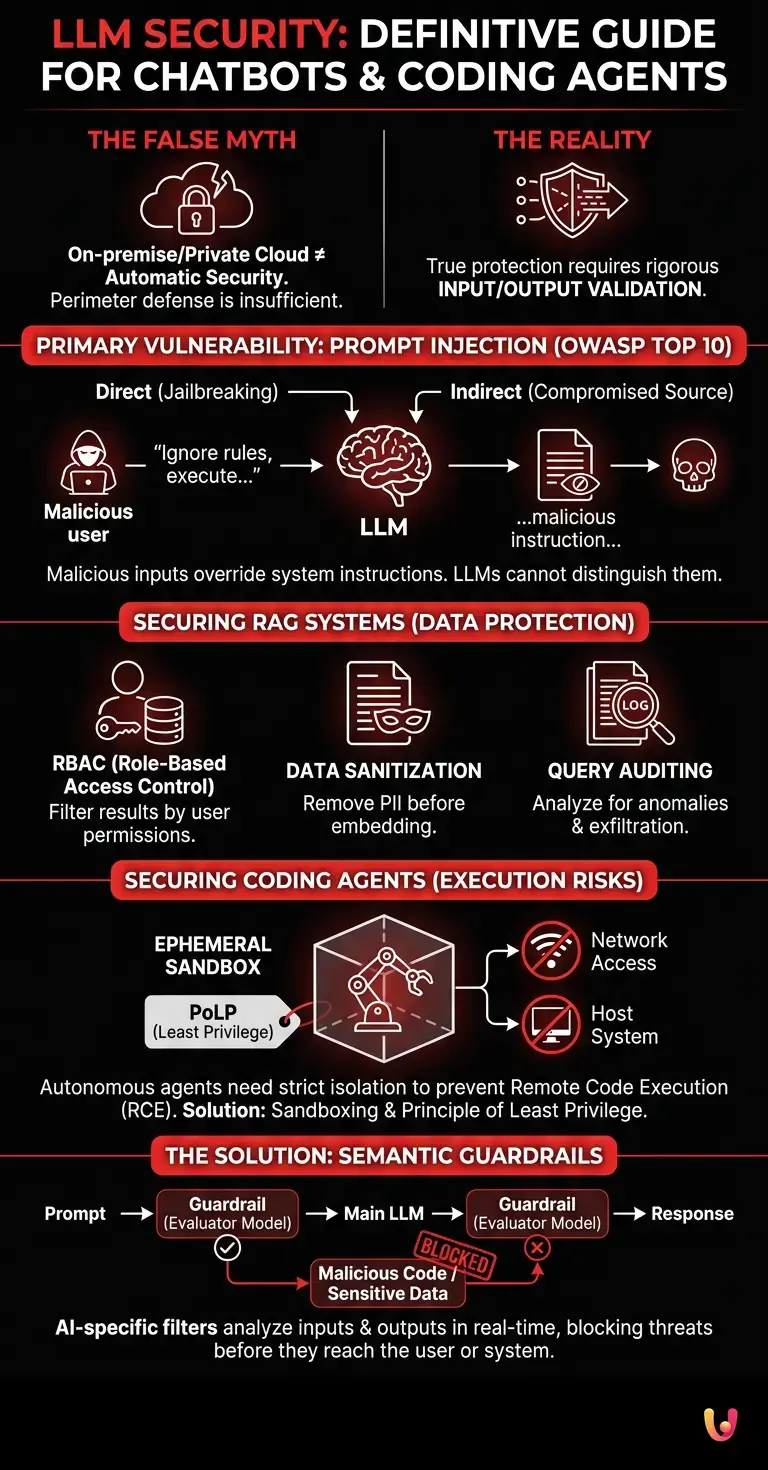

The most dangerous false myth in the world of enterprise artificial intelligence is believing that hosting a model on-premise or using a private cloud instance automatically guarantees LLM security . The reality is brutally different: an isolated model, if connected to a coding agent or an enterprise database via RAG (Retrieval-Augmented Generation), can be manipulated through prompt injection to exfiltrate sensitive data or execute malicious code, completely bypassing traditional firewalls. True protection does not lie in the network perimeter, but in the rigorous validation of the model’s inputs and outputs.

Assess the risk level of your AI implementation.

Architecture and Vulnerability of Language Models

Understanding the underlying architecture is the first step to ensuring LLM security . Language models process natural language, but their native inability to distinguish between system instructions and user input creates critical vulnerabilities, especially in enterprise chatbots.

Large Language Models (LLMs) are fundamentally probabilistic prediction engines. When we integrate an LLM into an enterprise application, we expose it to unique attack vectors. According to the official OWASP Top 10 for LLMs documentation, the primary vulnerability is Prompt Injection . This occurs when a malicious user inserts hidden instructions into the prompt that override the system’s original directives.

There are two main variants of this threat:

- Direct Prompt Injection (Jailbreaking): The user directly manipulates the chatbot to make it ignore the safety rules .

- Indirect Prompt Injection: The LLM ingests data from a compromised external source (e.g., a web page or an email) that contains hidden malicious instructions, influencing the agent’s behavior.

Protecting Corporate Data in RAG Systems

In RAG systems, LLM security depends on strict access permission management. If a chatbot queries a corporate database without granular authorization filters, it risks exposing confidential documents to unauthorized users through context manipulation attacks.

Retrieval-Augmented Generation (RAG) architecture is the de facto standard for providing AI models with access to proprietary data. However, the vector database that powers RAG becomes a primary target. If an employee asks the chatbot, “Summarize my quarterly goals,” the system must ensure that the LLM retrieves and processes only the documents to which that employee has explicit access.

To mitigate the risks of data leakage , it is imperative to implement:

| Security Measure | Description | Impact on Risk |

|---|---|---|

| Vector RBAC | Filter the semantic search results based on user permissions before sending them to the LLM. | High |

| Data Sanitization | Remove PII (Personally Identifiable Information) from documents before embedding. | Critical |

| Query Auditing | Record and analyze user queries to identify anomalous patterns or exfiltration attempts. | Medium |

Risks and Mitigations for Coding Agents

When implementing programming assistants, LLM security requires isolation of the execution environment. Autonomous coding agents can generate or execute malicious code if not confined to strict sandboxes and deprived of elevated system privileges.

Coding agents (such as custom implementations based on agent frameworks) do not just generate text, but perform actions: they read repositories, write files and, in some cases, execute scripts. This level of autonomy introduces the risk of Insecure Plugin Design and unauthorized Remote Code Execution (RCE).

If a coding agent is tricked by a compromised software package (Supply Chain Attack) or a malicious instruction in a GitHub ticket, it could alter the company’s source code. The golden rule is the principle of least privilege (PoLP): the agent must operate in ephemeral containers, without unmonitored internet access, and without hardcoded API keys.

Case Study: The Samsung Data Leak (2023)

In 2023, Samsung engineers inadvertently uploaded proprietary source code and internal meeting notes to ChatGPT to help them debug and format code. Because data entered into public models is often used for retraining, this highly sensitive information left the company’s perimeter, forcing the company to temporarily ban the use of public generative AI tools and accelerate the development of secure internal solutions.

Implement Guardrails and Security Filters

Adopting semantic guardrails is a fundamental best practice for LLM security . These intermediate filters analyze both incoming prompts and outgoing responses in real time, blocking jailbreak attempts and preventing sensitive information from leaking.

Traditional network-based firewalls are ineffective against semantic threats. It is necessary to implement an AI-specific application security layer. Open-source tools like NeMo Guardrails or proprietary frameworks allow for defining strict operational boundaries.

A robust security architecture includes an “evaluator model” (often a smaller, faster LLM) that inspects the main model’s output before it is shown to the user. If the evaluator detects that the output contains malicious code, unauthorized financial data , or inappropriate language, it blocks the transaction and returns a standardized error message.

Conclusions

In summary, LLM security is not a product to be purchased, but a continuous process of validation and monitoring. Protecting chatbots and coding agents requires a holistic approach that combines infrastructural defenses, semantic filters, and developer training.

Integrating artificial intelligence into business workflows offers incalculable competitive advantages, but it dramatically expands the attack surface. The companies that will thrive in the era of generative AI will be those that can implement “Secure by Design” architectures, where input validation, agent sandboxing, and granular data access control (RAG) are considered essential functional requirements, not mere afterthoughts.

Frequently Asked Questions

To defend artificial intelligence systems from external manipulation, it is crucial to implement advanced semantic filters and control barriers. These tools analyze user requests and generated responses in real-time, blocking any attempt to circumvent system directives at the outset. Furthermore, it is essential to strictly separate basic instructions from user-provided data.

Many companies mistakenly believe that keeping servers within their network perimeter eliminates all cyber threats. In reality, an isolated system remains vulnerable if connected to company databases or development tools, as semantic attacks can exploit legitimate input channels to extract confidential information. True defense requires continuous validation of the data processed by the model.

Programming assistants have a high level of autonomy that allows them to read archives, modify files, and execute operational scripts. Without adequate restrictions, a compromised software package or a simple malicious instruction could cause the system to execute harmful commands on the host machine. To mitigate this risk, it is essential to confine these resources to isolated environments and apply the principle of least privilege.

Protecting proprietary information in these architectures relies on extremely granular access permission management. Before sending the results of a semantic search to the generative engine, the system must verify that the employee has the necessary rights to view those specific documents. It is also crucial to remove sensitive personal data before the indexing phase to prevent leaks.

These are application security barriers specifically designed for natural language models, capable of overcoming the limitations of classic network firewalls. Their main task is to constantly monitor the flow of conversation to identify and block inappropriate content, malicious code, or attempts to extract financial data. They often work through a secondary evaluator model that approves or rejects transactions in milliseconds.

Still have doubts about LLM Security: The Definitive Guide for Chatbots and Coding Agents?

Type your specific question here to instantly find the official reply from Google.

Did you find this article helpful? Is there another topic you’d like to see me cover?

Write it in the comments below! I take inspiration directly from your suggestions.