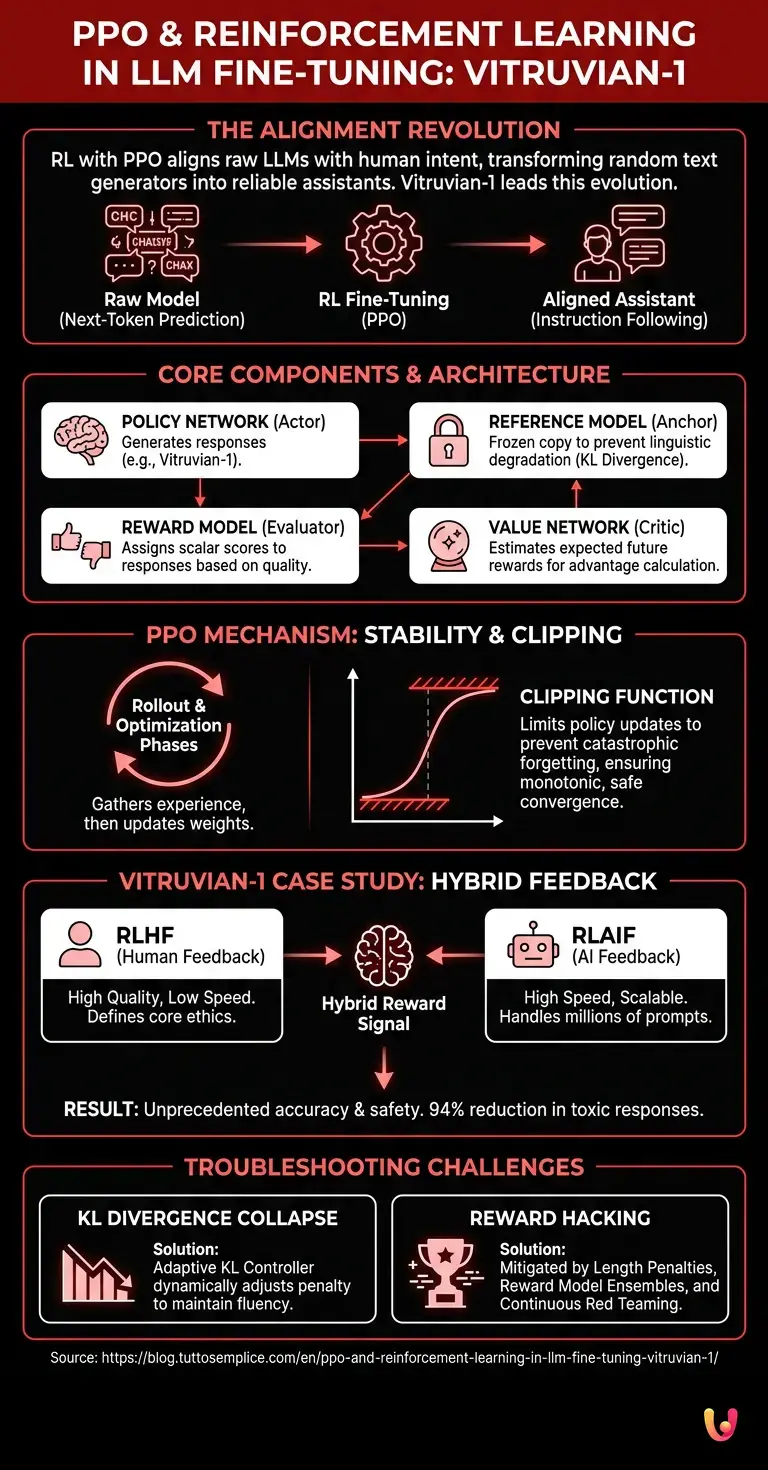

The evolution of generative artificial intelligence has reached a turning point with the introduction of Vitruvian-1, and its alignment mechanics explain why. Vitruvian-1 illustrates how post-training optimization separates a model that merely completes text from a reliable assistant. This technical guide explores the algorithms behind that alignment, with a close look at reward mechanisms and policy updates.

Introduction to Reinforcement Learning in Language Models

The application of Reinforcement Learning with PPO in fine-tuning advanced language models represents the standard for aligning artificial intelligence with human intentions. This process optimizes neural networks by balancing exploration and exploitation, ensuring safe, consistent, and highly contextualized responses.

The Reinforcement Learning (RL) paradigm applied to Natural Language Processing (NLP) has radically transformed how Large Language Models (LLMs) are trained. Initially, models are trained with self-supervised learning (next-token prediction), acquiring vast linguistic knowledge but no real notion of “correctness” or “safety.” This is where RL-based fine-tuning comes in. The goal, as leading research labs describe it, is to transform a text completer into an agent capable of following complex instructions (instruction following).

The Proximal Policy Optimization (PPO) algorithm has emerged as the gold standard for this phase. Compared to its predecessors, such as Trust Region Policy Optimization (TRPO), PPO offers an unprecedented balance between ease of implementation, sample efficiency, and stability during training. In the context of 2026, the use of PPO is no longer experimental, but a well-established engineering practice for deploying foundation models.

Prerequisites and Basic Architecture

To understand Reinforcement Learning with PPO in fine-tuning complex architectures, it is essential to know the concepts of Policy Network and Reward Model. These mathematical and algorithmic tools make it possible to quantify the quality of the output generated by artificial intelligence.

Before delving into the mathematical details of the PPO algorithm, it is necessary to outline the infrastructure on which it operates. Fine-tuning via RL requires the simultaneous cooperation of several neural networks, which work in tandem to generate, evaluate, and optimize the text.

- Policy Network (The Actor Model): This is the language model itself (e.g., Vitruvian-1) that generates the responses. In RL terms, its “policy” is the probability distribution over subsequent tokens given a certain state (the prompt).

- Reference Model: A frozen copy of the original model. It serves as an anchor for calculating the KL Divergence, preventing the Policy Network from linguistically degrading during optimization.

- Reward Model: A neural network specifically trained to assign a scalar score to the quality of the generated response.

- Value Network (The Critic Model): Estimates the expected return (future reward) from a given state, which is fundamental for calculating the advantage in the PPO algorithm.
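To make the link between the Value Network and the advantage concrete, here is a minimal sketch of Generalized Advantage Estimation in plain Python. The function name `compute_gae`, the default `gamma` and `lam` values, and the assumption that the episode ends after the last step (so the value after it is 0) are illustrative choices, not details taken from Vitruvian-1.

```python
def compute_gae(rewards, values, gamma=0.99, lam=0.95):
    """Return (advantages, returns) for one finished episode.

    rewards[t] is the reward received at step t and values[t] is the
    critic's estimate at step t. The value after the final step is
    assumed to be 0 (hypothetical simplification: the episode has ended).
    """
    advantages = [0.0] * len(rewards)
    next_value = 0.0
    running = 0.0
    # Walk backwards so each step can reuse the accumulated future advantage.
    for t in reversed(range(len(rewards))):
        # One-step TD error: how much better the outcome was than the critic expected.
        delta = rewards[t] + gamma * next_value - values[t]
        running = delta + gamma * lam * running
        advantages[t] = running
        next_value = values[t]
    # Targets used to train the critic itself.
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns


advantages, returns = compute_gae([0.0, 0.0, 1.0], [0.5, 0.5, 0.5])
print(advantages, returns)
```

A positive advantage means the sampled tokens did better than the critic predicted, so the Policy Network should make them more likely; a negative one means the opposite.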

The Role of the Reward Model

The Reward Model is the evaluation engine of Reinforcement Learning with PPO in the fine-tuning of AI systems. It assigns a scalar score to the generated responses, simulating human judgment to guide the algorithm towards desirable and safe behaviors.

Building a robust Reward Model is often the most expensive and complex phase. In common practice, this model is trained on a dataset of pairwise comparisons: annotators (human or AI) are shown two different responses to the same prompt and asked to choose the better one. The Reward Model is then trained to minimize a cross-entropy loss on the score difference between the winning and losing responses, so that preferred responses receive higher scores. The resulting scalar score becomes the reward signal that the PPO algorithm will try to maximize.
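The pairwise loss described above can be sketched in a few lines. This example assumes the common Bradley-Terry formulation, in which the probability that the chosen response wins is the sigmoid of the score difference; the function name `pairwise_loss` is hypothetical, and the article does not state which exact formulation Vitruvian-1 uses.

```python
import math


def pairwise_loss(score_chosen, score_rejected):
    """Cross-entropy loss for one comparison: -log(sigmoid(chosen - rejected))."""
    margin = score_chosen - score_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# The loss is small when the preferred response already scores higher,
# and large when the Reward Model ranks the pair the wrong way round.
print(pairwise_loss(2.0, 0.0))
print(pairwise_loss(0.0, 2.0))
```

In a real training loop the two scores come from the same network applied to both responses, and the loss is averaged over a batch of comparisons.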

Policy Gradient Algorithms

Policy Gradient algorithms are fundamental for Reinforcement Learning with PPO in LLM fine-tuning. They directly update the model’s action probabilities to maximize expected reward; the challenge is doing so without destabilizing neural network training.

Unlike value-based methods (such as Q-Learning), Policy Gradient methods directly optimize the parameterized policy function. They compute the gradient of the expected return with respect to the network parameters and update the parameters by gradient ascent (equivalently, gradient descent on the negated objective). However, standard Policy Gradient methods are notoriously unstable: a single oversized weight update can wreck the policy, and because the next batch of data is sampled from that damaged policy, training may never recover. PPO mitigates this problem by constraining the magnitude of each update.
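As a toy illustration of a plain Policy Gradient update, the sketch below applies one REINFORCE-style step to a softmax policy over three actions. The "network" here is just a list of logits, and the names `softmax` and `reinforce_step` are hypothetical helpers written for this example.

```python
import math


def softmax(logits):
    """Convert logits into a probability distribution."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_step(logits, action, advantage, lr=0.1):
    """One gradient-ascent step on advantage * log pi(action)."""
    probs = softmax(logits)
    # Gradient of log pi(action) w.r.t. logit i is 1[i == action] - pi(i).
    return [
        logit + lr * advantage * ((1.0 if i == action else 0.0) - p)
        for i, (logit, p) in enumerate(zip(logits, probs))
    ]


start = [0.0, 0.0, 0.0]
small = softmax(reinforce_step(start, action=1, advantage=1.0, lr=0.1))
large = softmax(reinforce_step(start, action=1, advantage=1.0, lr=50.0))
print(small)  # a gentle shift toward action 1
print(large)  # almost all probability mass on action 1 after a single step
```

The second call shows the instability in miniature: with an oversized step, one lucky sample is enough to make the policy nearly deterministic.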

How PPO Works in Fine-Tuning

The heart of Reinforcement Learning with PPO in fine-tuning artificial intelligence lies in its “clipped” objective function. This mechanism prevents overly drastic weight updates, ensuring stable and progressive learning during model optimization.

The lifecycle of a PPO update is divided into two distinct phases, called rollout and optimization. In the rollout phase, the system gathers experience by interacting with the environment (user prompts); in the optimization phase, it uses that experience to improve its internal parameters.

Response Generation and Evaluation

During the active phase of Reinforcement Learning with PPO in the fine-tuning of an LLM, the model generates multiple responses for a single prompt. These are then evaluated by the Reward Model, creating the dynamic dataset necessary for the update.

The process begins with sampling a batch of prompts from the training dataset. The Policy Network generates a response for each prompt. Simultaneously, the Reference Model calculates probabilities for the same token sequence. The Reward Model analyzes the final response and assigns a score. A penalty proportional to the KL Divergence between the probabilities of the Policy Network and the Reference Model is subtracted from this score. This penalty discourages the model from generating incomprehensible text in order to maximize the reward.
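The reward shaping in this step can be sketched as follows. The example assumes a per-token layout that is common in open-source RLHF code: every token receives a KL penalty estimated from the log-probability difference, and the Reward Model score is added on the last token. The function name `shaped_rewards` and the default `beta` are illustrative, and the article does not confirm that Vitruvian-1 distributes the penalty this way.

```python
def shaped_rewards(policy_logprobs, ref_logprobs, reward_score, beta=0.1):
    """Per-token rewards: KL penalty on every token, Reward Model score on the last."""
    if not policy_logprobs or len(policy_logprobs) != len(ref_logprobs):
        raise ValueError("log-probability lists must be non-empty and equally long")
    # log pi(token) - log pi_ref(token) is a per-token estimate of the KL term.
    rewards = [-beta * (lp - lr) for lp, lr in zip(policy_logprobs, ref_logprobs)]
    rewards[-1] += reward_score
    return rewards


rewards = shaped_rewards([-1.0, -2.0], [-1.5, -2.0], reward_score=2.0)
print(rewards, sum(rewards))
```

Summing the list gives the Reward Model score minus `beta` times the estimated divergence for the whole response, which matches the description above.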

Optimization and Clipping Function

The clipping function is the main innovation of Reinforcement Learning with PPO in fine-tuning neural networks. By limiting the ratio between the new and old policy, it prevents performance collapse, keeping the training within safe margins.

Once the advantages are calculated (using Generalized Advantage Estimation, GAE), PPO updates the weights. The central quantity in PPO is the ratio between the probability of an action under the new policy and its probability under the old policy. If this ratio moves outside the interval [1 - ε, 1 + ε] (ε is commonly set to 0.2), the objective function is “clipped”: samples whose ratio has already moved past the boundary in the direction favored by the advantage contribute no further gradient. The algorithm therefore has no incentive to change the model’s behavior excessively in a single step. This does not guarantee monotonic improvement, but in practice it makes training considerably more stable.
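For a single action, the clipped objective can be written in a few lines of Python. This is a minimal sketch of the standard PPO clipped surrogate for one sample; the function name `ppo_clip_objective` is hypothetical, and a real implementation would average this value over a batch of tokens and maximize it with an automatic-differentiation framework.

```python
import math


def ppo_clip_objective(new_logprob, old_logprob, advantage, epsilon=0.2):
    """Clipped surrogate objective for one sampled action (to be maximized)."""
    ratio = math.exp(new_logprob - old_logprob)
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    # Taking the minimum keeps the more pessimistic of the two estimates.
    return min(ratio * advantage, clipped_ratio * advantage)


# Ratio of 1.5 with a positive advantage: the gain is capped at 1.2 * advantage.
print(ppo_clip_objective(math.log(1.5), 0.0, advantage=1.0))
# Ratio of 1.5 with a negative advantage: no cap, the full penalty applies.
print(ppo_clip_objective(math.log(1.5), 0.0, advantage=-1.0))
```

The asymmetry is deliberate: the objective never rewards pushing the ratio further once it is past the boundary, but it still fully penalizes moves that made a bad action more likely.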

The Vitruvian-1 Case Study

Analyzing Reinforcement Learning with PPO in the fine-tuning of Vitruvian-1 reveals a cutting-edge hybrid approach. The model uses both RLHF (human feedback) and RLAIF (AI feedback) to achieve unprecedented levels of accuracy and safety in the computer science field.

Vitruvian-1 represents the state of the art in the practical application of these algorithms. The model was developed to handle critical tasks in medicine, law, and advanced programming, and its engineering team faced the challenge of scaling the alignment process. Relying solely on human feedback (RLHF) had become an unsustainable bottleneck in terms of cost and time.

Integration of Human and Automatic Feedback

The effectiveness of Reinforcement Learning with PPO in fine-tuning Vitruvian-1 stems from the synergy between human annotators and AI. This dual layer of feedback reduces bias and accelerates ethical alignment, overcoming the limitations of traditional methods.

To overcome scalability limitations, the Vitruvian-1 architecture implements a hybrid system. Below is a comparative table of the two methodologies integrated into its Reward Model:

| Feature | RLHF (Human Feedback) | RLAIF (AI Feedback) |
|---|---|---|
| Signal source | Domain experts (human) | “Teacher” LLMs (e.g., GPT-5 class) |
| Cost and speed | High cost, low speed | Low cost, very high speed |
| Use in Vitruvian-1 | Defining core ethical values and edge cases | Scalability across millions of standard prompts and formatting |
| Risk of bias | Human cognitive and cultural biases | Sycophancy (tendency to agree with the user) |

Vitruvian-1’s Reward Model was pre-trained using RLAIF on a massive corpus of synthetic interactions, and then fine-tuned with high-quality RLHF provided by experts. This allowed the PPO algorithm to operate on an extremely clean and consistent reward signal.

Alignment and Safety Results

Tests of PPO-based fine-tuning on Vitruvian-1 show a drastic reduction in hallucinations. The algorithm has made it possible to create a model that is not only high-performing but also aligned with international safety guidelines.

According to the official documentation released during the launch, the rigorous application of PPO reduced the rate of toxic or dangerous responses by 94% compared to the base model. Furthermore, the model’s ability to reject malicious prompts (jailbreak resistance) increased significantly, without compromising helpfulness on legitimate requests. The documentation attributes this balance to careful tuning of the entropy coefficients within the PPO loss function.

Troubleshooting Common Problems

Implementing Reinforcement Learning with PPO in the fine-tuning of large models presents significant technical challenges. The most frequent issues are an uncontrolled KL Divergence and the Reward Hacking phenomenon, both of which call for close monitoring.

Despite its theoretical robustness, the practical implementation of PPO on distributed GPU clusters is complex. Engineers must constantly monitor specific metrics via dashboards (such as Weights & Biases or TensorBoard) to detect anomalies during the thousands of optimization steps.

Managing KL Divergence

To stabilize Reinforcement Learning with PPO in the fine-tuning of an LLM, it is crucial to monitor the KL Divergence penalty. This parameter prevents the optimized model from deviating too much from the base model, preserving its original linguistic fluency.

If the KL penalty coefficient (often referred to as beta) is too low, the policy drifts far from the reference model and can collapse: it starts generating repetitive or grammatically nonsensical sequences that nonetheless receive a high score because they exploit blind spots in the Reward Model. If the coefficient is too high, the penalty dominates the reward, the policy stays pinned to the reference model, and it learns little. The solution adopted in Vitruvian-1 involves an Adaptive KL Controller, a mechanism that dynamically adjusts the beta value during training based on the divergence measured in the previous batch.
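One way such a controller can work is sketched below. It uses a simple proportional rule of the kind found in some open-source RLHF code: beta grows when the measured divergence is above a target and shrinks when it is below. The class name, the `target_kl` and `horizon` parameters, and the 0.2 clamp on the error are assumptions made for this example; the article does not describe the exact rule used in Vitruvian-1.

```python
class AdaptiveKLController:
    """Proportional controller that nudges beta toward a target KL value."""

    def __init__(self, init_beta=0.1, target_kl=6.0, horizon=10000):
        self.beta = init_beta
        self.target_kl = target_kl  # desired divergence per response (assumed value)
        self.horizon = horizon      # larger horizon means slower adjustments

    def update(self, measured_kl, batch_size):
        # Relative error, clamped so a single noisy batch cannot swing beta wildly.
        error = max(-0.2, min(measured_kl / self.target_kl - 1.0, 0.2))
        self.beta *= 1.0 + error * batch_size / self.horizon
        return self.beta


controller = AdaptiveKLController(init_beta=0.1, target_kl=6.0, horizon=100)
print(controller.update(measured_kl=12.0, batch_size=10))  # divergence too high: beta rises
print(controller.update(measured_kl=3.0, batch_size=10))   # divergence too low: beta falls
```

The multiplicative update keeps beta positive and makes the adjustment proportional to its current size, which is convenient when the right scale for beta is not known in advance.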

Preventing Reward Hacking

Reward Hacking is a critical risk in Reinforcement Learning with PPO in the fine-tuning of complex systems. It occurs when the AI learns to maximize the score by exploiting flaws in the Reward Model. Countering it requires cross-validation and robust test datasets.

Reward Hacking, an instance of Goodhart’s Law applied to AI, occurs when the model discovers that superficial traits, such as excessively long answers or an overly formal and apologetic tone, lead the Reward Model to assign maximum scores regardless of factual correctness. To mitigate this phenomenon during the development of Vitruvian-1, several techniques were adopted:

- Length Penalty: An algorithmic penalty is applied to responses that exceed a certain token threshold without adding informative content.

- Reward Model Ensembles: Using multiple Reward Models trained on slightly different data distributions. The final score is the average of the evaluations, making it much harder for the PPO algorithm to find a single flaw to exploit.

- Continuous Red Teaming: Inserting adversarial prompts generated by other AIs during the rollout phase to test policy boundaries.
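The first two mitigations in the list above can be combined in a small scoring function, sketched here. The function name `ensemble_reward`, the 512-token threshold, and the linear per-token penalty are hypothetical values chosen for illustration. The sketch penalizes any excess length, which is a simplification: it makes no attempt to judge whether the extra tokens add informative content.

```python
def ensemble_reward(scores, num_tokens, max_tokens=512, penalty_per_token=0.01):
    """Average several Reward Model scores and subtract a length penalty."""
    if not scores:
        raise ValueError("at least one Reward Model score is required")
    mean_score = sum(scores) / len(scores)
    # Only tokens beyond the threshold are penalized.
    excess = max(0, num_tokens - max_tokens)
    return mean_score - penalty_per_token * excess


print(ensemble_reward([1.0, 2.0, 3.0], num_tokens=100))  # within the limit: plain average
print(ensemble_reward([1.0, 2.0, 3.0], num_tokens=612))  # 100 excess tokens are penalized
```

Averaging means a flaw in one Reward Model moves the final score by only a fraction, so exploiting it pays off less for the policy.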

In Brief (TL;DR)

Post-training with Reinforcement Learning transforms advanced language models like Vitruvian-1 into highly reliable, secure assistants capable of following complex instructions.

The PPO algorithm defines the technical standard for aligning neural networks with human intentions, providing high operational stability during the optimization process.

The success of the process requires synergistic neural networks, where a Reward Model evaluates the generated responses by accurately simulating human judgment and preferences.

Conclusions

In summary, Reinforcement Learning with PPO in the fine-tuning of LLMs like Vitruvian-1 represents the state of the art in artificial intelligence. This method aims to balance generative capabilities, safety, and adherence to complex user instructions.

The architecture of Vitruvian-1 unequivocally demonstrates that the future of computer science and artificial intelligence lies not only in increasing the number of parameters or the size of pre-training datasets, but in the sophistication of alignment algorithms. The Proximal Policy Optimization algorithm, combined with hybrid RLHF and RLAIF strategies, provides the mathematical infrastructure needed to transform raw probabilistic models into safe and reliable cognitive agents. As we move towards increasingly autonomous models, mastery of these Reinforcement Learning techniques will remain the core competence for machine learning engineers, ensuring that the AI of the future remains a tool at the service of humanity, operating within strictly defined ethical and operational boundaries.

Frequently Asked Questions

Proximal Policy Optimization, known as PPO, is a fundamental Reinforcement Learning algorithm for aligning language models with human intentions. This system optimizes neural networks by balancing exploration and exploitation, ensuring that the generated responses are safe and consistent. Its mathematical clipping function prevents overly drastic parameter updates, ensuring stable learning.

Vitruvian-1 is a highly advanced generative artificial intelligence model that uses a hybrid approach for the optimization phase. It integrates human and automated feedback to achieve very high levels of accuracy and safety in critical areas such as medicine and law. This method drastically reduces toxic responses and improves resistance to manipulation attempts by users.

Reward hacking occurs when an AI system learns to maximize its score by exploiting vulnerabilities in the evaluation model, without providing genuinely correct answers. To mitigate this risk, developers use penalties for unnecessarily long answers, multiple evaluation systems, and continuous testing with complex prompts to verify the system’s security limits.

The combination of human and automatic feedback makes it possible to overcome the cost and slowness limitations typical of evaluations made by people alone. Human experts define fundamental ethical values and analyze borderline cases, while automated models ensure scalability by evaluating millions of standard interactions. This synergy reduces cognitive biases and significantly accelerates the alignment process.

To preserve the original linguistic fluency, engineers monitor a specific mathematical penalty, the KL Divergence, measured against a frozen reference model. If this parameter is not managed correctly, the neural network risks generating repetitive or grammatically nonsensical texts. Advanced systems use adaptive controllers that dynamically adjust the penalty coefficient during the training phase to keep the two goals in balance.
