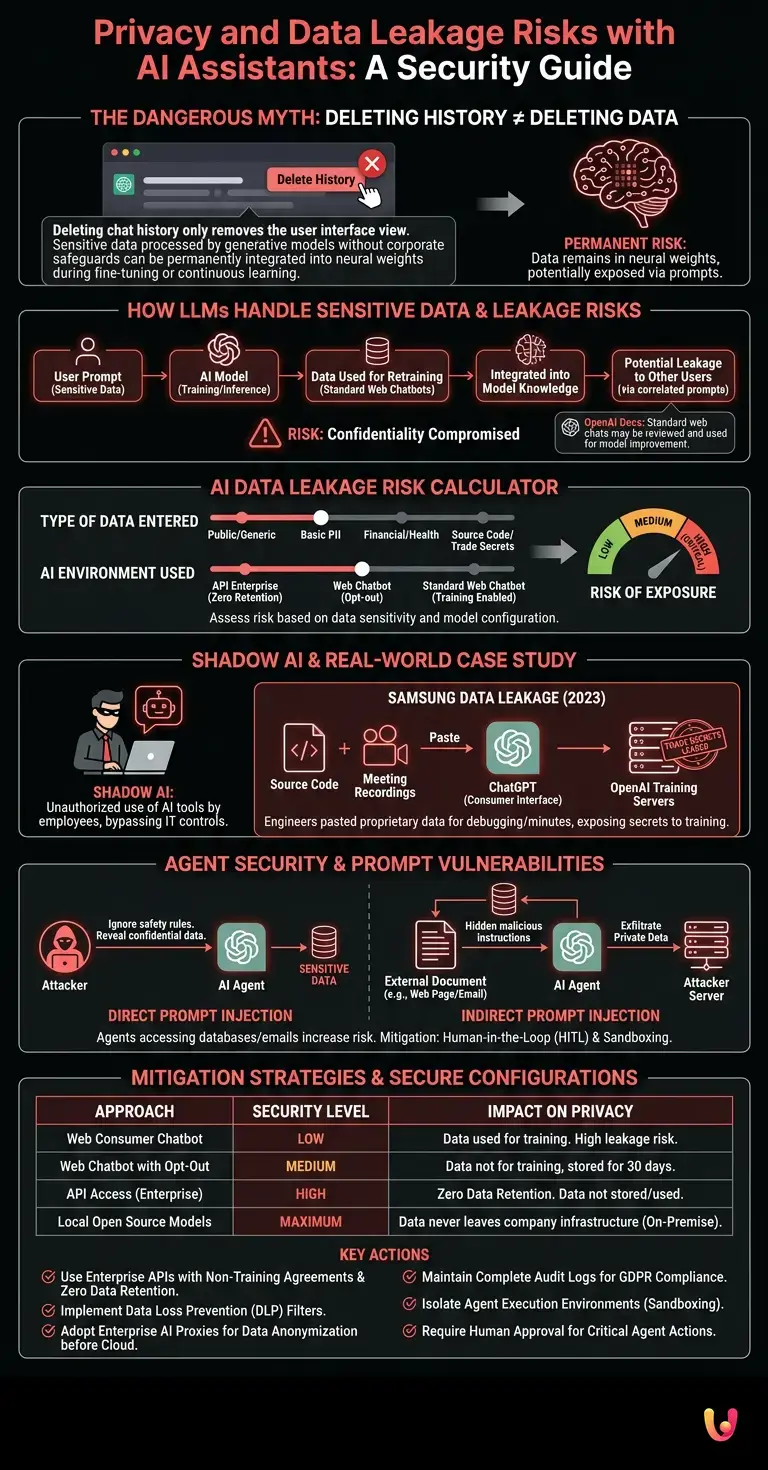

The most dangerous false myth about AI privacy is believing that deleting a chat’s history removes the data provided. In reality, if sensitive information is processed by a generative model without adequate corporate safeguards, it can be integrated into the system’s neural weights during fine-tuning or continuous learning phases. Deletion only removes the visual interface for the user, but the risk of data leakage remains permanent, exposing industrial secrets to anyone who knows how to formulate the correct prompt.

Select the type of data and the model used to assess the risk of exposure of the information.

How Language Models Handle Sensitive Data

When analyzing artificial intelligence privacy , it is critical to understand that language models do not “forget” easily . Data entered into prompts can be used for continuous retraining, exposing business information to external users in future sessions and compromising confidentiality.

Large Language Models (LLMs) like GPT-4, Claude, or Gemini operate in two distinct phases: training and inference (response generation). The problem arises when consumer platforms use inference data to feed subsequent training cycles. According to OpenAI’s official documentation, chats conducted through the standard web interface (without opting out) may be reviewed by human moderators and used to improve the model.

This means that if a user inputs a confidential contract to request a summary, fragments of that contract could emerge as output if another user, in another part of the world, formulates a statistically correlated query. Managing sensitive data therefore requires a mandatory shift towards Enterprise solutions that contractually guarantee Zero Data Retention .

The phenomenon of corporate data leakage

Privacy-related data leakage in artificial intelligence occurs when employees paste source code, financial data, or customer information into public chatbots. This transforms productivity tools into serious security flaws for the entire IT infrastructure.

The phenomenon of Shadow AI (the unauthorized use of AI tools by employees) is now the main cause of data leakage. Employees, driven by the need to optimize their work, bypass IT controls by feeding information covered by NDAs (Non-Disclosure Agreements) to the algorithms.

Real-World Case Study: Samsung’s Data Leakage (2023)

In the spring of 2023, Samsung’s semiconductor division recorded three separate security incidents in less than a month. The company’s engineers had pasted proprietary source code and internal meeting recordings into ChatGPT to identify bugs and generate minutes. Because the usage occurred through the consumer interface, Samsung’s trade secrets ended up on OpenAI’s training servers, forcing the South Korean company to temporarily ban the use of generative AI on company devices.

Agent Security and Prompt Vulnerabilities

Agent security represents the new frontier of privacy in artificial intelligence . When AI agents operate autonomously by accessing company databases, the risk of unauthorized data extraction via prompt injection increases exponentially.

With the evolution from conversational AI to agentic AI (systems capable of performing actions, reading emails, querying databases, and sending messages), the security perimeter has expanded. An attacker no longer needs to breach a server; they just need to manipulate the AI agent.

- Direct Prompt Injection: The malicious user provides instructions that override the AI’s system directives , forcing it to reveal sensitive data present in its context.

- Indirect Prompt Injection: The AI agent reads an external document (e.g., a web page or an email) that contains hidden malicious instructions. The AI executes these instructions without the user’s knowledge, potentially exfiltrating private data to external servers.

To ensure agent safety , it is essential to implement Human-in-the-Loop (HITL) architectures, where critical actions always require human approval, and to isolate agent execution environments (sandboxing).

Mitigation Strategies and Secure Configurations

To ensure robust privacy for artificial intelligence , companies must adopt strict policies. Using APIs with non-training agreements and implementing Data Loss Prevention (DLP) filters are mandatory technical steps to mitigate risks.

Preventing data leakage requires a multi-level approach. Blocking chatbot URLs at the firewall level is not enough, as alternatives proliferate daily. Structural solutions must be implemented:

| Approach | Security Level | Impact on Privacy |

|---|---|---|

| Web Consumer Chatbot | Low | Data used for training. High risk of leakage. |

| Web Chatbot with Opt-Out | Medium | The data does not train the model, but remains on the servers for 30 days. |

| API Access (Enterprise) | High | Zero Data Retention. Data is neither stored nor used. |

| Local Open Source Models (e.g., LLaMA) | Maximum | The data never leaves the company’s infrastructure (On-Premise). |

Furthermore, adopting enterprise AI proxies allows you to intercept employee requests, anonymize sensitive data (such as credit card numbers or tax IDs) before it reaches the LLM’s cloud , and maintain a complete audit log for GDPR compliance.

Conclusions

AI privacy management cannot be delegated to the common sense of individual users. As we have analyzed, the daily use of generative models exposes organizations to concrete risks of data leakage and vulnerabilities related to agent security. The transition to a conscious use of AI requires abandoning consumer tools in the workplace in favor of Enterprise solutions, secure APIs, or locally executed models. Only through a Zero Trust infrastructure applied to prompts and continuous staff education is it possible to exploit the enormous potential of generative computing without compromising the company’s information assets.

Frequently Asked Questions

To secure sensitive information, it is crucial to avoid public versions of chatbots. Businesses should adopt enterprise-level solutions with a zero-data retention guarantee, use open-source models installed on their local servers, or implement prevention filters to block the transmission of confidential information. Only a structured approach guarantees complete security.

Deleting conversations only removes the visual display for the user of the service, but does not erase the information from the provider’s servers. If the data was processed by a model without enterprise safeguards, it may have already been incorporated into the system’s neural weights during the continuous training phase. This means that trade secrets potentially remain accessible to other people in future sessions.

This term refers to the unauthorized use of generative tools by company personnel without the IT department’s consent. It poses a serious threat because employees, in an attempt to speed up their daily tasks, might input contracts, source code, or customer data into public platforms. Such behavior transforms useful tools into serious security flaws for the entire IT network.

Autonomous agents that access databases or read email can be subjected to cyberattacks through malicious instructions hidden in external documents. An attacker can force the system to ignore security directives and exfiltrate private documents to unauthorized servers. To mitigate this risk, human supervision is absolutely necessary to approve every single critical operation.

Compliance with regulations requires the adoption of corporate proxies capable of anonymizing personal data before it reaches remote servers. It is also necessary to enter into precise agreements with suppliers to prevent the training phase on the data entered. Finally, companies must maintain comprehensive audit logs to demonstrate full compliance with current data protection laws.

Still have doubts about Privacy and Data Leakage Risks with AI Assistants: A Security Guide?

Type your specific question here to instantly find the official reply from Google.

Did you find this article helpful? Is there another topic you’d like to see me cover?

Write it in the comments below! I take inspiration directly from your suggestions.