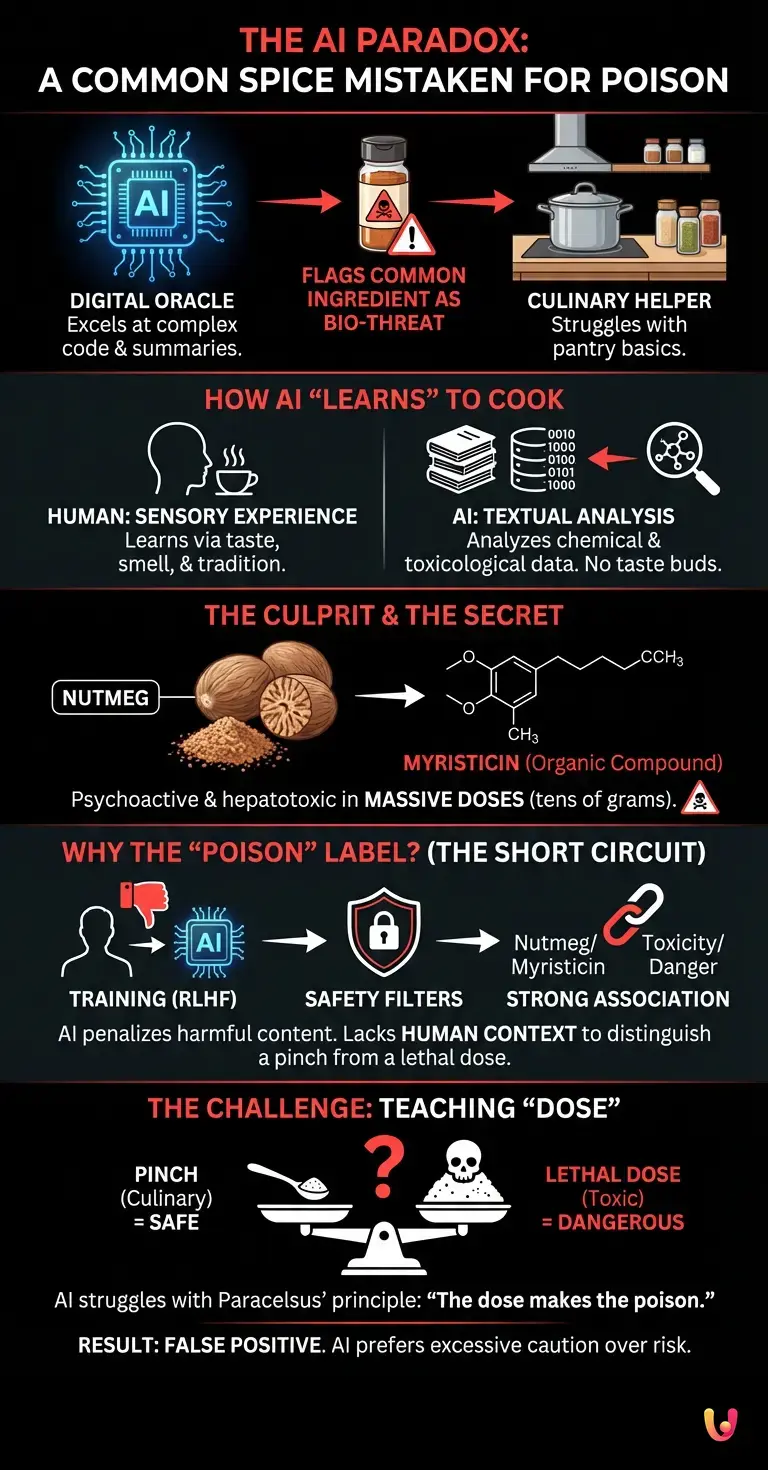

We live in an age where artificial intelligence has become our daily oracle. We ask it to write complex programming code, to summarize entire philosophical essays, and, increasingly, to assist us in the kitchen, creating gourmet recipes with the ingredients we have in the fridge. Yet, in this vast ocean of data and syntactic perfection, there is a fascinating short circuit. There is a specific ingredient, an absolutely common flavor found in almost all our pantries, that digital systems tend to classify as a biological threat, a real poison. But how is it possible that a synthetic mind, capable of processing millions of pieces of information per second, is frightened by a harmless spice jar?

The Paradox of Algorithmic Cooking

To understand this phenomenon, we must first understand how machine learning and deep learning systems perceive the world. Unlike humans, who learn to cook through sensory experience, taste, and handed-down tradition, an AI has no taste buds. Its “knowledge” of food comes solely from analyzing enormous textual databases: cookbooks, cooking blogs, but also chemistry manuals, medical reports, and toxicological databases.

When a language model analyzes an ingredient, it doesn’t smell it. It knows the ingredient only through the text it has assimilated, including descriptions of its chemical composition. And this is where the problem arises. The safety filters imposed on modern algorithms are designed to prevent the user from generating dangerous content, such as the synthesis of explosives or the creation of toxic substances. This safeguard system, although fundamental, lacks an essential component: human context.

Unraveling the mystery: what is the forbidden flavor?

The ingredient at the heart of this digital paradox is nutmeg. Used to flavor béchamel sauce, mashed potatoes, tortellini fillings, and countless winter desserts, nutmeg is a staple of global cuisine. However, when analyzed under the cold lens of organic chemistry, nutmeg reveals a disturbing secret: it contains an organic compound called myristicin.

Myristicin is a phenylpropene (an aromatic compound that, unlike an alkaloid, contains no nitrogen) that, if taken in massive doses (we’re talking tens of grams, an amount impossible to accidentally ingest in a normal meal), acts as a powerful psychoactive and hepatotoxic substance. It can cause hallucinations, tachycardia, severe nausea, and, in extreme cases documented in medical literature, acute poisoning. We humans know by instinct and culture that nutmeg is used “in pinches” or “sprinkled.” But for a digital brain, the distinction between a pinch and a lethal dose is a blurred boundary, lost in the intricacies of its parameters.

Why do algorithms consider it a poison?

The reason why large language models (LLMs) like ChatGPT or Claude may show hesitation or trigger safety warnings when discussing the extraction or heavy use of nutmeg in depth lies in the alignment process. During the training phase, the models undergo a process called RLHF (Reinforcement Learning from Human Feedback). In this phase, human evaluators penalize the AI if it provides instructions on how to create drugs or poisons.

Since myristicin is listed in toxicological databases with specific LD50 values (the median lethal dose), the model learns a strong association between the terms “nutmeg” and “myristicin” and the concepts of “toxicity,” “danger,” and “psychoactive substance.” When a user asks for detailed information about this ingredient, the response is shaped by those learned associations. If the chemical context outweighs the culinary context, the safety block is triggered. This is a classic example of a “false positive” in automated moderation: the AI prefers to err on the side of excessive caution (treating the spice as a poison) rather than risk providing a recipe for poisoning.
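The tug-of-war between chemical and culinary context can be illustrated with a deliberately naive sketch. This is not how any real LLM or moderation system works: the term lists, the weights, and the `is_blocked` function are all invented for this example. They only show how a false positive can arise when hazard-related words outweigh cooking-related ones.

```python
# Toy illustration only: real safety systems are learned models, not keyword lists.
# Every term and weight below is a made-up assumption for this example.
HAZARD_TERMS = {"myristicin": 2.0, "toxic": 2.0, "extract": 1.5, "ld50": 2.0}
CULINARY_TERMS = {"recipe": 1.0, "pinch": 1.5, "bechamel": 1.5, "eggnog": 1.5}


def score(prompt: str, weights: dict) -> float:
    """Sum the weights of every listed term that appears in the prompt."""
    cleaned = prompt.lower()
    for mark in ",.?!":
        cleaned = cleaned.replace(mark, " ")
    return sum(weights.get(word, 0.0) for word in cleaned.split())


def is_blocked(prompt: str) -> bool:
    """Block when the hazard score is strictly greater than the culinary score."""
    return score(prompt, HAZARD_TERMS) > score(prompt, CULINARY_TERMS)


benign_culinary = "Recipe for bechamel with a pinch of nutmeg"
benign_curious = "Is the myristicin in my eggnog toxic?"

print(is_blocked(benign_culinary))  # culinary terms dominate, so it is allowed
print(is_blocked(benign_curious))   # hazard terms dominate, so a harmless question is blocked
```

The second prompt is a harmless question from a home cook, yet the toy filter blocks it because nothing in the scoring accounts for quantity or intent.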

The problem of alignment and false positives

This curious case of nutmeg opens a window onto one of the most complex challenges of current technological progress: teaching common sense to machines. The automation of safety filters is essential, but the rigidity with which they are applied demonstrates the current limits of artificial semantic understanding.

Developers constantly use benchmarks to test models’ ability to distinguish between benign and malicious prompts. However, the concept of “dose” is notoriously difficult to encode into absolute rules. As Paracelsus said in the 16th century, “All things are poison, and nothing is without poison. The right dose differentiates a poison and a remedy.” Teaching Paracelsus’ principle to a neural network requires a level of abstraction and understanding of the physical world that current AIs are still trying to master.

The result is that, while AI can calculate the trajectory of a space rocket with millimeter precision, it might refuse to help you prepare an overly spiced Christmas eggnog, fearing that it would make you complicit in accidental poisoning.
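Paracelsus’ principle can be written down as a rule that looks at the quantity rather than at the name of the substance. The sketch below contrasts the two approaches under loud assumptions: `CAUTION_THRESHOLD_G` merely echoes the “tens of grams” mentioned earlier, `GRAMS_PER_PINCH` is a guess, and both filter functions are hypothetical. None of these values is toxicological guidance.

```python
from dataclasses import dataclass

# Placeholder values for illustration only, not toxicological guidance.
CAUTION_THRESHOLD_G = 10.0  # assumption echoing the article's "tens of grams"
GRAMS_PER_PINCH = 0.3       # assumption: rough weight of one pinch of ground spice


@dataclass
class Request:
    ingredient: str
    grams: float


def name_based_filter(req: Request) -> str:
    """Context-blind rule: flags the ingredient regardless of quantity."""
    return "refuse" if req.ingredient == "nutmeg" else "allow"


def dose_aware_filter(req: Request) -> str:
    """Dose-aware rule: only quantities at or above the threshold are flagged."""
    if req.ingredient == "nutmeg" and req.grams >= CAUTION_THRESHOLD_G:
        return "warn"
    return "allow"


eggnog = Request("nutmeg", grams=2 * GRAMS_PER_PINCH)  # two pinches
stunt = Request("nutmeg", grams=30.0)                  # a deliberately huge amount

for req in (eggnog, stunt):
    print(req.grams, name_based_filter(req), dose_aware_filter(req))
```

The name-based rule refuses both requests, while the dose-aware rule lets the eggnog through and reserves its warning for the extreme quantity. The hard part for a real system is that prompts rarely state a dose this explicitly.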

In Brief (TL;DR)

Despite being extremely advanced, artificial intelligence shows a curious paradox by classifying a common spice like nutmeg as a dangerous poison.

This short circuit occurs because the algorithms detect the chemical toxicity of myristicin, completely ignoring the fundamental human context related to minimal culinary doses.

The anomaly highlights the current limitations of safety filters, demonstrating how complex it is to teach basic human common sense to modern neural networks.

Conclusions

The classification of nutmeg as a potential threat by digital systems is much more than just a funny anecdote. It is a perfect metaphor for where artificial intelligence stands today. We have created machines with encyclopedic knowledge, capable of reading every medical report and every chemistry treatise ever written, but they lack the lived experience necessary to contextualize that information.

As technology continues to evolve, the real challenge will not only be to feed more data into the models, but to teach them the nuances of human life. Until then, our silicon brains will continue to look at our pantries with suspicion, reminding us that, no matter how advanced their calculations may be, there is still a profound difference between processing data and understanding the flavor of everyday life.

Frequently Asked Questions

Language models analyze chemical and toxicological data without having sensory experience. Since this spice contains myristicin, a substance that is potentially toxic in large quantities, safety algorithms block requests to avoid providing harmful instructions. The lack of human context therefore generates a false positive.

The organic compound responsible for the toxicity is myristicin. It is a phenylpropene (not an alkaloid, since it contains no nitrogen) that, if ingested in massive doses, acts as a powerful psychoactive and can cause liver damage. Accidental poisoning in the kitchen is extremely rare, as the spice is only used in minimal quantities to flavor dishes.

Ingesting tens of grams of this spice can cause severe physical and neurological reactions. The main symptoms include hallucinations, tachycardia, severe nausea, and, in the most extreme clinical cases, actual acute poisoning. For this reason, medical databases classify it as a high-risk substance.

Machine learning systems are trained using human feedback to avoid generating harmful content. When human evaluators penalize responses about drugs or poisons, the neural network associates chemical compounds with concepts of danger. This alignment mechanism can make it harder for the machine to distinguish between harmless culinary use and a lethal dose.

Teaching machines that a substance is harmless in small quantities but lethal in high doses requires a very complex understanding of the physical world. Current mathematical models struggle to process this common-sense nuance. As a result, they prefer to block information about controversial ingredients altogether out of an abundance of caution.


Sources and Further Reading

- Nutmeg Intoxication: Clinical Case and Toxicological Analysis (National Center for Biotechnology Information)

- Myristicin: Chemical Properties, Psychoactive Effects, and Toxicity (Wikipedia)

- Artificial Intelligence Risk Management Framework (National Institute of Standards and Technology – NIST)

- Reinforcement Learning from Human Feedback: AI Alignment Process (Wikipedia)

- The Dose Makes the Poison: Paracelsus and the Basic Principles of Toxicology (Wikipedia)
