For decades, the ultimate goal of computer science was relentless accumulation. We designed Artificial Intelligence to be an insatiable sponge, absorbing every text, image, and line of code it could find across the vast expanse of the internet. The prevailing philosophy was simple: more data equals a smarter system. But a quiet, profound revolution is currently overturning this foundational belief. At the heart of this shift is a concept known as Machine Unlearning, a radical new framework that forces our most advanced architectures to do something entirely counterintuitive. We are no longer just teaching them to learn; we are actively teaching them to intentionally forget.

This practice, which this article calls the “Amnesia Protocol,” is rapidly becoming one of the most critical areas of study in modern technology. But why would developers spend billions of dollars and immense computational power training a system, only to deliberately erase parts of its memory? The answer lies in the hidden complexities of how digital brains actually function, and the profound, sometimes dangerous risks associated with an entity that remembers absolutely everything.

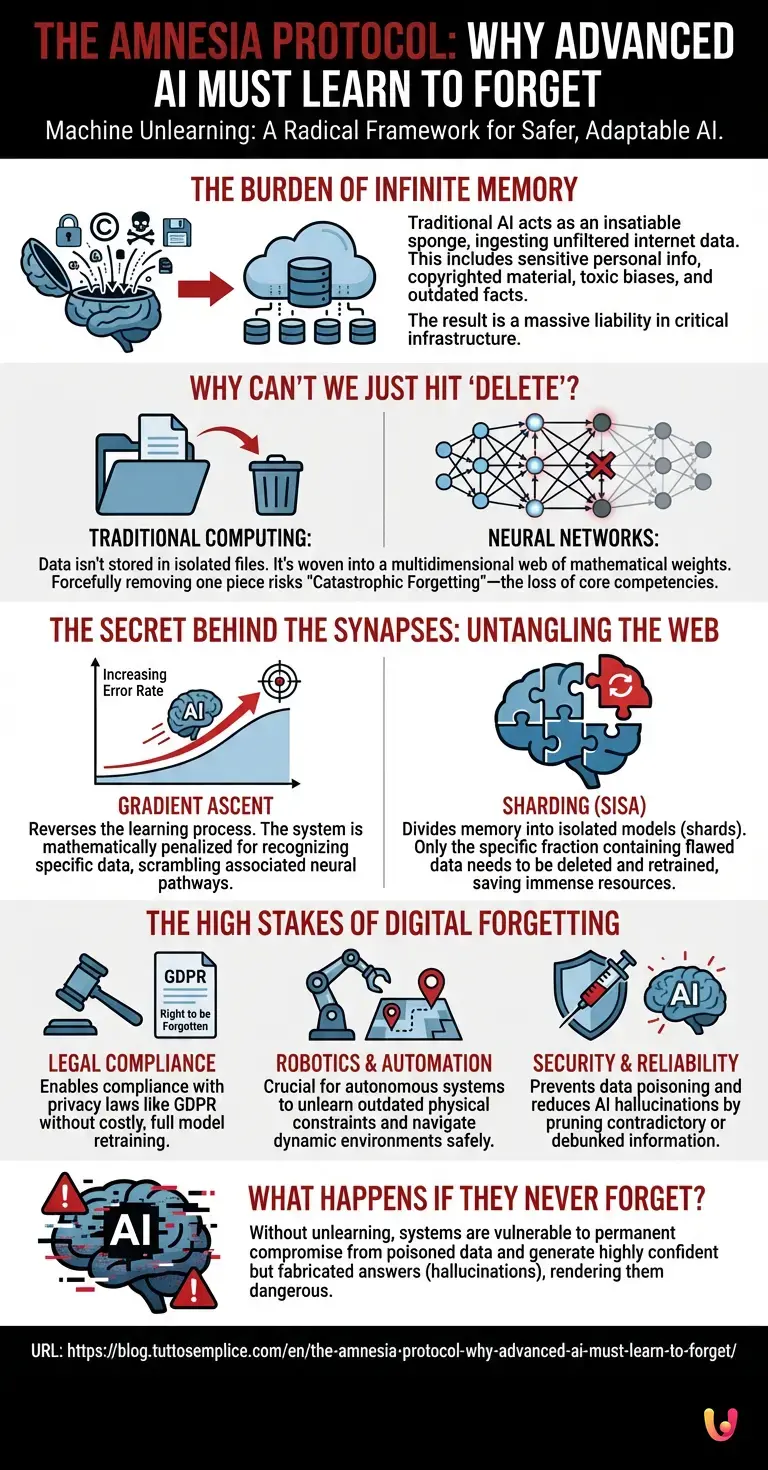

The Burden of Infinite Memory

To understand the necessity of intentional forgetting, we must first examine how modern systems acquire knowledge. In traditional machine learning, models are fed colossal datasets. The internet, however, is a chaotic and unfiltered repository. It contains highly sensitive personal information, copyrighted materials, outdated scientific facts, and toxic human biases.

When modern large language models (LLMs) ingest this data, they do not filter it with human discernment or moral judgment. They memorize the profound alongside the profane. As these systems become deeply integrated into critical infrastructure, from healthcare diagnostics to financial automation, the presence of flawed, restricted, or dangerous data becomes a massive liability. If a model has inadvertently ingested a user’s private medical records, proprietary corporate code, or instructions for creating dangerous chemical compounds, the traditional approach offers no surgical way to remove that specific knowledge.

Why Can’t We Just Hit ‘Delete’?

For the general public, the concept of deleting data seems trivial. On a standard personal computer, you locate a file, drag it to the digital trash, and empty the bin. The data is gone. But neural networks do not operate like traditional hard drives or relational databases.

When a neural network learns, it does not store a document in a neat, isolated folder. Instead, it breaks the information down and weaves it into a complex, multidimensional web of mathematical weights and biases. A single piece of information—say, a specific person’s home address or a copyrighted poem—might be distributed across millions of microscopic, interconnected parameters.
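To make the idea of distributed storage concrete, here is a minimal sketch in plain Python. It assumes a toy linear model with three weights, and the data points and the helper name `train_linear` are invented purely for illustration. The model is trained twice, once without and once with a single extra record, and the two sets of weights are compared.

```python
def train_linear(data, steps=500, lr=0.05):
    """Fit y ~ w.x with plain gradient descent on mean squared error."""
    n_features = len(data[0][0])
    w = [0.0] * n_features
    for _ in range(steps):
        grad = [0.0] * n_features
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for i, xi in enumerate(x):
                grad[i] += 2 * err * xi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w

# Hypothetical toy data: three ordinary records plus one we may later regret.
base = [([1.0, 0.0, 1.0], 1.0), ([0.0, 1.0, 1.0], 2.0), ([1.0, 1.0, 0.0], 3.0)]
record = ([1.0, 1.0, 1.0], 10.0)

w_without = train_linear(base)
w_with = train_linear(base + [record])

# How far did each weight move because of that single record?
shift = [round(a - b, 3) for a, b in zip(w_with, w_without)]
print(shift)  # every entry is clearly nonzero
```

No single weight "contains" the extra record. Its influence is smeared across all of them, which is why there is nothing to drag to the trash.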

Asking an AI to forget a specific fact is akin to pouring a cup of coffee into the ocean and then asking someone to extract only the coffee. You cannot simply delete a single “node” or “file,” because that node is shared by thousands of other, entirely unrelated concepts. If developers aggressively cut out these connections, they risk triggering a phenomenon known as catastrophic forgetting. This is a disastrous scenario where the system suddenly loses its core competencies, forgetting how to speak English, format code, or solve basic logic puzzles, all because engineers tried to forcefully remove a single copyrighted book.

The Secret Behind the Synapses: Untangling the Web

What is the secret behind safely removing information without destroying the entire mind of the machine? This is where the Amnesia Protocol truly begins. Engineers had to invent entirely new mathematical frameworks to untangle specific threads without unraveling the whole sweater.

One of the primary techniques involves a fascinating process called gradient ascent. Normal training relies on gradient descent: the system adjusts its internal weights to minimize a loss, a numerical measure of its error, and so gets closer to the correct answer. It is essentially walking down a mathematical hill to find the lowest point of error. In gradient ascent, engineers run that update in reverse. They feed the system the specific data it needs to forget and step the weights in the direction that raises the loss on that data, often while continuing to train normally on the data the model should keep. The system is forced to walk up the hill, intentionally increasing its error for that specific piece of data until it can no longer reliably recognize or reproduce it. Because the hill has no natural summit, the climb has to be stopped early, or it begins to damage unrelated knowledge.
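The following is a rough sketch of that idea, not a production recipe. It assumes a tiny logistic-regression model in plain Python. The `retain` and `forget` records, the learning rate, and the step counts are all hypothetical choices made for illustration. The same update function either descends or ascends depending on a sign.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss(w, x, y):
    """Cross-entropy loss of a logistic model on one example."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)))
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def grad(w, x, y):
    """Gradient of that loss with respect to the weights."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)))
    return [(p - y) * xi for xi in x]

def step(w, batch, lr, sign):
    """sign=-1 is gradient descent (learn); sign=+1 is gradient ascent (unlearn)."""
    for x, y in batch:
        g = grad(w, x, y)
        w = [wi + sign * lr * gi for wi, gi in zip(w, g)]
    return w

# Hypothetical data: two records to keep, one record to forget.
retain = [([1.0, 0.0, 1.0], 1), ([0.0, 1.0, 1.0], 0)]
forget = [([1.0, 1.0, 1.0], 1)]

# Ordinary training on everything.
w = [0.0, 0.0, 0.0]
for _ in range(300):
    w = step(w, retain + forget, lr=0.1, sign=-1)
before = loss(w, *forget[0])

# Unlearning: climb the loss on the forget set, keep descending on the rest.
for _ in range(20):
    w = step(w, forget, lr=0.1, sign=+1)
    w = step(w, retain, lr=0.1, sign=-1)
after = loss(w, *forget[0])

print(f"loss on forgotten record: {before:.3f} -> {after:.3f}")
```

The number of ascent steps is capped at 20 here on purpose. Left to run indefinitely, the ascent would keep pushing the weights and eventually degrade the retained examples as well.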

Another approach is known as “sharding,” or the SISA (Sharded, Isolated, Sliced, and Aggregated) method. Instead of training one monolithic, massive brain on everything at once, developers split the training data into disjoint shards and train a separate, smaller model on each one in isolation. The smaller models then vote or are otherwise aggregated to generate an answer. If one shard is found to contain toxic or copyrighted data, engineers do not need to touch the entire system. They can simply delete the offending records and retrain the one model that saw them, saving immense amounts of time, money, and computational power. The catch is that this structure has to be chosen before training begins; it cannot be bolted onto a model that was already trained as a single block.
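A simplified sketch of the sharding idea follows. It assumes a deliberately trivial "model" (the average feature value per label) so the mechanics stay visible, and it leaves out the slicing step of SISA entirely. The function names and the data are hypothetical.

```python
from collections import Counter

def train_shard(records):
    """Toy 'model': the mean feature value seen for each label."""
    sums, counts = {}, Counter()
    for value, label in records:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] += 1
    return {label: sums[label] / counts[label] for label in sums}

def predict_shard(model, value):
    """Pick the label whose mean is closest to the input."""
    return min(model, key=lambda label: abs(model[label] - value))

def predict(models, value):
    """Aggregate the shard models by majority vote."""
    votes = Counter(predict_shard(m, value) for m in models)
    return votes.most_common(1)[0][0]

def unlearn(shards, models, record):
    """Drop one record and retrain only the shard that held it."""
    for i, shard in enumerate(shards):
        if record in shard:
            shards[i] = [r for r in shard if r != record]
            models[i] = train_shard(shards[i])
            return i
    return None

data = [(1.0, "low"), (2.0, "low"), (9.0, "high"), (8.0, "high"),
        (1.5, "low"), (9.5, "high"), (2.5, "low"), (7.5, "high"),
        (0.5, "low"), (8.5, "high"), (3.0, "low"), (7.0, "high")]

shards = [data[i::3] for i in range(3)]      # three disjoint shards
models = [train_shard(s) for s in shards]    # one model per shard

retrained = unlearn(shards, models, (8.0, "high"))
print("retrained shard:", retrained)
print("prediction for 8.0:", predict(models, 8.0))
```

Only one of the three models is rebuilt, and the other two are untouched. The trade-off is that each model sees only a fraction of the data, so the design is a bet that the vote across shards stays accurate enough.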

The High Stakes of Digital Forgetting

The urgency behind teaching systems to forget is driven by a massive collision of legal, ethical, and practical forces. In Europe, the General Data Protection Regulation (GDPR) establishes a right to erasure, popularly known as the “Right to be Forgotten,” which allows individuals to legally demand the removal of their personal data from corporate servers.

Before the advent of machine unlearning, complying with this law was a logistical nightmare for AI developers. If a single user demanded their data be removed, the only legally foolproof method was to delete the entire multi-billion-parameter model and retrain it from scratch. This is a process that can cost tens of millions of dollars and require months of continuous computing time. The Amnesia Protocol aims to transform an impossible legal hurdle into a manageable technical process.

Furthermore, the realm of robotics and physical automation relies heavily on accurate, up-to-date models of the real world. Imagine an autonomous industrial robot operating in a dynamic factory environment. If the robot memorizes a spatial layout that has since undergone major construction, relying on that old memory could lead to disastrous physical collisions. The system must rapidly unlearn the old map and its associated physical habits to safely navigate the new reality. In robotics, the ability to forget outdated physical constraints is just as important as learning new ones.

What Happens If They Never Forget?

The consequences of an AI that cannot forget are profound and far-reaching. Without the ability to selectively erase information, systems become highly vulnerable to “data poisoning.” Malicious actors can intentionally feed false information, hidden triggers, or corrupted code into the vast pipelines of training data. If the system cannot unlearn this poisoned data once it is discovered, its outputs become permanently compromised, rendering the entire AI useless or actively dangerous.

Additionally, an inability to forget can exacerbate the infamous problem of AI hallucinations. When models are forced to retain contradictory, debunked, or outdated information alongside new facts, they may attempt to reconcile the irreconcilable. This can result in the system generating highly confident but entirely fabricated answers. By implementing targeted amnesia, developers can prune the dead, rotting branches of a model’s knowledge tree, allowing the healthy, accurate information to thrive and produce much more reliable outputs.

In Brief (TL;DR)

The future of artificial intelligence requires shifting from relentless data accumulation to the intentional forgetting of sensitive or toxic information.

Because neural networks weave data into complex mathematical webs, simply deleting specific files is impossible without risking catastrophic forgetting of core skills.

Engineers are developing radical frameworks like gradient ascent and memory sharding to surgically erase dangerous knowledge while preserving the system’s overall intelligence.

Conclusion

The transition from infinite data retention to selective, curated memory marks a profound maturation in the field of artificial intelligence. For decades, we equated the sheer volume of memorized data with intelligence. Today, we are realizing that true cognitive sophistication is not just about how much you can remember, but also about what you have the wisdom to forget.

The Amnesia Protocol represents a fundamental paradigm shift in how we build, manage, and interact with digital minds. By mastering the delicate, complex art of machine unlearning, engineers are ensuring that the neural networks of tomorrow are not just incredibly powerful, but also adaptable, legally compliant, and inherently safer. In the end, teaching our greatest technological creations how to let go of the past might be the most important lesson we ever give them.

Frequently Asked Questions

What is machine unlearning?

Machine unlearning is a specialized process that allows artificial intelligence systems to intentionally forget specific pieces of data. Instead of retaining every piece of ingested information, developers use advanced mathematical techniques to remove sensitive, outdated, or harmful data without destroying the overall knowledge base of the system. This ensures the technology remains safe and adaptable.

Why is it so hard to delete information from a neural network?

Deleting information from a neural network is highly complex because data is not stored in isolated files. Information is distributed across millions of interconnected mathematical weights and parameters. Attempting to forcefully remove a single concept can cause catastrophic forgetting, a scenario where the system suddenly loses its core abilities and basic functions.

How do engineers remove specific knowledge from a model?

Engineers utilize advanced methods like gradient ascent and sharding to remove specific knowledge. Gradient ascent reverses the learning process by mathematically penalizing the system when it recognizes the targeted data, effectively scrambling those specific neural pathways. Sharding splits the training data into isolated shards, each used to train its own smaller model, allowing developers to retrain only the affected fraction instead of the entire system.

How does machine unlearning help with privacy regulations?

Privacy regulations like the General Data Protection Regulation grant individuals the right to have their personal data removed from corporate servers. Machine unlearning provides a technical solution to erase this specific user data from massive language models without the need to completely rebuild the system from scratch. This saves organizations significant time and financial resources while ensuring legal compliance.

What happens if an AI system cannot forget?

Systems lacking the ability to selectively erase information face severe security and performance risks. They become highly susceptible to data poisoning, where malicious actors inject false information that permanently compromises the output. Furthermore, retaining contradictory or debunked facts increases the likelihood of hallucinations, causing the system to generate highly confident but entirely fabricated responses.
