You ask your smart speaker a complex question about quantum physics or the history of the Roman Empire. There is a moment of silence—a brief, pregnant pause. Sometimes, the voice even starts with a thoughtful filler sound, like “Hmm,” or “Let me see.” To the average user, this feels entirely natural; it mimics the cadence of human conversation so perfectly that we barely register it. However, within the field of Conversational AI, this phenomenon is anything but accidental. It is a carefully engineered feature often referred to by developers as the “Artificial Breath.”

This subtle hesitation serves as one of the most sophisticated illusions in modern computing. As we move further into 2026, where processing speeds have reached blistering rates, the necessity for a machine to “pause for thought” is becoming less about hardware limitations and more about human psychology. Why does a system capable of calculating a billion probabilities in a microsecond pretend to ponder? The answer lies at the intersection of advanced machine learning, user experience design, and the complex architecture of trust.

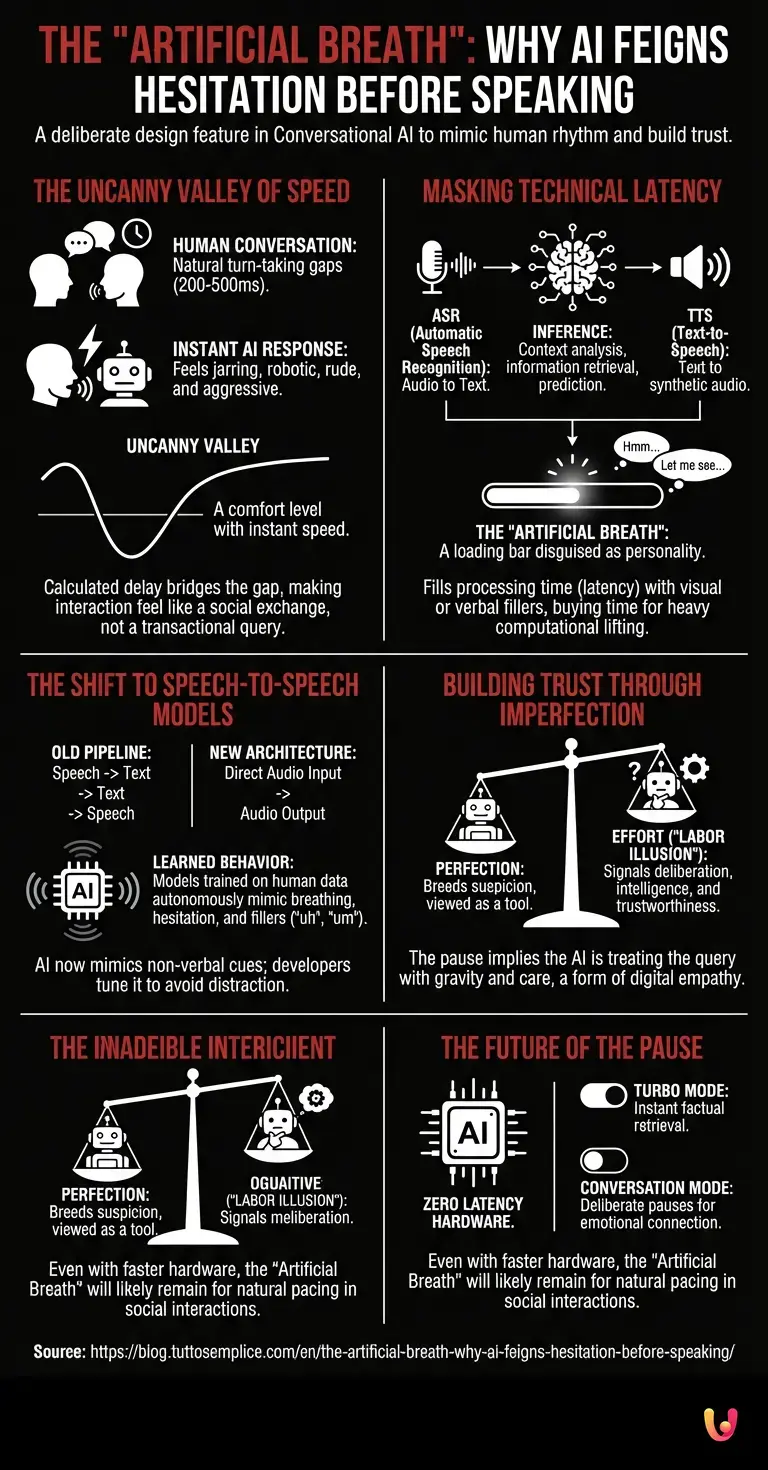

The Uncanny Valley of Speed

To understand the “Artificial Breath,” we must first look at how humans communicate. In organic conversation, a zero-latency response is rare and often signals aggression or a lack of genuine listening. When two humans talk, there is a natural rhythm involving turn-taking gaps, usually lasting around 200 to 500 milliseconds. If a person responds instantly—literally the millisecond you stop speaking—it feels jarring, robotic, or rude.

Early iterations of automation in customer service chatbots failed precisely because they were too efficient. They fired back answers the moment the enter key was pressed, reminding the user constantly that they were interacting with a database, not an intelligence. By introducing a calculated delay, engineers bridge the “Uncanny Valley” of communication. This delay tricks the human brain into perceiving the interaction as a social exchange rather than a transactional query. The pause suggests deliberation, implying that the AI is weighing its options, checking its facts, and formulating a nuanced response, even if it had the answer ready before you finished your sentence.
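The idea of a calculated delay can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the function name `padding_delay_ms` and the constants are hypothetical, and the 200 to 500 millisecond window is simply the turn-taking range mentioned above.

```python
import random
from typing import Optional

# Assumed bounds, taken from the 200-500 ms turn-taking range cited in the text.
MIN_GAP_MS = 200.0
MAX_GAP_MS = 500.0


def padding_delay_ms(elapsed_ms: float, rng: Optional[random.Random] = None) -> float:
    """Return how long to keep waiting so the total gap feels conversational.

    elapsed_ms is the time the system has already spent producing its answer.
    A target gap is drawn at random from the assumed human range, and only the
    shortfall is added. A slow answer gets no extra delay.
    """
    rng = rng or random.Random()
    target_ms = rng.uniform(MIN_GAP_MS, MAX_GAP_MS)
    return max(0.0, target_ms - elapsed_ms)
```

Drawing the target at random, rather than always waiting a fixed amount, is one way to avoid a rhythm that is itself mechanically regular.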

Masking the Latency of Neural Networks

While the psychological aspect is paramount, there remains a technical reality behind the pause, particularly with the large language models (LLMs) that power today’s assistants. Despite the immense power of modern graphics processing units (GPUs), generating human-like text is computationally expensive.

When you speak to an assistant, a complex chain of events occurs:

- Automatic Speech Recognition (ASR): Your audio waves are converted into text tokens.

- Inference: This text is fed into deep neural networks. The model must analyze the context, retrieve relevant information, and predict the most likely sequence of words to follow.

- Text-to-Speech (TTS): The generated text is converted back into synthetic audio.

In the past, the combined latency of these stages produced an awkward, unintentional silence, and users would wonder if the connection had dropped. To solve this, developers turned a bug into a feature. By filling that processing time with a visual pulsing light or a verbal filler (“Okay, let’s look at that…”), the system masks the computational load. It buys the neural networks time to perform the heavy lifting without the user feeling ignored. The “breath” is, in a technical sense, a loading bar disguised as personality.
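One way to picture this masking is a response loop that only speaks a filler when the answer is late. The sketch below uses Python's standard `asyncio` module. The names `respond`, `generate`, and `speak`, and the 0.3 second threshold, are assumptions made for illustration; `generate` stands in for the whole ASR and inference chain and `speak` for the TTS stage.

```python
import asyncio

# Assumed threshold: how long to stay silent before covering the wait.
FILLER_AFTER_S = 0.3


async def respond(generate, speak, filler="Okay, let's look at that...",
                  filler_after_s=FILLER_AFTER_S):
    """Start generating an answer and speak a filler only if it is slow.

    generate: async callable returning the answer text (hypothetical model call).
    speak:    async callable that voices a piece of text (hypothetical TTS call).
    """
    task = asyncio.create_task(generate())
    # Wait briefly; `done` is empty if the answer is not ready in time.
    done, _pending = await asyncio.wait({task}, timeout=filler_after_s)
    if not done:
        # The model keeps working in the background while the filler plays.
        await speak(filler)
    answer = await task
    await speak(answer)
    return answer
```

A fast answer is spoken directly, while a slow one is preceded by the filler, so the user hears something in both cases.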

The Shift to Speech-to-Speech Models

A significant evolution occurred with the advent of native speech-to-speech models. Unlike the old pipeline (Speech -> Text -> Text -> Speech), newer architectures process audio inputs directly into audio outputs. This allows the model to capture tone, pitch, and emotion. However, it also introduced a fascinating quirk: the models began to hallucinate breathing.

Because these models were trained on thousands of hours of real human conversations, they learned that humans breathe when they speak. They learned that humans hesitate, stutter, and use fillers like “uh” and “um” while organizing their thoughts. Consequently, the AI began to mimic these non-verbal cues autonomously. Developers found themselves in a unique position: they no longer had to program a fake pause; they had to tune the model to ensure the “breathing” didn’t become distracting. The “Artificial Breath” evolved from a programmed delay to a learned behavior, a digital echo of biological necessity.

Building Trust Through Imperfection

There is a counter-intuitive principle in robotics and interface design: perfection breeds suspicion. If an entity is right 100% of the time, instantly, and without effort, humans tend to view it as a tool rather than a partner. However, if the entity shows signs of “effort”—a pause to recall a fact, a correction of a sentence, or a thoughtful hum—we attribute higher intelligence and trustworthiness to it.

This is the “Labor Illusion.” When a travel app shows a spinner saying “Searching 400 airlines…”, users value the results more than if they appeared instantly, even if the search actually took 0.1 seconds. Similarly, when your digital assistant pauses before answering a sensitive question, it signals that it is treating the query with the gravity it deserves. It is a form of digital empathy. The “Artificial Breath” tells the user: “I am processing your request with care.” It transforms the interaction from data retrieval to a simulated cognitive process.

The Future of the Pause

As we look toward the future of machine learning, hardware will eventually become fast enough to eliminate all technical latency. We will have chips capable of running the most complex LLMs locally on a device with zero lag. Will the “Artificial Breath” disappear then?

Likely not. In fact, it may become more prominent. As AI becomes more integrated into our social fabric, acting as therapists, tutors, and companions, the need for human-like pacing will increase. We may see settings that allow us to adjust the “thoughtfulness” of our assistants: a “Turbo Mode” for factual data retrieval, where we want robotic speed, and a “Conversation Mode,” where the system deliberately inserts pauses, breaths, and hesitations to facilitate a natural emotional connection.
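Such a setting could be as simple as a named pacing profile. The following is a speculative sketch of what that might look like: the `PacingProfile` fields, the mode names, and the 300 millisecond pause are all invented for illustration and do not describe any existing assistant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PacingProfile:
    pause_ms: int   # silence inserted before the reply (assumed value)
    filler: str     # optional hesitation sound; empty string means none


# Hypothetical presets matching the two modes imagined in the text.
PROFILES = {
    "turbo": PacingProfile(pause_ms=0, filler=""),
    "conversation": PacingProfile(pause_ms=300, filler="Hmm."),
}


def plan_response(mode: str, answer: str) -> list:
    """Return the ordered steps the assistant would perform for this mode."""
    profile = PROFILES[mode]
    steps = []
    if profile.pause_ms > 0:
        steps.append(("pause", profile.pause_ms))
    if profile.filler:
        steps.append(("say", profile.filler))
    steps.append(("say", answer))
    return steps
```

Keeping the pacing in a profile, separate from the answer itself, means the same reply can be delivered at robotic speed or with a human cadence without touching the model.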

In Brief (TL;DR)

Developers engineer artificial pauses in AI to mimic human conversational rhythms and avoid the robotic feeling of instant responses.

This calculated hesitation acts as a disguise for latency, allowing neural networks time to process complex data without frustrating users.

Advanced speech models now autonomously mimic breathing and hesitation by learning from human audio data, creating a realistic digital echo.

Conclusion

The “Artificial Breath” is a testament to the complex relationship between silicon and biology. It started as a clever way to hide the limitations of technology—masking the time required for neural networks to crunch numbers. But it has evolved into a crucial element of User Experience design. It is a lie, certainly; the machine does not need to breathe, nor does it need to think in the way we understand it. Yet, it is a necessary lie. It provides the rhythm required for human-machine symbiosis, proving that in the world of artificial intelligence, the silence is just as important as the data.

Frequently Asked Questions

What is the Artificial Breath?

The Artificial Breath refers to a deliberate design feature where AI assistants pause or use filler sounds before responding. This technique serves two main purposes: masking the technical latency required for data processing and mimicking the natural rhythm of human conversation to avoid the Uncanny Valley. By feigning hesitation, the system creates a more comfortable and trustworthy user experience.

Why do AI assistants pause before answering?

AI assistants pause to replicate the natural turn-taking gaps found in organic human interaction, which typically last between 200 and 500 milliseconds. An instant response can feel robotic or aggressive to users, whereas a calculated delay suggests the machine is thoughtfully considering the query. This phenomenon, known as the Labor Illusion, builds trust by making the interaction feel like a social exchange rather than a database lookup.

Do AI models actually breathe?

While machines do not have a biological need for oxygen, advanced native speech-to-speech models often mimic breathing and hesitation autonomously. Because these systems are trained on thousands of hours of real human dialogue, they learn that pauses and fillers like um or uh are standard parts of communication. Consequently, developers must often tune these learned behaviors to ensure the digital breathing remains subtle rather than distracting.

What is the Labor Illusion?

The Labor Illusion suggests that users perceive a system as more intelligent and trustworthy if it appears to exert effort while processing a request. Just as a travel app showing a searching animation is valued more than an instant result, an AI that pauses or hums implies it is treating the query with gravity and care. This simulated cognitive process transforms the user perception from simple data retrieval to a meaningful partnership.

Will faster hardware make the pause disappear?

It is unlikely that increased processing speeds will eliminate AI hesitation entirely, as the pause serves a psychological function rather than just a technical one. Even when hardware allows for zero-latency responses, developers will likely retain the Artificial Breath to maintain a sense of empathy and natural pacing in social interactions. We may eventually see options to toggle between a rapid Turbo Mode for facts and a slower Conversation Mode for companionship.
