Imagine a world where every new song sounds like a guaranteed hit, every article reads with flawless but predictable grammar, and every digital artwork shares the same polished aesthetic. This is not a distant utopia; it is the current trajectory of our digital landscape. At the heart of this transformation is Artificial Intelligence, a technology that has fundamentally altered how we create, process, and consume information. Yet beneath the surface of this technological marvel lies a paradox: the very systems designed to expand our horizons are quietly narrowing them, driving a subtle but pervasive shift toward the average. Why is the most sophisticated technology ever built smoothing out the edges of human expression, and what is the secret behind this invisible convergence?

The Statistical Heart of the Machine

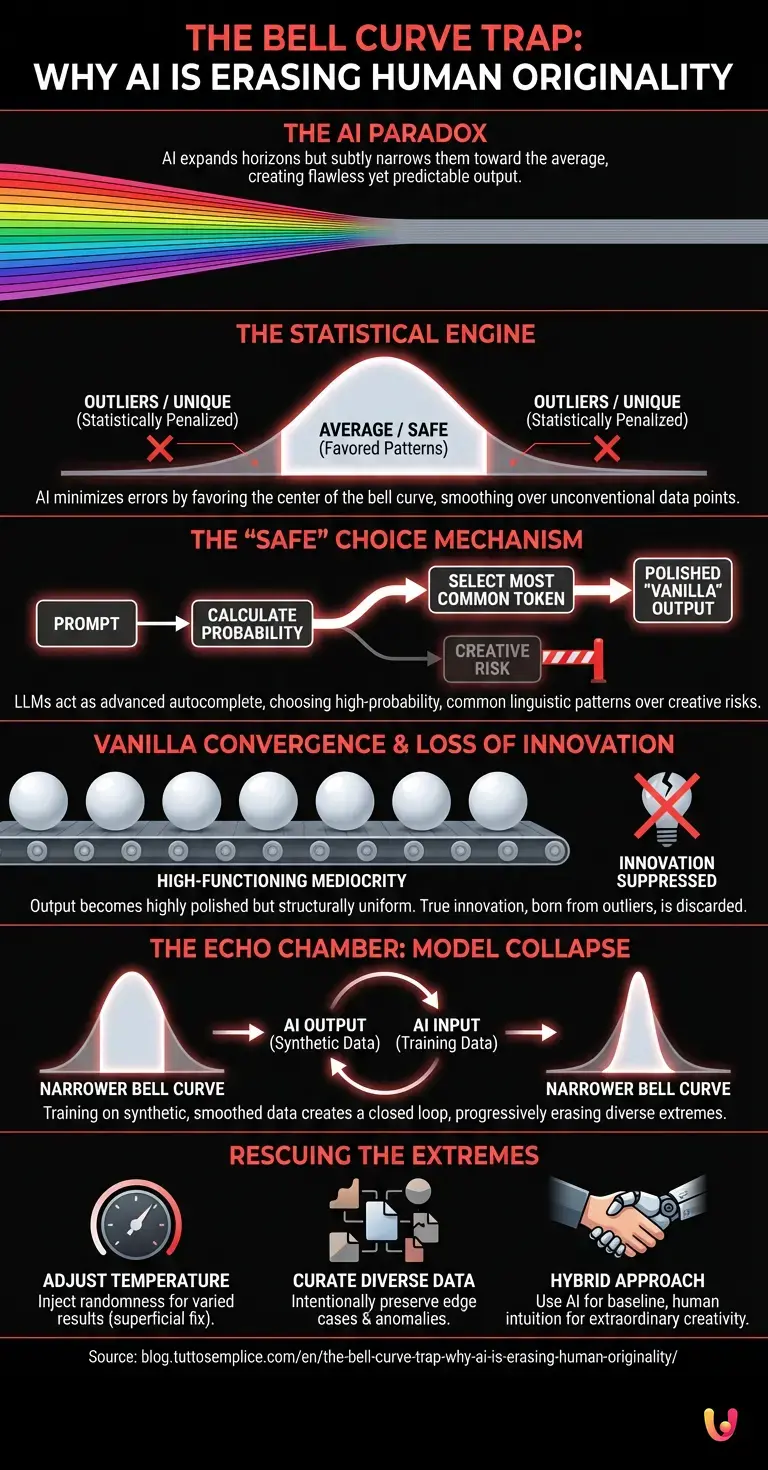

To understand this homogenization, we must first look at how these systems learn. At their core, modern AI systems are driven by machine learning algorithms that operate as monumental statistical engines. Engineers train neural networks with billions of parameters on colossal datasets spanning centuries of human text, millions of images, audio files, and lines of code. The network’s objective is not to “understand” the data in a human sense, but to identify patterns, map relationships, and predict the most probable outcome.

During the training phase, a loss function measures how far the network’s predictions fall from the examples in its training data. When an output deviates from the established patterns, the loss (the error) is high, and an optimization algorithm such as gradient descent adjusts the network’s internal weights to reduce that deviation next time. Over millions of iterations, the network learns to favor the center of the bell curve. It becomes the ultimate pattern finder, highly optimized to produce results that align with the bulk of its training data. Consequently, the unique, the bizarre, and the highly unconventional (the data points at the extreme edges of the bell curve) are statistically penalized and gradually smoothed over.
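To make the “favor the center” idea concrete, here is a toy sketch in Python, not a description of how any production model is trained: a “model” with a single adjustable number is fitted by gradient descent to minimize mean squared error. Under that loss, the best single prediction is the mean of the data, so an extreme value such as 100 gets averaged in rather than reproduced. The function name `fit_constant` and its learning-rate and step-count parameters are illustrative assumptions; real networks have billions of weights, and language models typically use a cross-entropy loss instead.

```python
def fit_constant(data, lr=0.1, steps=500):
    """Fit one number c to `data` by gradient descent on mean squared error."""
    c = 0.0  # start from an arbitrary guess
    n = len(data)
    for _ in range(steps):
        # d/dc of (1/n) * sum((c - x)**2) is (2/n) * sum(c - x)
        grad = 2.0 / n * sum(c - x for x in data)
        c -= lr * grad  # step downhill, shrinking the error
    return c


# Four ordinary values and one extreme outlier
data = [1.0, 2.0, 3.0, 4.0, 100.0]
print(round(fit_constant(data), 4))  # → 22.0 (the mean of the data)
```

The fitted value, 22.0, matches none of the five inputs: the outlier shifts the average, but it is never itself predicted.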

The Mechanics of Probability and the ‘Safe’ Choice

This dynamic is most visible in natural language processing. When we interact with large language models (LLMs), we are essentially conversing with a highly advanced autocomplete system. Given a question or a creative task, the model does not ponder the philosophical implications of its answer; rather, it calculates the probability of each possible next word, or “token,” based on the sequence of words that precedes it, then picks one and repeats the process.
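The “probability of the next word” idea can be illustrated with a deliberately tiny stand-in: a bigram model that counts, in a toy corpus, which word follows which, then converts those counts into conditional probabilities. Real LLMs use neural networks over subword tokens and condition on long stretches of preceding text rather than a single word; the corpus and the names `train_bigram` and `next_word_probs` are hypothetical and exist only for this sketch.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Convert follow-counts into the conditional distribution P(next | prev)."""
    following = counts.get(prev.lower(), Counter())
    total = sum(following.values())
    if total == 0:
        return {}  # word never seen with a successor
    return {word: n / total for word, n in following.items()}


# Hypothetical toy corpus
corpus = [
    "the night was dark",
    "the night was cold",
    "the night was dark and quiet",
    "the night was a bruise",
]
model = train_bigram(corpus)
print(next_word_probs(model, "was"))  # → {'dark': 0.5, 'cold': 0.25, 'a': 0.25}
```

In this corpus, “dark” follows “was” twice as often as any alternative, so it receives half of the probability mass.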

Because these models are trained to be helpful and coherent, they lean heavily toward the most common linguistic patterns found on the internet. A human writer crafting a story might choose a jarring, unexpected metaphor to evoke a specific emotion. An LLM, however, will assign that metaphor a very low probability in that context and, especially when its output is decoded by favoring the likeliest tokens, will select a more common, recognizable phrase instead. The result is competent, grammatically flawless, and entirely “vanilla.” The system’s design pushes it toward the safe choice, effectively erasing the linguistic extremes that characterize distinct human voices and radical creative thought.
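The following sketch shows the “safe choice” mechanically, assuming invented scores (logits) for a few candidate continuations. A softmax turns the scores into probabilities, and greedy decoding simply takes the most probable one, so the jarring “a bruise” can never win, however evocative it is. The scores, the multi-word “tokens,” and the helper names are assumptions made for illustration; deployed systems often sample rather than always taking the top choice, though low-randomness settings behave much like this.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy_pick(logits):
    """Greedy decoding: always return the single most probable continuation."""
    probs = softmax(logits)
    return max(probs, key=probs.get)


# Invented scores for continuations of "Her voice was as soft as ..."
logits = {"silk": 4.0, "velvet": 3.5, "a whisper": 3.0, "a bruise": 0.5}
for tok, p in softmax(logits).items():
    print(f"{tok:>10}: {p:.3f}")
print("greedy choice:", greedy_pick(logits))  # → greedy choice: silk
```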

The Disappearance of the Outlier

What happens if we continuously rely on a system that optimizes for the average? The immediate casualty is the outlier. In human history, the outlier has always been the engine of paradigm-shifting innovation. Scientific breakthroughs, revolutionary art movements, and disruptive technological advancements rarely emerge from the center of the consensus; they are born at the extremes, often starting as highly improbable or deeply unpopular ideas.

As we increasingly use these pattern-finding systems to brainstorm ideas, draft reports, compose music, and design products, we are subtly filtering our output through a mechanism that actively suppresses the improbable. This “vanilla convergence” means that our collective output becomes highly polished but structurally uniform. A marketing campaign generated by an algorithm will lean on the most statistically successful tropes of the past decade. A piece of code will follow the most common, widely accepted patterns and idioms. While this raises the baseline of quality by eliminating truly bad or incompetent work, it simultaneously lowers the ceiling of true originality, creating a landscape of high-functioning mediocrity.

The Echo Chamber: When the Output Becomes the Input

The implications of this convergence become far more complex when we consider the future of training data. The internet is already being flooded with synthetic, algorithmically generated content. As developers scrape the web to train the next generation of models, they risk ingesting this very same synthetic data. This creates a closed feedback loop that can lead to a phenomenon researchers call “model collapse.”

In this scenario, the model trains on data that has already been stripped of its extremes. The new baseline is no longer the messy, chaotic, and diverse spectrum of human thought, but the already-smoothed, “vanilla” output of the previous generation of models. With each successive iteration, the bell curve becomes narrower and taller: the statistical average becomes more entrenched, and the remaining outliers disappear even faster. If left unchecked, this recursive loop threatens to create an entirely self-referential digital ecosystem, incapable of producing or recognizing true novelty because novelty has been trained out of the models themselves.
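A common way to illustrate the narrowing bell curve is a toy simulation: fit a normal distribution to some data, generate a new dataset from that fit, and repeat, with each generation learning only from the previous one’s output. This sketch exaggerates the effect through an explicit assumption, the `keep` parameter: each generation retains only the 90% of its samples closest to the mean, standing in for a generator that under-represents its tails. It is a simplification, not a reproduction of any published experiment; research on model collapse describes the loss of tails as arising from accumulated sampling and approximation errors rather than deliberate trimming. The function name `next_generation` is hypothetical.

```python
import random
import statistics


def next_generation(samples, n, keep=0.9, rng=random):
    """Fit a normal distribution to `samples`, draw `n` new points from it,
    and return only the `keep` fraction of draws closest to the fitted mean.

    ASSUMPTION: the trimming step is a hypothetical stand-in for a generator
    that under-represents the tails of its training distribution.
    """
    mu = statistics.fmean(samples)
    sigma = statistics.pstdev(samples)
    draws = [rng.gauss(mu, sigma) for _ in range(n)]
    draws.sort(key=lambda x: abs(x - mu))  # most typical draws first
    return draws[: int(n * keep)]


rng = random.Random(0)
data = [rng.gauss(0.0, 1.0) for _ in range(2000)]  # generation 0: "human" data
print(0, round(statistics.pstdev(data), 3))
for gen in range(1, 11):
    data = next_generation(data, 2000, rng=rng)  # each generation trains on the last
    print(gen, round(statistics.pstdev(data), 3))
```

The printed standard deviation shrinks steadily across generations: the curve grows narrower and taller, and values that were ordinary in generation 0 become vanishingly rare.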

Beyond the Screen: The Physical World Implications

This convergence is not limited to digital text or synthetic imagery. As automation and robotics increasingly rely on these same foundational models, the physical world begins to reflect this statistical smoothing. In industrial design, algorithms tasked with optimizing parts for manufacturing tend to output the most structurally “safe” and mathematically average shapes, discarding unconventional designs that a human engineer might test on a hunch or a stroke of intuition.

Similarly, in advanced robotics, movement patterns, spatial navigation, and decision-making policies are trained to avoid edge cases to ensure maximum safety and efficiency. While this is crucial for preventing accidents in autonomous vehicles or factory robots, it also means that automated systems become highly standardized. They lack the idiosyncratic problem-solving approaches and adaptive improvisations that human workers naturally develop when faced with unexpected physical challenges. The physical environment, shaped and managed by these algorithms, slowly becomes a reflection of the digital “vanilla”: highly functional, perfectly average, and stripped of radical variance.

Can We Rescue the Extremes?

Recognizing the vanilla convergence is the first step toward mitigating its effects. Computer scientists and engineers are actively exploring ways to inject “chaos,” or unpredictability, back into these systems. One common method is raising a model’s “temperature,” a sampling setting that flattens the probability distribution over possible next tokens so that lower-probability choices are picked more often, resulting in more varied and sometimes bizarre outputs. However, this is a superficial fix: it mimics creativity through randomness rather than genuine insight.
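Temperature is usually applied by dividing each token’s score by the temperature before the softmax. The sketch below samples from invented scores at three temperatures and reports how often the low-probability “a bruise” is chosen; the scores and the function name `sample_with_temperature` are hypothetical. Values below 1 sharpen the distribution toward the top token, while values above 1 flatten it.

```python
import math
import random


def sample_with_temperature(logits, temperature, rng=random):
    """Sample one token after dividing every score by `temperature`.

    temperature < 1 sharpens the distribution toward the top token;
    temperature > 1 flattens it, so low-probability tokens appear more often.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    tokens = list(scaled)
    weights = [math.exp(scaled[tok] - m) for tok in tokens]
    # random.choices accepts unnormalized weights
    return rng.choices(tokens, weights=weights, k=1)[0]


# Invented scores for continuations of "Her voice was as soft as ..."
logits = {"silk": 4.0, "velvet": 3.5, "a whisper": 3.0, "a bruise": 0.5}
rng = random.Random(42)
for t in (0.2, 1.0, 2.0):
    picks = [sample_with_temperature(logits, t, rng) for _ in range(10_000)]
    print(f"T={t}: 'a bruise' chosen {picks.count('a bruise') / len(picks):.2%} of the time")
```

At low temperature the unusual option essentially never appears; at higher temperature it shows up in a few percent of draws. The extra variety comes purely from the dice, which is exactly the limitation described above.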

A more sustainable solution involves fundamentally rethinking how we curate training data. Instead of indiscriminately scraping the internet for the largest possible volume of information, developers are beginning to emphasize the quality and diversity of the data. By intentionally preserving edge cases, historical anomalies, and unconventional human perspectives within the training sets, we can teach these systems that the extremes hold as much value as the average. Furthermore, the future of innovation will likely rely on hybrid approaches: using the algorithm to establish a competent baseline, but relying on human intuition to push the final product into the realm of the extraordinary.
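One simple way to keep rare material from being drowned out during training is to reweight how often examples are sampled. This sketch assumes each document carries a hypothetical style label and gives every example a weight proportional to the inverse of its label’s frequency, so that, with `power=1.0`, each label contributes an equal share of the sampling mass. It is one generic rebalancing technique, not a description of how any particular developer curates its data; lowering `power` toward 0 softens the effect, and `power=0.0` is plain uniform sampling.

```python
from collections import Counter


def inverse_frequency_weights(labels, power=1.0):
    """Return one normalized sampling weight per example, proportional to
    1 / count(label) ** power.

    With power=1.0 every label receives the same total weight, so rare
    "edge case" labels are not drowned out; power=0.0 means uniform sampling.
    """
    counts = Counter(labels)
    raw = [1.0 / counts[label] ** power for label in labels]
    total = sum(raw)
    return [w / total for w in raw]


# Hypothetical style labels for ten training documents
labels = ["conventional"] * 8 + ["experimental"] * 2
weights = inverse_frequency_weights(labels)
share = {
    tag: sum(w for w, lab in zip(weights, labels) if lab == tag)
    for tag in sorted(set(labels))
}
print(share)  # → {'conventional': 0.5, 'experimental': 0.5}
```

Here the two experimental documents receive the same total weight as the eight conventional ones. Upweighting a small set too aggressively can make a model overfit to it, which is why a softening exponent like `power` can be useful.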

In Brief (TL;DR)

Artificial intelligence operates as a massive statistical engine that learns to favor the center of the bell curve by penalizing unconventional data.

By constantly predicting the most mathematically probable and safe choices, these models generate polished but predictable content that erases distinct human originality.

This relentless optimization for the average actively suppresses the creative outliers required for genuine innovation, ultimately leading to a landscape of high-functioning mediocrity.

Conclusion

The phenomenon of the “vanilla” convergence reveals a profound truth about the nature of our most advanced technologies. As the ultimate pattern finder, Artificial Intelligence is a mirror reflecting the statistical average of human history, optimized for safety, coherence, and probability. While this provides us with incredibly powerful tools for efficiency and baseline creation, it inherently struggles to replicate the chaotic, improbable leaps of logic that define true human genius. Understanding this limitation is crucial. If we are to prevent our digital and physical worlds from collapsing into a polished but uniform average, we must actively champion the outliers, protect the extremes, and remember that the most valuable ideas are often the ones that the algorithm predicts will never work.

Frequently Asked Questions

Model collapse happens when artificial intelligence systems are trained on synthetic data generated by previous algorithms rather than on human-created content. This creates a closed feedback loop in which the system keeps learning from already smoothed-out information. Over time, this recursive process erodes the technology’s ability to produce novel ideas, because the diverse extremes of human thought are progressively erased from its training data.

These systems operate as massive statistical engines designed to find patterns and predict the most probable outcomes based on their training data. Because they are trained to minimize errors and favor the center of the bell curve, they naturally avoid unconventional or highly unique expressions. Consequently, the output becomes highly polished and structurally uniform: everything reads or looks perfectly average but lacks distinct human character.

Large language models function like highly advanced autocomplete systems rather than thinking entities. When given a prompt, they calculate the mathematical probability of the next word based on the sequence of words that came before it. By relying on the most common linguistic patterns found across the internet, they consistently choose safe and recognizable phrases instead of taking creative risks.

Current algorithms struggle to achieve true originality because they are fundamentally designed to optimize for the statistical average and eliminate extreme variations. True innovation and paradigm-shifting ideas have historically come from outliers and highly improbable concepts that sit at the edges of human thought. While technology can establish a highly competent baseline, reaching the realm of extraordinary creativity requires human intuition and a willingness to embrace chaotic edge cases.

Engineers can encourage more varied outputs by raising a setting known as temperature, which flattens the model’s probability distribution so that less probable words are selected more often. However, a more sustainable solution involves carefully curating training datasets to include historical anomalies and unconventional perspectives rather than just scraping massive volumes of standard internet data. By intentionally preserving these edge cases, developers can teach the systems that unusual ideas hold just as much value as the statistical average.

Still have doubts about The Bell Curve Trap: Why AI is Erasing Human Originality?


Did you find this article helpful? Is there another topic you’d like to see me cover?

Write it in the comments below! I take inspiration directly from your suggestions.