When you press play on your smartphone, you likely assume you are experiencing the exact track the artist recorded in the studio. The cymbals crash, the bass thumps, and the vocals soar with seemingly perfect clarity. However, the reality of modern streaming is far more deceptive. Before a single note reaches your headphones, a sophisticated process known as Perceptual Audio Coding has already intervened, quietly discarding up to 90% of the original digital audio data. This massive reduction is not a glitch, nor is it a sign of a failing network; it is a highly engineered, mathematically precise illusion designed to trick your brain.

To understand why your music app is secretly deleting the vast majority of your favorite songs, we must delve into the intersection of human biology and digital signal processing. The uncompressed audio files created in professional recording studios are enormous. A single three-minute pop song stored as uncompressed stereo audio takes roughly 32 megabytes at CD quality (16-bit, 44.1 kHz) and more than 100 megabytes at a studio resolution of 24-bit, 96 kHz. If streaming platforms attempted to transmit these massive files to millions of users simultaneously, global cellular networks would buckle under the strain, and your monthly data allowance would vanish in a matter of hours. The solution to this logistical nightmare was not to build infinitely larger networks, but to fundamentally alter the music itself.
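The file sizes involved follow from simple arithmetic: sample rate times bytes per sample times channels times duration. The sketch below works this out for a three-minute stereo track and compares the CD-quality bitrate with a lossy stream. The 128 kbps figure is an assumed, illustrative streaming bitrate, not a number taken from any particular service.

```python
def pcm_size_bytes(seconds, sample_rate_hz, bits_per_sample, channels):
    """Size of uncompressed PCM audio, ignoring container headers."""
    return seconds * sample_rate_hz * (bits_per_sample // 8) * channels


def pcm_bitrate_kbps(sample_rate_hz, bits_per_sample, channels):
    """Bitrate of uncompressed PCM audio in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


song_seconds = 180  # a three-minute track

cd_bytes = pcm_size_bytes(song_seconds, 44_100, 16, 2)
studio_bytes = pcm_size_bytes(song_seconds, 96_000, 24, 2)

cd_kbps = pcm_bitrate_kbps(44_100, 16, 2)
lossy_kbps = 128  # assumed lossy streaming bitrate (illustrative)
reduction = 1 - lossy_kbps / cd_kbps

print(f"CD quality: {cd_bytes / 1e6:.1f} MB")          # CD quality: 31.8 MB
print(f"24-bit/96 kHz: {studio_bytes / 1e6:.1f} MB")   # 24-bit/96 kHz: 103.7 MB
print(f"Data removed at {lossy_kbps} kbps: {reduction:.0%}")  # 91%
```

Under these assumptions, a 128 kbps stream carries about one eleventh of the CD-quality data, which is where a figure like "up to 90%" comes from.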

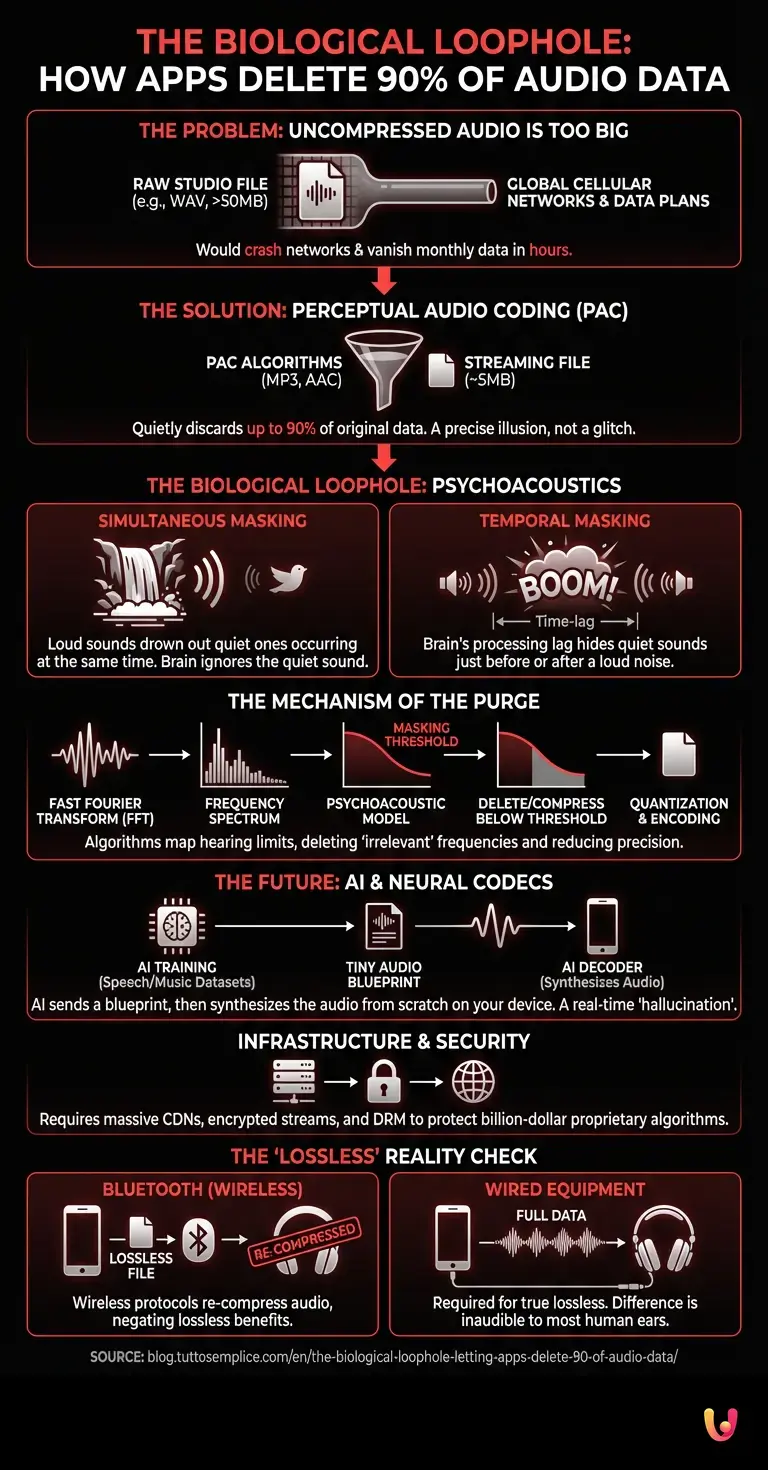

The Biological Loophole: Psychoacoustics

The secret behind this massive data purge lies in a field of study called psychoacoustics—the science of how the human ear and brain perceive sound. Our auditory system is an evolutionary marvel, but it is far from a perfect microphone. It has distinct limitations and biological quirks, which audio engineers have learned to exploit with ruthless efficiency.

The core principle at play is known as auditory masking. Imagine you are standing next to a roaring waterfall; if a bird chirps softly nearby, you will not hear it. The sound waves of the bird’s chirp still reach your ear, but the overwhelming volume of the waterfall prevents your brain from processing the quieter sound. This is called simultaneous masking. Perceptual Audio Coding algorithms analyze every millisecond of a song to identify these exact scenarios. If a loud snare drum hit occurs at the same time as a subtle acoustic guitar slide, the algorithm determines that your brain cannot perceive the guitar slide anyway. Consequently, it deletes the digital data representing that quiet sound.
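The masking decision described above can be sketched as a toy rule: a quiet component is dropped when its level falls below a threshold set by a nearby loud component. Everything in this example is a simplifying assumption. The function names, the 10 dB offset, and the 25 dB-per-octave slope are made up for illustration, and real psychoacoustic models are asymmetric and considerably more detailed.

```python
import math


def masking_threshold_db(masker_db, masker_hz, probe_hz,
                         offset_db=10.0, slope_db_per_octave=25.0):
    """Toy threshold: the masker's level minus a fixed offset, falling off
    linearly with distance in octaves. All constants are illustrative."""
    octaves_apart = abs(math.log2(probe_hz / masker_hz))
    return masker_db - offset_db - slope_db_per_octave * octaves_apart


def is_masked(probe_db, probe_hz, masker_db, masker_hz):
    """True if the quiet component sits below the masker's toy threshold."""
    return probe_db < masking_threshold_db(masker_db, masker_hz, probe_hz)


# Loud snare component: 80 dB at 1 kHz. Quiet guitar component: 40 dB at 1.2 kHz.
print(is_masked(40, 1200, 80, 1000))  # True: close in frequency, so it is masked

# The same quiet component three octaves away, at 8 kHz.
print(is_masked(40, 8000, 80, 1000))  # False: too far away to be masked
```

The point of the sketch is the shape of the decision, not the numbers: masking is strongest for sounds close in frequency to the loud one and fades as they move apart.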

Even more fascinating is temporal masking. Because the auditory system needs time to process loud sounds, a sudden, booming sound can actually mask quieter sounds that occur slightly before or after it: typically up to a few tens of milliseconds beforehand and up to roughly 100 to 200 milliseconds afterward. The algorithm anticipates this biological lag and preemptively deletes the data for sounds that your brain will be too overwhelmed to register. By systematically removing the audio that you are biologically incapable of hearing, the file size shrinks dramatically while the perceived quality remains seemingly intact.

The Mechanics of the Purge

How does a piece of software actually accomplish this complex acoustic surgery? The process relies on advanced mathematics, specifically Fourier-type transforms such as the Fast Fourier Transform (FFT) and its close relative, the modified discrete cosine transform (MDCT), on which MP3, AAC, and Ogg Vorbis all rely. When a song is uploaded to a streaming server, the encoder chops the stream of audio samples into thousands of short, overlapping time slices. It then applies the transform to convert each slice from a waveform into a spectrum of individual frequencies.
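The slicing-and-transform step can be sketched in a few lines of plain Python. This is a minimal illustration, not how a production encoder is written: it uses a textbook recursive FFT, a Hann window, and an assumed frame size of 256 samples with 50% overlap, applied to a synthetic 1 kHz tone. Real codecs use optimized routines and their own frame sizes and window shapes.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def frames(samples, size, hop):
    """Split samples into overlapping frames of `size`, advancing by `hop`."""
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, hop)]


def hann(size):
    """Hann window, which tapers each frame's edges to reduce spectral leakage."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * n / size) for n in range(size)]


sample_rate = 8000
frame_size = 256
tone_hz = 1000  # lands exactly on bin 32, since each bin is 8000 / 256 = 31.25 Hz wide
signal = [math.sin(2 * math.pi * tone_hz * n / sample_rate) for n in range(1024)]

window = hann(frame_size)
spectra = []
for frame in frames(signal, frame_size, hop=frame_size // 2):
    windowed = [s * w for s, w in zip(frame, window)]
    # Keep only the first half: for real input the second half mirrors it.
    spectra.append([abs(c) for c in fft(windowed)[:frame_size // 2]])

peak_bin = max(range(frame_size // 2), key=lambda k: spectra[0][k])
print(len(spectra), peak_bin * sample_rate / frame_size)  # 7 1000.0
```

Each entry in `spectra` is one time slice expressed as a list of frequency magnitudes, which is the representation the rest of the encoder works on.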

Once the audio is broken down into its constituent frequencies, the algorithm applies a psychoacoustic model, a mathematical map of human hearing limits. It calculates a “masking threshold” for every moment of the song. Any frequency component that falls below this threshold is deemed irrelevant and is either coarsely encoded or deleted entirely. The remaining frequencies are then quantized, meaning the algorithm reduces the precision of the data used to describe them, introducing a small amount of error known as quantization noise. As long as this noise stays below the masking threshold, it remains inaudible to the listener.
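To make quantization noise concrete, the sketch below rounds a sine wave to a limited number of bits and measures how far the noise sits below the signal. It is a simplified time-domain illustration with a uniform quantizer and hypothetical helper names. Actual codecs quantize frequency coefficients and choose the precision per band from the masking threshold.

```python
import math


def quantize(x, bits):
    """Round a value in [-1, 1] to the nearest step of a uniform grid."""
    step = 2.0 / (2 ** bits)
    return round(x / step) * step


def snr_db(signal, bits):
    """Signal-to-noise ratio, in dB, after quantizing `signal` to `bits` bits."""
    noise = [s - quantize(s, bits) for s in signal]
    signal_power = sum(s * s for s in signal)
    noise_power = sum(e * e for e in noise)
    return 10 * math.log10(signal_power / noise_power)


# One second of a 440 Hz tone sampled at 44.1 kHz.
signal = [math.sin(2 * math.pi * 440 * n / 44_100) for n in range(44_100)]

for bits in (4, 8, 12, 16):
    print(bits, round(snr_db(signal, bits), 1))
```

Each extra bit of precision pushes the noise down by roughly 6 dB. An encoder exploits this in reverse: wherever the masking threshold is high, it can spend fewer bits and let the noise rise, because the noise will still be hidden.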

This is the foundation of formats like MP3, Advanced Audio Coding (AAC), and Ogg Vorbis. These codecs are the unsung heroes of modern consumer tech, allowing us to carry entire libraries of music in our pockets and stream them instantly without buffering.

Enter the Machines: Neural Codecs and AI

While traditional perceptual coding has served us well for decades, the landscape of audio compression is currently undergoing a radical transformation, driven by rapid innovation in machine learning. Today, AI is being deployed to create what are known as “neural audio codecs.”

Instead of merely filtering and deleting data based on mathematical models of the ear, these artificial intelligence systems take a fundamentally different approach. They are trained on vast datasets of human speech and music, learning the underlying patterns of how sound is generated. When transmitting audio, an AI encoder does not send the actual sound waves; rather, it sends a highly compressed, microscopic blueprint of the audio’s characteristics. On the receiving end, a corresponding AI decoder inside your smartphone reads this blueprint and instantly synthesizes the audio from scratch.
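One way to picture the "blueprint" idea is vector quantization, a building block in published neural codecs such as SoundStream and EnCodec: the encoder sends the index of the closest entry in a shared codebook, and the decoder looks that entry up. The sketch below is a deliberately tiny stand-in. The codebook is hand-written and hypothetical, and nothing here is learned, whereas a real neural codec trains its encoder, codebooks, and decoder from data.

```python
def nearest_index(vector, codebook):
    """Index of the codebook entry closest to `vector` (squared distance)."""
    def dist(entry):
        return sum((a - b) ** 2 for a, b in zip(vector, entry))
    return min(range(len(codebook)), key=lambda i: dist(codebook[i]))


def encode(frames, codebook):
    """Replace each frame with the index of its nearest codebook entry."""
    return [nearest_index(frame, codebook) for frame in frames]


def decode(indices, codebook):
    """Rebuild an approximation of the frames from the indices alone."""
    return [codebook[i] for i in indices]


# Hypothetical codebook shared by encoder and decoder (hand-written, not learned).
codebook = [
    (0.0, 0.0, 0.0, 0.0),
    (0.5, 0.5, 0.5, 0.5),
    (1.0, 0.5, -0.5, -1.0),
    (-1.0, -0.5, 0.5, 1.0),
]

frames = [
    (0.9, 0.6, -0.4, -1.1),
    (0.1, -0.1, 0.0, 0.05),
    (0.4, 0.6, 0.5, 0.45),
]

indices = encode(frames, codebook)
print(indices)                    # [2, 0, 1]
print(decode(indices, codebook))  # approximations, not the original frames
```

Only the three small integers cross the network. The decoder's output resembles the input without matching it exactly, which is the sense in which the received audio is synthesized rather than transmitted.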

This means that the music you hear through a neural codec is technically a real-time AI hallucination of the original track. Several agile startups are currently pioneering this space, challenging the established tech giants by promising to deliver high-fidelity, spatial audio at unprecedentedly low bitrates. This AI-driven approach could eventually allow for crystal-clear streaming over the weakest of network connections, pushing the boundaries of what we consider “compressed” audio.

The Hidden Costs: Infrastructure and Cybersecurity

The necessity of deleting 90% of audio data is not solely about saving space on your phone; it is a critical component of global digital infrastructure. Streaming platforms transmit billions of audio packets every single day. Managing this colossal flow of data requires massive Content Delivery Networks (CDNs) and intricate server architectures.

Interestingly, the mechanics of audio streaming also intersect heavily with cybersecurity. Because music files are broken down into compressed, often encrypted packets for transmission, platforms must ensure the integrity and secure delivery of this data. Digital Rights Management (DRM) protocols are wrapped around these compressed streams to prevent unauthorized duplication and piracy. The codec formats themselves, such as AAC and Ogg Vorbis, are publicly documented, but the encoder tuning and any in-house neural models a service builds on top of them can be proprietary and treated as trade secrets. Ensuring that protected streams cannot be intercepted or copied by malicious actors is a constant battle for security engineers.

What Happens If We Keep the 100%?

In recent years, a counter-movement has emerged. Audiophiles and purists have demanded the return of the missing 90%, leading platforms to offer “Lossless” and “High-Resolution” streaming tiers. Formats like FLAC (Free Lossless Audio Codec) and ALAC (Apple Lossless Audio Codec) shrink the file, typically to around half to two-thirds of its original size, by finding mathematical redundancies, much like a ZIP file, but they do not delete a single piece of acoustic data.
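The defining property of lossless compression is that decoding returns the original data bit for bit. The sketch below demonstrates this with Python's standard `zlib` module on a synthetic tone, following the ZIP analogy in the text. It is only an analogy: FLAC itself uses linear prediction and entropy coding tailored to audio rather than a general-purpose compressor, and a pure tone compresses far better than real music.

```python
import math
import struct
import zlib

# One second of a 440 Hz tone as 16-bit mono PCM samples.
samples = [int(20_000 * math.sin(2 * math.pi * 440 * n / 44_100))
           for n in range(44_100)]
pcm = struct.pack(f"<{len(samples)}h", *samples)

packed = zlib.compress(pcm, level=9)
restored = zlib.decompress(packed)

# The packed size is smaller, and the round trip is exact.
print(len(pcm), len(packed), restored == pcm)
```

Contrast this with the perceptual codecs described earlier, where the decoded audio only sounds like the original and the discarded data cannot be recovered.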

So, what happens when you listen to the full 100%? For the average listener using standard Bluetooth headphones, absolutely nothing. Because the common Bluetooth audio codecs are themselves lossy, the lossless file is compressed again before it reaches your ears. Even with high-end wired equipment, the difference is incredibly subtle. At the bitrates typical of today's streaming services, most listeners cannot reliably detect the frequencies and masked sounds that perceptual coding removes. While lossless audio provides peace of mind for purists and is essential for archival purposes, the “90% lie” remains the most efficient and practical way to consume music for the vast majority of the global population.

In Brief (TL;DR)

Modern streaming platforms quietly discard up to 90% of the original audio data to prevent network overload and conserve your monthly mobile data allowance.

Engineers exploit human biological limitations through psychoacoustics, actively removing specific background sounds that our brains are naturally incapable of perceiving anyway.

Advanced mathematical algorithms perform this acoustic surgery seamlessly, while innovative artificial intelligence models are currently emerging to revolutionize future audio compression entirely.

Conclusion

The revelation that your music app is secretly deleting the vast majority of your favorite songs might initially feel like a betrayal. We are conditioned to believe that more data equals a better experience. However, the reality of digital audio is a testament to human ingenuity. By mapping the biological flaws of the human ear and leveraging advanced mathematics, engineers have created an illusion so perfect that we cannot tell the difference between the original masterpiece and its heavily redacted copy.

As we move into an era dominated by artificial intelligence and neural reconstruction, the gap between the data we transmit and the sound we perceive will only grow wider. The silent symphony of deleted notes, masked frequencies, and algorithmic predictions is what makes the modern streaming ecosystem possible. The next time you press play, take a moment to appreciate not just the music you are hearing, but the brilliant, invisible science of the music you are not.

Frequently Asked Questions

Why do streaming services delete so much of the original audio data?

Streaming services remove a massive amount of digital information to prevent global cellular networks from crashing and to save your mobile data allowance. Uncompressed studio files are enormous, so algorithms discard the specific sounds your brain cannot biologically perceive. This allows you to stream entire libraries instantly without buffering while maintaining perceived track quality.

What is psychoacoustics, and how is it used in audio compression?

Psychoacoustics is the scientific study of how the human auditory system and brain perceive sound waves. Audio engineers use this field to exploit our biological hearing limits through a process called auditory masking. By identifying and removing quiet sounds that are drowned out by louder ones, software can shrink file sizes dramatically without ruining the listening experience.

How do AI-based neural audio codecs differ from traditional compression?

Instead of just filtering out unneeded frequencies, modern artificial intelligence systems send a microscopic blueprint of the track characteristics over the network. A decoder on your smartphone then uses this blueprint to synthesize the track from scratch in real time. This innovative approach promises extremely high fidelity playback even on very weak internet connections.

Can listeners hear the difference between lossless and compressed audio?

For the vast majority of people using standard consumer equipment, there is little to no audible difference between a lossless track and a well-encoded compressed file. The perceptual coding algorithms specifically target the frequencies and masked sounds that the limits of our hearing prevent us from registering. You would need highly specialized wired equipment and trained hearing to notice even a subtle change.

Why do Bluetooth headphones limit the benefit of lossless audio?

Wireless transmission technology typically requires heavy data reduction to send sound from your device to your wireless headphones. Even if you select a lossless file format on your device, the wireless protocol will usually compress the data again before it reaches your ears. Therefore, to truly experience lossless tracks, listeners must use high quality wired audio equipment.
