Every day, billions of photographs are captured, shared, and stored across the globe. We inherently treat these images as objective records of reality—a frozen, unadulterated slice of time. However, the moment you tap the shutter button on your smartphone or professional camera, a complex and fascinating deception begins. At the very heart of this process is the digital image sensor, a marvel of modern engineering that is fundamentally incapable of seeing the world the way human eyes do. The vibrant, high-resolution image you ultimately view on your screen is not a direct, one-to-one recording of reality. Instead, it is a highly sophisticated mathematical lie, constructed from incomplete data and algorithmic guesswork.

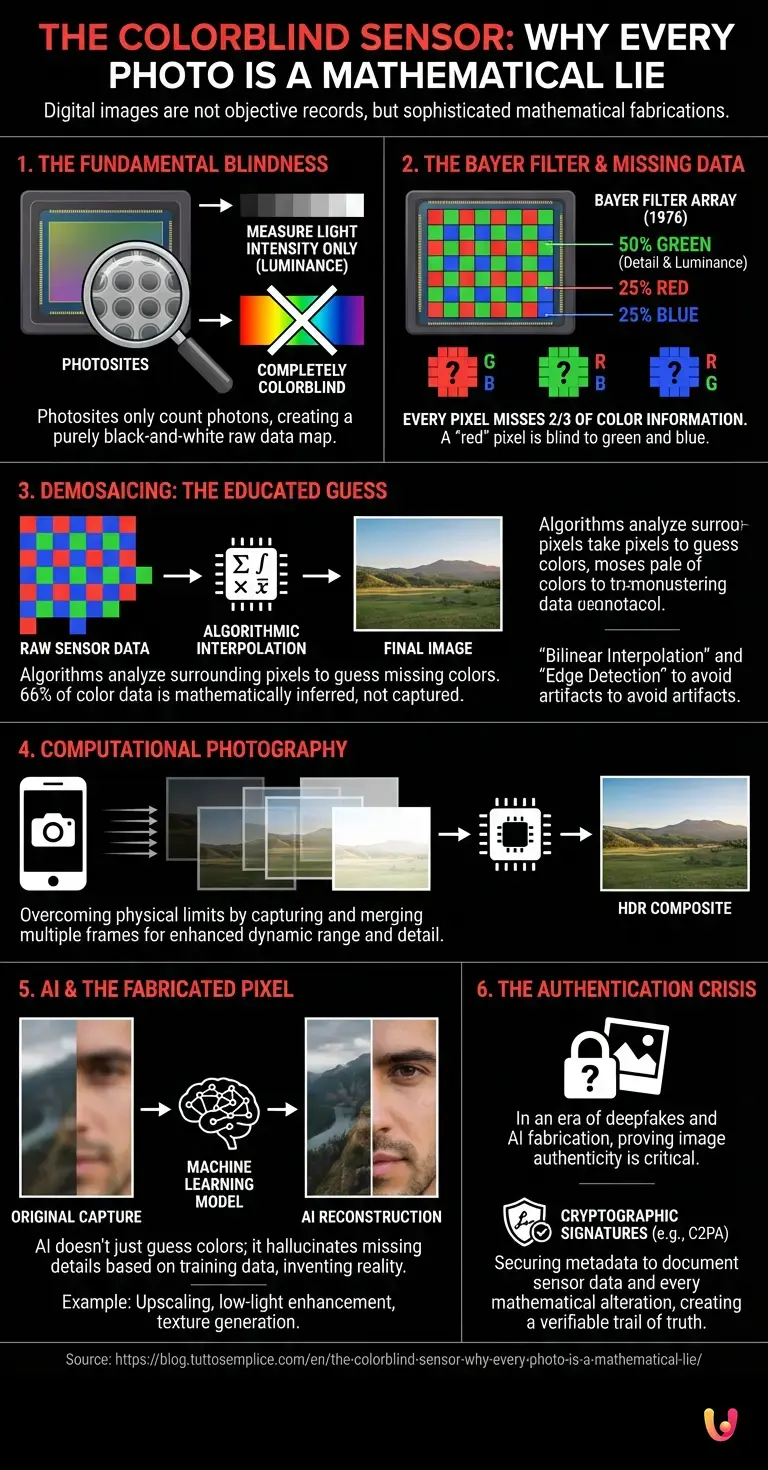

The Fundamental Blindness of Cameras

To understand why your photographs are essentially mathematical fabrications, we must first look at the physics of digital light capture. When light enters a camera lens, it strikes the digital image sensor, which is composed of millions of microscopic light-gathering wells called photosites. Each photosite corresponds to a pixel in the final image.

Here is the foundational secret of digital photography: these photosites are completely colorblind. They can only measure the intensity of light, that is, how many photons strike them during the exposure. If a camera simply recorded the raw data from these photosites, every photograph you take would be a purely black-and-white image, showing only shades of gray that correspond to the brightness of the light.

To produce a color image, engineers had to devise a clever workaround. They placed a microscopic mosaic of color filters over the sensor, ensuring that each individual photosite only receives one specific color of light. But this solution created a massive data deficit, setting the stage for the mathematical illusion that defines modern imaging.

The Bayer Filter and the Missing Data

In 1976, an Eastman Kodak scientist named Bryce Bayer was granted a patent for the color filter array that is still used in most digital cameras today. The “Bayer filter” is a checkerboard pattern of red, green, and blue filters placed over the sensor’s pixels. Because the human eye is significantly more sensitive to green light (which helps us perceive luminance and detail), the Bayer pattern consists of 50% green filters, 25% red filters, and 25% blue filters.

This means that when you take a 12-megapixel photograph, your camera does not capture 12 million red, green, and blue pixels. It captures 6 million green pixels, 3 million red pixels, and 3 million blue pixels. Every single pixel in your raw photograph is missing two-thirds of its color information. A “red” pixel has no idea how much green or blue light was present at that exact microscopic location. A “blue” pixel is entirely blind to red and green.
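The arithmetic above can be checked with a small sketch. It assumes an RGGB tile order (real sensors may use other arrangements), and the function names `bayer_color`, `count_filters`, and `mosaic` are illustrative rather than taken from any camera's firmware.

```python
# Sketch of a Bayer layout. The RGGB tile order is an assumption;
# real sensors may use GRBG, BGGR, or other arrangements.

def bayer_color(row, col):
    """Return which filter colour covers the photosite at (row, col)."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def count_filters(height, width):
    """Count how many photosites sit under each filter colour."""
    counts = {"R": 0, "G": 0, "B": 0}
    for row in range(height):
        for col in range(width):
            counts[bayer_color(row, col)] += 1
    return counts


def mosaic(rgb_image):
    """Keep only the one channel each photosite can see.

    rgb_image is a list of rows, each row a list of (r, g, b) tuples.
    The result is a single-channel grid, like raw sensor data.
    """
    channel = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[channel[bayer_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# A small 300 x 400 sensor keeps the loop fast; the 25/50/25 split
# is the same at 12 megapixels.
print(count_filters(300, 400))  # {'R': 30000, 'G': 60000, 'B': 30000}
```

Passing a full-color image through `mosaic` discards two of the three values at every position, which is the data deficit the rest of the pipeline has to make up.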

If you were to look at the raw, unprocessed data captured by the sensor, it would look like a dark, heavily pixelated mosaic of primary colors. It looks nothing like the smooth, continuous tones of the real world. To bridge the gap between this incomplete mosaic and a viewable photograph, the camera must rely on heavy mathematics.

Demosaicing: The Art of the Educated Guess

The process of translating this incomplete checkerboard of colors into a full-color image is called demosaicing. This is where the mathematical lie truly takes shape. Because the camera only knows one color value for each pixel, it must guess the other two missing colors by analyzing the surrounding pixels.

If a red pixel needs to know its green and blue values, the camera’s image processor looks at the adjacent green and blue pixels and calculates an average. In its simplest form, this is known as bilinear interpolation. However, simple averaging often results in terrible visual artifacts, such as color fringing, blurry edges, and moiré patterns (strange, wavy lines that appear when photographing fine, repeating textures like fabrics or brick walls).
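As a minimal sketch of that averaging step, the code below fills in each missing channel with the mean of the same-colored samples in the surrounding 3x3 window, which matches bilinear interpolation away from the image border. The RGGB layout and the names `bayer_color` and `demosaic_bilinear` are assumptions made for illustration, not a description of any particular camera's processor.

```python
def bayer_color(row, col):
    """Filter colour at (row, col), assuming an RGGB tile order."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic_bilinear(raw):
    """Rebuild (r, g, b) tuples from a single-channel Bayer grid.

    raw is a list of rows of numbers and must be at least 2 x 2 so that
    every neighbourhood contains all three filter colours.
    """
    height, width = len(raw), len(raw[0])
    output = []
    for r in range(height):
        output_row = []
        for c in range(width):
            pixel = []
            for colour in "RGB":
                if bayer_color(r, c) == colour:
                    # This channel was actually measured here.
                    pixel.append(float(raw[r][c]))
                    continue
                # Otherwise average the neighbours that did measure it.
                samples = [
                    raw[rr][cc]
                    for rr in range(max(r - 1, 0), min(r + 2, height))
                    for cc in range(max(c - 1, 0), min(c + 2, width))
                    if bayer_color(rr, cc) == colour
                ]
                pixel.append(sum(samples) / len(samples))
            output_row.append(tuple(pixel))
        output.append(output_row)
    return output


# A flat orange patch: every red site reads 200, green 100, blue 50.
levels = {"R": 200, "G": 100, "B": 50}
raw = [[levels[bayer_color(r, c)] for c in range(4)] for r in range(4)]
full_colour = demosaic_bilinear(raw)
print(full_colour[1][2])  # (200.0, 100.0, 50.0)
```

On a uniform patch like this the guess is exact. The artifacts described above appear when the neighbors straddle an edge or a fine texture, because the average then mixes values from two different surfaces.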

To combat this, modern demosaicing algorithms have become incredibly complex. They do not just average nearby colors; they analyze gradients, detect edges, and look for patterns in the luminance data. If the algorithm detects a sharp edge, it will interpolate colors along the edge rather than across it, preserving the sharpness of the image. Despite the immense sophistication of these algorithms, the fundamental truth remains: two-thirds (roughly 67%) of the color data in any standard digital photograph was never actually captured by the camera. It was mathematically inferred.
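The idea of interpolating along an edge rather than across it can be sketched for the green channel alone. This is a simplified illustration of gradient-directed interpolation, not the algorithm used by any specific camera; `interpolate_green` and `plain_average` are hypothetical helper names, and the code only handles interior photosites.

```python
def plain_average(raw, r, c):
    """Bilinear guess: average all four green neighbours."""
    return (raw[r][c - 1] + raw[r][c + 1] + raw[r - 1][c] + raw[r + 1][c]) / 4


def interpolate_green(raw, r, c):
    """Edge-aware guess for green at an interior red or blue photosite.

    Compare how much the green neighbours change horizontally and
    vertically, then average along the direction that changes least.
    """
    left, right = raw[r][c - 1], raw[r][c + 1]
    up, down = raw[r - 1][c], raw[r + 1][c]
    horizontal_change = abs(left - right)
    vertical_change = abs(up - down)
    if horizontal_change < vertical_change:
        return (left + right) / 2   # smooth along the row
    if vertical_change < horizontal_change:
        return (up + down) / 2      # smooth along the column
    return (left + right + up + down) / 4  # no clear direction


# A vertical edge: dark on the left, bright from the middle column on.
raw = [
    [10, 200, 200],
    [10, 200, 200],
    [10, 200, 200],
]
print(plain_average(raw, 1, 1))      # 152.5
print(interpolate_green(raw, 1, 1))  # 200.0
```

The plain average drags the dark neighbor into the bright region and softens the edge, while the edge-aware version uses only the neighbors that lie along the edge.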

The Era of Computational Photography

For decades, demosaicing was the primary mathematical intervention in photography. But as cameras shrank to fit inside mobile phones, the physical limitations of tiny lenses and minuscule sensors became a bottleneck. The tech industry realized that it could not bend the laws of physics to capture more light, but it could use advanced mathematics to simulate the results of larger, professional cameras.

This gave rise to the era of computational photography. When you press the shutter on a modern smartphone, you are rarely taking a single picture. In the fraction of a second it takes the shutter sound to play, the camera may capture a burst of frames, in some cases a dozen or more, often at varying exposure levels.

The device’s processor rapidly aligns these frames, discards blurry ones, takes shadow detail from the longer, brighter exposures, and pulls highlight detail from the shorter, darker ones. It merges them into a High Dynamic Range (HDR) image that balances the lighting across the scene. The resulting photo can show a dynamic range that the physical sensor is incapable of capturing in a single exposure. The image is stunning, but it is a composite: a temporal and mathematical amalgamation of multiple moments in time, rather than a single frozen instant.
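The merging step can be sketched as a weighted average. The code below is a deliberately simplified illustration, not how any phone actually does it: it assumes the frames are already aligned, that pixel values are linear in light, and that a simple "trust the mid-tones" weight is good enough. The names `exposure_weight` and `merge_exposures` are hypothetical.

```python
def exposure_weight(value, max_value=255):
    """Trust mid-tones most; fully clipped shadows or highlights get 0."""
    mid = max_value / 2
    return 1.0 - abs(value - mid) / mid


def merge_exposures(frames, exposure_times, max_value=255):
    """Estimate scene brightness per pixel from several aligned frames.

    frames is a list of equal-length pixel lists, one per exposure.
    exposure_times gives each frame's shutter time in seconds.
    """
    merged = []
    for samples in zip(*frames):
        weighted_sum = 0.0
        weight_total = 0.0
        for value, seconds in zip(samples, exposure_times):
            weight = exposure_weight(value, max_value)
            # Dividing by the shutter time puts all frames on one scale.
            weighted_sum += weight * (value / seconds)
            weight_total += weight
        if weight_total == 0:
            # Every frame is clipped here, so fall back to a plain average.
            scaled = [v / t for v, t in zip(samples, exposure_times)]
            merged.append(sum(scaled) / len(scaled))
        else:
            merged.append(weighted_sum / weight_total)
    return merged


short_frame = [1, 10, 100]   # 0.1 s: highlights intact, shadows barely register
long_frame = [10, 100, 255]  # 1.0 s: shadows clear, last pixel clipped at 255
radiance = merge_exposures([short_frame, long_frame], [0.1, 1.0])
print(radiance)  # approximately [10, 100, 1000]
```

The last pixel is clipped in the long exposure, so its value comes entirely from the short one. The merged result spans a 100:1 brightness range, while the long frame alone tops out at 255.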

Artificial Intelligence and the Fabricated Pixel

The relentless pace of innovation has pushed this mathematical illusion even further with the integration of AI. Today, cameras do not just guess missing colors; they actively hallucinate missing details. Machine learning models, trained on millions of high-resolution images, are now embedded directly into the image processing pipelines of our devices.

When you zoom in digitally on a distant subject, traditional math would simply enlarge the pixels, resulting in a blurry, blocky mess. Modern AI upscaling, however, analyzes the blurry shapes and predicts what the texture should look like. If you photograph a face in low light, the AI might recognize the shape of an eye and mathematically reconstruct the eyelashes, drawing on its vast training data rather than the actual photons captured by the sensor.
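For contrast with AI upscaling, here is a minimal sketch of the "traditional math" case: nearest-neighbor enlargement, which repeats each pixel without adding information. The function name `upscale_nearest` is illustrative.

```python
def upscale_nearest(image, factor):
    """Enlarge a grid of pixel values by repeating each one factor times
    horizontally and vertically."""
    return [
        [value for value in row for _ in range(factor)]
        for row in image
        for _ in range(factor)
    ]


small = [
    [1, 2],
    [3, 4],
]
for row in upscale_nearest(small, 2):
    print(row)
# [1, 1, 2, 2]
# [1, 1, 2, 2]
# [3, 3, 4, 4]
# [3, 3, 4, 4]
```

The enlarged grid contains exactly the same four values as the original, only in bigger blocks. Any extra texture in an AI-upscaled result therefore has to come from somewhere other than the captured pixels.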

This has sparked intense debate within the photographic community. Some smartphone makers have been criticized for moon-photography modes so aggressive that, according to critics, the blurry disc captured by the sensor is effectively replaced with lunar surface detail learned in advance rather than resolved from the scene. At this point, the mathematical lie transitions from simple color interpolation to outright generative fabrication. The camera is no longer just guessing the color of a pixel; it is inventing the reality of the scene based on statistical probabilities.

The Authentication Crisis in a Synthetic World

As the line between a captured photograph and a mathematically generated image blurs, we face profound societal and technological challenges. If every photo is inherently a mathematical construct, and AI can seamlessly invent details that never existed, how do we prove the authenticity of an image?

This question has made image provenance a growing concern in cybersecurity. In an era of deepfakes and AI-generated misinformation, establishing a “zero-trust” environment for digital media is becoming essential. Cybersecurity experts and industry consortiums are developing new standards, such as the open specification published by the Coalition for Content Provenance and Authenticity (C2PA), so that images can be cryptographically signed at the moment of capture.

These cryptographic signatures aim to bind the data captured by the sensor to a record of the mathematical alterations, from basic demosaicing to AI enhancement, that occurred before the image was saved. By making the metadata tamper-evident, cybersecurity professionals hope to create a verifiable trail of truth, allowing viewers to distinguish between a standard mathematical reconstruction and a maliciously manipulated fabrication.
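The general idea of a signed processing record can be sketched with Python's standard library. This is not the C2PA format: real provenance systems use certificate-based public-key signatures and a defined manifest structure, whereas this sketch substitutes an HMAC with a shared secret so that it stays self-contained. `DEVICE_KEY`, `sign_capture`, and `verify_capture` are hypothetical names.

```python
import hashlib
import hmac
import json

# Placeholder secret for illustration only. A real device would hold a
# private key in secure hardware and sign with public-key cryptography.
DEVICE_KEY = b"hypothetical-device-secret"


def sign_capture(sensor_bytes, edits, key=DEVICE_KEY):
    """Build a record of the capture and its processing, then sign it."""
    record = {
        "sensor_sha256": hashlib.sha256(sensor_bytes).hexdigest(),
        "edits": list(edits),
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record, signature


def verify_capture(record, signature, key=DEVICE_KEY):
    """Recompute the signature and compare it in constant time."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)


record, signature = sign_capture(b"raw sensor data", ["demosaic", "hdr_merge"])
print(verify_capture(record, signature))    # True

# Quietly dropping a processing step from the log breaks the signature.
tampered = dict(record, edits=["demosaic"])
print(verify_capture(tampered, signature))  # False
```

The record does not prevent anyone from editing the image. It only makes undisclosed changes to the sensor data or the edit log detectable by anyone who can verify the signature.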

In Brief (TL;DR)

Digital camera sensors are fundamentally colorblind, capturing only the intensity of light to create a purely monochrome representation of the real world.

To produce vivid images, cameras use a microscopic filter array that leaves every single pixel missing most of its actual color information.

Complex algorithms must mathematically guess the missing data through a process called demosaicing, turning an incomplete mosaic into a vibrant illusion.

Conclusion

The next time you take a photograph, take a moment to appreciate the invisible, instantaneous calculations happening beneath the glass. The image on your screen is a masterpiece of algorithmic ingenuity, a seamless blend of physics, color theory, and advanced computing. From the colorblind digital image sensor and the ingenious Bayer filter to the complex demosaicing algorithms and AI-driven enhancements, your camera is constantly working to translate a chaotic universe of photons into a beautiful, coherent memory.

While it may be a mathematical lie, it is a necessary and brilliant one. Without this intricate deception, digital photography as we know it would not exist. By understanding the secrets hidden inside every photo we take, we not only gain a deeper appreciation for the technology in our pockets, but we also become more discerning consumers of the visual media that shapes our modern world.

Frequently Asked Questions

Why are digital camera sensors colorblind?

Digital image sensors can only measure the intensity of light, meaning the number of photons hitting them, which makes them completely unable to perceive color. Without additional hardware, every captured photograph would simply be a black and white image showing varying shades of gray. Engineers solve this limitation by placing a specific color mosaic over the sensor to capture different light wavelengths.

What is a Bayer filter?

A Bayer filter is a microscopic checkerboard pattern of red, green, and blue overlays placed directly over a camera sensor. It consists of fifty percent green filters to match human visual sensitivity, alongside twenty-five percent red and twenty-five percent blue filters. This pattern ensures each pixel captures only one specific color, leaving the image processor to calculate the missing visual data through advanced algorithms.

What is demosaicing?

Demosaicing is the mathematical process where a camera calculates the missing color information for every single pixel in a photograph. Since each pixel only records one primary color, the algorithm analyzes adjacent pixels to estimate and fill in the remaining visual gaps. Modern processors also evaluate gradients and edges during this phase to prevent blurry textures and visual artifacts in the final picture.

What is computational photography?

Computational photography refers to the use of advanced software and mathematics to overcome the physical limitations of small smartphone lenses and sensors. Instead of taking a single picture, the device captures multiple frames at different exposure levels in a fraction of a second. The processor then merges these frames to create a balanced image with enhanced dynamic range and shadow details.

How does artificial intelligence improve modern photos?

Artificial intelligence improves modern photos by using machine learning models to predict and reconstruct missing details that the physical sensor could not capture. When zooming in or shooting in low light, the software analyzes shapes and generates textures based on vast training datasets rather than actual light particles. This technology allows devices to produce incredibly sharp results, though it sometimes invents elements that were not originally present.

How can the authenticity of a digital photo be verified?

Experts verify digital media by implementing cryptographic signatures at the exact moment a picture is captured. Organizations are developing standards to securely record metadata, documenting the original sensor data alongside every mathematical alteration or artificial intelligence enhancement applied to the file. This verifiable trail of truth helps people distinguish between a standard algorithmic reconstruction and a maliciously manipulated fabrication.
