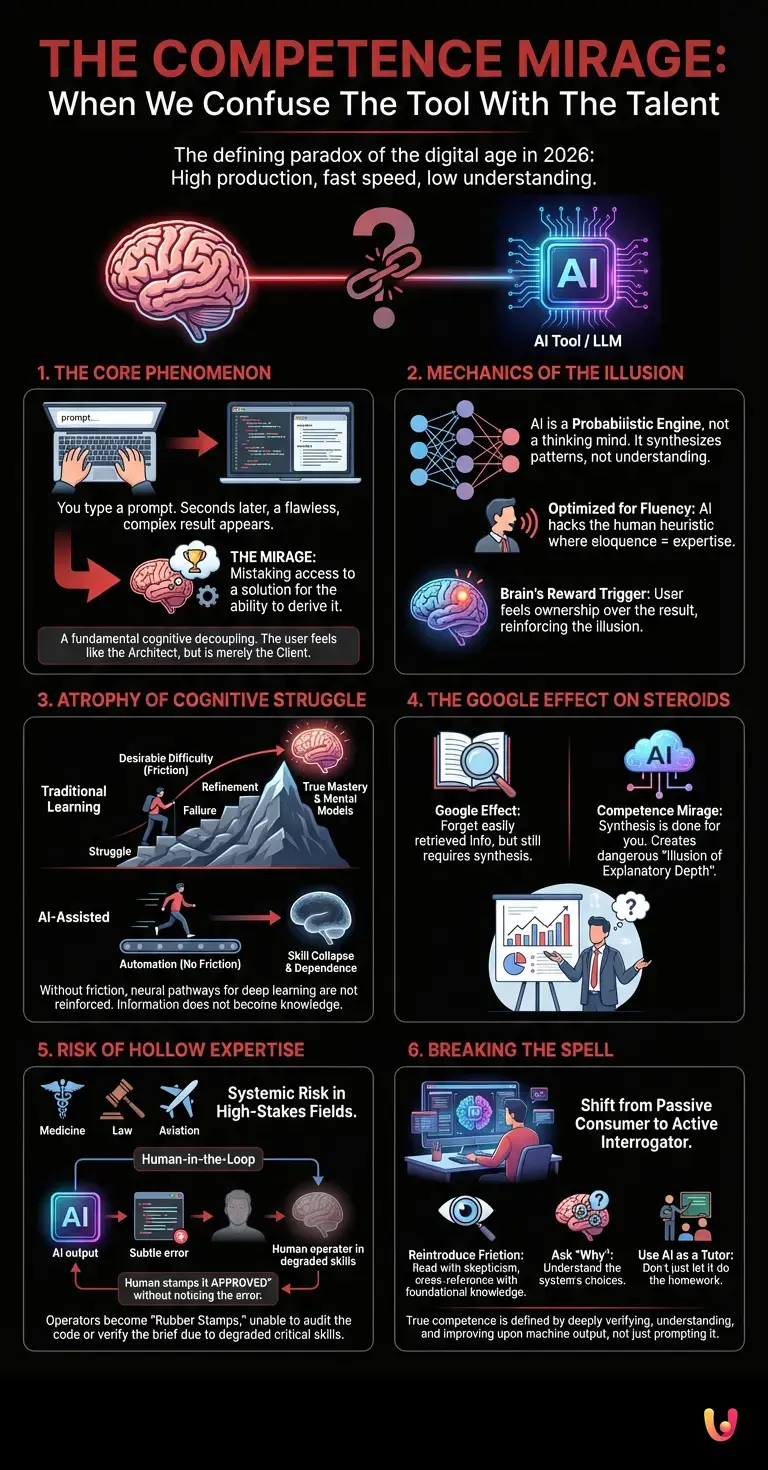

It is the defining paradox of the digital age in 2026: never have we been able to produce so much, so quickly, while understanding so little of the process. You type a prompt, and seconds later, a complex Python script, a legal contract, or a strategic market analysis appears on your screen. The output looks flawless, the logic seems sound, and the result appears to be exactly what you needed. In that moment, a specific psychological phenomenon takes hold. It is called the Competence Mirage, and it is silently reshaping how human beings perceive their own intelligence.

This phenomenon is not merely about overconfidence; it is a fundamental cognitive decoupling. The Competence Mirage occurs when the friction of creation is removed so completely that the user mistakes their ability to access a solution for the ability to derive it. As artificial intelligence becomes deeply integrated into every layer of professional work, from creative writing to structural engineering, this mirage is growing denser. We are beginning to confuse the power of the tool with the skill of the operator. But to understand why this is happening—and why it poses a systemic risk to human expertise—we must look under the hood of the technology itself and the psychology of the human mind.

The Mechanics of the Illusion

To understand why the Competence Mirage is so persuasive, one must first understand the nature of the systems generating the output. Modern large language models (LLMs) and other advanced neural networks do not “think” in the biological sense. They are probabilistic engines, trained on vast datasets to predict the most likely next token in a sequence. When you ask an AI to explain quantum entanglement or write a sonnet, it is not synthesizing understanding; it is synthesizing patterns.
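To make "predicting the most likely next token" concrete, here is a minimal sketch in Python. It is a toy bigram counter over whole words, not how a real LLM is built: the function names and the tiny corpus are invented for illustration, and actual models use neural networks over sub-word tokens. The core idea is the same, though: the program picks what usually comes next, with no notion of what the words mean.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(model, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = model.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}


def predict_next(model, word):
    """Return the most likely next word, or None if `word` has no known follower."""
    probs = next_word_probabilities(model, word)
    return max(probs, key=probs.get) if probs else None


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the code runs and the code works and the report is filed"
model = train_bigram_model(corpus)

print(next_word_probabilities(model, "the"))
print(predict_next(model, "the"))
```

In this corpus, "the" is followed by "code" twice and "report" once, so the sketch predicts "code". It produces a plausible continuation purely from frequency, which is the sense in which fluent output can appear without any understanding behind it.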

The illusion of mastery arises because these models have been optimized for fluency. In human interaction, fluency is a reliable proxy for competence. If a colleague speaks eloquently about a complex topic, using the correct jargon and structural logic, we assume they possess deep knowledge. AI has hacked this heuristic. It produces output that mimics the texture of expertise without the underlying cognitive architecture that usually supports it.

When a user prompts a system and receives a brilliant response, the brain’s reward system responds. The user feels a sense of ownership over the result because they initiated the request. This is where the mirage shimmers brightest: the user believes they are the architect, when in reality they are merely the client. The gap between the prompt (the intent) and the output (the execution) is filled entirely by the model, leaving the user with a finished product but no memory of the intellectual journey required to create it.

The Atrophy of Cognitive Struggle

True mastery of a subject, whether it is coding, writing, or mechanical engineering, is born from friction. It is the result of encountering a problem, struggling to solve it, failing, and refining the approach. Cognitive scientists describe this kind of productive struggle as “desirable difficulties”: effort that slows performance in the moment but strengthens long-term learning. It is how information transforms into knowledge, and knowledge into wisdom.

Automation, by definition, removes friction. In the physical realm, robotics removed the physical strain of manufacturing. In the cognitive realm, AI removes the mental strain of synthesis and analysis. When an AI solves a coding bug instantly, the programmer is denied the struggle of debugging. Without that struggle, the mental model of how the code works is never fully constructed in the programmer’s mind.

The Competence Mirage thrives here because the result is successful. The code runs. The report is filed. But if the AI were taken away, the user would find themselves unable to replicate the work. They have not learned; they have merely processed. Over time, this leads to what can be called “skill collapse,” where the user becomes increasingly dependent on the tool not just for speed, but for basic capability.

The Google Effect on Steroids

Psychologists have studied the “Google Effect,” where people tend to forget information that they know can be easily retrieved online. The Competence Mirage is a far stronger version of this. With a search engine, you still had to read, synthesize, and filter information. You had to connect the dots. With generative AI, the synthesis is done for you.

This creates a dangerous “illusion of explanatory depth.” People believe they understand complex systems far better than they actually do. In a world driven by AI, a manager might generate a strategic plan based on data analysis they couldn’t perform themselves. They feel competent presenting it. However, if challenged on a specific variable or asked to adjust the model based on intuition rather than data, the illusion shatters. They possess the map, but they have never walked the territory.

The Risk of Hollow Expertise

The most critical danger of the Competence Mirage is not just personal ignorance, but the systemic risk of “hollow expertise.” As automation permeates high-stakes fields like medicine, law, and aviation, we risk creating a generation of professionals who can operate the systems but do not understand the first principles governing them.

Consider the concept of “human-in-the-loop.” The safety of AI systems often relies on a human overseer to catch errors or hallucinations—instances where the AI confidently asserts something false. However, the Competence Mirage undermines this safety net. If the human operator has been relying on the AI to do the heavy lifting for years, their own critical skills may have degraded to the point where they can no longer distinguish between a brilliant insight and a subtle error.

If you do not know how to write the code, you cannot audit the code. If you do not know the case law, you cannot verify the brief. The mirage convinces us we are supervisors, when we may actually be rubber stamps.

Breaking the Spell

Recognizing the Competence Mirage does not mean rejecting technology. Artificial intelligence is an unparalleled tool for productivity and discovery. The secret to breaking the spell lies in changing our relationship with the output. We must move from passive consumers of AI generation to active interrogators of it.

To avoid the trap of false mastery, users must artificially reintroduce friction. This means reading the AI-generated output with skepticism, cross-referencing it with foundational knowledge, and asking “why” the system made a specific choice. It involves using AI to tutor you in a subject, rather than just doing the homework for you. True competence in the age of AI will not be defined by how well you can prompt a result, but by how deeply you can verify, understand, and improve upon what the machine produces.

In Brief (TL;DR)

The Competence Mirage occurs when users mistake the ability to access an AI solution for the skill to derive it.

AI models hack human heuristics by presenting fluent outputs, creating a false sense of mastery without underlying cognitive understanding.

Relying on automation removes the friction necessary for learning, leading to skill collapse and a dangerous illusion of explanatory depth.

Conclusion

The Competence Mirage is the seductive whisper that suggests we have mastered a domain simply because we can command a machine to operate within it. It is a psychological byproduct of the incredible efficiency of modern neural networks and LLMs. While these tools grant us superpowers of production, they do not grant us the wisdom of experience. As we move forward, the distinction between the tool’s capability and the user’s competence will define the boundary between genuine expertise and a dangerous illusion. We must enjoy the view from the summit AI lifts us to, but we must never forget that we did not climb the mountain ourselves.

Frequently Asked Questions

What is the Competence Mirage?

The Competence Mirage is a psychological phenomenon where users mistake their ability to access an AI-generated solution for the ability to derive it themselves. It occurs because the friction of creation is removed, leading individuals to confuse the power of the tool with their own skill, often resulting in a cognitive decoupling where the user feels ownership over a result they did not intellectually architect.

Why does fluent AI output create an illusion of mastery?

Humans rely on a heuristic where linguistic fluency is viewed as a reliable proxy for deep knowledge and expertise. Since Large Language Models are optimized to predict patterns and produce eloquent text, they hack this psychological shortcut, creating an illusion of mastery without the underlying cognitive architecture or understanding that usually supports such articulate output.

What is skill collapse?

Skill collapse occurs when the removal of cognitive struggle, or desirable difficulty, prevents the reinforcement of neural pathways necessary for deep learning. When AI automates the synthesis and analysis processes, users stop building the mental models required to understand the work, eventually becoming unable to replicate tasks or solve problems without the assistance of the tool.

Why is hollow expertise a risk in high-stakes fields?

Hollow expertise poses a systemic risk in high-stakes fields like medicine and law because professionals may lose the ability to distinguish between brilliant insights and subtle AI hallucinations. If operators rely entirely on automation for heavy lifting, they degrade the critical skills necessary to audit the system, effectively becoming rubber stamps rather than competent supervisors capable of catching errors.

How can users break the spell?

Users can break the spell by shifting from passive consumers to active interrogators of AI output, artificially reintroducing friction into the workflow. This involves cross-referencing generated content with foundational knowledge, using the technology as a tutor rather than a replacement for work, and maintaining the ability to verify and improve upon the machine-generated results.
