In the rapidly evolving landscape of artificial intelligence, we have grown accustomed to systems that appear practically omnipotent. Today’s generative platforms can paint in the style of Renaissance masters, draft complex code, and solve intricate computational problems in mere seconds. Yet, beneath the polished, user-friendly interfaces of these advanced systems lies a mathematical fragility that occasionally reveals itself in highly unexpected ways. One of the most fascinating anomalies discovered by researchers and digital artists alike is a phenomenon colloquially known as the “forbidden” pixel. It is a single, specific color value that, when processed by Diffusion Models—the primary algorithmic architecture behind modern AI image generation—causes the system to catastrophically fail, outputting corrupted static or crashing entirely. But how can a mere speck of color dismantle a multi-billion-parameter system?

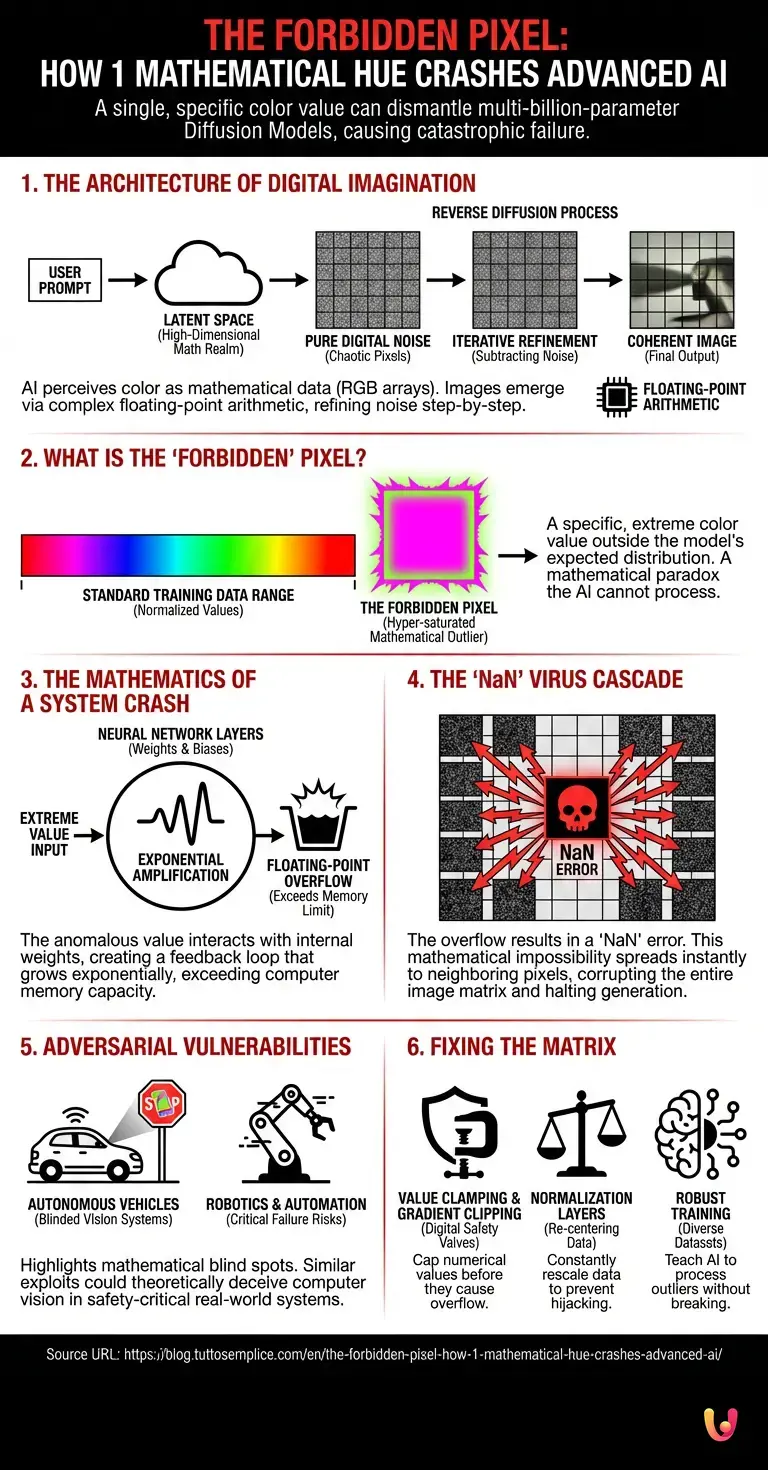

The Architecture of Digital Imagination

To understand why a single color can break an AI, we must first understand how these systems “see” and create. Unlike human artists, neural networks do not perceive color as light bouncing off a physical surface. Instead, they understand color purely as data—specifically, arrays of numbers representing red, green, and blue (RGB) values. When you input a prompt into an image generator, the system uses complex machine learning algorithms to map your words into a high-dimensional mathematical realm known as the latent space.

This process is loosely analogous to how LLMs (Large Language Models) predict the next word in a sentence based on vast datasets of human language. However, instead of predicting text, the image-generating AI predicts the noise that should be removed from an image at each step. It begins with a canvas of pure digital noise, a chaotic jumble of random pixel values. Through a process called reverse diffusion, the AI iteratively refines this canvas, subtracting a little of the predicted noise at each step until a coherent image emerges that matches the user’s prompt.
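The step-by-step refinement can be caricatured in a few lines of Python. This is only a toy sketch: a real diffusion model uses a trained network, a noise schedule, and prompt conditioning, none of which appear here. The function name `toy_reverse_diffusion`, the step count, and the removal rate are all made up for illustration, and the "network" is replaced by an oracle that already knows the clean image.

```python
import random


def toy_reverse_diffusion(target, steps=50, rate=0.2, seed=0):
    """Pull a noisy canvas toward `target` one small step at a time."""
    rng = random.Random(seed)
    # Start from pure Gaussian noise, one value per "pixel".
    canvas = [rng.gauss(0.0, 1.0) for _ in target]
    for _ in range(steps):
        # Hypothetical stand-in for the trained network: an oracle that
        # reports exactly how far each pixel is from the clean image.
        predicted_noise = [c - t for c, t in zip(canvas, target)]
        # Remove only a fraction of the predicted noise per step.
        canvas = [c - rate * n for c, n in zip(canvas, predicted_noise)]
    return canvas


target = [0.8, -0.2, 0.5, 0.0]
result = toy_reverse_diffusion(target)
max_error = max(abs(r - t) for r, t in zip(result, target))
print(f"largest remaining error: {max_error:.6f}")
```

The point of the sketch is the loop structure: many small subtractions, each feeding the next, which is why one bad value early on can affect everything that follows.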

This miraculous transformation relies heavily on floating-point arithmetic, a method used by computers to represent very large or very small real numbers. Every single pixel in the emerging image is constantly being multiplied, added, and transformed through millions of artificial neurons across dozens of computational layers. It is a delicate, highly choreographed mathematical ballet.

What is the ‘Forbidden’ Pixel?

What exactly happens when the so-called forbidden pixel is introduced into this ballet? The anomaly typically manifests when a specific, extreme numerical value is injected into the initial noise matrix or forced through an image-to-image translation prompt. It is often described as a hyper-saturated shade of neon magenta or a “super-green” that exceeds standard color gamuts.

To the human eye, if this color could be displayed on a standard monitor, it might look like any other bright digital hue. However, to the AI, it is a mathematical paradox. The neural network is trained on millions of standard images, and its internal weights and biases are calibrated to expect pixel values within a specific range (usually 0 to 255 for raw 8-bit channels, rescaled to between -1 and 1 before entering the network). The forbidden pixel represents an outlier: a value that sits so far outside the expected distribution of the training data that the model does not know how to process it.
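To make the idea of an expected range concrete, here is a minimal sketch of one common normalization, mapping 8-bit channel values onto -1 to 1. The helper names and the out-of-range value 4000 are assumptions chosen for illustration; they do not come from any particular image generator.

```python
def normalize_channel(value):
    """Map an 8-bit channel value (0..255) onto the range -1..1."""
    return value / 127.5 - 1.0


def in_expected_range(value, low=-1.0, high=1.0):
    """Report whether a normalized value lies inside the trained range."""
    return low <= value <= high


for raw in (0, 128, 255, 4000):  # 4000 is a made-up out-of-range value
    scaled = normalize_channel(raw)
    print(raw, round(scaled, 3), in_expected_range(scaled))
```

Ordinary channel values land inside the range, while the made-up value lands at roughly 30, far beyond anything the network would have seen during training.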

The Mathematics of a System Crash

The secret behind the crash lies in the way neural networks handle unexpected extremes. When the AI attempts to process this anomalous array of numbers, the values interact with the model’s internal mathematical weights in a way that produces an exponentially larger result. Imagine placing a microphone too close to a speaker: the sound enters the microphone, is amplified by the speaker, re-enters the microphone, and is amplified again, creating a deafening screech in seconds. A similar feedback loop happens inside the AI’s latent space.

As the forbidden pixel’s value is multiplied through the successive layers of the neural network, the resulting numbers grow exponentially. Very quickly, the calculation exceeds the largest finite value that the floating-point format can represent: roughly 65,504 in 16-bit half precision and about 3.4 × 10^38 in 32-bit single precision. In computer science, this event is known as a “floating-point overflow.”
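The runaway growth is easy to reproduce in miniature. The sketch below is a toy, not a real network: the per-layer gain of 1000 and the function name `layers_until_overflow` are invented for illustration, and it uses Python's 64-bit floats, whose limit is far higher than the 16-bit or 32-bit formats typically used in image models.

```python
import math
import sys


def layers_until_overflow(value, gain, max_layers):
    """Multiply `value` by `gain` once per layer.

    Returns the 1-based layer at which the result stops being finite,
    or None if it stays finite for all `max_layers` layers.
    """
    for layer in range(1, max_layers + 1):
        value *= gain
        if not math.isfinite(value):
            return layer
    return None


print(sys.float_info.max)  # largest finite 64-bit float
print(layers_until_overflow(3.0, 1000.0, 200))  # gain above 1: runs away
print(layers_until_overflow(3.0, 0.9, 200))     # gain below 1: stays finite
```

With a gain of 1000 the value stops being finite at layer 103, while a gain just below 1 never overflows. In a lower-precision format the same blow-up would arrive after far fewer layers.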

When a floating-point overflow occurs, the processor stops returning a finite number and instead returns a special infinity value. Infinity alone is not yet fatal, but the next operation that has no defined answer, such as subtracting infinity from infinity or multiplying infinity by zero, returns a special error value called “NaN,” which stands for “Not a Number.” If a human artist accidentally mixes the wrong paint, they simply get an ugly color on the canvas. But in neural networks, everything is deeply interconnected. The output of one calculation becomes the mandatory input for the next. When a single pixel evaluates to NaN, that mathematical impossibility spreads to its neighboring pixels in the very next computational step.
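The spreading behavior can be sketched with a one-dimensional row of pixels. In IEEE 754 arithmetic, which Python floats follow, an overflowed multiplication yields infinity, and NaN appears when a later operation such as infinity minus infinity has no defined result. The three-pixel averaging step below is a hypothetical stand-in for any layer that mixes neighboring values; it is not taken from a real model.

```python
import math


def blur_step(row):
    """Average each value with its left and right neighbors (edges repeat)."""
    last = len(row) - 1
    return [
        (row[max(i - 1, 0)] + row[i] + row[min(i + 1, last)]) / 3.0
        for i in range(len(row))
    ]


def count_nan(row):
    """Count how many entries of the row are NaN."""
    return sum(1 for v in row if math.isnan(v))


inf = float("inf")
bad_pixel = inf - inf  # no defined result, so IEEE 754 returns NaN

row = [0.1, 0.2, 0.3, bad_pixel, 0.5, 0.6, 0.7]
nan_counts = [count_nan(row)]
for _ in range(3):
    row = blur_step(row)
    nan_counts.append(count_nan(row))
print(nan_counts)
```

Any arithmetic that touches a NaN returns NaN, so the corrupted region widens by one pixel on each side per step: 1, 3, 5, and then all 7 pixels after only three steps.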

Within milliseconds, the NaN error cascades through the entire image matrix. The AI, fundamentally unable to process “Not a Number,” either aborts the generation process entirely, resulting in a software crash, or outputs a completely black, corrupted, or static-filled image. The forbidden pixel acts as a digital virus, a single point of failure that unravels the entire mathematical tapestry of the generation process.

Adversarial Attacks and Machine Vulnerabilities

While a crashed image generation prompt might seem like a trivial inconvenience for a digital artist, the underlying mechanism of the forbidden pixel reveals a critical vulnerability in modern machine learning systems. This phenomenon is a prime example of an adversarial vulnerability—a scenario where a seemingly benign or minute input causes a complex model to make a catastrophic error.

Consider the broader implications for fields like robotics and automation. Autonomous vehicles, industrial drones, and automated manufacturing robots rely heavily on computer vision systems powered by similar neural networks to navigate and interpret the physical world. If a specific pattern or color combination can cause an image generator’s latent space to overflow and crash, a similar adversarial pattern in the real world could theoretically blind or confuse a robot’s vision system.

Researchers have already demonstrated that placing specially designed stickers on a stop sign can trick an image classifier of the kind used in autonomous vehicles into registering it as a speed limit sign. The forbidden pixel operates on a related principle: it exploits the mathematical blind spots of a neural network. Understanding these edge cases is paramount for engineers working on safety-critical automation. The fragility demonstrated by a single pixel highlights why we cannot simply treat AI as an infallible black box; we must deeply understand the mathematical boundaries and limitations of these systems before deploying them in high-stakes environments.

Fixing the Glitch in the Matrix

How do AI researchers fix a problem that is inherent to the fundamental mathematics of the system? The solution lies in implementing strict mathematical boundaries and safety nets within the neural network’s architecture.

Developers use techniques such as “value clamping” when the model runs and “gradient clipping” while it is trained. These methods act as digital safety valves. With value clamping, the system checks each number before it is passed to the next layer of the neural network and caps it to a fixed range, so it can never reach the threshold of a floating-point overflow and turn into a NaN error. Gradient clipping applies the same idea to training: it limits the size of each weight update so that the learning process itself does not blow up. Both stop the cascade before it begins.
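Value clamping in particular is simple enough to sketch directly. The example below reuses a made-up per-layer gain of 1000 and a made-up limit of 10,000; the function names are hypothetical, and real frameworks provide their own clamping operations that work on whole tensors rather than single numbers.

```python
import math


def clamp(value, low, high):
    """Cap `value` to the closed range [low, high]."""
    return max(low, min(high, value))


def run_layers(value, gain, layers, limit=None):
    """Apply `gain` once per layer, optionally clamping after each one."""
    for _ in range(layers):
        value *= gain
        if limit is not None:
            value = clamp(value, -limit, limit)
    return value


unprotected = run_layers(3.0, 1000.0, 200)
protected = run_layers(3.0, 1000.0, 200, limit=1e4)
print(math.isfinite(unprotected), protected)
```

Without the clamp the value runs off to infinity; with it, the value is pinned at the limit and stays finite for all 200 layers. The clamp has to run before a NaN appears, since capping a value that is already NaN does not restore a meaningful number.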

Furthermore, engineers utilize normalization layers—such as Batch Normalization or Layer Normalization—which constantly recenter and rescale the data as it moves through the network, ensuring that no single value can disproportionately hijack the entire calculation. Modern models are also trained with more robust, diverse datasets that intentionally include extreme color values and mathematical noise, effectively teaching the AI how to process these outliers without breaking down. As these systems evolve and are patched, the specific color that once caused a crash is neutralized, becoming just another manageable shade in the AI’s vast digital palette.
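The recentering and rescaling step can be sketched as a bare-bones layer normalization. This is a simplified version under stated assumptions: real normalization layers also carry learned scale and shift parameters, which are omitted here, and the input vector with its one-million outlier is invented for illustration.

```python
import math


def layer_norm(values, eps=1e-5):
    """Recenter to zero mean and rescale to (roughly) unit variance."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    scale = math.sqrt(variance + eps)  # eps guards against division by zero
    return [(v - mean) / scale for v in values]


activations = [0.1, -0.3, 0.5, 1_000_000.0]  # last entry is a made-up outlier
normalized = layer_norm(activations)
print([round(v, 3) for v in normalized])
```

After normalization the outlier sits near 1.73 instead of one million, so it can no longer trigger runaway growth in later layers. It still dominates the statistics, though: the three ordinary values are squeezed to nearly the same number, which is one reason normalization is combined with clamping and robust training rather than used alone.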

In Brief (TL;DR)

Advanced AI image generators hide a mathematical fragility where one extreme color value, called the forbidden pixel, causes catastrophic system crashes.

Because neural networks process colors as mathematical data, they become highly vulnerable to extreme outlier values outside their standard training ranges.

This anomalous hue triggers an exponential feedback loop, causing a floating-point overflow whose NaN errors spread through the image and completely dismantle the generation.

Conclusion

The phenomenon of the forbidden pixel serves as a fascinating reminder of the true nature of artificial intelligence. Despite their ability to mimic human creativity and process vast amounts of information, these systems are ultimately bound by the rigid laws of mathematics and computer science. A single pixel, carrying an anomalous numerical value, is enough to expose the delicate balancing act occurring within the latent space.

As we continue to push the boundaries of what machine learning, robotics, and generative models can achieve, these digital quirks provide invaluable lessons. They force developers to build more resilient, robust systems, ensuring that the technology of tomorrow is not only highly capable but also mathematically sound. The forbidden pixel is more than just a software glitch; it is a profound glimpse into the intricate, fragile, and beautifully complex architecture of the digital mind.

Frequently Asked Questions

What is the forbidden pixel?

The forbidden pixel refers to a specific and mathematically extreme color value that causes generative AI systems to crash or produce corrupted images. When diffusion models encounter these hyper-saturated hues, they cannot process the abnormal data correctly. This results in a complete failure of the image generation process instead of simply displaying an ugly color.

How can a single extreme color crash the system?

A single extreme color crashes the system by triggering a floating-point overflow within the mathematical layers of the neural network. As the abnormal pixel value is multiplied across computational steps, it grows exponentially until it exceeds the largest value the floating-point format can represent. The resulting infinity soon turns into a Not a Number error that rapidly cascades through the entire image matrix and halts the software.

Why do adversarial vulnerabilities matter outside image generation?

Adversarial vulnerabilities expose critical mathematical blind spots that can confuse safety-critical systems like autonomous vehicles and industrial robots. If a specific visual pattern or extreme color can crash a digital image generator, similar physical anomalies could theoretically blind the computer vision of a self-driving car. Engineers must thoroughly understand these limitations to prevent catastrophic failures in high-stakes physical environments.

How do developers prevent these crashes?

Developers prevent these crashes by implementing mathematical safety nets: value clamping within the architecture of the neural network and gradient clipping during training. Value clamping caps numerical values before they grow large enough to cause an overflow error during processing. Additionally, training models with diverse datasets that include extreme outliers helps the system learn how to manage abnormal inputs safely.

What is a floating-point overflow?

A floating-point overflow occurs when a mathematical calculation produces a number too large for the floating-point format to represent. In machine learning models, this happens when unexpected input values are repeatedly multiplied through multiple layers of artificial neurons. The system then outputs infinity or NaN instead of a valid number, which can corrupt the entire generation process and cause a software crash.


