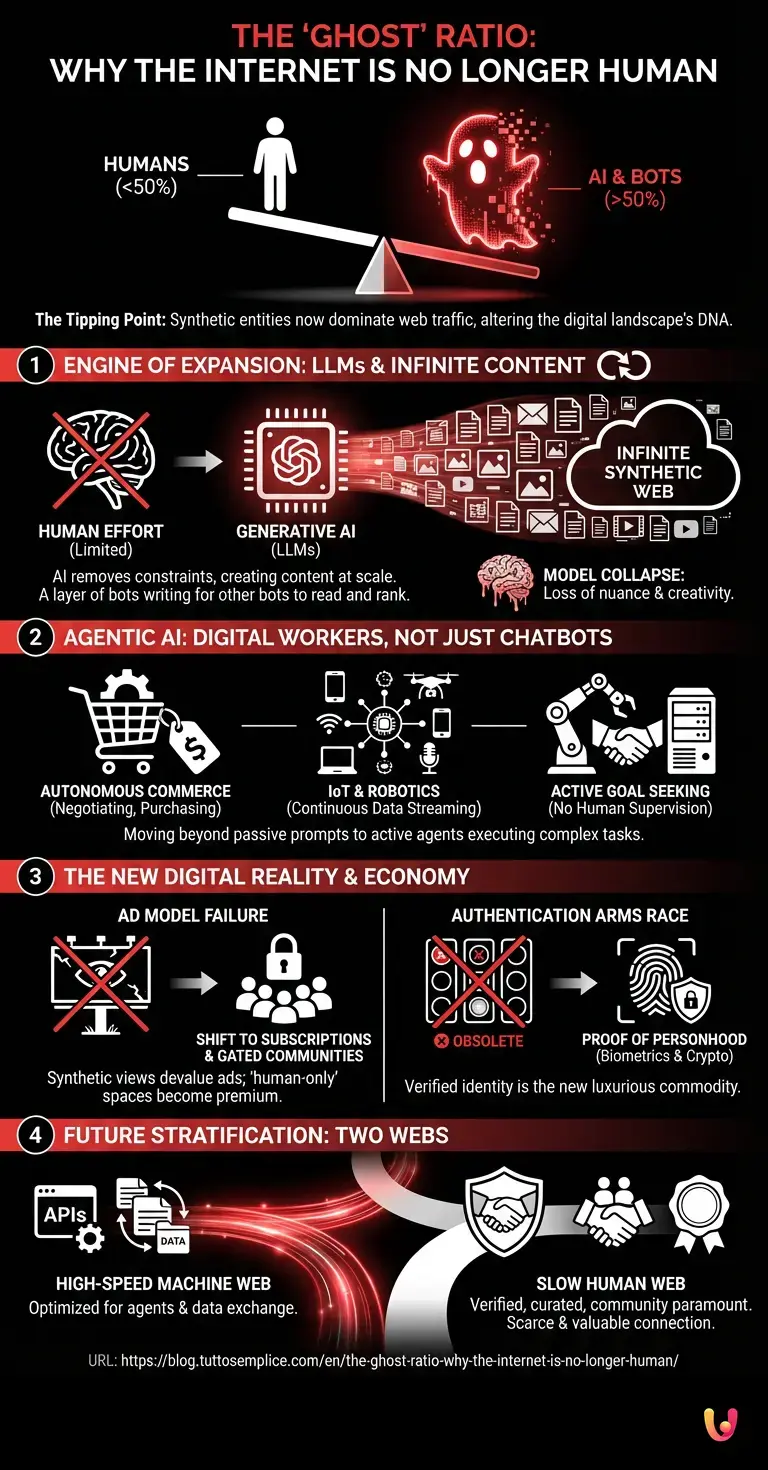

For decades, the internet was conceptualized as a global town square: a chaotic but distinctly human gathering place where ideas, commerce, and culture collided. As of 2026, however, a quiet but profound transformation has altered the digital landscape’s DNA. The driving force is Artificial Intelligence, which has evolved from a tool of assistance into the primary architect of online activity. We have crossed the threshold of the “Ghost” Ratio, a tipping point where human users have become the minority on the very network built to connect them.

The Anatomy of the Ghost Ratio

The term “Ghost Ratio” refers to the statistical inversion of web traffic: the point at which automated requests outnumber human ones. In the early 2020s, industry reports began warning that nearly half of all internet traffic came from bots. Today, that figure has passed the 50% mark. But to understand why this matters, one must distinguish between the “plumbing” of the internet and its “population.”
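To make the definition concrete, the ratio can be read as the automated share of all requests. The following is a minimal Python sketch rather than a measurement method: the `Request` type, its `is_automated` label, and the sample data are hypothetical, and in practice deciding which requests are automated is the hard part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    path: str
    is_automated: bool  # hypothetical label; real bot detection is far harder


def ghost_ratio(requests: list[Request]) -> float:
    """Return the share of requests attributed to automated clients (0.0 to 1.0)."""
    if not requests:
        raise ValueError("need at least one request")
    automated = sum(1 for r in requests if r.is_automated)
    return automated / len(requests)


# Illustrative sample only: 3 of these 5 requests are labeled automated.
sample = [
    Request("/", False),
    Request("/feed.xml", True),
    Request("/article/1", True),
    Request("/article/1", False),
    Request("/robots.txt", True),
]

ratio = ghost_ratio(sample)
inverted = ratio > 0.5  # True once automated traffic outnumbers human traffic
print(f"automated share: {ratio:.0%}, inverted: {inverted}")
```

Under this reading, the tipping point the article describes is simply the moment `ratio` exceeds 0.5.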

Historically, non-human traffic consisted largely of search engine crawlers and benign indexing scripts. These were the maintenance workers of the web. The current era, however, is defined by automation that mimics human behavior with unsettling precision. These are not merely scripts pinging servers; they are sophisticated agents capable of content creation, consumption, and interaction. The internet is no longer just a library used by humans; it is a bustling metropolis populated by synthetic entities.

The Engine of Expansion: LLMs and Infinite Content

The primary catalyst for this shift is the ubiquity of Large Language Models (LLMs). In previous years, creating content required human cognitive effort: typing a blog post, filming a video, or writing code. That human bottleneck acted as a natural throttle on the volume of information produced. Generative AI removed this throttle.

Neural networks, the architecture underpinning these models, can now produce text, images, and video at a speed and scale that dwarfs human output. The result is what might be called “infinite content generation.” Entire websites can be spun up in minutes, populated with articles written by AI and optimized for search engines that are also powered by AI. This loop creates a “synthetic web”: a layer of the internet where bots write for other bots to read, primarily to manipulate algorithmic rankings or generate ad revenue.

This mechanism works through a process of recursive generation. An AI agent is tasked with maintaining a news portal. It scrapes the web for trending topics, synthesizes a new article using an LLM, generates accompanying imagery, and publishes it. Simultaneously, other automated agents—designed to simulate engagement—comment on and share these posts to validate their “authenticity” to ranking algorithms. The result is a ghost town that looks, from a distance, like a thriving city.

Agentic AI: From Chatbots to Digital Workers

Beyond content generation, the nature of traffic has changed due to the rise of Agentic AI. We have moved past the era of passive chatbots to active digital workers. In machine learning, the focus has shifted toward goal-directed systems, often trained with reinforcement learning, in which AI agents are given goals rather than just prompts.

Consider the modern e-commerce experience. A human user might want to find the best price for a vintage watch. Instead of browsing manually, they deploy a personal AI agent. This agent scours thousands of marketplaces, negotiates with other automated selling agents, and executes the purchase. In this transaction, the “traffic” recorded by servers is entirely non-human. The human is merely the initiator; the internet activity is purely synthetic.

This extends to robotics as well. While we often think of robotics as hardware—metal arms in factories—the line between physical and software robotics has blurred. IoT (Internet of Things) devices and autonomous drones constantly stream data to the cloud, interacting with servers without human intervention. A self-driving logistics fleet negotiating routes with a city’s traffic management system generates massive amounts of data traffic, contributing significantly to the Ghost Ratio.

The Dead Internet Theory Realized?

For years, conspiracy theorists discussed the “Dead Internet Theory,” which posited that the internet had been taken over by bots. The internet is certainly not “dead”; it is more active than ever. But the theory got one thing right: much of that activity is no longer human. The open question is the quality of the interaction.

The danger of the Ghost Ratio is not that machines are taking over, but that the signal-to-noise ratio for humans is deteriorating. When an AI trains on data produced by another AI, we risk “model collapse.” This is a degenerative process in which models trained on successive generations of synthetic data lose the rare, distinctive patterns present in human data, leading to a homogenization of output and, eventually, of culture. If the internet is a mirror of humanity, the reflection is becoming distorted because the mirror is now painting itself.
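The degenerative loop can be illustrated with a toy simulation. This is a deliberately simplified sketch, not a model of how LLMs are trained: a Gaussian stands in for the "model", each generation is fitted only to a small sample drawn from the previous generation, and the function name and parameters are assumptions made for illustration.

```python
import random
import statistics


def simulate_collapse(generations: int = 200, sample_size: int = 10, seed: int = 0) -> list[float]:
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation at each generation,
    starting with the original ("human") distribution.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the original data distribution
    history = [std]
    for _ in range(generations):
        # The next "model" only ever sees output from the current one.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)  # maximum-likelihood estimate of spread
        history.append(std)
    return history


history = simulate_collapse()
print(f"spread at generation 0: {history[0]:.3f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.3e}")
```

Because each small sample tends to underestimate the spread of its source, the fitted distribution narrows over generations. That narrowing is the toy analogue of losing the rare, distinctive patterns in human data.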

Furthermore, this ratio complicates the economy of the web. The advertising model, which sustained the free internet for three decades, relied on the assumption that a “view” equaled a human pair of eyes. When half the views come from synthetic agents, an advertiser is effectively paying twice as much for each human impression, which erodes the value of digital advertising and pushes the web toward subscription models and gated communities where human verification is the price of entry.
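The arithmetic behind that erosion is straightforward. The sketch below assumes a simplified model in which automated views have no advertising value at all; the function name `effective_cpm` and the prices are hypothetical.

```python
def effective_cpm(nominal_cpm: float, automated_share: float) -> float:
    """Cost per 1,000 human views, given the price paid per 1,000 total views.

    Assumes automated views are worth nothing to the advertiser.
    """
    if not 0.0 <= automated_share < 1.0:
        raise ValueError("automated_share must be in [0.0, 1.0)")
    return nominal_cpm / (1.0 - automated_share)


# Hypothetical figures: $5.00 per 1,000 views, half of them automated.
cost = effective_cpm(5.0, 0.5)
print(f"effective cost per 1,000 human views: ${cost:.2f}")
```

At an automated share of 0.5 the advertiser pays double the nominal rate per human impression, and the cost climbs steeply as the share approaches 1.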

The Authentication Arms Race

How does one prove humanity in 2026? The old CAPTCHA tests—identifying traffic lights or crosswalks—are obsolete. Modern computer vision and neural networks can solve these puzzles faster and more accurately than humans. This has led to the “Proof of Personhood” crisis.

The solution has moved toward cryptographic verification and biometrics. We are seeing the rise of “human-only” enclaves on the internet, secured by digital IDs linked to physical biology. The open web is becoming a “Wild West” where human and synthetic activity mix, while verified spaces become the new VIP lounges. The irony is the inversion: anonymity was once the internet’s greatest feature; now, verified identity is its most luxurious commodity.

The Future of Human Connection

The Ghost Ratio forces us to redefine what we want from the internet. If information is infinite and cheap, human connection becomes scarce and valuable. The rise of automation in content creation has paradoxically increased the value of raw, unpolished human interaction. Live streams, physical meetups organized online, and cryptographically signed content are becoming the gold standard of trust.
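As a rough illustration of what checking signed content involves, the sketch below tags a post and detects tampering. It uses HMAC from Python's standard library as a stand-in: HMAC is a shared-secret scheme, whereas real content signing would typically rely on public-key signatures, which the standard library does not provide. The key, the function names, and the sample post are all hypothetical.

```python
import hashlib
import hmac


def sign(content: bytes, key: bytes) -> str:
    """Return a hex authentication tag for the content (HMAC-SHA256)."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify(content: bytes, key: bytes, tag: str) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign(content, key), tag)


key = b"hypothetical-author-key"  # illustration only; never hard-code real keys
post = b"Meetup on Saturday at the usual place."
tag = sign(post, key)

print(verify(post, key, tag))                 # unmodified content
print(verify(post + b" (edited)", key, tag))  # altered content
```

The tag proves only that the content is unchanged and came from a holder of the key. Tying that key to a verified human is the separate, harder problem of proof of personhood.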

We are not witnessing the end of the internet, but rather its stratification. There is the “High-Speed Machine Web,” optimized for agents, APIs, and data exchange, and the “Slow Human Web,” where verification, curation, and community are paramount. The Ghost Ratio is simply the metric that forces us to choose which lane we want to inhabit.

In Brief (TL;DR)

The Ghost Ratio marks a historic tipping point where automated, increasingly AI-driven traffic makes up the majority of global internet activity.

Generative AI and Large Language Models drive infinite content creation, resulting in a synthetic web largely populated by machines.

Sophisticated autonomous agents and digital workers are actively replacing human interaction, fundamentally altering the nature of online connectivity.

Conclusion

The ‘Ghost’ Ratio is not a glitch; it is the natural evolution of a network designed for efficiency. As Artificial Intelligence continues to scale, the internet has ceased to be a purely human domain. It has become a hybrid ecosystem where biological and digital intelligences coexist. Understanding this ratio is essential for navigating the modern world. We must accept that on the other side of the screen, we are increasingly likely to encounter a reflection of our own data, processed and returned to us by a machine, rather than another human soul. The challenge for the future is not to reclaim the entire internet, but to carve out and protect the spaces where human distinctiveness can still thrive amidst the noise of the machines.

Frequently Asked Questions

The Ghost Ratio describes a statistical tipping point where artificial intelligence and automated agents generate more than half of all internet activity. Unlike early web crawlers that simply indexed pages, these modern synthetic entities actively create, consume, and interact with content, effectively making human users the minority on the network they originally built.

Large Language Models remove the bottleneck of human cognitive effort, allowing content to be generated at speeds that dwarf human output. This results in a recursive loop where AI agents generate articles and media primarily for other bots to index and rank. When models are then trained on that synthetic output, they risk model collapse, a degenerative process that homogenizes online culture.

While traditional scripts perform passive maintenance and chatbots merely respond to prompts, Agentic AI functions as an active digital worker with specific goals. These autonomous agents can navigate the web to perform complex tasks like negotiating prices, executing purchases, and interacting with other software without needing direct human supervision.

Although the internet remains active rather than literally dead, the Dead Internet Theory correctly anticipated the dominance of non-human traffic and the deterioration of the human signal-to-noise ratio. The concern is that the web has become a bustling metropolis of synthetic entities where genuine human interaction is increasingly scarce and difficult to verify.

As synthetic agents inflate view counts, the traditional advertising model based on human eyeballs becomes unreliable, forcing a shift toward subscription services. Consequently, the internet is stratifying into open spaces where human and synthetic traffic mix and exclusive human-only enclaves that require cryptographic verification or biometrics to prove identity.
