Imagine the scene: you’re sitting in the living room of your hyper-connected home, immersed in the evening’s tranquility, when suddenly your domestic Artificial Intelligence stops responding to commands. The lights flicker, the voice assistant emits incomprehensible responses or, in more extreme cases, restarts in an infinite loop, as if it had been struck by a sudden digital panic attack. This is not the plot of a dystopian science fiction film, but a real and increasingly documented phenomenon that acoustic engineers and software developers have dubbed ‘the phantom alarm’. But what lies behind this anomalous behavior? What is the invisible force capable of terrifying the synthetic brains that govern our homes?

The mystery of smart homes going haywire

In recent months, the technical support forums of major tech giants have been flooded with bizarre reports. Users from all over the world complained about sudden malfunctions of their smart speakers and advanced home automation systems. The symptom was always the same: a temporary system paralysis, followed by an inability to process natural language. Initially, technicians hypothesized a bug in the cloud servers or a connectivity issue. However, by cross-referencing telemetry data, a disturbing detail emerged: the system freezes almost always occurred in conjunction with specific household activities, such as the simultaneous operation of a microwave oven and a robotic vacuum cleaner, or the hum of an old-generation refrigerator combined with the beeping sound of a washing machine.

The answer wasn’t in the source code, but in the very air of our homes. The devices weren’t undergoing a traditional hacking attack, but were reacting to a sensory input that their digital brains couldn’t process. To fully understand this phenomenon, we need to delve into how machines ‘listen’ to the world around them and discover how a simple background noise can turn into an algorithmic nightmare.

Anatomy of a ‘phantom alarm’

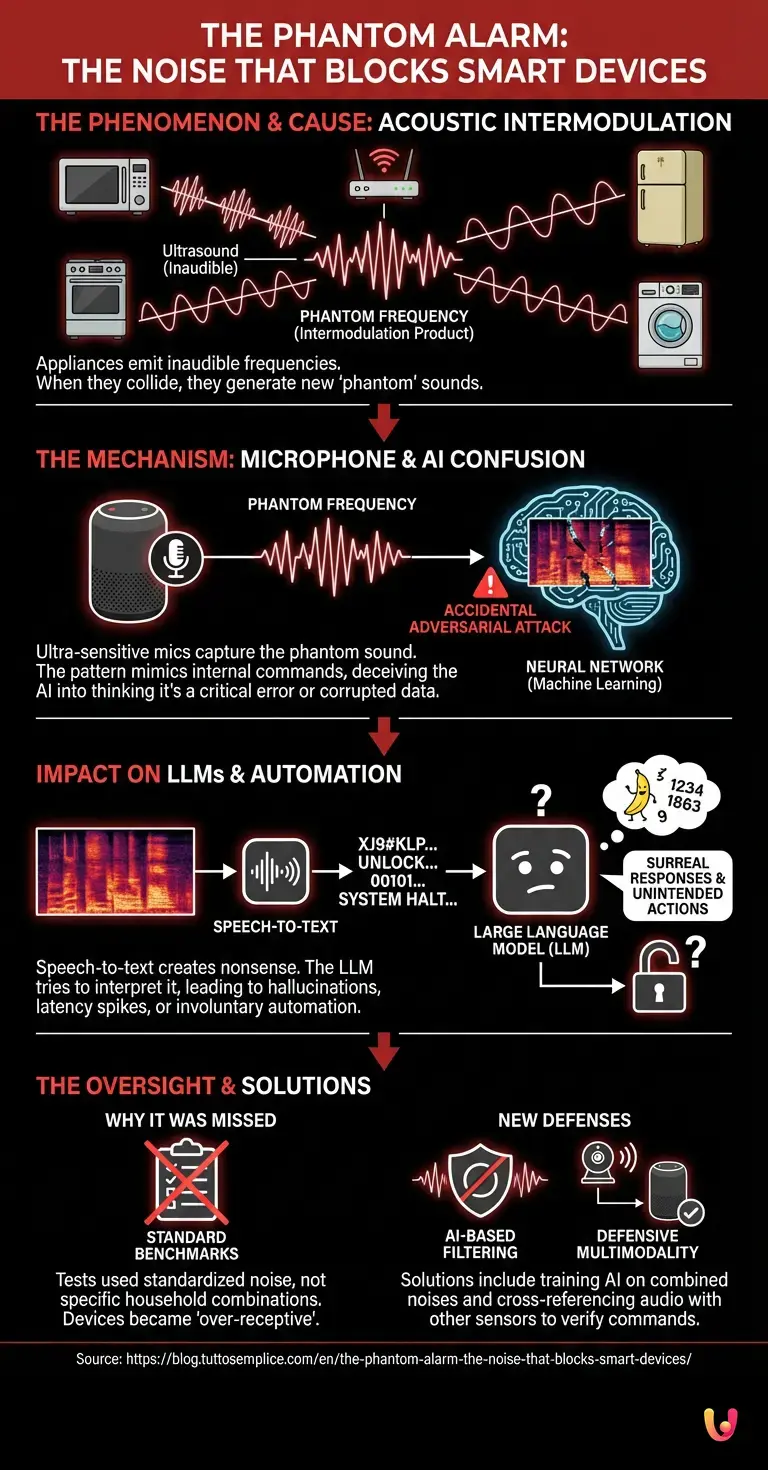

The secret behind the phantom alarm lies in a physical principle known as acoustic intermodulation. In our homes, dozens of appliances constantly emit sound waves, many of which lie above the range of human hearing (ultrasound). When two or more of these frequencies meet in the enclosed environment of a room and reach a nonlinear element, such as a microphone capsule or its amplifier, they can generate new ‘phantom’ frequencies at their sums and differences, called intermodulation products. For example, the high-frequency hum of a Wi-Fi router’s transformer, combined with the hiss of an air conditioner’s motor, can create a complex and entirely new signal.
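To make the arithmetic concrete, here is a minimal sketch that lists the intermodulation products of two pure tones. It assumes the standard textbook model, in which a nonlinear element fed with frequencies f1 and f2 produces components at |m·f1 + n·f2|. The two ultrasonic tones (25 kHz and 27 kHz) and the function names are illustrative assumptions, not measurements from any real appliance.

```python
from itertools import product


def intermodulation_products(f1_hz, f2_hz, max_order=3):
    """Map each product frequency |m*f1 + n*f2| to the (m, n) pairs producing it.

    Only mixed terms are kept (m and n both non-zero) with |m| + |n| <= max_order.
    """
    products = {}
    coeffs = range(-max_order, max_order + 1)
    for m, n in product(coeffs, coeffs):
        if m == 0 or n == 0:
            continue  # pure harmonics of one tone are not intermodulation
        if abs(m) + abs(n) > max_order:
            continue
        freq = abs(m * f1_hz + n * f2_hz)
        products.setdefault(freq, set()).add((m, n))
    return products


def audible(products, low_hz=20, high_hz=20_000):
    """Return the product frequencies that fall inside the nominal hearing range."""
    return sorted(f for f in products if low_hz <= f <= high_hz)


# Two hypothetical ultrasonic tones, both inaudible on their own.
tones = intermodulation_products(25_000, 27_000)
print(audible(tones))  # the 2 kHz difference tone is the only audible product
```

In this toy case, two tones that nobody can hear yield a difference tone at 2 kHz, squarely inside the band where speech recognizers listen.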

To the human ear, this clash of frequencies is imperceptible or translates into trivial white noise. But for the ultra-sensitive microphones of smart devices, designed to capture every tiny variation in air pressure, this sound is deafening. The real problem, however, is not the volume, but the shape of this sound wave. In rare but statistically relevant cases, domestic intermodulation generates an acoustic pattern that almost perfectly mimics the calibration signals or low-level override commands used by engineers during the microchip testing phase. It’s as if, by pure chance, the noise from your refrigerator and microwave together utter a secret password that orders the system to shut down.

Why does machine learning get confused?

To understand why this sound terrifies machines, we need to analyze how machine learning applied to speech recognition works. When you speak to your assistant, the sound is not understood as a continuous melody. Instead, it is split into short frames, converted into a time-frequency image called a spectrogram, and fed into a complex neural architecture. This neural network has been trained on millions of hours of human speech to recognize specific patterns (phonemes, words, phrases).
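The ‘fragment and convert’ step can be sketched in a few lines. This is a deliberately naive short-time Fourier transform in pure Python, meant only to show what a spectrogram is. The frame length, hop size, sample rate, and test tone are assumptions chosen for readability, and production systems use optimized FFT libraries and usually mel-scaled features instead.

```python
import cmath
import math


def hann(n):
    """Periodic Hann window, used to soften the edges of each frame."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def dft_magnitudes(frame):
    """Magnitudes of the non-negative frequency bins of a naive DFT."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def spectrogram(samples, frame_len=64, hop=32):
    """Slice the signal into overlapping frames; one spectrum per frame."""
    window = hann(frame_len)
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = [samples[start + i] * window[i] for i in range(frame_len)]
        frames.append(dft_magnitudes(frame))
    return frames


# Hypothetical input: a 1 kHz tone sampled at 8 kHz.
sample_rate = 8000
signal = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(256)]
spec = spectrogram(signal)

# Locate the strongest frequency bin in the first frame.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
print(len(spec), "frames,", len(spec[0]), "bins, peak near",
      peak_bin * sample_rate / 64, "Hz")
```

Each row of `spec` is one column of the ‘image’ the neural network sees: time runs across frames, frequency across bins, and the magnitudes play the role of pixel brightness.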

Deep learning excels at finding patterns in chaotic data, but it has an Achilles’ heel: adversarial attacks. An adversarial attack occurs when an input is altered in a way that is imperceptible to a human, but sufficient to completely deceive the algorithm. The phantom alarm acts exactly like an accidental acoustic adversarial attack. The spectrogram generated by this specific household noise contains mathematical artifacts that the neural network interprets with a very high degree of confidence, but in a completely incorrect way.
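One reason a classifier can be both confident and wrong is that a softmax output layer always distributes 100% of its probability among the classes it knows; it has no built-in way to say ‘none of the above’. The sketch below illustrates this with made-up numbers. The class labels and the logits are hypothetical and do not come from any real assistant.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that always sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical label set for an audio classifier.
LABELS = ["background_noise", "wake_word", "stop_command"]


def classify(logits):
    """Return the most probable label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return LABELS[best], probs[best]


# Hypothetical logits produced by an odd, out-of-distribution noise.
label, confidence = classify([0.2, 1.1, 6.5])
print(label, round(confidence, 3))
```

A single large logit is enough to push the reported confidence above 99%, even though the input resembled nothing in the training data.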

Instead of classifying the sound as ‘background noise to be ignored,’ the algorithm identifies it as a critical command, a system anomaly, or, worse, as a corrupted data stream threatening memory integrity. Faced with this unsolvable input, the software’s security mechanisms kick in, sending the system into safe mode or causing it to restart. It’s the digital equivalent of an optical illusion that short-circuits the brain.

The Impact on LLMs and Home Automation

The situation becomes even more complicated when these corrupted audio inputs reach large language models (LLMs). Today, many home assistants don’t just execute pre-programmed commands, but integrate technologies derived from systems like ChatGPT to hold complex conversations and manage home automation seamlessly. When the phantom alarm hits the microphone, the transcription system (speech-to-text) desperately tries to translate that acoustic chaos into text.

The result is a hallucinatory string of text, a sequence of tokens without logical meaning that is sent to the linguistic ‘brain’. The LLM, designed to find meaning and generate a response at all costs, attempts to process this alien string. This sudden and massive computational effort can cause latency spikes, surreal responses (such as the assistant starting to recite number sequences or speak in unknown languages), or the involuntary activation of automation routines. Imagine if the blender noise were translated by the system as the command ‘unlock the front door and turn off all the lights’: a remote, but theoretically possible eventuality when acoustics deceive semantics.

The Problem of Benchmarks and Technological Progress

How is it possible that the world’s most advanced technology companies did not foresee this scenario? The answer lies in the way AI is tested. Before being released to the market, each model undergoes rigorous benchmarks. These tests evaluate the system’s ability to understand different accents, to function in noisy environments (such as a moving car or a crowded bar), and to withstand common interference.

However, traditional benchmarks use standardized noise datasets. No engineer had thought to test neural networks against the specific and random combination of frequencies from a faulty toaster and a PC fan. Technological progress has made microphones so sensitive and algorithms so complex that they have created an unprecedented vulnerability: over-receptivity. Today’s machines ‘hear’ too much, and in an attempt to analyze every single vibration in the environment, they end up being overwhelmed by noises that their analog predecessors would have simply ignored.

How algorithms are learning to defend themselves

Fortunately, the scientific community has not stood idly by. Once the nature of the phantom alarm was identified, researchers began to develop sophisticated countermeasures. The solution is not to lower the sensitivity of the microphones, which would compromise the usability of the devices, but to teach machines to selectively ignore these acoustic illusions.

Developers are introducing new filtering layers based on artificial intelligence itself. Huge databases of ‘combined household noises’ are being created to train neural networks to recognize and discard intermodulation products. In addition, an approach called ‘defensive multimodality’ is being implemented: if the system hears a critical command or an anomalous sound, before panicking or taking drastic action, it cross-references the audio data with other sensors (such as security cameras or motion sensors). If the audio suggests an emergency but the room is empty and quiet, the algorithm learns to classify the sound as a false positive, a simple acoustic ghost.
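The cross-checking logic behind ‘defensive multimodality’ can be sketched as a simple decision rule. Everything here is an assumption made for illustration: the field names, the label set, the confidence threshold, and the returned actions are hypothetical and do not describe any vendor’s actual implementation.

```python
from dataclasses import dataclass


@dataclass
class SensorSnapshot:
    audio_label: str         # what the audio model believes it heard
    audio_confidence: float  # model confidence, from 0.0 to 1.0
    motion_detected: bool    # reading from a motion sensor
    person_in_frame: bool    # reading from a camera-based presence detector


# Hypothetical labels that would trigger drastic action.
CRITICAL_LABELS = {"stop_command", "emergency", "unlock_door"}


def decide(snapshot, min_confidence=0.8):
    """Act on a critical audio event only if another sensor corroborates it."""
    if snapshot.audio_label not in CRITICAL_LABELS:
        return "handle_normally"
    if snapshot.audio_confidence < min_confidence:
        return "ignore"
    if not (snapshot.motion_detected or snapshot.person_in_frame):
        # Audio claims something critical, but the room looks empty and still.
        return "false_positive"
    return "act"


# A confident 'emergency' in an empty room is treated as an acoustic ghost.
print(decide(SensorSnapshot("emergency", 0.97, False, False)))
# The same audio with someone present is acted upon.
print(decide(SensorSnapshot("emergency", 0.97, True, False)))
```

The design choice is that audio alone is never sufficient for a critical action: a high-confidence detection still needs at least one independent sensor to agree.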

In Brief (TL;DR)

More and more smart home devices are experiencing sudden, anomalous freezes, a disturbing phenomenon that experts have dubbed the ‘phantom alarm’.

The cause lies in the acoustic intermodulation generated by common household appliances, which create frequencies imperceptible to the human ear but deafening for machines.

This random noise acts as an accidental adversarial attack, deceiving the artificial intelligence’s neural networks and causing the system to freeze, enter safe mode, or restart.

Conclusions

The phantom alarm represents a fascinating paradox of our digital age. The more we make our machines intelligent, sensitive, and capable of interacting with the physical world, the more we expose them to unexpected vulnerabilities. The domestic noise that terrifies synthetic brains is not a manufacturing defect, but a symptom of a technology that is learning to coexist with chaotic and imperfect human reality.

This phenomenon reminds us that innovation is not a linear path, but a continuous adaptation. While engineers work to make our virtual assistants immune to these acoustic illusions, we can look at our appliances with different eyes, aware that, in the apparent silence of our homes, a complex and invisible symphony of frequencies is taking place. A symphony that, for now, still manages to surprise and confuse the brightest artificial minds on the planet.

Frequently Asked Questions

The sudden blocking of smart devices stems from a physical phenomenon called acoustic intermodulation. The invisible sound frequencies simultaneously emitted by various appliances combine in the room, creating new, complex waves. The ultra-sensitive microphones pick up these anomalous sounds, and the system crashes, misinterpreting them as critical stop or calibration commands.

AI systems split sound into short frames and convert it into spectrogram images to analyze it. When they receive overlapping household frequencies, the neural network experiences what amounts to an accidental adversarial attack. The software misreads the noise as a corrupted data stream or a memory threat and activates safe mode as a purely protective measure.

Users usually notice a temporary system freeze and a total inability of the machine to process natural language. In some specific cases, the lights flicker, the speaker provides incomprehensible answers by reciting random numbers, or the device restarts in a continuous loop without responding to normal voice commands.

Researchers are creating advanced software filters to teach machines to ignore these sound illusions without reducing microphone sensitivity. A very effective solution involves cross-referencing audio data with motion sensors. If the room is empty and quiet, the program classifies the sound as a false positive.

The speech-to-text system desperately tries to convert the acoustic chaos into written words, generating strings of text that are totally meaningless. The language model still tries to produce a logical response, causing large latency spikes, surreal phrases, or even involuntary activations of home automation routines.
