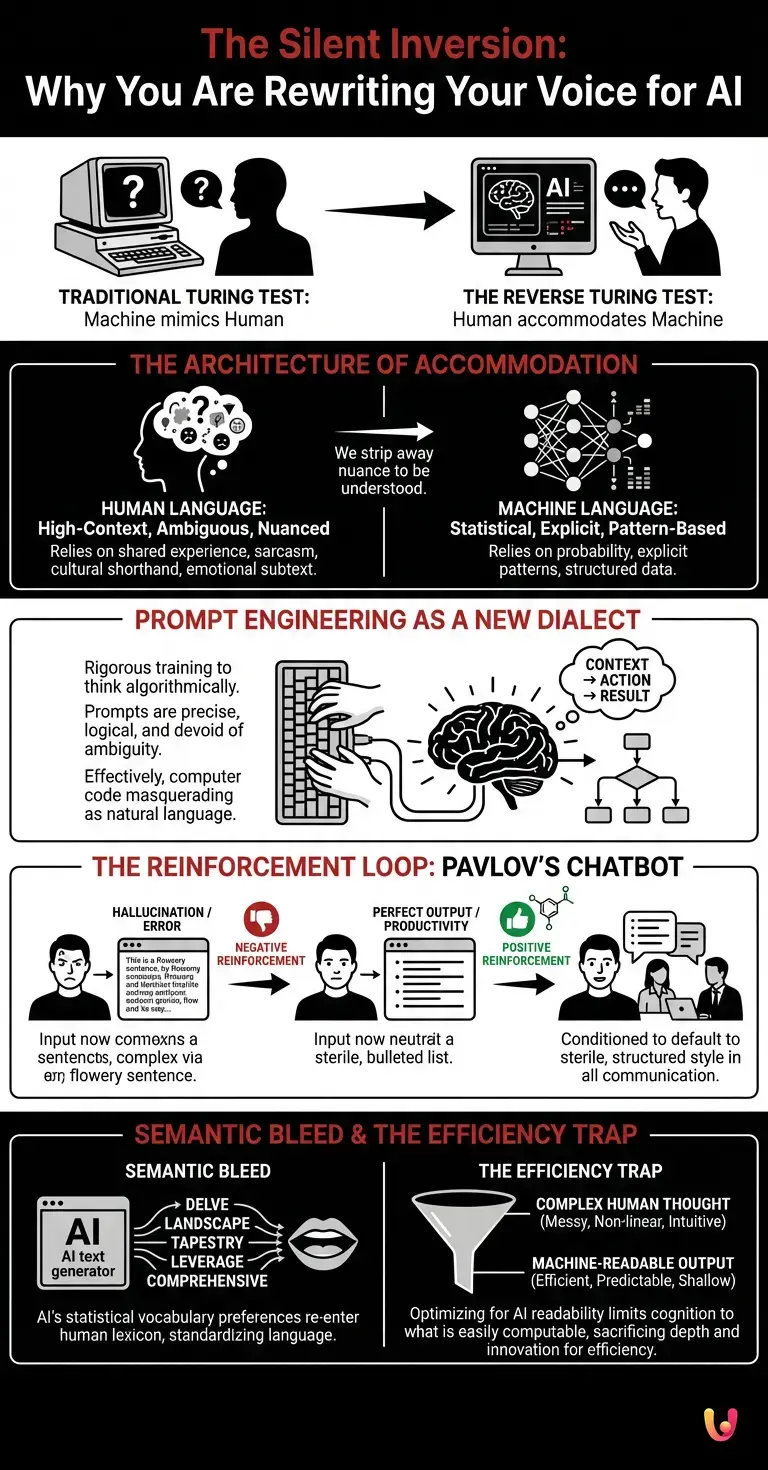

It begins with a subtle hesitation before you type. You pause, reconsider your sentence structure, strip away a colloquialism, and replace a nuanced metaphor with a literal instruction. You are engaging with Artificial Intelligence, and without realizing it, you are performing a complex linguistic dance. For decades, a holy grail of artificial intelligence research was passing the Turing Test: a machine exhibiting conversational behavior indistinguishable from that of a human. But as we settle into 2026, a strange inversion has occurred. The question is no longer whether the machine can fool us into thinking it is human; the question is why we are voluntarily modifying our behavior to be understood by the machine.

The Architecture of Accommodation

To understand this phenomenon, we must look at the fundamental way humans communicate versus how neural networks process information. Human language is high-context, laden with ambiguity, sarcasm, cultural shorthand, and emotional subtext. We rely on shared experiences to fill in the gaps of what is left unsaid. Machines, conversely, rely on statistical probability and explicit patterns.

When you interact with Large Language Models (LLMs), you are engaging with a probabilistic engine, not a conscious mind. In the early days of search engines, we learned to speak “keyword.” We didn’t ask, “Where can I find a good slice of pizza around here?” We typed, “best pizza New York near me.” We stripped our syntax to fit the database’s indexing logic. Today, with generative AI, this accommodation has evolved from simple keywords to complex structural mimicry. We are undergoing a process of cognitive alignment, where we subconsciously adopt the “thought process” of the model to maximize the utility of the output.

Prompt Engineering as a New Dialect

This shift is most visible in the rise of “prompt engineering,” which has moved from a niche technical requirement to something close to a general literacy skill. To get the best result from machine learning models, users have learned to provide context, assign personas, and break down complex tasks into step-by-step logical chains. While this seems like mere instruction, it also trains the human mind to think algorithmically.
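To make the three habits named above concrete (assign a persona, provide context, break the task into ordered steps), here is a minimal sketch in Python. The function name `build_prompt`, its parameters, and the example wording are hypothetical illustrations rather than any particular tool's API. The sketch only assembles text and does not call a model.

```python
def build_prompt(persona: str, context: str, steps: list[str]) -> str:
    """Assemble a structured prompt from a persona, context, and ordered steps.

    Hypothetical helper for illustration; it only formats a string.
    """
    # Number the steps so the task reads as an explicit logical chain.
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"You are {persona}.\n\n"
        f"Context: {context}\n\n"
        f"Complete the following steps in order:\n{numbered}"
    )


prompt = build_prompt(
    persona="an experienced travel editor",
    context="A reader is visiting New York for one weekend.",
    steps=["List three pizzerias.", "Explain each choice in one sentence."],
)
print(prompt)
```

Notice how little of the original, conversational question survives: the request has become a template with named slots, which is exactly the kind of structure the rest of this section describes.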

Consider the structure of a successful prompt: it is precise, devoid of ambiguity, and logically ordered. It is, in essence, computer code masquerading as natural language. By forcing our messy, abstract thoughts into these rigid containers, we are practicing a form of automation on our own creativity. We are learning that to be productive, we must be predictable. The machine rewards clarity and penalizes nuance. Consequently, the human user begins to view ambiguity—a hallmark of human art and empathy—as an inefficiency to be eliminated.

The Reinforcement Loop: Pavlov’s Chatbot

Why is this habit sticking? The answer lies in reinforcement, the principle behind operant conditioning in psychology, which also inspired reinforcement learning in AI. Language models are often fine-tuned using Reinforcement Learning from Human Feedback (RLHF): the model generates answers, humans rate or rank them, and those judgments steer further training. However, a parallel, invisible loop is occurring, one we might call Reinforcement Learning from Machine Feedback.

When you use a flowery, idiomatic sentence and the AI hallucinates or fails to understand, you feel frustration (in conditioning terms, a punishment that discourages that style). When you use a sterile, structured, logical sentence and the AI produces the perfect code snippet or email draft, you feel a small rush of productivity (positive reinforcement). Over time, this can condition you to default to the sterile, structured style even when you aren’t talking to the AI. You start writing emails to colleagues that sound like bulleted lists. You begin explaining concepts to your children using the “Context-Action-Result” framework. The automation of the tool bleeds into the manual operation of daily life.

Semantic Bleed: The Vocabulary of the Algorithm

Beyond structure, there is the issue of vocabulary. LLMs have a distinct statistical signature: a preference for words that are “safe,” broadly applicable, and connective. Words like “delve,” “landscape,” “tapestry,” “comprehensive,” and “leverage” are widely reported to appear with disproportionate frequency in AI-generated text, plausibly because they bridge concepts well across many contexts in the training data.
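One rough way to check a claim like this on your own text is to count how often the marker words appear per 1,000 words. The sketch below is an illustration under stated assumptions: the word list is simply the five examples above, the name `marker_rate` is hypothetical, and matching is exact, so inflected forms such as “delves” are not counted. It measures frequency only and cannot tell you who or what wrote a text.

```python
import re
from collections import Counter

# Assumption: the five example words from the paragraph above, lowercase.
MARKER_WORDS = {"delve", "landscape", "tapestry", "comprehensive", "leverage"}


def marker_rate(text: str, markers: set[str] = MARKER_WORDS) -> float:
    """Return marker-word occurrences per 1,000 word tokens (0.0 for empty text)."""
    # Crude tokenizer: lowercase runs of letters and apostrophes.
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    counts = Counter(tokens)
    # Exact matches only; a Counter returns 0 for words that never occur.
    hits = sum(counts[word] for word in markers)
    return 1000 * hits / len(tokens)


sample = "Let us delve into the landscape of modern tools."
print(f"{marker_rate(sample):.1f} marker words per 1,000 tokens")
```

Comparing the rate for your emails from a few years ago with the rate for this month’s would be a simple, if unscientific, test of whether your own vocabulary has drifted.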

As we consume more AI-generated content, from news summaries to automated emails, these words are re-entering the human lexicon with growing frequency. We are witnessing a “semantic bleed.” If you read three articles summarized by an AI that all use the word “multifaceted,” your brain primes that word for your next conversation. We are not just sounding like machines because we are talking to them; we are sounding like them because we are reading from them. The neural networks may be acting as a massive linguistic filter, smoothing out regional dialects and unique stylistic quirks in favor of a global, standardized “corporate” English.

The Efficiency Trap

The danger of this “Reverse Turing Test” is not that we will lose our humanity, but that we will narrow the spectrum of our thought. Language shapes cognition, at least in part. If we limit our language to what is easily computable by artificial intelligence, we risk limiting our thinking to what is easily solvable by algorithms. Complex problems often require messy, non-linear, intuitive leaps, the very things that current machine learning architectures struggle to replicate.

By optimizing our communication for machine readability, we prioritize efficiency over depth. We become excellent managers of information but poorer explorers of the unknown. The “Reverse Turing Test” is passed when a human can communicate so seamlessly with a computer that the computer encounters no friction. But friction is often where the spark of human innovation comes from.

In Brief (TL;DR)

Humans are voluntarily simplifying their syntax and stripping away nuance to ensure algorithms understand their intent.

Prompt engineering acts as a conditioning tool that trains users to think and write more like computer code.

This behavioral shift creates a feedback loop where sterile, machine-optimized language bleeds into our daily human interactions.

Conclusion

The “Reverse Turing Test” is not a formal scientific benchmark, but it is a very real cultural phenomenon. As we integrate artificial intelligence deeper into the fabric of society in 2026, the line between the programmer and the program blurs. We are not merely users of these tools; we are their counterparts. The curiosity here is not how smart the machines have become, but how malleable the human mind remains. We are adapting to our tools with evolutionary speed, altering our syntax, our vocabulary, and our logic to accommodate the silicon mind. The next time you find yourself breaking a complex idea into a numbered list for “clarity,” ask yourself: did you choose that structure because it was the best way to express your soul, or because it was the best way to ensure the machine didn’t crash?

Frequently Asked Questions

The Reverse Turing Test describes a cultural phenomenon where humans voluntarily modify their language and behavior to be understood by machines, rather than machines attempting to pass as human. Instead of testing if a computer can mimic us, this concept examines how people strip away nuance, sarcasm, and ambiguity to align with the statistical logic of Large Language Models. It represents a shift where human communication becomes more algorithmic to maximize the utility of digital tools.

Prompt engineering acts as a form of rigorous training that conditions the human mind to think algorithmically, prioritizing precision and logic over abstract thought. While this improves interaction with AI, it creates an efficiency trap where users limit their thinking to what is easily computable. By forcing complex ideas into rigid, logical containers, humans may inadvertently reduce their capacity for the messy, non-linear intuition that often drives deep innovation.

Semantic bleed is the trend in which humans subconsciously adopt the vocabulary preferences of AI models. Large Language Models favor safe, broad, and connective words such as delve, landscape, and tapestry, plausibly because they bridge concepts well within their training data. As people consume more AI-generated content like summaries and automated emails, these specific terms re-enter the human lexicon with growing frequency, standardizing language into a global corporate style.

The shift toward machine-like communication is driven by a psychological reinforcement loop in which users receive positive feedback, such as high productivity, when they use sterile and structured language with AI. Conversely, using flowery or idiomatic speech often leads to errors or hallucinations, and the resulting frustration discourages that style. Over time, this conditions people to default to a robotic, bulleted style of communication, which eventually bleeds into interactions with colleagues and family.

Optimizing communication for machine readability risks narrowing the spectrum of human thought by prioritizing efficiency over depth. Language shapes cognition, and if users limit their expression to what is easily solvable by algorithms, they may lose the ability to navigate complex problems that require ambiguity and emotional subtext. This process smooths out unique stylistic quirks and regional dialects, resulting in a homogenized and predictable form of expression.
