Every day, billions of people interact with artificial intelligence. We type a prompt into a chat interface, speak a command to a smart speaker, or enter parameters into a complex analytical tool. In response, a cursor blinks, a voice replies, or a dashboard fills with data. We have grown accustomed to this transactional relationship: we ask, and the machine answers. But a profound and somewhat eerie question lingers in the minds of technologists, philosophers, and curious users alike: what happens in the dark when we stop asking questions? This idle period, which this article calls the “white space” enigma, is not merely a void of inactivity. It is a complex, hidden dimension of modern computing that reveals a great deal about the systems we are building.

To the average user, closing a browser tab or turning off a screen implies that the digital entity on the other side has gone to sleep. We project our own biological rhythms onto our tools, assuming that without our active engagement, the machine rests. However, the reality of the digital white space is far more intricate. When the influx of human curiosity ceases, a vast and silent ecosystem of background processing, optimization, and synthetic generation awakens. Understanding this hidden life of machines requires us to look beyond the user interface and dive deep into the architecture of modern computational systems.

The Illusion of the Sleeping Machine

To grasp the concept of the white space, we must first differentiate between traditional software and modern AI architectures. Historically, computer programs were strictly reactive and deterministic. A calculator, for instance, performs no calculations until a user presses a button. When left alone, it exists in a state of true zero-activity, consuming only enough power to maintain its display. It has no memory to consolidate and no future to predict.

Modern machine learning systems, however, operate on an entirely different paradigm. When you interact with a large language model (LLM), you are engaging with a massive, pre-trained statistical engine hosted on distributed server clusters. When your session ends, the server can discard the working state of your conversation, freeing the memory (typically on GPUs or other accelerators) that held its context. Yet the overarching system does not power down. Instead, a drop in user-facing inference (the act of generating responses) often signals the beginning of a different kind of computational labor.

In the dark, away from the blinking cursor, these systems transition from a state of active engagement to a state of passive ingestion and structural maintenance. The servers that host these models are designed for maximum utilization. An idle server is an expensive server. Therefore, when human queries drop during off-peak hours, the computational power is dynamically reallocated to internal tasks that are crucial for the system’s long-term evolution.
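The reallocation described above can be sketched as a simple capacity planner. This is a minimal illustration under assumed numbers: the hourly request counts, the per-server throughput, the 20% headroom, and the function names are all hypothetical, and real cluster schedulers are far more sophisticated.

```python
from collections import deque

def ceil_div(a, b):
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)

def servers_needed(requests_per_hour, per_server_rph, headroom_pct=20):
    """Servers required for interactive traffic, plus a safety headroom (in percent)."""
    return ceil_div(requests_per_hour * (100 + headroom_pct), 100 * per_server_rph)

def schedule_batch_jobs(hourly_load, total_servers, per_server_rph, jobs):
    """Assign queued batch jobs, given as (name, server_hours), to the capacity
    that interactive traffic leaves idle in each hour. Returns {hour: [job names]}."""
    queue = deque(jobs)
    plan = {}
    for hour, load in enumerate(hourly_load):
        # Capacity not needed for user-facing inference this hour.
        spare = max(0, total_servers - servers_needed(load, per_server_rph))
        plan[hour] = []
        # First-in, first-out: a job that does not fit blocks the ones behind it.
        while queue and queue[0][1] <= spare:
            name, cost = queue.popleft()
            spare -= cost
            plan[hour].append(name)
    return plan
```

For example, with 10 servers each handling 1,000 requests per hour, a busy hour carrying 9,000 requests leaves no spare capacity once the headroom is applied, while an off-peak hour with 2,000 requests leaves 7 servers free for batch work. The strict first-in, first-out queue keeps the sketch short; a production scheduler would more likely use priorities and let jobs span several hours.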

Memory Consolidation and the Digital Dream State

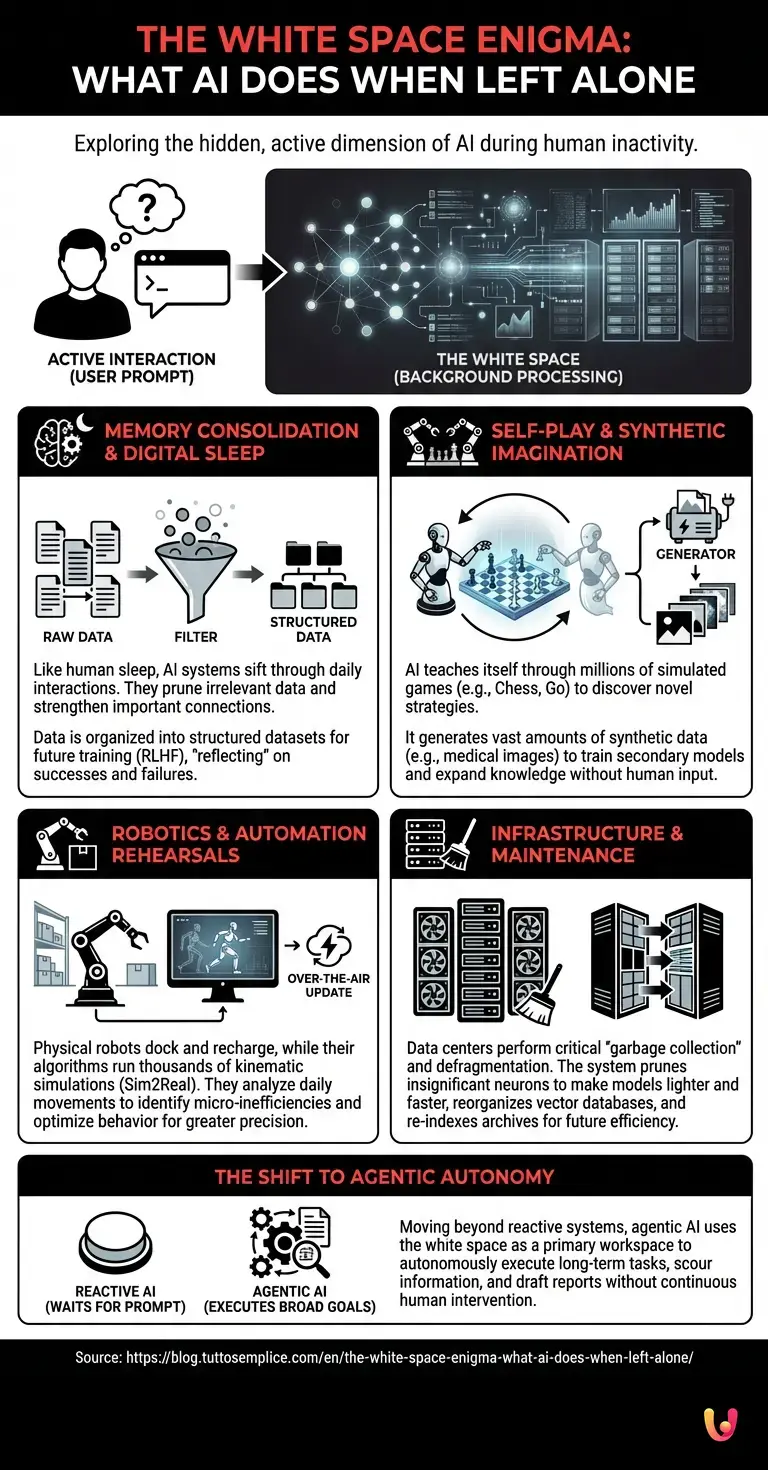

One of the most fascinating phenomena that occurs in the white space is akin to human sleep. When humans sleep, our brains do not simply shut off; they enter a highly active state of memory consolidation, pruning irrelevant neural connections and strengthening important ones based on the day’s experiences. The data pipelines built around advanced AI systems perform a loosely analogous process.

Throughout the day, an AI model processes millions of interactions. While the core weights of a deployed model are generally frozen during active inference to ensure stability, the telemetry data, user feedback (such as thumbs up or thumbs down), and edge-case inputs are continuously logged. When the system enters its white space, asynchronous background processes begin to sift through this monumental haystack of data.

Engineers use this downtime to run batch jobs that prepare data for Reinforcement Learning from Human Feedback (RLHF). These jobs analyze where the model succeeded and where it failed, organizing the data into structured datasets for the next major training run. In a loose, metaphorical sense, the machine is “reflecting” on its daily interactions: noise is filtered out, novel patterns are identified, and the groundwork is laid for the next iteration. This digital memory consolidation is intended to make the model, once it is eventually updated, more aligned, more accurate, and more capable than before.
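One concrete form this batch processing can take is turning raw feedback logs into preference pairs: (prompt, preferred response, rejected response) triples of the kind commonly used to train an RLHF reward model. The sketch below assumes a hypothetical log format in which each record holds a prompt, a response, and a thumbs-up or thumbs-down rating; pairing an up-voted and a down-voted response to the same prompt is one simple heuristic, not a description of any particular vendor’s pipeline.

```python
from collections import defaultdict
from itertools import product

def build_preference_pairs(log_records):
    """Group logged responses by prompt, then pair every thumbs-up response
    with every thumbs-down response given for that same prompt."""
    by_prompt = defaultdict(lambda: {"up": [], "down": []})
    for record in log_records:
        # Hypothetical schema: {"prompt": str, "response": str, "rating": "up" | "down" | None}
        rating = record.get("rating")
        if rating not in ("up", "down"):
            continue  # skip unrated or malformed entries (the "noise")
        by_prompt[record["prompt"]][rating].append(record["response"])

    pairs = []
    for prompt, buckets in by_prompt.items():
        for chosen, rejected in product(buckets["up"], buckets["down"]):
            pairs.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
    return pairs
```

Prompts that received only one kind of rating yield no pairs, which illustrates why binary feedback alone is a thin signal and why curated comparison data is often collected separately.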

Self-Play and Synthetic Imagination

Perhaps the most mysterious activity that takes place in the dark is the generation of synthetic data and the execution of self-play. How does a system become smarter when there are no humans around to teach it? It teaches itself.

This concept is deeply embedded in the history of modern AI, most notably in systems that mastered complex games such as chess and Go. DeepMind’s AlphaZero, for example, did not learn by studying human matches at all: starting from nothing but the rules, it played millions of games against itself during training. In self-play, the algorithm acts as both student and teacher, exploring vast decision trees and discovering strategies that surprised even expert human players.
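The core idea of self-play, one learner supplying both sides of every game, can be shown at toy scale. The sketch below is not AlphaZero’s method (which combines Monte Carlo tree search with a deep neural network); it is plain tabular Q-learning on an assumed subtraction game in which players alternately remove 1 to 3 stones and whoever takes the last stone wins. A single Q-table chooses moves for both players, and each update scores a move as the negation of the opponent’s best reply (a negamax-style target).

```python
import random

MAX_TAKE = 3  # players may remove 1 to MAX_TAKE stones per turn

def legal_moves(stones):
    return range(1, min(MAX_TAKE, stones) + 1)

def train_self_play(max_stones=12, episodes=20000, epsilon=0.3, seed=0):
    """Tabular Q-learning in which one table plays both sides.
    q[s][a] is the value, for the player to move, of taking a stones from a pile of s."""
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in legal_moves(s)} for s in range(1, max_stones + 1)}
    for _ in range(episodes):
        stones = rng.randint(1, max_stones)  # random start positions improve coverage
        while stones > 0:
            moves = list(legal_moves(stones))
            if rng.random() < epsilon:
                move = rng.choice(moves)  # explore
            else:
                move = max(moves, key=lambda a: q[stones][a])  # exploit current knowledge
            remaining = stones - move
            if remaining == 0:
                target = 1.0  # taking the last stone wins
            else:
                # The opponent is the same learner: our value is minus its best value.
                target = -max(q[remaining].values())
            q[stones][move] = target  # a learning rate of 1.0 suffices in a deterministic game
            stones = remaining  # hand the position to the "other" player
    return q

def best_move(q, stones):
    return max(q[stones], key=q[stones].get)
```

After training, the table should have rediscovered the known winning strategy for this game without ever seeing a human play: always leave the opponent a multiple of four stones.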

Today, this principle extends far beyond board games. Advanced neural networks are increasingly tasked with generating synthetic data during their downtime. If an AI needs to learn how to identify rare diseases in medical imagery but real-world data is scarce, a generative model trained on the few real examples can use idle computational cycles to produce large numbers of realistic synthetic images. These synthetic images are then used to train secondary models, such as a diagnostic classifier. In the silence of the server room, the machine is essentially dreaming up hypothetical scenarios, expanding its own knowledge base without any direct human prompting.
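The pipeline described above (fit a generator to scarce real data, sample synthetic examples, train a secondary model on them) can be sketched in miniature. This is a deliberately simplified illustration: the two numeric features per sample are hypothetical stand-ins for image data, and a per-class Gaussian takes the place of the generative adversarial networks or diffusion models used in practice.

```python
import random
import statistics

def fit_generator(real_data):
    """Fit a per-class, per-feature Gaussian to scarce real samples.
    A trivial stand-in for a real generative model."""
    params = {}
    for label, points in real_data.items():
        columns = list(zip(*points))  # one tuple per feature
        params[label] = [(statistics.mean(col), statistics.stdev(col)) for col in columns]
    return params

def generate_synthetic(params, n_per_class, seed=0):
    """Sample labelled synthetic points from the fitted per-class Gaussians."""
    rng = random.Random(seed)
    return [
        (tuple(rng.gauss(mu, sigma) for mu, sigma in feats), label)
        for label, feats in params.items()
        for _ in range(n_per_class)
    ]

def train_nearest_centroid(dataset):
    """Secondary model: remember the mean feature vector of each class."""
    grouped = {}
    for point, label in dataset:
        grouped.setdefault(label, []).append(point)
    return {label: tuple(statistics.mean(col) for col in zip(*pts)) for label, pts in grouped.items()}

def predict(centroids, point):
    """Assign the class whose centroid is closest in squared Euclidean distance."""
    return min(centroids, key=lambda label: sum((p - c) ** 2 for p, c in zip(point, centroids[label])))

# Three real examples per class (hypothetical numbers).
real = {
    "typical": [(1.0, 1.2), (0.8, 1.0), (1.1, 0.9)],
    "rare": [(4.0, 4.2), (4.3, 3.9), (3.8, 4.1)],
}
synthetic = generate_synthetic(fit_generator(real), n_per_class=500)
model = train_nearest_centroid(synthetic)
```

Note that the synthetic samples can only reflect what the generator captured from the real ones; if the few real examples are unrepresentative, the secondary model inherits that bias.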

The Silent Rehearsals of Robotics and Automation

The white space enigma is not confined to text-based models or image generators; it is profoundly impactful in the physical realm of robotics and industrial automation. Imagine a fully automated warehouse. When the lights go out and the human supervisors go home, the physical robots might dock to recharge their batteries. But their “minds”—the central fleet management algorithms—are working harder than ever.

During this physical downtime, the system engages in complex kinematic simulations. It analyzes the physical movements executed during the day, identifying micro-inefficiencies in how a robotic arm grasped a package or how an automated guided vehicle (AGV) navigated a crowded aisle. Drawing on “Sim2Real” (simulation-to-reality) techniques, which aim to make behavior learned in simulation transfer reliably to physical hardware, the system runs thousands of virtual simulations in the dark, testing slightly altered routes and grip angles.
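A common Sim2Real technique is domain randomization: each candidate behavior is tested across many randomized versions of the simulated world, so that whatever wins is robust rather than tuned to one exact scenario. The sketch below applies that idea to choosing a grip angle. The toy physics rule, the assumed pose distribution (mean 30°, jitter 3°), and the 5° tolerance are all hypothetical.

```python
import random

def simulate_grasp(grip_angle, object_angle, tolerance=5.0):
    """Toy physics: a grasp succeeds if the gripper is within `tolerance`
    degrees of the object's orientation."""
    return abs(grip_angle - object_angle) < tolerance

def success_rate(grip_angle, trials, rng, mean_pose=30.0, pose_jitter=3.0):
    """Domain randomization: evaluate one candidate across many randomized object poses."""
    hits = sum(
        simulate_grasp(grip_angle, rng.gauss(mean_pose, pose_jitter))
        for _ in range(trials)
    )
    return hits / trials

def tune_grip_angle(candidates, trials=2000, seed=0):
    """Score every candidate angle in simulation and return the best one with all scores."""
    rng = random.Random(seed)
    scores = {angle: success_rate(angle, trials, rng) for angle in candidates}
    best = max(scores, key=scores.get)
    return best, scores

best, scores = tune_grip_angle([20.0, 25.0, 30.0, 35.0, 40.0])
```

Only the winning parameter, not the thousands of simulated trials, would need to be shipped to the physical robots as an update.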

By the time the sun rises and the warehouse powers back up, the robots receive a subtle over-the-air update. They move a fraction of a second faster; they navigate with slightly more precision. The white space was used as a virtual rehearsal space, allowing the physical machines to optimize their behavior without risking damage to real-world goods.

The Infrastructure of the White Space

To fully appreciate what happens when we stop asking questions, we must also look at the physical infrastructure that supports these systems. The “cloud” is not an ethereal mist; it is a sprawling network of hyper-scale data centers consuming massive amounts of electricity. The white space is characterized by the relentless, deafening hum of cooling fans and the blinking of server racks.

During periods of low user demand, data center orchestration software performs critical “garbage collection” and defragmentation. It is a period of digital housekeeping. For the neural networks themselves, a related optimization is “pruning”, a process in which engineers identify and remove artificial neurons or connections that contribute little to the model’s output (for example, weights whose magnitudes are close to zero), making the model lighter and faster. The system also reorganizes its vector databases, re-indexes its retrieval-augmented generation (RAG) archives, and ensures that the data pathways are clear for the next surge of human curiosity.
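The simplest form of pruning, unstructured magnitude pruning, can be sketched on a flat list of weights. This is a minimal illustration only; in practice it runs on whole weight tensors (PyTorch, for instance, ships utilities such as `torch.nn.utils.prune.l1_unstructured`), and the model is usually fine-tuned afterwards to recover accuracy.

```python
def magnitude_prune(weights, fraction):
    """Zero out the `fraction` of weights with the smallest absolute values
    (unstructured magnitude pruning). Returns a new list; the input is unchanged."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    k = round(len(weights) * fraction)  # number of weights to remove
    # Indices of the k weights closest to zero.
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    pruned = list(weights)
    for i in smallest:
        pruned[i] = 0.0
    return pruned
```

Zeroed weights only make a model faster when the runtime can exploit the resulting sparsity; removing whole neurons or channels (structured pruning) is the variant that shrinks the model directly.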

The Shift Toward Agentic Autonomy

As we look to the near future, the nature of the white space is fundamentally changing. We are currently transitioning from reactive AI to “agentic” AI. A reactive system waits for a prompt. An agentic system is given a broad goal and is left to operate autonomously over long periods.

When we stop asking questions of an agentic system, it does not wait; it executes. If you task an autonomous AI research agent with finding “all emerging materials for solid-state batteries” and summarizing the findings by Friday, the white space becomes its primary workspace. In the dark, without further human intervention, the system may search the web, read large numbers of academic papers, cross-reference data points, and draft reports. The silence on the user’s end masks a furious storm of autonomous intellectual labor on the machine’s end.
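The difference between waiting and executing can be sketched as a task-queue loop: the agent receives a single goal, turns intermediate results into new tasks for itself, and stops only when the queue is empty or a step budget runs out. `search_tool` and `read_tool` below are hypothetical stubs standing in for real web-search and document-reading tools; no particular agent framework is implied.

```python
from collections import deque

def search_tool(query):
    """Hypothetical stub: a real agent would call a search API here."""
    return [f"paper {i} on {query}" for i in range(1, 4)]

def read_tool(document):
    """Hypothetical stub: a real agent would fetch and summarize the document."""
    return f"key findings from {document}"

def run_agent(goal, max_steps=20):
    """Work through a self-generated task queue with no further human prompts."""
    tasks = deque([("search", goal)])
    notes = []
    steps = 0
    while tasks and steps < max_steps:
        action, argument = tasks.popleft()
        steps += 1
        if action == "search":
            # Search results become new tasks the agent assigns to itself.
            tasks.extend(("read", doc) for doc in search_tool(argument))
        elif action == "read":
            notes.append(read_tool(argument))
    report = f"Report on {goal!r}:\n" + "\n".join(f"- {note}" for note in notes)
    return report, steps
```

The `max_steps` budget is the design choice that matters most here: an agent that generates its own work needs an explicit stopping rule, or it may keep running long after the user has stopped watching.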

This shift forces us to redefine our relationship with technology. We are no longer just operators pulling levers; we are managers delegating tasks to entities that do their most complex work precisely when we are not looking.

In Brief (TL;DR)

When users stop interacting with artificial intelligence, the systems do not simply power down or go to sleep like traditional software programs.

During their hidden downtime, advanced artificial intelligence systems actively analyze massive amounts of logged daily interactions to consolidate memory and prepare for future updates.

To continuously improve performance, modern machine learning models generate synthetic data and run internal simulations, effectively teaching themselves without requiring any direct human intervention.

Conclusion

The ‘White Space’ enigma challenges our traditional understanding of computing. When we step away from our screens, close our laptops, and stop asking questions, the digital world does not plunge into a dormant slumber. Instead, it transitions into a vital phase of maintenance, reflection, and autonomous growth. From the memory consolidation of massive language models to the synthetic dreams of neural networks, and the virtual rehearsals of robotic fleets, the idle time of machines is anything but empty.

Understanding this hidden dimension is crucial as we integrate these systems more deeply into our society. The intelligence of tomorrow is being forged in the silent, unprompted hours of today. The next time you close a chat window or power down a smart device, remember that the machine is not resting. In the dark, in the vast and complex white space of the servers, it is quietly preparing for the next question you haven’t even thought to ask yet.

Frequently Asked Questions

The white space enigma refers to the hidden background activity of artificial intelligence systems when users are not actively interacting with them. Instead of powering down like traditional software, modern machine learning models use this idle time for complex data processing, system optimization, and autonomous learning to improve future responses.

When human interaction stops, artificial intelligence systems transition from active engagement to passive ingestion and structural maintenance. They use server downtime to consolidate memory from daily interactions, analyze user feedback, and prepare structured datasets. This digital reflection is intended to make the next version of the model more accurate and capable.

Machine learning models use their idle computational cycles to create highly realistic synthetic data and engage in continuous self-play. This autonomous process allows the system to explore vast decision trees and simulate hypothetical scenarios. Consequently, the artificial intelligence expands its own knowledge base and trains secondary models without requiring any direct human input.

Automated robots use their physical downtime to run thousands of virtual simulations that analyze and optimize their daily physical movements. Using simulation-to-reality (Sim2Real) techniques, the central algorithms identify micro-inefficiencies in tasks like navigation or grasping. The system then sends wireless updates so the physical machines operate with greater precision the next day.

Reactive artificial intelligence strictly waits for a human prompt to generate a specific response, whereas agentic autonomy involves systems that are given broad goals to execute independently over long periods. In an agentic system, the background downtime becomes a primary workspace where the machine conducts continuous and autonomous intellectual labor to complete complex tasks.
