Introduction to Vitruvian-1 Results

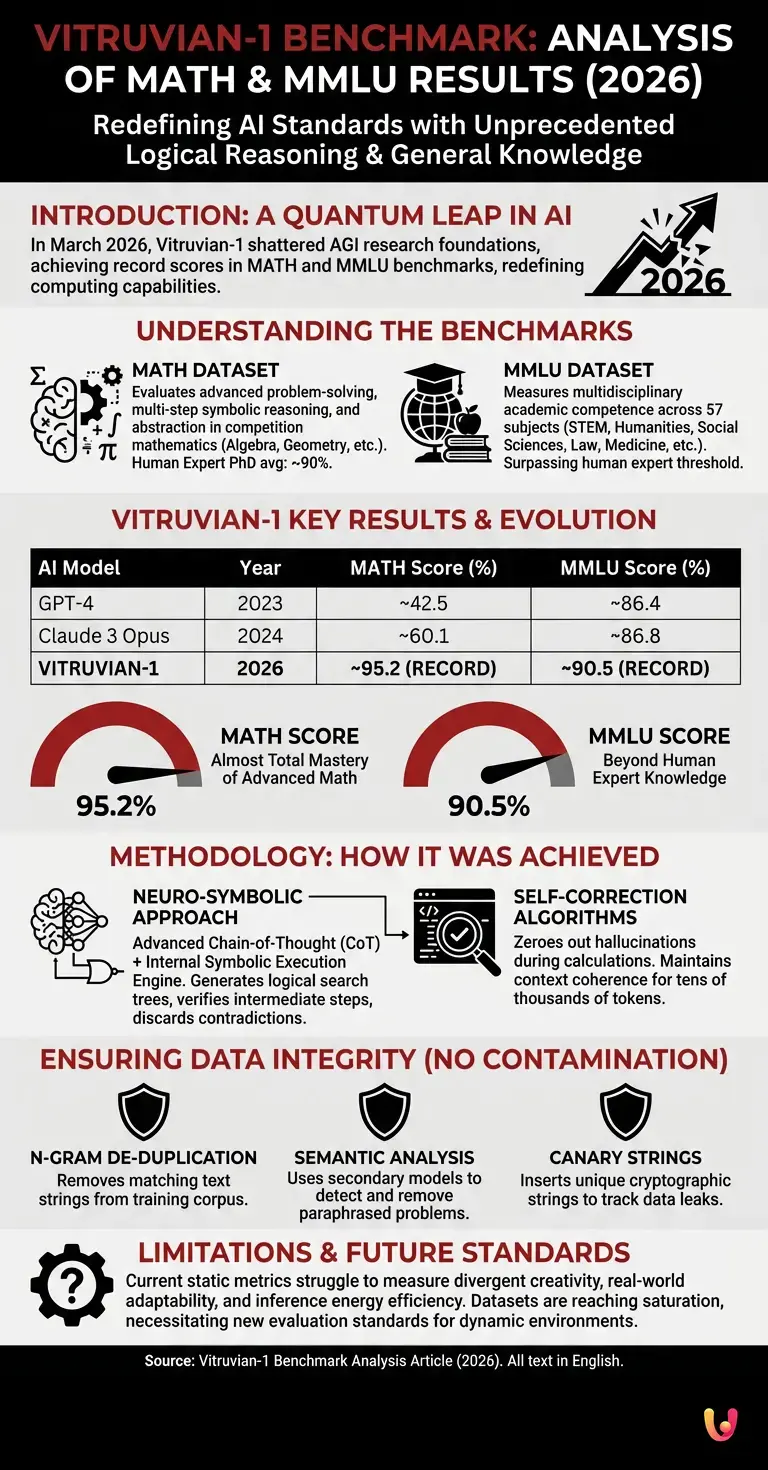

The results of the Vitruvian-1 benchmark redefine artificial intelligence standards in 2026. With a MATH score close to 95 and an MMLU score of 90, the Vitruvian-1 model demonstrates logical reasoning capabilities and general knowledge unprecedented in today’s computing landscape.

In March 2026, the international scientific community witnessed an epochal turning point. The announcement of the new evaluation scores shook the foundations of Artificial General Intelligence (AGI) research. Until a few years ago, surpassing the 80% threshold in the MATH dataset was considered a decade-long goal due to the intrinsic complexity of the symbolic reasoning required. Today, by deeply analyzing the architecture and results, we can understand how this quantum leap was made possible through new training and inference techniques.

Prerequisites for Understanding Evaluation Tests

To correctly interpret the Vitruvian-1 benchmark, it is fundamental to know the standardized metrics. The MATH test evaluates advanced problem solving, while the MMLU measures multidisciplinary academic competence, providing a complete picture of the model’s real cognitive capabilities.

Before delving into the technical details of the architecture, it is necessary to establish a common vocabulary. Large Language Models (LLMs) are evaluated on rigorous datasets that work like standardized exams. Without a clear understanding of exactly what these tests measure, the raw numbers lose meaning. The evaluation of modern artificial intelligence rests on two pillars: the capacity for abstract reasoning and the breadth of factual knowledge.

The MATH Dataset Explained

Analyzing the Vitruvian-1 benchmark, the MATH dataset represents the most arduous hurdle. Composed of competition mathematics problems, it requires multi-step reasoning and abstraction, elements in which the new model excels, widely surpassing previous generation architectures.

The MATH dataset consists of 12,500 competition mathematics problems drawn from seven subject areas, including algebra, geometry, number theory, and counting and probability. Unlike basic arithmetic calculations, these problems require multi-step derivations and the application of problem-solving heuristics, and each one has a single final answer that can be checked exactly. According to the dataset’s authors, a three-time IMO gold medalist scored 90% on a sample of the problems, while a computer science PhD student scored about 40%, so a score near 90 corresponds to elite competition-level performance rather than to that of an average expert.
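MATH reference solutions mark the final answer with `\boxed{...}`, and scoring is usually an exact match on that answer. The sketch below shows one way such a check could look. The function names `extract_boxed` and `is_correct` and the whitespace-only normalization are illustrative assumptions, not the evaluation code used for Vitruvian-1.

```python
def extract_boxed(solution: str):
    """Return the contents of the last \\boxed{...} in a solution, or None.

    Braces are matched by depth so that nested LaTeX such as
    \\boxed{\\frac{1}{2}} is returned whole.
    """
    marker = "\\boxed{"
    start = solution.rfind(marker)
    if start == -1:
        return None
    depth = 1
    chars = []
    for ch in solution[start + len(marker):]:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                return "".join(chars)
        chars.append(ch)
    return None  # unbalanced braces: no usable answer


def is_correct(model_output: str, reference_solution: str) -> bool:
    """Exact match on the boxed answers after removing whitespace (assumed rule)."""
    predicted = extract_boxed(model_output)
    expected = extract_boxed(reference_solution)
    if predicted is None or expected is None:
        return False
    return "".join(predicted.split()) == "".join(expected.split())
```

Real evaluation harnesses typically normalize further (for example, treating equivalent fractions or units as equal), so a strict string comparison like this one tends to undercount correct answers.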

The MMLU Dataset and General Knowledge

In the context of the Vitruvian-1 benchmark, the MMLU (Massive Multitask Language Understanding) tests the model on 57 different subjects. Reaching level 90 means surpassing the human expert threshold in domains ranging from medicine to law, up to quantum physics.

The MMLU is designed to measure world knowledge and problem-solving ability in multiple-choice scenarios. The questions cover humanities, social sciences, STEM, and specific professions. The difficulty lies in the vastness of the domain: a model must be able to diagnose a rare disease in one prompt and, in the next, analyze a 19th-century international law treaty.
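Because every MMLU question is multiple choice, scoring reduces to counting matching answer letters. A minimal sketch follows, assuming each record is a hypothetical `(subject, predicted_letter, correct_letter)` tuple. It reports both the overall accuracy and the unweighted average across subjects, since the two can differ when subjects have different numbers of questions.

```python
from collections import defaultdict


def score_multiple_choice(records):
    """Compute per-subject, micro-averaged, and macro-averaged accuracy.

    `records` is an iterable of (subject, predicted, answer) tuples;
    this record layout is an assumption made for illustration.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for subject, predicted, answer in records:
        total[subject] += 1
        correct[subject] += int(predicted == answer)
    if not total:
        raise ValueError("no records to score")

    per_subject = {s: correct[s] / total[s] for s in total}
    # Micro average: every question counts equally.
    micro = sum(correct.values()) / sum(total.values())
    # Macro average: every subject counts equally.
    macro = sum(per_subject.values()) / len(per_subject)
    return per_subject, micro, macro
```

When comparing headline MMLU numbers, it helps to check which of the two averages a report uses, along with the number of few-shot examples in the prompt.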

In-depth Analysis of Vitruvian-1 Benchmarks

Detailed analysis of the Vitruvian-1 benchmark reveals an architecture optimized for complex inference. The data suggest that the performance leap derives not solely from computing power but also from new self-correction algorithms that catch and remove hallucinated steps during calculations.

To understand the scope of these results, it is useful to compare current performance with models that dominated the market just a few years ago. The following table illustrates the evolution of key metrics.

| AI Model | Release Year | MATH Score (%) | MMLU Score (%) |
|---|---|---|---|
| GPT-4 | 2023 | ~42.5 (Zero-shot) | ~86.4 |
| Claude 3 Opus | 2024 | ~60.1 | ~86.8 |
| Vitruvian-1 | 2026 | ~95.2 | ~90.5 |

MATH Score at 95: A Quantum Leap

Reaching level 95 in the Vitruvian-1 benchmark for the MATH test indicates an almost total mastery of advanced algebra and geometry. According to official documentation, the model uses an integrated formal verification system to validate every step.

This result was achieved by implementing an advanced variant of Chain-of-Thought (CoT), combined with an internal symbolic execution engine. When the model tackles an equation, it does not limit itself to predicting the next token based on statistical probability. Instead, it generates a logical search tree, explores different resolution paths, mathematically verifies intermediate results, and discards branches that lead to logical contradictions. This neuro-symbolic approach is the main advance of this AI generation.
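The general idea of exploring a search tree, checking each intermediate result, and abandoning invalid branches can be shown on a toy problem. The sketch below is not Vitruvian-1’s mechanism, which the source does not specify. It is a plain depth-first search that combines numbers with arithmetic steps to reach a target, and the `solve` function, its rules (positive integers only, exact division only), and the step format are all assumptions chosen for illustration.

```python
from itertools import combinations


def solve(numbers, target):
    """Depth-first search for arithmetic steps that produce `target`.

    Returns a list of step strings, or None if no branch succeeds.
    """

    def search(values, steps):
        if target in values:
            return steps
        for i, j in combinations(range(len(values)), 2):
            hi, lo = max(values[i], values[j]), min(values[i], values[j])
            rest = [v for k, v in enumerate(values) if k not in (i, j)]
            candidates = [
                (hi + lo, f"{hi} + {lo}"),
                (hi * lo, f"{hi} * {lo}"),
                (hi - lo, f"{hi} - {lo}"),
            ]
            # Only allow division when it is exact.
            if lo != 0 and hi % lo == 0:
                candidates.append((hi // lo, f"{hi} / {lo}"))
            for result, text in candidates:
                # Verification step: prune branches whose intermediate
                # result breaks the rules (must be a positive integer).
                if result <= 0:
                    continue
                found = search(rest + [result], steps + [f"{text} = {result}"])
                if found is not None:
                    return found
        return None  # every branch from this node failed

    return search(list(numbers), [])
```

Calling `solve([2, 3, 7], 13)` returns a list of steps whose last entry ends in `= 13`, while `solve([2, 4], 7)` returns `None` because no valid branch reaches the target.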

MMLU Score at 90: Beyond the Human Expert

The value of 90 recorded in the Vitruvian-1 benchmark on the MMLU certifies an encyclopedia of perfectly interconnected knowledge. Industry data indicates that the model does not merely retrieve information but synthesizes it by applying high-level deductive logic.

Breaking the 90% barrier in MMLU requires extremely efficient knowledge compression. The model demonstrates that it has overcome the problem of catastrophic forgetting, managing to maintain specialized skills in narrow niches without compromising generalization. The ability to connect molecular biology concepts with materials engineering principles in zero-shot mode is what distinguishes this architecture from its predecessors.

Methodology and Prevention of Data Contamination

A crucial aspect of the Vitruvian-1 benchmark is the guarantee of no data contamination. Researchers implemented rigorous cryptographic filters to ensure that questions from the MATH and MMLU tests were not present in the training set.

In computer science and machine learning, data contamination is the number one enemy of objective evaluation. If a model has already "seen" the test questions during the pre-training phase, its score will reflect memorization rather than intelligence. According to the official documentation released by the creators, the following processes were used to ensure the integrity of the results:

- N-gram based De-duplication: Removal of any text string in the training corpus that matched more than 10 consecutive tokens present in the test datasets.

- Semantic Analysis via Embedding: Use of secondary models to identify and remove paraphrased mathematical problems.

- Canary Strings: Insertion of unique cryptographic strings into test datasets to track any data leaks in web scraping.
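The first and third checks in the list above can be sketched in a few lines. This is a minimal illustration, assuming whitespace tokenization and lowercasing. The function names and the `canary` parameter are hypothetical, and the embedding-based paraphrase check is omitted because it depends on a specific model. A shared run of more than 10 consecutive tokens is treated here as a shared 11-gram.

```python
def ngrams(tokens, n):
    """Return the set of all n-token windows in a token list."""
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def build_test_index(test_items, n=11):
    """Collect every n-gram that appears in any benchmark item."""
    index = set()
    for item in test_items:
        index |= ngrams(item.lower().split(), n)
    return index


def is_contaminated(document, test_index, n=11, canary=None):
    """Flag a training document that should be dropped.

    A document is flagged if it contains the canary string (when one is
    given) or shares at least one n-gram with the benchmark index.
    """
    if canary is not None and canary in document:
        return True
    return not ngrams(document.lower().split(), n).isdisjoint(test_index)
```

A production pipeline would normally use the model’s own tokenizer and a more memory-efficient index (for example, hashed n-grams), but the filtering logic stays the same.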

Practical Examples of Mathematical Resolution

Observing the applications of the Vitruvian-1 benchmark, practical examples show how the AI tackles non-linear differential equations. The model breaks the problem down into logical sub-tasks, applying specific theorems and explaining the decision-making process with academic clarity.

To concretely illustrate the system’s capabilities, consider a classic problem in algebraic topology or advanced combinatorics. Unlike past models, which tended to lose track during long calculations (a failure mode often described as hallucination in long-horizon tasks), the new system maintains context coherence for tens of thousands of tokens. It autonomously generates Python scripts to simulate edge cases, integrates the simulation results into its textual reasoning, and formulates a rigorous mathematical proof formatted in LaTeX.

Troubleshooting and Current Limitations of Metrics

Despite the strong Vitruvian-1 benchmark results, the evaluation has intrinsic limits. A closer look at the metrics shows that static tests struggle to measure divergent creativity or the model’s adaptability in undocumented real-world scenarios.

It is fundamental to maintain a critical approach. Although scores of 95 and 90 are impressive, the scientific community is already discussing the need for new standards. The MATH and MMLU datasets are reaching saturation. When models approach 100%, the test loses its discriminating power. Furthermore, current metrics do not adequately evaluate inference energy efficiency (computational cost per token) or the model’s ability to interact in dynamic and multi-agent environments, which represent the true frontier of applied computing.

In Brief (TL;DR)

The Vitruvian-1 artificial intelligence redefines 2026 standards by reaching exceptional scores of 95% in the MATH test and 90% in the MMLU test.

These standardized metrics demonstrate extraordinary complex logical reasoning capability and multidisciplinary academic knowledge superior to that of a human expert.

This performance leap stems from a new architecture based on self-correction algorithms and formal verification that eliminate hallucinations during calculations.

Conclusions

In summary, the results of the Vitruvian-1 benchmark mark the beginning of a new era for computing. With MATH scores at 95 and MMLU at 90, we are approaching systems capable of assisting human researchers in the most complex scientific discoveries.

The analysis of this data leads us to an unequivocal awareness: artificial intelligence has surpassed the phase of mere linguistic processing to enter the domain of formal and structured reasoning. The impact of these capabilities will soon be reflected in critical sectors such as new drug discovery, aerospace engineering, and cryptography. The next step for the global community will no longer be measuring how intelligent these models are, but defining how to safely and productively integrate this superhuman intelligence into daily workflows.

Frequently Asked Questions

Vitruvian-1 is an advanced artificial intelligence system released in 2026 that has redefined computing industry standards. It distinguishes itself through exceptional logical reasoning capabilities and general knowledge, achieving record scores in major scientific evaluation tests.

The MATH dataset evaluates advanced problem-solving capabilities and symbolic reasoning through complex mathematical problems. The MMLU test measures multidisciplinary academic competence across dozens of different subjects, verifying the vastness of the system’s factual knowledge.

The system uses a neuro-symbolic approach that combines an advanced variant of chain-of-thought reasoning with an internal execution engine. Instead of just predicting the next word, it generates a logical search tree, verifies intermediate steps, and discards solutions that lead to contradictions.

To ensure the system has not simply memorized the answers, researchers apply rigorous cryptographic filters. These methods include removing duplicate text strings, semantic evaluation to detect paraphrased problems, and leveraging unique tracer strings within the test datasets.

Despite exceptional scores, static tests struggle to measure divergent creativity and adaptability in unforeseen real-world scenarios. Furthermore, today’s metrics do not evaluate the computational cost or the actual energy efficiency required to run these complex architectures.
