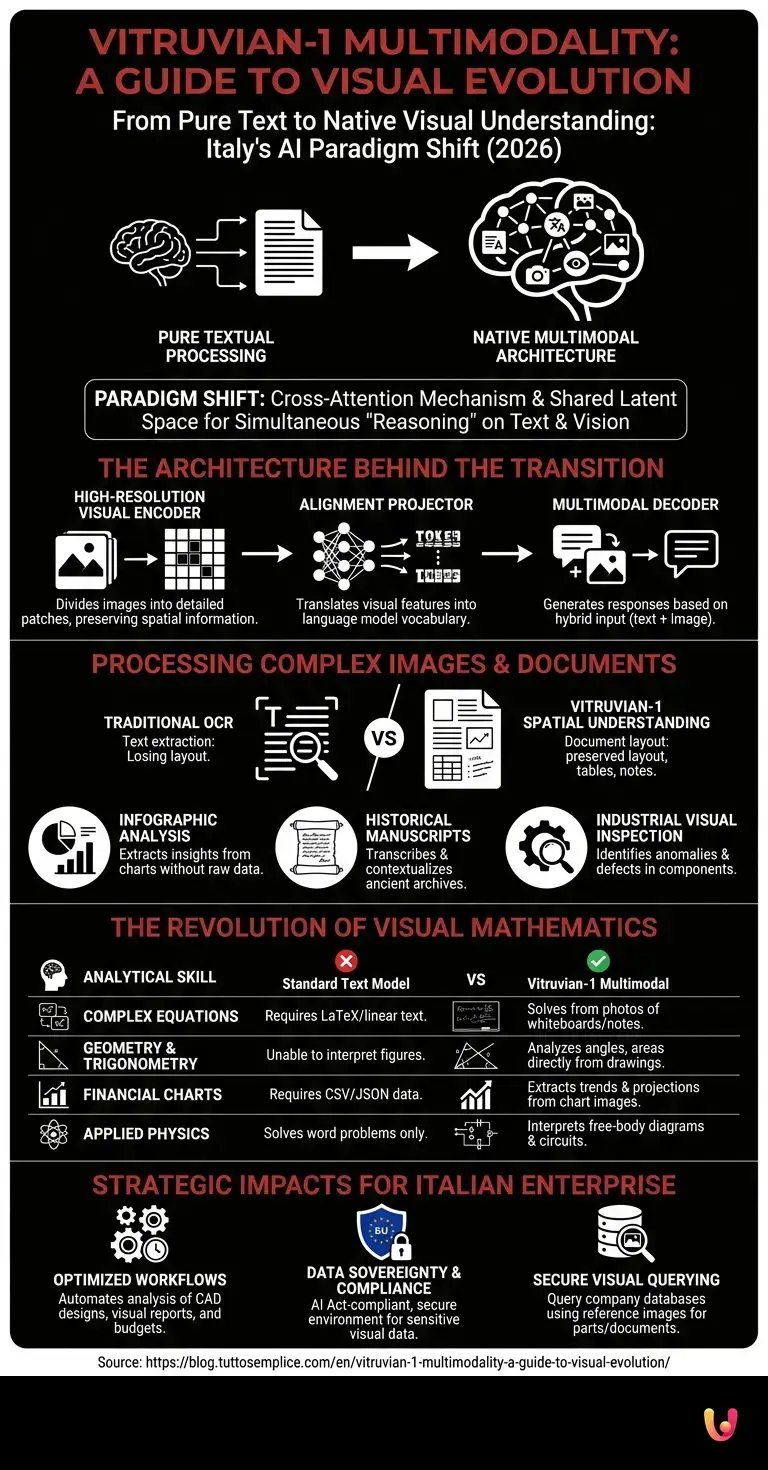

The artificial intelligence landscape in 2026 sees Italy as a protagonist thanks to the continuous development of foundation models. Vitruvian-1, the model at the center of this shift, is preparing for a crucial evolutionary leap: the transition from pure text processing to advanced understanding of files and visual media. The move to a natively multimodal architecture is not only a technical update but a paradigm shift: it will allow the model to interact with the real world through computer vision, opening up new scenarios for scientific research, industry, and complex data analysis.

The architecture behind the visual transition

Vitruvian-1’s multimodality is based on the integration of Vision Transformer architectures with the base language model. This approach allows the AI to map pixels into semantic vectors, giving it a native understanding of visual media without loss of context.

According to official documentation and industry development roadmaps, evolving a Large Language Model (LLM) into a Vision-Language Model (VLM) requires redesigning how data is ingested. Vitruvian-1 will not simply bolt on an external image-recognition module but will adopt a cross-attention mechanism. Visual tokens and textual tokens will therefore share the same latent space, allowing the model to “reason” simultaneously about what it reads and what it sees.
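As a rough illustration of the cross-attention idea, the sketch below lets each text token attend over a set of visual tokens using scaled dot-product attention. The function names, the toy 2-dimensional vectors, and the omission of learned query/key/value projections are assumptions made for illustration; nothing here reflects Vitruvian-1’s actual implementation.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(text_tokens, visual_tokens):
    """For each text token (query), return a weighted mix of visual tokens.

    Both inputs are lists of equal-length vectors, i.e. they are assumed
    to already live in the same latent space.
    """
    dim = len(visual_tokens[0])
    scale = math.sqrt(dim)
    outputs = []
    for query in text_tokens:
        # Similarity between this text token and every visual token.
        scores = [sum(q * k for q, k in zip(query, key)) / scale
                  for key in visual_tokens]
        weights = softmax(scores)
        # Weighted sum of the visual tokens (used here as values too).
        mixed = [sum(w * value[i] for w, value in zip(weights, visual_tokens))
                 for i in range(dim)]
        outputs.append(mixed)
    return outputs

# One text token that points along the first axis, two visual tokens.
text = [[1.0, 0.0]]
image = [[4.0, 0.0], [0.0, 4.0]]
attended = cross_attention(text, image)
print(attended)  # the first component dominates the mix
```

The point of the example is only that the dot product between a text vector and a visual vector is meaningful when both are expressed in the same space; a production model would add learned projections, multiple heads, and many layers.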

The key components of this architecture include:

- High-Resolution Visual Encoder: A module capable of dividing images into detailed patches, preserving the spatial information fundamental for the analysis of technical documents.

- Alignment Projector: An intermediate neural network that translates visual features into the vocabulary understood by the language model.

- Multimodal Decoder: The beating heart that generates textual responses or commands based on hybrid input (text + image).
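The first two components in the list above can be sketched in a few lines: an encoder step that cuts an image into patches while keeping their order, and a projector that maps each patch into the space used by the language model. This is a minimal sketch under stated assumptions: `split_into_patches`, `project`, the 4x4 grayscale image, and the hand-written weight matrix are all hypothetical, and a real encoder would use learned Transformer layers rather than a single fixed linear map.

```python
def split_into_patches(image, patch_size):
    """Split a 2D grid of pixel values into flattened square patches.

    Patches are returned in row-major order, so a patch's index encodes
    where it sat in the original image.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be multiples of patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [image[top + dy][left + dx]
                     for dy in range(patch_size)
                     for dx in range(patch_size)]
            patches.append(patch)
    return patches

def project(features, weights):
    """Linear projection: map each feature vector into the target space.

    `weights` has one row per output dimension.
    """
    return [[sum(w * x for w, x in zip(row, vec)) for row in weights]
            for vec in features]

image = [[0, 1, 2, 3],
         [4, 5, 6, 7],
         [8, 9, 10, 11],
         [12, 13, 14, 15]]
patches = split_into_patches(image, 2)  # four patches of four pixels each

# Hypothetical 2-dimensional target space: patch mean and top-left pixel.
weights = [[0.25, 0.25, 0.25, 0.25],
           [1.0, 0.0, 0.0, 0.0]]
visual_tokens = project(patches, weights)
print(visual_tokens[0])  # [2.5, 0.0]
```

Because the patches keep their row-major order, the position of each visual token is still recoverable downstream, which is what makes spatial reasoning over technical documents possible.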

Processing of complex images and documents

Through Vitruvian-1’s multimodality, the model will go beyond simple optical character recognition (OCR). The Italian model will be able to interpret complex layouts, analyze medical reports, and decipher digitized historical archives with unprecedented accuracy.

Document processing has historically been a bottleneck for companies. Traditional systems extract text but lose the logical structure (tables, visual hierarchies, marginal notes). Computer vision in Vitruvian-1 aims to solve this problem through Spatial Understanding.
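To see why position matters, consider a plain OCR pass that returns words with coordinates but in no useful order. The sketch below rebuilds table rows from such word boxes by grouping on the vertical coordinate and sorting on the horizontal one. The `(text, x, y)` tuple format, the function name, and the pixel tolerance are illustrative assumptions, and this heuristic is a stand-in for the idea of spatial understanding, not a description of how Vitruvian-1 does it.

```python
def rebuild_rows(words, row_tolerance=5):
    """Group (text, x, y) word boxes into rows, then sort each row by x.

    A word joins the current row when its y coordinate is within
    `row_tolerance` pixels of the first word placed in that row.
    """
    rows = []
    for text, x, y in sorted(words, key=lambda w: w[2]):
        if rows and abs(rows[-1]["y"] - y) <= row_tolerance:
            rows[-1]["cells"].append((x, text))
        else:
            rows.append({"y": y, "cells": [(x, text)]})
    return [[text for _, text in sorted(row["cells"])] for row in rows]

# Words as an OCR engine might emit them: correct text, scrambled order.
words = [
    ("Total", 10, 52), ("Item", 10, 10), ("12.50", 120, 50),
    ("Price", 120, 12), ("Bolt", 10, 30), ("12.50", 120, 31),
]
for row in rebuild_rows(words):
    print(row)
# ['Item', 'Price']
# ['Bolt', '12.50']
# ['Total', '12.50']
```

Without the coordinates, the two "12.50" strings are indistinguishable; with them, one is clearly the price of the bolt and the other the total.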

Based on industry data on the performance of next-generation VLM models, Vitruvian-1’s capabilities will extend to:

- Infographic Analysis: Extracting insights and trends directly from images containing pie charts, histograms, and flowcharts, without the need for the underlying raw data.

- Reading Historical Manuscripts: Thanks to specific training on Italian cultural and linguistic heritage, the model will be able to transcribe and contextualize archival documents, overcoming the difficulties related to ancient handwriting.

- Industrial Visual Inspection: Ability to analyze photographs of mechanical components to identify anomalies, wear, or manufacturing defects, comparing them with technical manuals in real time.
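For the infographic case in the list above, the core step of reading a chart without its raw data is converting pixel positions back into data values. The following sketch assumes a linear axis calibrated from two known tick marks and then fits a least-squares trend to the recovered points. The helper names, tick positions, and pixel coordinates are made up for illustration; detecting the ticks and data points in the image is assumed to have happened already.

```python
def make_axis_mapper(pixel_a, value_a, pixel_b, value_b):
    """Return a function mapping a pixel coordinate to a data value,
    given two reference ticks on a linear axis."""
    scale = (value_b - value_a) / (pixel_b - pixel_a)
    return lambda pixel: value_a + (pixel - pixel_a) * scale

def linear_trend(points):
    """Least-squares slope of y over x for a list of (x, y) pairs."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in points)
    var = sum((x - mean_x) ** 2 for x, _ in points)
    return cov / var

# Hypothetical calibration: x ticks for 2020 and 2024, y ticks for 0 and 100.
# Image y grows downward, so the value 0 sits at the larger pixel row.
to_year = make_axis_mapper(100, 2020, 500, 2024)
to_value = make_axis_mapper(400, 0, 0, 100)

detected_pixels = [(100, 360), (300, 280), (500, 200)]
data = [(to_year(px), to_value(py)) for px, py in detected_pixels]
slope = linear_trend(data)
print(round(slope, 3))  # about 10 units per year
```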

The revolution of visual mathematics

The application of Vitruvian-1’s multimodality to visual mathematics represents an engineering milestone. The system will be able to read scatter plots, geometric diagrams, and handwritten equations, converting visual input into logical calculations and analytical deductions in real time.

Visual mathematics is one of the most complex testing grounds for artificial intelligence. It requires not only the recognition of symbols (numbers, operators, variables), but also the understanding of the spatial relationships between them (e.g., fractions, exponents, matrices) and the rigorous application of mathematical logic to arrive at a solution.
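The role of spatial relationships can be shown with exponents alone. In the sketch below, each recognized symbol carries a position and a height, and a symbol whose center sits clearly above the preceding baseline symbol is emitted as a superscript. The `(char, x, y_center, height)` tuple format, the function name, and the `raise_ratio` threshold are assumptions for illustration; fractions, subscripts, and matrices would need further rules.

```python
def symbols_to_latex(symbols, raise_ratio=0.3):
    """Convert (char, x, y_center, height) symbols into a LaTeX string.

    A symbol is treated as part of an exponent when its centre lies above
    the last baseline symbol by more than `raise_ratio` times that
    symbol's height. Image y grows downward.
    """
    parts = []
    base = None      # (y_center, height) of the last baseline symbol
    exponent = ""    # raised characters collected so far
    for char, _, y_center, height in sorted(symbols, key=lambda s: s[1]):
        raised = base is not None and base[0] - y_center > raise_ratio * base[1]
        if raised:
            exponent += char
            continue
        if exponent:
            parts.append("^{" + exponent + "}")
            exponent = ""
        parts.append(char)
        base = (y_center, height)
    if exponent:
        parts.append("^{" + exponent + "}")
    return "".join(parts)

# Handwritten "x squared plus y": the 2 is smaller and sits higher.
symbols = [("x", 0, 50, 20), ("2", 12, 38, 10), ("+", 30, 50, 20), ("y", 50, 50, 20)]
print(symbols_to_latex(symbols))  # x^{2}+y
```

Reading the same symbols left to right while ignoring their vertical position would yield `x2+y`, a different expression, which is the failure mode the paragraph above describes.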

The evolution of Vitruvian-1 in this field aims to sharply reduce the mathematical “hallucinations” typical of purely textual models. Below is a technical comparison of the processing capabilities:

| Analytical Skill | Standard Text Model | Vitruvian-1 Multimodal (Projected) |
|---|---|---|
| Complex Equations | Requires input in LaTeX or linear text format. | Recognizes and solves equations from photos of whiteboards or notes. |
| Geometry and Trigonometry | Cannot interpret geometric figures. | Analyzes angles, areas, and theorems directly from the drawing. |
| Financial Charts | Requires tabular data in CSV/JSON format. | Extracts trends, peaks, and projections by reading the image of the chart. |
| Applied Physics | Solves only problems described in words. | Interprets free-body diagrams and electrical circuits. |

Strategic impacts for the Italian enterprise sector

Adopting Vitruvian-1’s multimodal capabilities across Italian businesses will optimize engineering and financial workflows. Companies will be able to automate the analysis of CAD designs, financial statements presented as infographics, and visual reports, while keeping sensitive data within AI Act-compliant infrastructures.

The regulatory and data sovereignty aspect is fundamental. A model developed in Europe, with advanced multimodal capabilities, offers Italian companies a huge competitive advantage. Sectors such as civil engineering, architecture, and healthcare manage terabytes of visual data daily (floor plans, MRI scans, network diagrams) that contain highly sensitive information.

Entrusting these files to non-European cloud systems often raises compliance issues. The evolution of Vitruvian-1 ensures that visual processing takes place in a secure, transparent environment that is aligned with European privacy directives. Furthermore, the ability to query a company database not only with text queries, but by providing a reference image (e.g., “Find all components in the warehouse that resemble this defective part”), will drastically reduce operational times.
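A query such as “find all components that resemble this defective part” is typically served by comparing embedding vectors. The sketch below ranks catalog items by cosine similarity to a query embedding. The three-dimensional vectors, part identifiers, and threshold are invented for illustration, and the step that turns a photograph into an embedding is assumed to exist elsewhere; this is not a documented Vitruvian-1 API.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def find_similar(query_embedding, catalog, threshold=0.9):
    """Return (part_id, score) pairs scoring at least `threshold`, best first."""
    scored = [(part_id, cosine_similarity(query_embedding, embedding))
              for part_id, embedding in catalog.items()]
    matches = [(part_id, score) for part_id, score in scored if score >= threshold]
    return sorted(matches, key=lambda match: match[1], reverse=True)

# Hypothetical image embeddings for three warehouse parts.
catalog = {
    "gear-A": [0.9, 0.1, 0.0],
    "gear-B": [0.8, 0.3, 0.1],
    "valve-C": [0.0, 0.2, 0.9],
}
defective_part = [1.0, 0.2, 0.0]  # embedding of the reference photo

for part_id, score in find_similar(defective_part, catalog):
    print(part_id, round(score, 3))
```

Here the two gears pass the threshold and the valve does not. At warehouse scale the linear scan would normally be replaced by an approximate nearest-neighbor index, but the ranking principle is the same.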

In Brief (TL;DR)

The Italian artificial intelligence Vitruvian-1 evolves into a native multimodal model, combining textual processing and computer vision in a shared space.

This technological transition allows the system to interpret complex layouts, medical reports, and ancient manuscripts, overcoming the limitations of traditional optical recognition.

The model also revolutionizes visual mathematics, converting graphs, geometric diagrams, and handwritten equations into analytical deductions and precise calculations.

Conclusions

In summary, the development of Vitruvian-1’s multimodality marks the transition from a purely textual AI to a complete cognitive ecosystem. This evolution consolidates the role of Italian computer vision in the global landscape, opening up previously unexplored application scenarios.

The integration of visual understanding and visual mathematics will transform Vitruvian-1 into a universal assistant, capable of “seeing” the world with the same precision with which it understands its language. For developers, researchers, and companies, preparing for this transition means starting now to structure their visual data, ready to be queried, analyzed, and enhanced by the next generation of artificial intelligence made in Italy.

Frequently Asked Questions

Multimodality represents the shift from a text-only system to an ecosystem capable of simultaneously understanding words and images. This evolutionary leap allows the Italian model to analyze complex documents, graphics, and photographs, processing visual data in the same cognitive space as natural language to provide extremely precise answers.

Unlike simple optical character recognition, which extracts only the text and loses the context, the new architecture preserves the entire logical structure of the document. The system can thus interpret visual hierarchies, complex tables, and marginal notes, making it essential for analyzing medical reports or digitized historical archives.

Visual mathematics allows the system to solve handwritten equations, interpret complex geometric diagrams, and analyze financial trends directly from images. By converting visual inputs into logical calculations in real time, it drastically reduces the inaccuracies and errors typical of models based solely on textual processing.

Developed in Europe, the system guarantees full compliance with European regulations on artificial intelligence and ensures the full sovereignty of company data. Businesses can process critical files such as blueprints, medical reports, and financial statements in a secure environment, avoiding the privacy risks typical of foreign cloud platforms.

The model can instantly analyze photographs of mechanical components to identify structural anomalies, manufacturing defects, or unexpected signs of wear. By comparing real-time images with company technical manuals, industries can optimize engineering workflows and drastically reduce operational time related to quality control.


