The adoption of advanced artificial intelligence models requires careful infrastructure evaluation, especially when corporate data privacy is a top priority. The Vitruvian-1 model, developed by ASC27, represents the state of the art for organizations requiring secure and independent data processing. In this comprehensive technical guide, we will explore the best strategies and architectures for implementing this powerful ecosystem in completely isolated environments, ensuring the highest level of Information Security.

Deployment Architectures for Artificial Intelligence

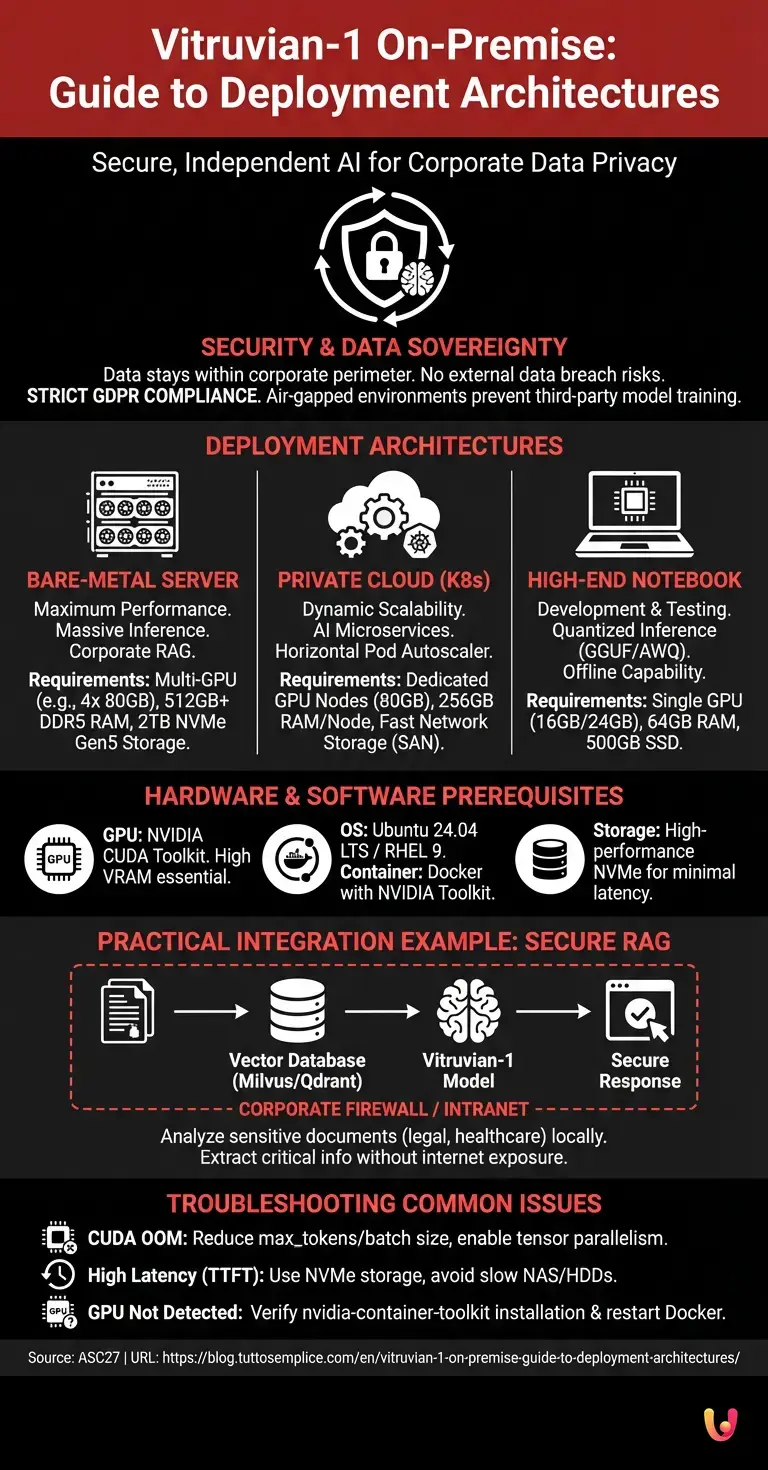

Choosing the right architecture to run Vitruvian-1 on-premise means balancing computing power, security, and operational costs. Companies can opt for dedicated physical servers, private cloud environments, or portable workstations, always ensuring total control over sensitive data.

In the IT landscape of 2026, the decentralization of artificial intelligence has become a standard for critical infrastructures. According to industry data, enterprise companies are progressively abandoning public APIs in favor of local solutions to mitigate risks related to information exfiltration. The deployment architecture must be designed taking into account the volume of daily inferences, the number of concurrent users, and the specific latency requirements of the use case.
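As a rough illustration of how daily volume and latency translate into concurrency, the sketch below applies Little's law (average requests in flight = arrival rate × average latency). The workload figures are hypothetical placeholders, not measurements of Vitruvian-1, and the result is an average: real traffic peaks call for additional headroom.

```python
def average_in_flight(requests_per_day: float, avg_latency_s: float) -> float:
    """Little's law: L = lambda * W (mean arrival rate times mean time in system)."""
    arrival_rate_per_s = requests_per_day / 86_400  # 86,400 seconds in a day
    return arrival_rate_per_s * avg_latency_s


if __name__ == "__main__":
    # Hypothetical workload: 172,800 inferences per day, 5 s average latency.
    print(average_in_flight(172_800, 5.0))  # prints 10.0
```

An average of ten requests in flight suggests sizing batch capacity and GPU count for noticeably more than ten, since load is rarely spread evenly across the day.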

Security and Data Sovereignty

Data sovereignty is the main advantage of a Vitruvian-1 on-premise configuration. Keeping information flows within the corporate perimeter removes the exposure that comes with sending data to third parties and makes it easier to comply with privacy regulations such as the GDPR.

Operating in an air-gapped environment (physically isolated from unsecured networks, including the Internet) ensures that user prompts and documents analyzed by the AI are never used for the training of third-party models. This approach is vital for sectors such as healthcare, finance, and defense, where data classification dictates military-grade security standards.

Hardware and Software Prerequisites

For a smooth installation of Vitruvian-1 on-premise, it is fundamental to have adequate hardware, particularly GPUs with high VRAM, and a software environment based on containerization. Requirements vary significantly between enterprise servers, private clouds, and high-end notebooks.

Below, we present a comparative table based on official ASC27 documentation to correctly size the infrastructure according to the deployment target:

| Deployment Environment | GPU / Minimum VRAM | System RAM | Storage Required | Ideal Use Cases |
|---|---|---|---|---|
| Bare-Metal Server | Multi-GPU (e.g., 4x 80 GB) | 512 GB+ DDR5 | 2 TB NVMe Gen5 | Massive inference, Corporate RAG |
| Private Cloud (K8s) | Nodes with dedicated GPUs (80 GB) | 256 GB per node | Fast Network Storage (SAN) | AI Microservices, dynamic scalability |
| High-End Notebook | Single GPU (e.g., 16 GB / 24 GB) | 64 GB | 500 GB SSD | Development, testing, quantized inference |

Requirements for Local Servers

The ideal infrastructure for Vitruvian-1 on-premise in an enterprise setting requires latest-generation graphics accelerators, high-performance NVMe storage, and Linux operating systems optimized for AI workloads, thus ensuring minimal latency and maximum operational reliability.

At the software level, the host environment must be prepared with the following fundamental components:

- Operating System: Ubuntu 24.04 LTS or Red Hat Enterprise Linux 9.

- GPU Drivers: NVIDIA driver and CUDA Toolkit, both updated to the latest stable release.

- Container Engine: Docker Engine with NVIDIA Container Toolkit enabled for hardware resource passthrough.

- Orchestration: Docker Compose for single instances or Kubernetes for distributed clusters.

Local Installation Options

Options for the deployment of Vitruvian-1 on-premise fall into three main categories: bare-metal servers for maximum performance, private cloud for internal scalability, and notebooks for development and testing on the go without connectivity.

Let’s analyze in detail the technical steps for each of these implementation methodologies, following a “Zero-to-Hero” approach for IT engineering teams.

Configuration on Corporate Server

Implementing Vitruvian-1 on-premise on a corporate server requires installing NVIDIA drivers, configuring Docker, and pulling the official ASC27 image. This approach ensures maximum performance for large-scale inference in production environments.

The step-by-step process for activating the service on a bare-metal machine is as follows:

- Phase 1: Host Preparation. Ensure the Docker daemon is configured to use the `nvidia` runtime as default in the `daemon.json` file.

- Phase 2: Authentication. Use the credentials provided by ASC27 to access the private container image registry.

- Phase 3: Deployment. Create a `docker-compose.yml` file defining memory limits, GPU allocation (e.g., `count: all`), and persistent volumes for logs and model weights.

- Phase 4: Start and Health Check. Run the start command and monitor the exposure of local RESTful APIs (typically on port 8080 or 8000).
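To make Phase 1 concrete, here is a minimal sketch of the Docker daemon setting involved, written as a small Python helper that merges the NVIDIA runtime entry into an existing configuration. The `default-runtime` and `runtimes` keys and the `nvidia-container-runtime` path follow the convention used by the NVIDIA Container Toolkit; the helper name is illustrative, and the script only prints the result rather than writing to the daemon's configuration file (commonly `/etc/docker/daemon.json`).

```python
import json


def with_nvidia_default_runtime(existing: dict) -> dict:
    """Return a copy of a daemon.json mapping with nvidia set as default runtime."""
    config = dict(existing)
    runtimes = dict(config.get("runtimes", {}))
    # Register the NVIDIA runtime binary shipped with the NVIDIA Container Toolkit.
    runtimes["nvidia"] = {"path": "nvidia-container-runtime", "runtimeArgs": []}
    config["runtimes"] = runtimes
    config["default-runtime"] = "nvidia"
    return config


if __name__ == "__main__":
    # Example: an unrelated existing setting is preserved while the runtime is added.
    current = {"log-driver": "json-file"}
    print(json.dumps(with_nvidia_default_runtime(current), indent=2))
```

Merging rather than overwriting keeps settings that other teams may already rely on, such as logging drivers or registry mirrors. The Docker daemon has to be restarted before the change takes effect.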

Implementation in Private Cloud

Integrating Vitruvian-1 on-premise within a corporate private cloud allows for resource orchestration via Kubernetes. This solution offers excellent horizontal scalability, allowing different departments to access the AI model while keeping the infrastructure completely isolated from the outside.

For environments based on VMware Tanzu, OpenShift, or bare-metal Kubernetes clusters, deployment is handled via Helm Charts provided by ASC27. This method allows for dynamic management of inference pods. When the API request load increases, the Horizontal Pod Autoscaler (HPA) can instantiate new model replicas on available nodes, optimizing the use of corporate data center hardware resources.
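The scaling decision itself can be sketched in a few lines. The function below reproduces the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current replicas × current metric / target metric), skipped when the ratio is within a tolerance). The metric, the replica bounds, and the example values are assumptions for illustration; the actual values would come from the Helm chart configuration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Replica count an HPA-style controller would request."""
    ratio = current_metric / target_metric
    # No scaling when the metric is close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Hypothetical metric: in-flight requests per pod, with a target of 60.
    print(desired_replicas(2, 90, 60))  # prints 3
```

For GPU-backed inference pods, the upper bound is in practice set by the number of free GPUs in the cluster, so `max_replicas` should reflect the hardware that is actually available.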

Execution on High-End Notebooks

For developers and researchers, running Vitruvian-1 on-premise on a high-end notebook is possible thanks to quantized versions of the model. By using optimized frameworks, it is possible to test ASC27’s AI capabilities directly locally, even in the absence of a network.

Quantization (such as GGUF or AWQ formats) reduces model weight precision from 16-bit to 8-bit or 4-bit, drastically lowering VRAM requirements. Using interfaces like LM Studio or Python scripts based on llama.cpp, a data scientist can load a lightweight version of Vitruvian-1 on a laptop equipped with a consumer GPU (e.g., RTX 4090 Mobile series) or on unified memory architectures, ensuring a rapid and confidential development environment.
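The effect of quantization on memory can be approximated with simple arithmetic: weight memory is roughly the parameter count multiplied by the bits per weight. The sketch below uses a placeholder parameter count, since this guide does not state the size of Vitruvian-1, and it ignores the extra space that quantized formats need for scales and metadata as well as the memory used at runtime for activations and the KV cache.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # Placeholder size for illustration only, not the real Vitruvian-1 figure.
    hypothetical_params = 14e9
    for bits in (16, 8, 4):
        gib = weight_memory_gib(hypothetical_params, bits)
        print(f"{bits}-bit weights: ~{gib:.1f} GiB")
```

Going from 16-bit to 4-bit cuts the weight footprint to a quarter, which is what brings a model within reach of a 16 GB or 24 GB notebook GPU.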

Practical Integration Examples

A typical use case for Vitruvian-1 on-premise is the analysis of legal or healthcare documents. Companies integrate the model via local APIs into their management systems, allowing the extraction of critical information without ever exposing data to the internet.

Imagine a banking institution that needs to analyze thousands of mortgage contracts to extract specific clauses. Instead of sending these PDFs to a public cloud service, the bank configures an internal RAG (Retrieval-Augmented Generation) pipeline. Documents are vectorized and saved in a local vector database (e.g., Milvus or Qdrant). When an operator queries the system, Vitruvian-1 processes the request by cross-referencing data from the vector database, generating precise and contextualized responses, all within the intranet network protected by corporate firewalls.
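The retrieval step of such a pipeline can be sketched without any external service. The example below is a toy, in-memory stand-in for a vector database such as Milvus or Qdrant: it ranks stored vectors by cosine similarity and assembles a prompt from the best matches. The vectors, document identifiers, and prompt wording are invented for illustration; in a real deployment the embeddings would come from an embedding model and the prompt would be sent to the local Vitruvian-1 API.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """index: list of (doc_id, vector) pairs; returns the k most similar doc ids."""
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]


def build_prompt(question, passages):
    """Combine retrieved passages and the user question into one prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Toy 2-dimensional embeddings; real embeddings have hundreds of dimensions.
    index = [("clause-early-repayment", [1.0, 0.0]),
             ("clause-insurance", [0.0, 1.0]),
             ("clause-penalties", [0.9, 0.1])]
    print(top_k([1.0, 0.0], index, k=2))
```

A dedicated vector database replaces the linear scan in `top_k` with an approximate nearest-neighbor index, which matters once the collection grows to thousands of contracts.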

Troubleshooting Common Issues

During the deployment of Vitruvian-1 on-premise, the most frequent errors concern VRAM memory exhaustion or network conflicts in containers. Monitoring system logs and optimizing inference parameters is essential to ensure application stability.

Here are the main problems encountered and their respective troubleshooting solutions:

- CUDA OOM (Out of Memory) Error: Occurs when the model context or batch size exceeds physical VRAM. Solution: Reduce the maximum context length (for example through a setting such as max_tokens, whose exact name and meaning depend on the serving stack), decrease the concurrent batch size, or enable tensor parallelism across multiple GPUs.

- High Latency in Time-To-First-Token (TTFT): Often caused by storage bottlenecks while model weights are being loaded, for example on the first request after a start or restart. Solution: Ensure model weights are loaded from NVMe disks and not from mechanical HDDs or slow NAS.

- Container does not detect GPU: Runtime configuration issue. Solution: Verify the installation of the `nvidia-container-toolkit` package and restart the Docker daemon.
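To see why shorter contexts and smaller batches relieve out-of-memory errors, it helps to estimate the size of the KV cache, which grows linearly with both. The sketch below uses the standard formula for transformer decoders (two tensors, keys and values, per layer); every model dimension in it is a hypothetical placeholder, since the internal architecture of Vitruvian-1 is not described in this guide.

```python
def kv_cache_gib(num_layers: int, num_kv_heads: int, head_dim: int,
                 context_len: int, batch_size: int,
                 bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in GiB for a transformer decoder."""
    # The leading factor 2 accounts for storing both keys and values.
    total_bytes = (2 * num_layers * num_kv_heads * head_dim
                   * context_len * batch_size * bytes_per_value)
    return total_bytes / 2**30


if __name__ == "__main__":
    # Hypothetical dimensions: 32 layers, 8 KV heads, head size 128, 16-bit values.
    for batch in (1, 4, 16):
        gib = kv_cache_gib(32, 8, 128, context_len=8192, batch_size=batch)
        print(f"batch {batch}: ~{gib:.1f} GiB of KV cache")
```

Because the cache comes on top of the model weights, halving the context length or the batch size frees a proportional amount of VRAM without touching the model itself.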

In Brief (TL;DR)

The Vitruvian-1 model developed by ASC27 provides local artificial intelligence to ensure maximum security and absolute privacy of corporate data.

This isolated architecture ensures total information sovereignty, eliminating risks of external breaches in full compliance with current privacy regulations.

Technical deployment requires dedicated hardware with high-performance GPUs and containerized environments, scaling across physical servers, private clouds, and high-end notebooks.

Conclusions

Adopting the Vitruvian-1 on-premise architecture represents the definitive choice for organizations demanding advanced AI performance without compromising on security. Whether it involves servers, private clouds, or notebooks, ASC27 offers flexible solutions for every business need.

Total control over the infrastructure not only ensures regulatory compliance and intellectual property protection but also allows for deep performance customization. Investing today in skills and hardware for local deployment means preparing the company for a future where artificial intelligence will be the main engine of decision-making processes, operating in total security and technological independence.

Frequently Asked Questions

Vitruvian-1 is an artificial intelligence model developed by ASC27 designed to run in isolated environments. The main advantage is total data sovereignty, which allows for the elimination of external breach risks and compliance with rigorous regulations such as the GDPR. In this way, companies keep sensitive information strictly within their own security perimeter.

For optimal operation on corporate servers, latest-generation graphics cards with high video memory, at least 512 gigabytes of system memory, and ultra-fast disks are necessary. For mobile development on notebooks, a single graphics card with at least sixteen gigabytes of memory is sufficient when using lightweight versions of the software.

Operating in an environment physically disconnected from external networks ensures that analyzed documents and user requests never leave the local network. This approach prevents confidential information from being used to train external models, making the solution ideal for critical sectors such as healthcare, finance, and defense.

Developers can test the model on high-end notebooks by leveraging quantization, a technique that reduces the precision of mathematical parameters, drastically lowering video memory requirements. By using optimized formats, a data scientist can launch a lightweight version of the system directly on their device without needing an internet connection.

The CUDA out-of-memory error occurs when requests exceed the physical memory capacity of the graphics card. To resolve the problem, it is advisable to reduce the maximum context length, decrease the number of simultaneous requests, or distribute the workload across multiple graphics accelerators by enabling tensor parallelism.

