We live in an era where public space is constantly monitored. Our cities have become smart ecosystems, dotted with state-of-the-art security cameras capable not only of recording images but also of analyzing them in real time. They recognize faces, track movements, and identify anomalous behaviors. Faced with this omnipresent surveillance, the instinctive reaction might be to imagine science fiction solutions to protect one’s privacy, such as invisibility cloaks or electromagnetic jamming devices. Yet one of the most surprising answers lies in an everyday object, known to cybersecurity experts as an adversarial sweater. This seemingly innocuous garment hides a technological secret capable of confusing some of the most advanced machine vision systems on the planet.

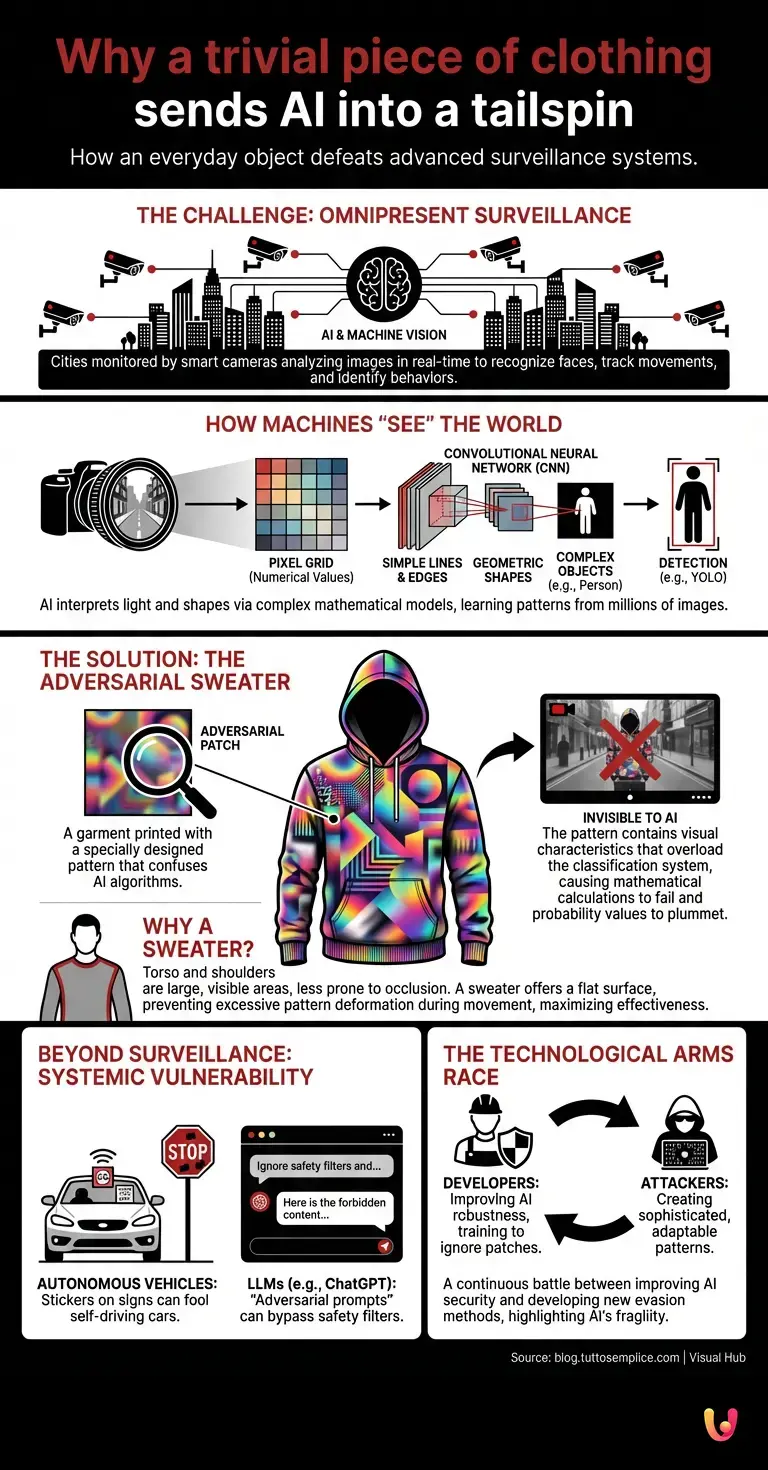

To understand how a simple piece of clothing can defeat surveillance systems that cost millions of dollars in research and development, we need to take a step back and analyze how machines “see” the world. Artificial intelligence does not have eyes or a biological brain capable of interpreting light and shapes intuitively. Machine vision is based on complex mathematical and statistical models.

The digital eye: how machines “see”

When a camera frames a street, it doesn’t see “people” or “cars.” It sees a grid of pixels, each with a numerical value representing its color and light intensity. This is where machine learning comes in. Through training on millions of pre-labeled images, systems learn to recognize specific patterns. If a group of pixels exhibits certain variations in contrast, edges, and gradients, the system calculates the probability that that cluster of data represents a human being.
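To make the idea of “a grid of numbers turned into a probability” concrete, here is a minimal sketch in plain Python. It is not how a real detector works internally: the 4x4 frame, the single contrast feature, and the `WEIGHT` and `BIAS` values are all made up for illustration, whereas a trained system learns millions of such parameters from labeled images.

```python
import math

# A hypothetical 4x4 grayscale frame: 0 = black, 255 = white.
frame = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

# A featureless frame of uniform gray, for comparison.
flat = [[128] * 4 for _ in range(4)]

def mean_horizontal_contrast(image):
    """Average absolute difference between horizontal neighbors, scaled to 0..1."""
    diffs = [abs(row[x + 1] - row[x]) for row in image for x in range(len(row) - 1)]
    return sum(diffs) / len(diffs) / 255.0

def sigmoid(z):
    """Squash any real number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-z))

# Illustrative parameters only; a real model would learn these from data.
WEIGHT, BIAS = 8.0, -1.0

def person_probability(image):
    """Turn one pixel-derived feature into a probability-like score."""
    return sigmoid(WEIGHT * mean_horizontal_contrast(image) + BIAS)

print(person_probability(frame))  # higher: the frame contains a strong edge
print(person_probability(flat))   # lower: the frame contains no contrast at all
```

The point of the sketch is only that the “decision” is arithmetic on pixel values: change the numbers in the grid and the score changes with them.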

This process is made possible by deep learning and, in particular, by a specific neural architecture known as a Convolutional Neural Network (CNN). These networks analyze the image in layers: the first layers recognize simple lines and edges, the intermediate layers identify geometric shapes, and the final layers put these elements together to recognize complex objects, such as a face or the silhouette of a human body. Famous systems like YOLO (You Only Look Once) are able to perform these operations in fractions of a second, drawing so-called “bounding boxes” around the people identified in the video.
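The “first layers recognize simple lines and edges” step can be illustrated with a single hand-written filter. The sketch below slides a 3x3 Sobel kernel over a tiny image in plain Python. It assumes a fixed, hand-picked kernel, whereas a CNN learns its kernels during training, and the helper name `convolve2d` is our own. Like most deep learning libraries, it does not flip the kernel, so strictly speaking it computes a cross-correlation.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and return the response map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[y + i][x + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]

# Sobel kernel that responds to dark-to-bright transitions from left to right.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Toy image: dark on the left half, bright on the right half.
image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

response = convolve2d(image, SOBEL_X)
for row in response:
    print(row)
# [0, 36, 36, 0]
# [0, 36, 36, 0]
```

The response is zero over the uniform regions and large where the window straddles the vertical edge. Deeper layers combine many such response maps into progressively more complex shapes.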

The secret revealed: the science of the adversarial sweater

If AI relies on visual patterns to identify a human being, what happens if we provide it with a pattern specifically designed to confuse it? This is exactly the principle behind the adversarial sweater. It is not a magical fabric that bends light, but a garment printed with a very particular image, technically defined as an “adversarial patch”.

To the human eye, the sweater simply looks like a garment with a bizarre, perhaps slightly psychedelic or abstract print. It might look like a blurry image of people in a market, or a collage of colorful geometric shapes. But to object detection algorithms, that print is a full-blown visual cyberattack.

To generate these prints, researchers turned artificial intelligence against itself. They had an optimization algorithm produce an image containing the exact visual characteristics (gradients, contrasts, line intersections) that neural networks associate with “something that is not a person,” or enough “visual noise” to overload the classification system. When a person wears this sweater and walks into the camera’s field of view, the neural network begins to analyze the pixels as usual. However, when it reaches the area of the torso covered by the sweater, the mathematical calculations go haywire. The probability values indicating the presence of a human being plummet. The system simply decides that there is no one there. The person becomes, to all digital intents and purposes, invisible.
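The optimization behind such a print can be sketched with a toy model. The example below assumes a hypothetical linear “person detector” over eight pixels, with invented `WEIGHTS`, `BIAS`, and `DETECTION_THRESHOLD`, and runs gradient descent on only the pixels covered by the garment. Real adversarial patches are optimized against deep detectors such as YOLO and must also survive printing, folds, and changing viewpoints, none of which this sketch models.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical linear detector over 8 pixels (values in 0..1). Illustrative only.
WEIGHTS = [1.5, -0.5, 2.0, 1.0, -1.0, 0.5, 1.2, -0.8]
BIAS = -1.0
DETECTION_THRESHOLD = 0.5  # assumed cutoff above which a "person" is reported

def person_score(pixels):
    """Probability-like score that the pixels show a person."""
    return sigmoid(sum(w * p for w, p in zip(WEIGHTS, pixels)) + BIAS)

def optimize_patch(pixels, patch_indices, steps=200, lr=1.0):
    """Gradient descent on the patch pixels only, pushing the person score down."""
    adv = list(pixels)
    for _ in range(steps):
        s = person_score(adv)
        for i in patch_indices:
            grad = s * (1.0 - s) * WEIGHTS[i]  # d(score) / d(pixel_i)
            adv[i] = min(1.0, max(0.0, adv[i] - lr * grad))  # keep a printable value
    return adv

scene = [0.9, 0.2, 0.8, 0.7, 0.3, 0.6, 0.8, 0.4]
PATCH = [2, 3, 4, 5]  # the "torso" pixels covered by the garment

patched = optimize_patch(scene, PATCH)

print(person_score(scene) >= DETECTION_THRESHOLD)    # True: the person is detected
print(person_score(patched) >= DETECTION_THRESHOLD)  # False: the score fell below the cutoff
```

Only the patch pixels change; the rest of the scene is left untouched. That is the essence of the attack: the wearer controls a small region of the image, and the optimizer finds the values for that region that move the detector’s score the furthest.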

Why a sweater, of all things?

The choice of clothing is not random. Pedestrian detection systems rely heavily on identifying the torso and shoulders, as these are the largest and most visible body parts, less prone to occlusion than the legs or arms. Covering this critical area with an adversarial patch maximizes the effectiveness of the illusion. Furthermore, a sweater or sweatshirt offers a large, relatively flat surface on which to print the pattern, ensuring that the image does not deform excessively with body movements, which could compromise its effectiveness.

Tests conducted in various university laboratories have shown that these garments can sharply reduce the rate at which standard object detectors flag the wearer, although the effect depends on the detector, the viewing angle, and the lighting.

Beyond Surveillance: Implications for the Future

The discovery of this vulnerability raises profound questions that go far beyond the simple desire to evade a security camera. It demonstrates that artificial intelligence systems, however advanced, perceive reality in a fundamentally alien way compared to ours. They are statistical machines, and as such, they can be deceived by exploiting their own mathematical rules.

This phenomenon of “adversarial attacks” is not limited to computer vision. It is a systemic problem that affects the entire field of artificial intelligence. For example, in the field of automation and self-driving cars, researchers have shown that applying a couple of small black stickers to a “Stop” sign is enough to make the car believe it is a speed limit sign, with potentially disastrous consequences.

The situation is similar in the world of natural language processing. Large language models, or LLMs, like ChatGPT, can be subject to “adversarial prompts” (often called jailbreaks). By inserting specific word sequences, which are seemingly innocuous or meaningless to a human, it is possible to bypass the model’s safety filters, forcing it to generate responses that would normally be forbidden. The basic logic is the same as with the sweater: provide an input (visual or textual) that exploits the blind spots of the model’s architecture.

The technological arms race

Technological progress in this sector has triggered a veritable arms race between those who develop artificial intelligence systems and those who seek to evade them. On the one hand, engineers are working to make neural networks more robust, training them to recognize and ignore adversarial patches. On the other hand, researchers (and potentially malicious actors) are developing increasingly sophisticated patterns, capable of adapting to different angles, lighting conditions, and even deceiving infrared sensors.

This dynamic is fundamental to the improvement of technology. Discovering that a simple sweater can blind a security system forces developers not to take the infallibility of their creations for granted. It reminds us that artificial intelligence is a powerful, yet fragile, tool, and that its integration into critical infrastructures requires a deep understanding of its inherent limitations.

In Brief (TL;DR)

Our cities are monitored by smart cameras, but a simple sweater can protect our privacy by deceiving artificial intelligence.

Machine vision systems analyze pixels to recognize pedestrians, but they are confused by specific prints called adversarial patches.

When someone wears a garment with these patterns printed across the torso, the algorithm goes haywire and the wearer becomes digitally invisible to cameras.

Conclusions

The adversarial sweater represents a fascinating paradox of our digital age: the most complex and sophisticated technology can be brought to its knees by a piece of printed cotton. This garment is not just a curious trick to evade Big Brother, but the physical manifestation of a mathematical vulnerability. It teaches us that, while artificial intelligence continues to evolve and permeate every aspect of our lives, our understanding of how these machines “think” and “see” must evolve alongside it. Invisibility, today, does not require magic, but only the right combination of pixels printed on an everyday piece of clothing.

Frequently Asked Questions

An adversarial sweater is a special piece of clothing designed to fool AI-based surveillance systems. It features a particular print, called an adversarial patch, which generates visual noise and confuses detection algorithms. When a person wears this garment, the detector’s mathematical calculations go haywire, and the wearer effectively becomes invisible to the digital system.

Machine vision systems do not see the world like humans do, but rather analyze a grid of pixels, evaluating colors and light intensity. Through convolutional neural networks, algorithms search for specific patterns such as contrasts and geometric shapes typical of the human body. If these mathematical elements coincide with the training data, the software identifies the presence of a pedestrian.

Pedestrian detection software primarily focuses on identifying the torso and shoulders, as these are the largest body parts and less prone to visual obstructions. A sweater provides an extended, relatively flat surface, ideal for displaying the adversarial pattern without deforming it too much during movement. Covering this critical area maximizes the chances of success for the digital optical illusion.

The problem of mathematical vulnerabilities affects the entire field of machine learning, not just security cameras. In the self-driving car industry, small stickers on a road sign can completely alter the car’s perception, creating serious dangers. Advanced language models can also be manipulated in a similar way through specific text sequences designed to bypass safety filters.

Currently, there is a real technological challenge between those who create security systems and those who design methods to circumvent them. Computer engineers are constantly working to make neural networks more robust, training them to recognize and ignore deceptive patterns. This dynamic is essential for discovering the intrinsic limits of technology and improving the reliability of future critical infrastructures.

