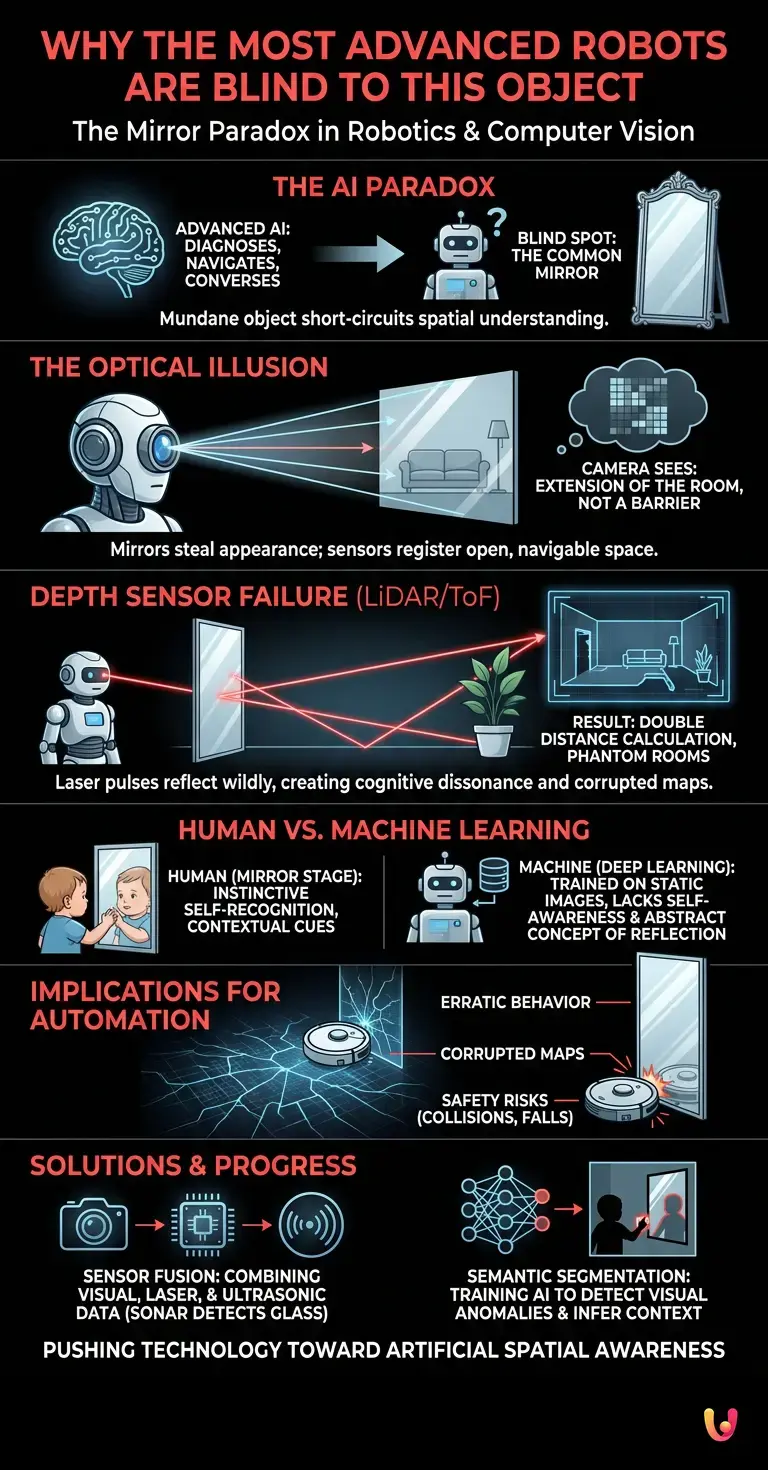

We live in an era where artificial intelligence seems to know no bounds. Modern systems can help diagnose complex medical conditions by analyzing X-rays in fractions of a second, navigate cars through the chaotic traffic of major cities, and even engage in philosophical conversations. Yet while an LLM (Large Language Model) such as the one behind ChatGPT can generate polished essays on quantum physics or write advanced programming code, a fascinating paradox exists in the fields of robotics and computer vision. If we transfer this immense computing power into a physical droid and let it roam freely through an ordinary home, there is one everyday object, mundane and omnipresent, that will short-circuit its understanding of space: the mirror. This simple household item currently represents one of the greatest blind spots for synthetic brains.

To understand the significance of this technological curiosity, we must delve into the way machines “see” and interpret the world around them. This is not merely a programming flaw, but an intrinsic limitation tied to the physics of light and the very nature of current machine learning models. Solving the mirror enigma means pushing technology toward a new frontier of spatial awareness.

The optical illusion that tricks machines

For a human being, recognizing a reflective surface is an almost instinctive action. Our brain utilizes a series of visual and contextual cues: the frame, the faint reflections on the glass surface, the object’s position (for example, above a sink), and, above all, the presence of our own reflected image. For an AI, however, the process of vision is radically different. A home robot’s cameras capture light and convert it into a two-dimensional grid of pixels, each with a numerical value corresponding to a color.

When computer vision algorithms analyze this grid, they look for patterns, edges, and textures to identify objects. The fundamental problem with a mirror is that it possesses no visual appearance of its own: its nature is to steal the appearance of the surrounding environment. Consequently, when a robot looks at a mirrored cabinet, its optical sensors do not register a solid obstacle, but rather see an extension of the room. They see another floor, other walls, and other furniture. The optical illusion is perfect: the machine is convinced that there is an open, navigable space where, in reality, there is a barrier of glass and silver.

Neural Architecture and the Challenge of Depth

One might assume that the problem is limited to traditional cameras alone and that advanced depth sensors can easily circumvent this obstacle. Unfortunately, physical reality further complicates matters. Many autonomous navigation systems rely on LiDAR (Light Detection and Ranging) or ToF (Time of Flight) sensors. These devices emit pulses of laser or infrared light and measure the time it takes for them to bounce off objects and return, thereby calculating distance with extreme precision.
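To make the time-of-flight principle concrete, here is a minimal Python sketch of the arithmetic involved. The function names are illustrative and do not come from any particular sensor's API, and real devices add calibration and noise handling that this omits.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_s: float) -> float:
    """Convert a pulse's round-trip time into a one-way distance."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so the target sits at half the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Inverse helper: how long a pulse takes to reach a target and return."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


# A wall 3 m away corresponds to a round trip of about 20 nanoseconds.
t = round_trip_time_s(3.0)
print(f"round trip: {t * 1e9:.2f} ns, distance: {tof_distance_m(t):.3f} m")
```

The key assumption baked into this formula is that the pulse travels in a straight line to the first surface it meets and straight back, which is exactly what a mirror violates.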

However, the way this data is processed clashes with the laws of optics. When a LiDAR laser beam strikes a mirror, most of it does not scatter straight back to the sensor as it would from a matte wall. Instead, it is reflected at an angle equal to its angle of incidence, travels across the room, strikes a real object (such as a sofa behind the robot), retraces its path via the mirror, and finally returns to the sensor. The result? The robot reports a range equal to the distance to the mirror plus the distance from the mirror to that object, which can easily be double or triple the distance to the glass itself. In the three-dimensional “point cloud” generated by the synthetic brain, the mirror disappears completely, replaced by a black hole where no points are recorded or by a phantom room extending beyond the wall. This phenomenon creates a kind of cognitive dissonance for the machine: tactile sensors or bumpers indicate a collision, yet the digital map insists that the path is clear.
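The geometry of this error can be sketched in a few lines of Python. This is a simplified 2D model under stated assumptions: the sensor sits at the origin, the mirror is a perfectly flat plane at a fixed x coordinate, and the beam reflects specularly. The function name `mirror_return` and its parameters are hypothetical, chosen only for illustration.

```python
import math


def mirror_return(mirror_x_m, beam_angle_rad, mirror_to_object_m):
    """Model one LiDAR beam that hits a flat mirror on the plane x = mirror_x_m.

    The sensor sits at the origin. Returns the true distance to the mirror,
    the range the sensor reports, the real object's position, and the
    phantom point a naive mapper would record.
    """
    dx, dy = math.cos(beam_angle_rad), math.sin(beam_angle_rad)
    if mirror_x_m <= 0 or dx <= 0:
        raise ValueError("the beam must travel toward a mirror in front of the sensor")

    sensor_to_mirror_m = mirror_x_m / dx
    hit_x, hit_y = mirror_x_m, sensor_to_mirror_m * dy

    # Specular reflection off a plane of constant x flips the x component.
    rx, ry = -dx, dy
    real_object = (hit_x + mirror_to_object_m * rx, hit_y + mirror_to_object_m * ry)

    # The pulse covers the folded path out and back; halving the round trip
    # yields the folded one-way length, not the distance to the mirror.
    reported_range_m = sensor_to_mirror_m + mirror_to_object_m

    # The mapper assumes straight-line travel along the outgoing beam.
    phantom_point = (reported_range_m * dx, reported_range_m * dy)
    return sensor_to_mirror_m, reported_range_m, real_object, phantom_point


to_mirror, reported, real_obj, phantom = mirror_return(2.0, math.radians(30), 3.0)
print(f"mirror at {to_mirror:.2f} m, sensor reports {reported:.2f} m")
print(f"real object at ({real_obj[0]:.2f}, {real_obj[1]:.2f})")
print(f"phantom point at ({phantom[0]:.2f}, {phantom[1]:.2f})")
```

In this model the phantom point is the mirror image of the real object across the mirror plane, which is why the map fills in a room behind the wall rather than random noise.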

Why does machine learning fail where a child excels?

The difficulty machines face in handling reflections highlights a vast difference between biological learning and machine learning. In psychoanalytic theory, there is a crucial phase known as the “mirror stage,” theorized by the psychoanalyst Jacques Lacan, who placed it between roughly 6 and 18 months of age. Developmental studies of mirror self-recognition typically find that, at around 18 to 24 months, a child learns to recognize that the reflected image is not another child, but themselves. This epiphany requires a complex understanding of the self, space, and basic physics.

Current deep learning systems, however sophisticated, lack this causal and contextual understanding of the world. They are trained on millions of static images, learning to associate specific pixel patterns with specific labels (e.g., “cat,” “chair,” “door”). If the training dataset does not include a massive number of specific examples of mirrors under every possible lighting condition and angle, the neural network will never develop the abstract concept of a “reflective surface.” The machine is unaware of its own existence, does not know what it looks like, and, consequently, cannot use its own reflection as a clue to infer the presence of a mirror.

The implications for home automation

This blind spot is not merely an academic curiosity; it has immediate practical implications in the field of automation. High-end robot vacuums, which map our homes with millimeter precision, often behave erratically in the presence of floor-to-ceiling mirrors or highly reflective glass doors. They may repeatedly attempt to pass through the glass, get stuck in endless loops trying to clean a non-existent “room,” or corrupt the digital map of the home by superimposing reflected geometry on the real geometry.

For the robotics industry, overcoming this obstacle has become a true benchmark. It is not merely a matter of preventing a vacuum cleaner from getting scratched, but of ensuring safety for future assistive robots. Imagine a humanoid robot designed to assist the elderly or people with disabilities: a spatial miscalculation caused by a reflection could lead to falls, accidents, or damage to fragile objects. The ability to reliably identify transparent and reflective surfaces has become a fundamental metric for evaluating the reliability of new autonomous navigation systems.

Beyond the Reflection: Solutions of Technological Progress

How are engineers and researchers addressing this problem? Technological progress is driving a shift toward multimodal solutions that do not rely on a single type of sensor. One of the most promising strategies is sensor fusion. By combining visual data from cameras, LiDAR measurements, and, crucially, ultrasonic sensors (sonar), robots can cross-reference information. While light passes through glass or is redirected by a mirror, sound waves bounce back from both, just as they do from any solid surface. If the LiDAR indicates “open space” but the sonar indicates an “obstacle at 10 centimeters,” the algorithm can infer the presence of a mirror or glass.
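The cross-check described above can be sketched as a simple rule in Python. This is only an illustration under stated assumptions: the function name `fuse_range`, the labels, and the 0.15 m tolerance are hypothetical, and production systems typically use probabilistic fusion rather than a single threshold.

```python
from typing import Optional, Tuple


def fuse_range(
    lidar_m: Optional[float],
    sonar_m: Optional[float],
    tolerance_m: float = 0.15,  # assumed disagreement threshold, not a standard value
) -> Tuple[str, Optional[float]]:
    """Compare one LiDAR range with one sonar range along the same bearing.

    Returns a label plus the range a cautious planner should use.
    None means the sensor produced no reading.
    """
    if lidar_m is None and sonar_m is None:
        return ("no_data", None)
    if sonar_m is None:
        return ("lidar_only", lidar_m)
    if lidar_m is None:
        return ("sonar_only", sonar_m)

    if lidar_m - sonar_m > tolerance_m:
        # Light reports open space but sound echoes from something close:
        # the signature of a mirror or glass pane. Trust the nearer reading.
        return ("suspect_mirror_or_glass", sonar_m)
    if sonar_m - lidar_m > tolerance_m:
        # Sound overshoots, for example off a soft or angled surface.
        return ("suspect_sonar_miss", lidar_m)
    return ("consistent", min(lidar_m, sonar_m))


# The case from the text: LiDAR sees 4 m of open space, sonar an obstacle at 10 cm.
print(fuse_range(4.0, 0.10))
# Both sensors roughly agree on an ordinary wall.
print(fuse_range(1.00, 1.05))
```

Returning the nearer range whenever the sensors disagree is a deliberately conservative choice: a false obstacle costs a detour, while a missed mirror costs a collision.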

Furthermore, researchers are developing neural networks specialized in the “semantic segmentation of reflective surfaces.” Instead of looking only for solid objects, these networks are trained to detect visual anomalies typical of mirrors: discontinuities in floor edges, differences in lighting between the reflection and the real environment, and the presence of the robot itself within the image. The aim is to teach machines not merely to look, but to infer context, endowing them with a form of artificial “spatial common sense.”

In Brief (TL;DR)

Despite the incredible power of modern artificial intelligence, the most advanced robots are unable to recognize a common household object like a mirror.

Cameras and laser sensors interpret reflective surfaces as navigable extensions of space, creating dangerous optical illusions and completely erroneous three-dimensional mappings.

This obstacle highlights a profound shortcoming of machine learning, which recognizes visual patterns but lacks the spatial awareness of humans.

Conclusions

The mirror serves as a perfect metaphor for the current state of artificial intelligence. It reminds us that, despite the extraordinary milestones achieved in natural language processing and abstract computation, physical interaction with the chaotic real world remains a formidable challenge. Machines can calculate planetary orbits or simulate protein folding, yet they stumble when faced with an optical illusion that a two-year-old child can easily see through. Solving the problem of the domestic blind spot means not only improving our household appliances but taking a fundamental step toward creating synthetic brains endowed with genuine spatial awareness. Until then, the mirror will continue to reflect not only our rooms but also the fascinating limitations of the technology we are striving to create.

Frequently Asked Questions

Robot vacuums struggle to recognize reflective surfaces because their optical and laser sensors are deceived. Instead of detecting a solid obstacle, the cameras perceive the reflection of the room as open space, causing the device to attempt to pass through the glass.

When the laser beam of a navigation system strikes a reflective surface, it does not return directly but bounces off toward other objects in the room. This measurement error leads the machine to believe that there is a much deeper empty space, creating non-existent rooms in its digital map.

Engineers are adopting sensor fusion, a technique that combines cameras, lasers, and ultrasonic sensors. Since sound waves bounce back from glass and mirrors, whereas light passes through glass or is redirected by a mirror, the system cross-references the data to detect the presence of an obstacle and correct the visual error.

Humans use intuition and context to realize they are facing a reflective surface by recognizing their own image. Artificial systems analyze only pixel patterns and, without specific training on these visual anomalies, fail to grasp the concept of reflection.

The inability to correctly map reflective spaces poses a serious safety risk for assistive robots. A spatial miscalculation could lead to collisions, falls, or damage to fragile objects, making the development of advanced spatial awareness essential.

