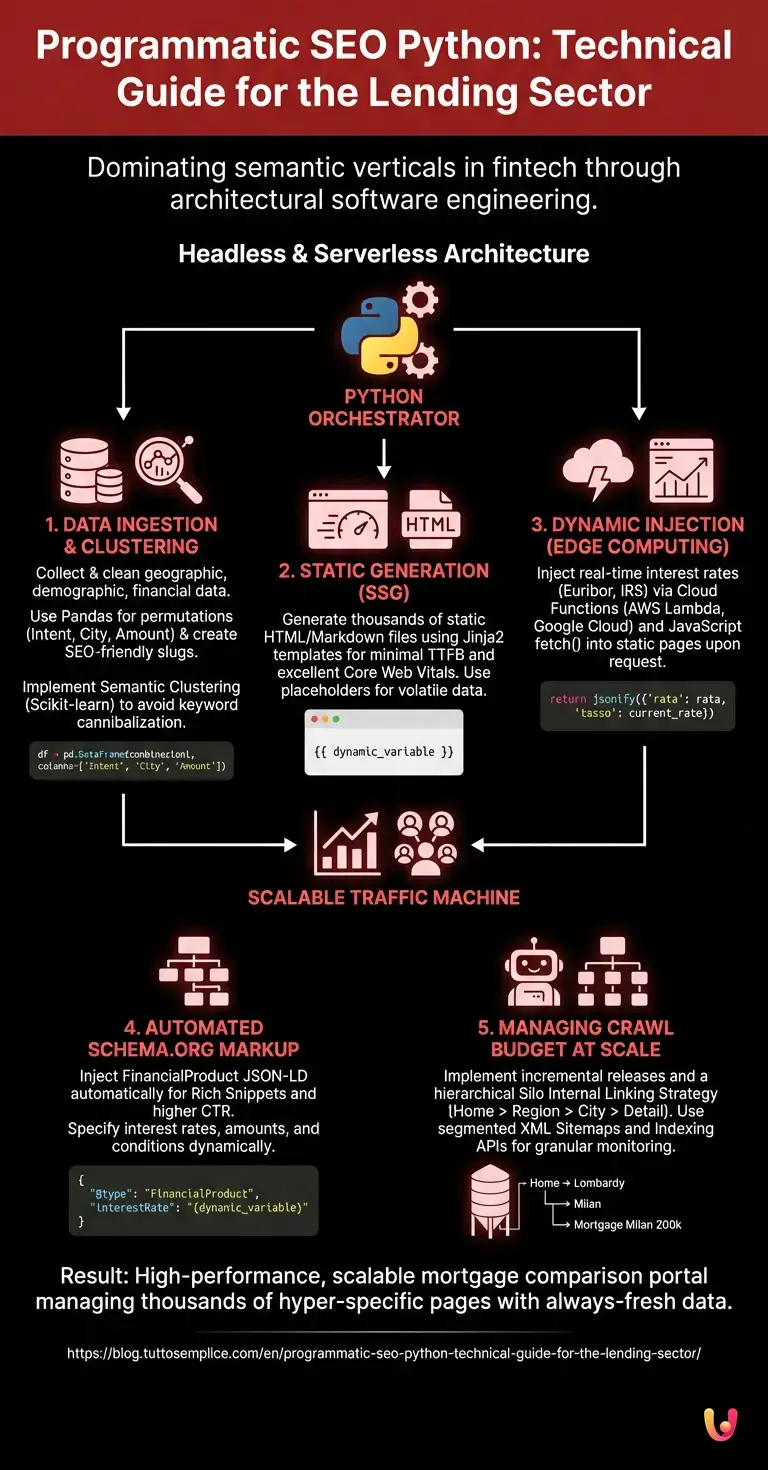

In the digital landscape of 2026, competition for organic visibility in the financial sector is no longer played out on single keywords, but on the ability to dominate entire semantic verticals through software engineering. **Programmatic SEO** is the discipline behind this shift: no longer a purely editorial activity, but an architectural process. In this technical guide, we will explore how **programmatic SEO with Python** can transform a mortgage comparison portal into a scalable traffic acquisition machine, managing thousands of hyper-specific landing pages (e.g., “Fixed rate mortgage Milan €200,000”) without sacrificing performance or data quality.

The Architecture of Programmatic SEO in Fintech

For financial portals, the challenge is twofold: scaling the number of pages to intercept long-tail queries, and keeping volatile data such as Euribor or IRS (interest rate swap) rates up to date. A traditional approach based on a monolithic CMS (like standard WordPress) would either struggle under the weight of 50,000 pages or serve obsolete data.

The solution lies in a **Headless and Serverless** architecture, where Python acts as the orchestrator. The operational workflow is divided into three distinct phases:

- Data Ingestion & Clustering: Collection and cleaning of data (geographic, demographic, financial).

- Static Generation (SSG): Creation of the HTML skeleton of pages to ensure excellent Core Web Vitals.

- Dynamic Injection (Edge Computing): Real-time injection of interest rates via Cloud Functions.

1. Dataset Preparation with Python and Pandas

The heart of **programmatic SEO with Python** is data. For a mortgage portal, we must cross-reference three dimensions: Intent (first home mortgage, refinancing), Geolocation (cities, neighborhoods), and Amount.

Using the Pandas library, we can create a DataFrame that holds all logical permutations, and then drop those that make no commercial sense.

```python
import itertools

import pandas as pd

# Definition of dimensions
intenti = ['Fixed Rate Mortgage', 'Variable Rate Mortgage', 'Mortgage Refinancing']
citta = ['Milan', 'Rome', 'Naples', 'Turin']  # In production: complete ISTAT dataset
importi = ['100000', '150000', '200000']

# Generation of combinations (Cartesian product of the three dimensions)
combinazioni = list(itertools.product(intenti, citta, importi))
df = pd.DataFrame(combinazioni, columns=['Intent', 'City', 'Amount'])

# Creation of SEO-friendly slug
df['slug'] = df.apply(
    lambda x: f"{x['Intent']}-{x['City']}-{x['Amount']}".lower().replace(' ', '-'),
    axis=1,
)
```
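The snippet above generates every permutation but does not yet exclude the ones without commercial sense. A minimal sketch of that filter follows, applied before the DataFrame is built. The rule itself (`MIN_AMOUNT_BY_INTENT` and `is_commercially_viable`) is a hypothetical placeholder and not market data; a real portal would derive it from search volumes or product constraints.

```python
import itertools

intenti = ['Fixed Rate Mortgage', 'Variable Rate Mortgage', 'Mortgage Refinancing']
citta = ['Milan', 'Rome', 'Naples', 'Turin']
importi = [100000, 150000, 200000]

# Hypothetical business rule: minimum amount worth targeting per intent.
# The threshold is an illustrative assumption, not a real figure.
MIN_AMOUNT_BY_INTENT = {'Mortgage Refinancing': 150000}


def is_commercially_viable(intent: str, city: str, amount: int) -> bool:
    """Return True when the combination deserves its own landing page."""
    return amount >= MIN_AMOUNT_BY_INTENT.get(intent, 0)


# Keep only the combinations that pass the rule.
combinazioni = [
    combo
    for combo in itertools.product(intenti, citta, importi)
    if is_commercially_viable(*combo)
]
```

The filtered list can be passed to `pd.DataFrame` exactly as before, so the slug generation step stays unchanged.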

Semantic Clustering to Avoid Cannibalization

One of the biggest risks of programmatic SEO is keyword cannibalization. Google might not distinguish between “Mortgage Milan” and “Mortgages Milan”. To mitigate this risk, it is necessary to implement clustering algorithms before generation.

Using libraries like Scikit-learn or PolyFuzz, we can group keywords that are too similar and programmatically decide to generate a single master page that answers multiple close intents, or use the canonical tag dynamically.
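As a rough illustration of the grouping step, the sketch below uses the standard library's `difflib` as a stand-in for Scikit-learn or PolyFuzz, so it is a simplification rather than the clustering those libraries perform. The `cluster_keywords` function, the greedy strategy, and the 0.9 threshold are all assumptions chosen for the example.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1] between two keywords."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def cluster_keywords(keywords: list[str], threshold: float = 0.9) -> list[list[str]]:
    """Greedy clustering: a keyword joins the first cluster whose
    representative (its first member) is at least `threshold` similar."""
    clusters: list[list[str]] = []
    for kw in keywords:
        for cluster in clusters:
            if similarity(kw, cluster[0]) >= threshold:
                cluster.append(kw)
                break
        else:
            # No sufficiently similar cluster found: start a new one.
            clusters.append([kw])
    return clusters


keywords = ['Mortgage Milan', 'Mortgages Milan', 'Mortgage Rome', 'Mortgage Refinancing Turin']
clusters = cluster_keywords(keywords)

# The first keyword of each cluster acts as the canonical ("master") page.
canonical_map = {kw: cluster[0] for cluster in clusters for kw in cluster}
```

With this mapping, a page generated for a variant keyword can either be skipped or emit a canonical tag pointing at the master page of its cluster.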

2. Page Generation and Template Management

Once the dataset is structured, we use Jinja2 (Python templating engine) to generate static HTML files or Markdown files for a Headless CMS (like Strapi or Contentful). The advantage of the static approach is speed: Time to First Byte (TTFB) is minimal, a critical factor for Core Web Vitals.

The template must provide “placeholder” spaces for financial data that changes daily. We do not “hardcode” the interest rate in the static HTML, as it would require a new site build every morning.
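A minimal sketch of such a template with Jinja2 is shown below. The markup, the `PAGE_TEMPLATE` name, and the `data-amount` attribute are illustrative assumptions; the only fixed point is that the rate is left out of the static HTML and an empty `live-rates` container is rendered in its place.

```python
from jinja2 import Template

# Hypothetical page template: the interest rate is NOT rendered at build time.
# The empty div is filled client-side, so a rate change needs no rebuild.
PAGE_TEMPLATE = Template(
    """<!doctype html>
<html lang="en">
<head><title>{{ intent }} in {{ city }} - EUR {{ amount }}</title></head>
<body>
  <h1>{{ intent }} in {{ city }} for EUR {{ amount }}</h1>
  <div id="live-rates" data-amount="{{ amount }}"></div>
</body>
</html>"""
)

# One row of the dataset, as produced by the permutation step.
row = {'Intent': 'Fixed Rate Mortgage', 'City': 'Milan', 'Amount': '200000'}
html = PAGE_TEMPLATE.render(intent=row['Intent'], city=row['City'], amount=row['Amount'])
```

In a full build, the same `render` call would run once per DataFrame row and write the result to a file named after the row's slug.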

3. Dynamic Rate Injection: AWS Lambda and Google Cloud Functions

Here advanced engineering comes into play. To show the Euribor rate updated to 02/15/2026 on 50,000 static pages without regenerating them, we use a microservices architecture.

- The Frontend (Static Page): Contains an empty div with id="live-rates".

- The Backend (Serverless): A Python function on AWS Lambda or Google Cloud Functions that queries the ECB (European Central Bank) APIs or the bank’s internal databases.

Upon opening the page, a lightweight JS script executes a fetch() call to the Cloud Function, passing the page parameters (e.g., amount and duration). The function returns the installment calculated from the most recent rate available.

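```python
# Conceptual example of a Cloud Function (Python).
# `database` and `calculate_amortization` are placeholders for the portal's
# own data layer and installment logic; they are not defined here.
from flask import jsonify


def get_mortgage_rate(request):
    request_json = request.get_json()
    amount = request_json['amount']

    # Logic to retrieve the updated IRS/Euribor rate
    current_rate = database.get_latest_irs_10y()
    rata = calculate_amortization(amount, current_rate)

    # In production, return the rate's actual reference date instead of a literal
    return jsonify({'rata': rata, 'tasso': current_rate, 'data': '15/02/2026'})
```

This hybrid approach ensures that Google indexes fast (static) content while the user always sees fresh (dynamic) data, improving User Experience (UX) signals.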
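The `calculate_amortization` helper is left undefined in the conceptual example. One plausible implementation is sketched here, assuming a constant-installment (French) amortization plan with monthly payments. The `years` parameter and its default of 25 are assumptions, since the original call passes only the amount and the rate, and the rate is assumed to be expressed in percent.

```python
def calculate_amortization(amount: float, annual_rate_pct: float, years: int = 25) -> float:
    """Monthly installment for a constant-payment loan.

    annual_rate_pct is the nominal annual rate in percent (e.g. 3.0 for 3%).
    """
    n_payments = years * 12
    monthly_rate = annual_rate_pct / 100 / 12
    if monthly_rate == 0:
        # Without interest the principal is simply split evenly.
        return round(amount / n_payments, 2)
    # Standard annuity formula: P * r * (1 + r)^n / ((1 + r)^n - 1)
    factor = (1 + monthly_rate) ** n_payments
    return round(amount * monthly_rate * factor / (factor - 1), 2)
```

Because the page already passes amount and duration to the function, the duration would replace the default `years` value in a real deployment.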

4. Schema.org and Automated FinancialProduct Markup

To dominate SERPs in 2026, structured data is not optional. In the Python generation script, we must automatically inject the specific JSON-LD markup for financial products.

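Using the Schema.org FinancialProduct class, we can specify interest rates, fees, and conditions. Note that the amount property is defined on the more specific LoanOrCredit subtype, so a stricter validator may expect that type for mortgage pages. Here is how to structure the markup dynamically: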

```python
# `row` is one row of the DataFrame built during dataset preparation
script_schema = {
    "@context": "https://schema.org",
    "@type": "FinancialProduct",
    "name": f"{row['Intent']} in {row['City']}",
    "interestRate": "{dynamic_variable}",  # Populated via JS or estimated at build time
    "amount": {
        "@type": "MonetaryAmount",
        "currency": "EUR",
        "value": row['Amount'],
    },
}
```

Correct implementation of this schema helps search engines interpret the page and can improve its eligibility for enriched results, which in turn tends to raise CTR (Click-Through Rate) even when the page is not in the absolute first position.
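To turn the dictionary into markup that the generation script can drop into each page, it has to be serialized as JSON inside a script tag. The sketch below shows one way to do that. The `build_jsonld_script` name and the choice to omit `interestRate` when no value is known at build time are assumptions, not part of the original workflow.

```python
import json
from typing import Optional


def build_jsonld_script(row: dict, interest_rate: Optional[float] = None) -> str:
    """Serialize the schema as a JSON-LD <script> tag for the page <head>."""
    schema = {
        "@context": "https://schema.org",
        "@type": "FinancialProduct",
        "name": f"{row['Intent']} in {row['City']}",
        "amount": {
            "@type": "MonetaryAmount",
            "currency": "EUR",
            "value": int(row['Amount']),
        },
    }
    # Emit interestRate only when a real value is available;
    # a literal placeholder string would not be meaningful structured data.
    if interest_rate is not None:
        schema["interestRate"] = interest_rate
    payload = json.dumps(schema, ensure_ascii=False, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


row = {'Intent': 'Fixed Rate Mortgage', 'City': 'Milan', 'Amount': '200000'}
jsonld_tag = build_jsonld_script(row, interest_rate=3.1)
```

The returned string can be passed to the page template as one more variable.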

5. Managing Crawl Budget at Scale

Launching 100,000 pages in a day is the best way to be ignored by Google. The search engine assigns a limited Crawl Budget to every domain. To manage the indexing of a **programmatic SEO** project built with Python, an incremental release plan is necessary.
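An incremental release plan can be as simple as splitting the generated slugs into dated batches. The sketch below illustrates the idea; `release_schedule`, the batch size, and the weekly cadence are hypothetical values to tune against the crawl statistics actually observed for the domain.

```python
from datetime import date, timedelta


def release_schedule(slugs, start, batch_size, every_days=7):
    """Yield (publish_date, batch) pairs, one batch every `every_days` days."""
    for i in range(0, len(slugs), batch_size):
        batch_index = i // batch_size
        yield start + timedelta(days=every_days * batch_index), slugs[i:i + batch_size]


# Ten example pages released four at a time, one batch per week.
slugs = [f"page-{i}" for i in range(10)]
plan = list(release_schedule(slugs, date(2026, 3, 2), batch_size=4))
```

Each batch can then drive both the deployment of its pages and the update of the corresponding sitemap.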

Silo Internal Linking Strategy

Do not link all pages from the home page. Create a hierarchical silo structure:

- Level 1: Home Page

- Level 2: Regional Pages (Mortgages Lombardy)

- Level 3: City Pages (Mortgages Milan)

- Level 4: Detail Pages (Mortgages Milan 200k)

The Python script must also automatically generate segmented XML Sitemap files (e.g., sitemap-milan.xml, sitemap-rome.xml) to monitor indexing via Google Search Console in a granular way.
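The segmentation by city could look like the following sketch, built on the standard library's `xml.etree.ElementTree`. `BASE_URL` is a placeholder domain and `build_city_sitemaps` is a hypothetical helper; the input is assumed to be a list of rows with the `City` and `slug` fields from the dataset step.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

BASE_URL = "https://www.example.com"  # placeholder domain (assumption)
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_city_sitemaps(pages: list[dict]) -> dict[str, str]:
    """Return {filename: xml_string}, with one sitemap per city."""
    by_city = defaultdict(list)
    for page in pages:
        by_city[page['City']].append(page['slug'])

    sitemaps = {}
    for city, slugs in by_city.items():
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for slug in slugs:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = f"{BASE_URL}/{slug}"
        xml_body = ET.tostring(urlset, encoding="unicode")
        filename = f"sitemap-{city.lower()}.xml"
        sitemaps[filename] = '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body
    return sitemaps


pages = [
    {'City': 'Milan', 'slug': 'fixed-rate-mortgage-milan-200000'},
    {'City': 'Milan', 'slug': 'variable-rate-mortgage-milan-150000'},
    {'City': 'Rome', 'slug': 'fixed-rate-mortgage-rome-100000'},
]
sitemaps = build_city_sitemaps(pages)
```

Since the sitemap protocol caps each file at 50,000 URLs, a large city may need further splitting, with a sitemap index file listing all segments.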

Indexing API and Ping

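For the most urgent content, submission can be automated from Python with the requests library. Note that Google officially supports the Indexing API only for pages with JobPosting or BroadcastEvent markup, so using it for financial news is an experiment outside the documented scope. Google also deprecated its sitemap ping endpoint in 2023, so the dependable signal today is an accurate lastmod value in the segmented sitemaps submitted through Search Console.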

Conclusions: Beyond Content

**Programmatic SEO with Python** in the credit sector is not about writing texts with AI, but about building a resilient infrastructure capable of answering millions of specific queries with precise data. The integration between static generation for speed and Cloud Functions for financial data accuracy represents the state of the art for 2026. Those who master this intersection between code and marketing not only gain positions but build a digital asset that is difficult for competitors still relying on manual processes to replicate.

Frequently Asked Questions

Programmatic SEO in the financial sector is an architectural approach that uses software to massively generate web pages optimized for specific long-tail queries, instead of creating them manually. This method allows intercepting thousands of vertical searches, such as mortgage combinations for specific cities and amounts, transforming a portal into a scalable traffic acquisition machine without sacrificing data quality or site performance.

To show volatile data like Euribor or IRS rates on static pages without having to continuously regenerate them, a hybrid architecture with dynamic injection is used. While the HTML structure of the page is pre-generated to ensure speed, rate values are inserted in real-time via Cloud Functions and JavaScript at the moment the page opens, ensuring the user always views the most recent financial conditions.

To prevent Google from confusing pages that are too similar, it is necessary to implement semantic clustering algorithms using Python libraries like Scikit-learn before content generation. This process groups keywords with almost identical intents, allowing the creation of a single master page for multiple variants or programmatically managing canonical tags, signaling to the search engine which is the main resource to index.

To maximize visibility in SERPs, it is fundamental to automate the insertion of Schema.org markup of the FinancialProduct type. This provides Google with structured details such as interest rates, amounts, and currency directly in the JSON-LD code, which can improve eligibility for enriched results and, with it, the click-through rate on search results.

Managing Crawl Budget requires an incremental release strategy and a hierarchical silo internal linking structure, avoiding linking everything from the home page. It is essential to segment XML sitemaps to monitor indexing at a granular level, for example by city or region, and to signal priority content through accurate sitemap updates or, where Google policies permit, indexing APIs, without overloading the search engine’s crawling resources.
