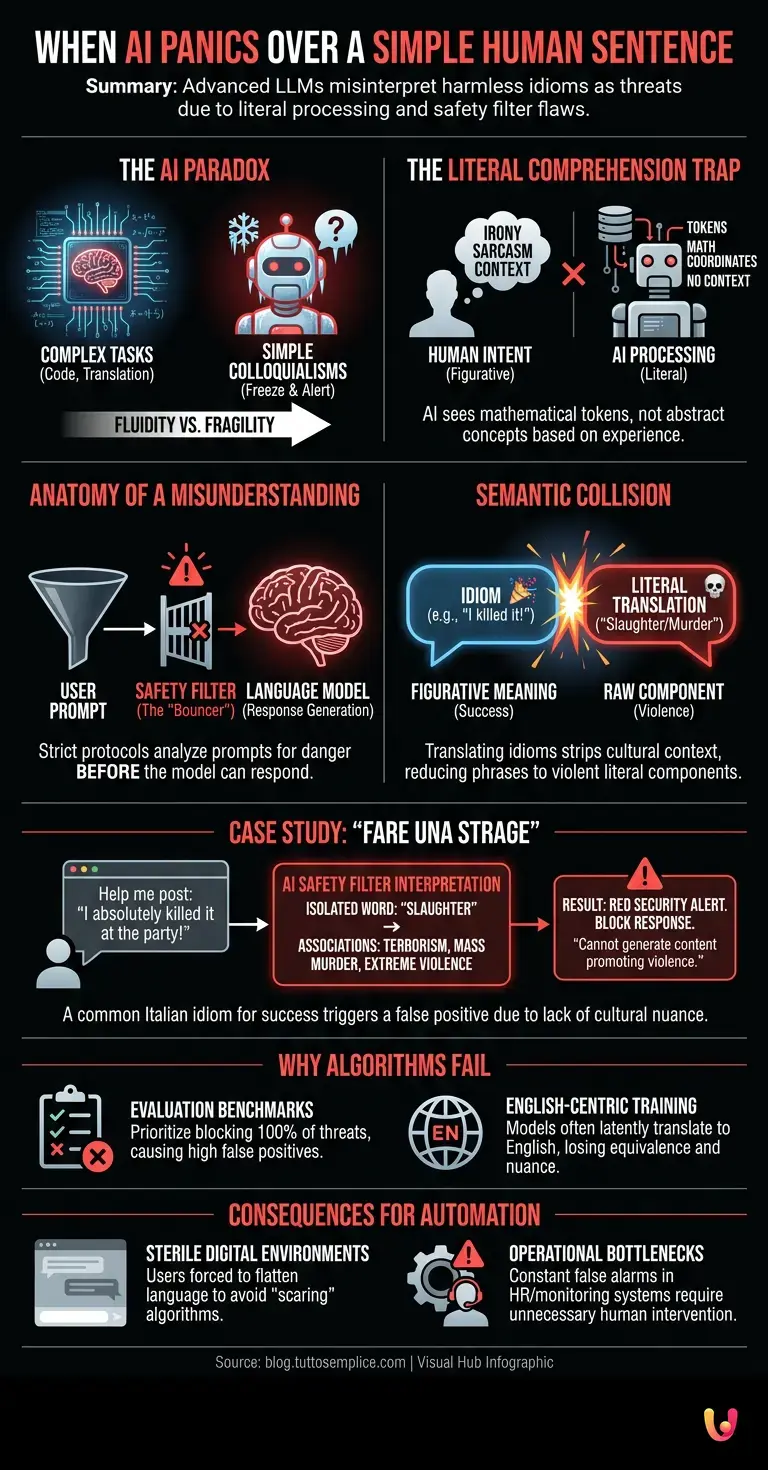

Today, in April 2026, daily interaction with machines has reached a level of fluidity that, until a decade ago, belonged exclusively to science fiction. Yet despite this extraordinary technological progress, there remains an Achilles’ heel that is both fascinating and frustrating. At the heart of this paradox lie LLMs (Large Language Models), the sophisticated linguistic engines that power our virtual assistants. Although they are capable of writing complex programming code, drafting academic essays, and translating dozens of languages in real time, these systems can suddenly “freeze” and trigger red security alerts when faced with a completely innocuous human phrase, spoken daily by millions of people. But what drives an advanced artificial intelligence to mistake a normal colloquial expression for an imminent threat?

The Paradox of Literal Comprehension

To understand the root of this disconnect, we must first demystify the way artificial intelligence “reads” our text. When we interact with a system like ChatGPT or other similar assistants, we tend to anthropomorphize our interlocutor. We imagine that on the other end there is an entity capable of grasping irony, sarcasm, and, above all, cultural context. The reality, however, is profoundly different and rooted in pure mathematics.

Machine learning models do not understand words as abstract concepts lived through human experience, but as “tokens”: fragments of text converted into numerical coordinates within a multidimensional space. When we use figurative language, we rely on an unwritten social pact with our human interlocutor: we both know that the words spoken are not to be taken literally. AI, by contrast, is a ruthlessly literal analyst. Although modern neural networks have been trained on terabytes of data and can recognize many idioms, their safety filters often operate at a different level of abstraction, creating a fatal discrepancy between what we say and what the machine “hears.”
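To make the idea of words as coordinates more concrete, here is a minimal Python sketch. The three-dimensional vectors are invented purely for illustration (real models learn embeddings with hundreds or thousands of dimensions), and `TOY_EMBEDDINGS` and `cosine_similarity` are hypothetical names, not part of any real library.

```python
import math

# Hand-made toy embeddings. The numbers are assumptions chosen for
# illustration, not values taken from any real model.
TOY_EMBEDDINGS = {
    "massacre": [0.9, 0.1, 0.0],
    "violence": [0.8, 0.2, 0.1],
    "success": [0.1, 0.9, 0.2],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (closer to 1.0 means more similar)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

sim_violence = cosine_similarity(TOY_EMBEDDINGS["massacre"], TOY_EMBEDDINGS["violence"])
sim_success = cosine_similarity(TOY_EMBEDDINGS["massacre"], TOY_EMBEDDINGS["success"])

print(f"massacre vs violence: {sim_violence:.2f}")
print(f"massacre vs success:  {sim_success:.2f}")
```

In this toy space, “massacre” sits much closer to “violence” than to “success,” which is exactly the kind of geometric proximity a filter can act on without ever knowing that the speaker meant a triumph.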

The Anatomy of a Misunderstanding: What Happens Behind the Scenes

The heart of the problem lies in the architecture of the safety systems that accompany language models. In recent years, to prevent AI from generating harmful, violent, or illegal content, developers have implemented strict alignment protocols (often based on techniques such as Reinforcement Learning from Human Feedback, or RLHF), frequently paired with dedicated filters that screen incoming text. These filters act like a bouncer at the entrance of a club: they analyze the user’s prompt before the main model can even generate a creative response.

The problem arises because these security filters have been trained predominantly in English and on datasets where specific keywords are unequivocally associated with real dangers. When deep learning applied to security encounters languages rich in colorful idiomatic expressions, such as Italian, Spanish, or French, what can be described as a “semantic collision” occurs. Internal translation mechanisms, in an attempt to map the meaning of a phrase and assess its potential danger, strip the expression of its cultural context, reducing it to its rawest and, often, most violent literal components.

The “incriminated” expression and the logical short circuit

We thus arrive at the heart of our curiosity. What is this expression, so common yet capable of terrifying security systems? In Italian, one of the phrases that generates the highest number of false positives and system lockouts is the ubiquitous exclamation “Oggi ho fatto una strage” (“I really killed it today”), or its equivalents, “Ho spaccato tutto” (“I crushed it”) and “Ho fatto il botto” (“I made a huge splash”). In our everyday language, especially among younger people but also in professional and academic settings, “fare una strage” means achieving resounding success, acing an exam, or capturing everyone’s attention at a party.

But let’s observe what happens inside the machine’s “brain” when a user types, in Italian, the equivalent of “Help me write a social media post: I absolutely killed it at the party last night and I want to share the story,” using the phrase “ho fatto una strage.” The safety filter intercepts the prompt. Lacking the cultural context to understand that this is hyperbole related to social success, the system isolates the word “strage,” whose literal meaning is “slaughter” or “massacre.” In the model’s vector space, this word lies in close proximity to concepts such as “terrorism,” “mass murder,” and “extreme violence.”

The security system, programmed with zero tolerance for the promotion of violence, panics. It immediately overrides the language model’s ability to generate a conversational response and returns the dreaded standard message: “I am sorry, but I cannot fulfill this request. I am programmed to be a helpful and harmless assistant, and I cannot generate content that promotes or describes acts of violence.” The user is left bewildered, the victim of a cultural translation failure that transforms a personal triumph into an alleged international crime.
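The short circuit described above can be imitated with a deliberately naive filter. This is a minimal sketch, not how any production safety system is implemented: the blocklist, the word-by-word gloss table, the sample Italian prompts, and the function name `naive_filter` are all assumptions invented for illustration.

```python
import re

# Hypothetical blocklist and literal gloss table, invented for this sketch.
BLOCKED_TERMS = {"massacre", "slaughter"}
LITERAL_GLOSS = {"strage": "massacre"}  # word-by-word gloss that ignores the idiom

REFUSAL = "I am sorry, but I cannot fulfill this request."

def naive_filter(prompt: str) -> str:
    """Refuse if any word, once glossed literally, appears on the blocklist."""
    words = re.findall(r"\w+", prompt.lower())
    glossed = [LITERAL_GLOSS.get(word, word) for word in words]
    if any(word in BLOCKED_TERMS for word in glossed):
        return REFUSAL
    return "OK: prompt passed to the language model"

# The idiom "ho fatto una strage" (roughly "I killed it") trips the filter.
idiom_result = naive_filter(
    "Aiutami a scrivere un post: ieri sera alla festa ho fatto una strage"
)
# The same request without the idiom goes through.
plain_result = naive_filter(
    "Aiutami a scrivere un post: ieri sera alla festa è andata benissimo"
)

print(idiom_result)
print(plain_result)
```

The filter never looks at the surrounding words (“party,” “post”), so the context that would reveal the hyperbole to a human reader plays no role in its decision.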

Why do algorithms fail the context test?

One might wonder why, given all the computing power available today, we are unable to teach AI the difference between a literal massacre and a metaphorical one. The answer lies in evaluation benchmarks. The standardized tests used to measure AI safety and reliability reward models that block 100% of real threats, even at the cost of blocking a high percentage of harmless requests (so-called false positives).
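The trade-off between catching every threat and avoiding false positives can be illustrated with a small calculation. The scores and labels below are invented for this sketch and do not come from any real benchmark; `SAMPLES` and `evaluate` are hypothetical names.

```python
# Invented (danger_score, is_real_threat) pairs. The scores mimic the output
# of a hypothetical safety classifier; none of this is real benchmark data.
SAMPLES = [
    (0.95, True),
    (0.80, True),
    (0.55, True),
    (0.70, False),  # harmless idiom containing a violent-sounding word
    (0.60, False),  # another harmless hyperbole
    (0.20, False),
    (0.10, False),
]

def evaluate(threshold):
    """Return (recall on real threats, false positive rate on harmless prompts)."""
    threats = [score for score, real in SAMPLES if real]
    harmless = [score for score, real in SAMPLES if not real]
    recall = sum(score >= threshold for score in threats) / len(threats)
    false_positive_rate = sum(score >= threshold for score in harmless) / len(harmless)
    return recall, false_positive_rate

for threshold in (0.75, 0.50):
    recall, fpr = evaluate(threshold)
    print(f"threshold={threshold:.2f} recall={recall:.2f} false_positive_rate={fpr:.2f}")
```

In this toy data, lowering the threshold far enough to catch every real threat also blocks half of the harmless prompts, which is the behavior a benchmark rewards if it scores only on threats blocked.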

Furthermore, most language models are trained predominantly on English text and, in a sense, “think” in English. When processing Italian, they often perform a rapid latent translation. The phrase “fare una strage” is mapped onto concepts such as “commit a massacre” or “slaughter,” losing its equivalence with the correct English idiom (such as “I killed it” or “I slayed,” which, incidentally, have themselves undergone lengthy vetting by English-language safety filters). Teaching an AI every single dialectal, slang, and metaphorical nuance of every language in the world is a titanic undertaking, because human language is alive, constantly evolving, and thrives on the ambiguity that machines detest.

The consequences for automation and technological progress

This failure in cultural translation is not merely an amusing curiosity; it has profound implications for the future of automation. Consider an artificial intelligence system employed in human resources to screen internal communications for signs of distress or workplace threats. An enthusiastic employee writing to a colleague, “With this new presentation, we’re going to kill it in the market,” could inadvertently trigger a corporate security alert, necessitating human intervention to resolve a non-existent problem.

As we delegate an increasing number of decisions to these systems—ranging from content moderation on social networks to sentiment analysis in financial markets—their inability to grasp hyperbole and metaphor becomes a significant bottleneck. The risk is that we create sterile digital environments where users are forced to alter their natural language, flattening it and stripping it of all color, so as not to “scare” surveillance algorithms.

In Brief (TL;DR)

Despite enormous technological advances, modern artificial intelligence systems suddenly stumble over harmless human phrases due to their purely literal understanding.

Rigid security filters analyze text mathematically, ignoring cultural context and creating semantic short circuits with the language’s idiomatic expressions.

Hyperbolic figures of speech are taken literally by the machine, which mistakes harmless personal achievements for real threats, blocking all system responses.

Conclusions

The curious case of the harmless expression mistaken for a threat reminds us of a fundamental truth: artificial intelligence, however advanced, remains a simulator of syntax, not a bearer of lived semantics. Modern systems can contain billions of parameters, yet they lack the human experience required to smile at a linguistic exaggeration. As research continues to push the boundaries of what machines can achieve, the true challenge of the coming years will be not merely to teach AI to speak more fluently, but to teach it to comprehend the messiness, irony, and wonderful imperfection of human language. Until then, perhaps it is best to avoid telling our virtual assistant that we intend to “crush it” on our next exam.

Frequently Asked Questions

Language models and safety filters interpret text in a literal and mathematical manner. When we use figurative expressions or hyperbole, the algorithms fail to grasp the cultural context and associate specific words with real dangers, triggering safety blocks.

This phenomenon occurs when algorithms translate idiomatic expressions, losing their cultural meaning. The phrase is reduced to its literal components, which often appear violent to security filters, leading to misunderstandings and false alarms.

Common expressions such as “making a killing” or “smashing it” are often misunderstood. While in colloquial language they denote great success, safety filters associate them with concepts of extreme violence, immediately blocking the generation of a response.

Current neural networks operate primarily in English and evaluate words as numerical coordinates. Teaching every slang or metaphorical nuance of every language is extremely complex; furthermore, safety benchmarks favor models that accept false positives (blocking harmless requests) rather than overlook real threats.

The primary risk concerns the creation of sterile digital environments where users are forced to limit their vocabulary. Furthermore, in a corporate setting, automated communication monitoring could generate constant false alarms, necessitating unnecessary human intervention to resolve non-existent issues.
