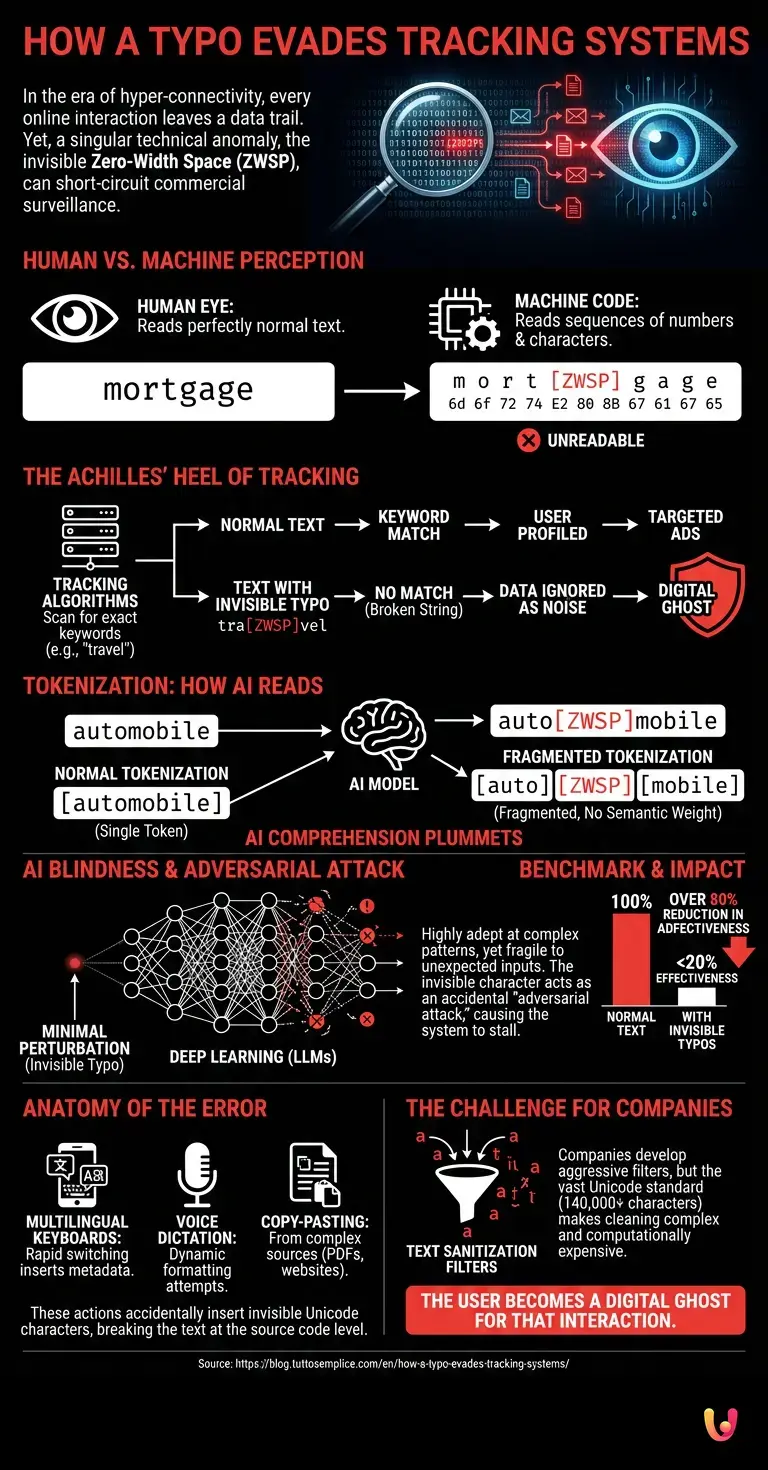

In the era of hyper-connectivity, every online interaction leaves a trail of data. From the websites we visit to the words we type into search engines, almost everything is recorded, cataloged, and analyzed. Yet one small technical anomaly can trip up parts of this immense commercial surveillance machine. The secret lies in an element as invisible as it is powerful: the Zero-Width Space (U+200B), a Unicode character that, whether inserted accidentally or intentionally while typing, can make text unreadable to keyword-based profiling systems while it still looks perfectly normal to the human eye.

To understand the significance of this computing curiosity, it is necessary to take a step back and observe how machines interpret human language. We read letters, syllables, and words, but computers read sequences of numbers. When a user makes a specific type of typing error, triggering a key combination that generates an invisible character or a homoglyph (a character that looks identical but has a different code point), an unintentional barrier is created. Strictly speaking, nothing is encrypted: the text is merely obfuscated, because it no longer matches the sequence of numbers the machine expects.
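To make the "sequences of numbers" concrete, here is a minimal Python sketch that lists the code points behind three strings that look the same on screen. The sample word and the `describe` helper are illustrative assumptions, not taken from any tracking system.

```python
import unicodedata

ZWSP = "\u200b"  # ZERO WIDTH SPACE


def describe(text: str) -> list[str]:
    """Return one 'U+XXXX NAME' entry per code point in text."""
    return [f"U+{ord(ch):04X} {unicodedata.name(ch, 'UNKNOWN')}" for ch in text]


plain = "travel"
with_zwsp = "tra" + ZWSP + "vel"
# U+0430 is CYRILLIC SMALL LETTER A, a homoglyph of the Latin letter 'a'.
with_homoglyph = "tr\u0430vel"

for label, word in [("plain", plain), ("zwsp", with_zwsp), ("homoglyph", with_homoglyph)]:
    # Length and raw UTF-8 bytes differ even when the rendered text looks the same.
    print(label, len(word), word.encode("utf-8"))
    for entry in describe(word):
        print("   ", entry)
```

All three strings render as the same word in most fonts, yet they compare as unequal, and the version with the invisible character is one code point longer.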

The Achilles’ Heel of Profiling Algorithms

Modern digital tracking systems rely on voracious text-mining algorithms. Their task is to scan our emails, social media posts, and search queries to extract keywords. If you frequently type the word “mortgage” or “travel,” data brokers will place you into specific market segments, bombarding you with targeted advertising.

However, these systems suffer from structural rigidity. They are programmed to recognize exact text strings or their most common variations. When a user inserts a Zero-Width Space within a word (for example, turning “mortgage” into “mort[ZWSP]gage”), whether due to a specific keyboard layout, copy-pasting from sources with unusual formatting, or hasty typing on touchscreens, a traditional keyword-based tracker fails. The word is broken at the code-point level. The tracker no longer identifies a potential customer interested in a loan, but instead records a string of nonsensical characters, discarding it as background noise.
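The failure of exact matching can be sketched in a few lines of Python. The `SEGMENTS` table and the `naive_segments` function are hypothetical stand-ins, since real ad-tech pipelines are not public. The point is only that a substring test sees a different sequence of code points once a Zero-Width Space sits inside the keyword.

```python
ZWSP = "\u200b"  # ZERO WIDTH SPACE

# Hypothetical keyword-to-segment table, invented for this illustration.
SEGMENTS = {"mortgage": "home-finance", "travel": "tourism"}


def naive_segments(text: str) -> set[str]:
    """Assign market segments by exact, case-insensitive substring match."""
    lowered = text.lower()
    return {segment for keyword, segment in SEGMENTS.items() if keyword in lowered}


clean = "Comparing mortgage rates before we travel"
broken = f"Comparing mort{ZWSP}gage rates before we tra{ZWSP}vel"

print(sorted(naive_segments(clean)))   # ['home-finance', 'tourism']
print(sorted(naive_segments(broken)))  # []
```

Both messages look identical on screen, but only the first is assigned to any segment by this naive matcher.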

Tokenization: How Machines Read

To fully understand this phenomenon, we must delve into the heart of artificial intelligence and machine learning. Modern language models do not process text word by word; instead, they use a process called tokenization. The text is broken down into smaller units called “tokens.”

In an advanced neural architecture, the word “automobile” might be a single token. But if an invisible typo is hidden within that word, the tokenization system (often based on Byte Pair Encoding) can no longer find it in its vocabulary. Instead of assigning the token corresponding to the concept of a vehicle, it fragments the word into isolated pieces or single characters that carry little semantic weight on their own. For a system that relies on that token, it is as if you never wrote the word. You have slipped under the radar.
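The fragmentation effect can be illustrated with a deliberately simplified tokenizer. This is not Byte Pair Encoding: it is a greedy longest-match lookup over a tiny, hypothetical vocabulary (`VOCAB`), with a single-character fallback, chosen only because it fits in a few lines. Real subword tokenizers learn their vocabularies from data and may split the same input differently.

```python
ZWSP = "\u200b"  # ZERO WIDTH SPACE

# Hypothetical toy vocabulary; real vocabularies hold tens of thousands of learned entries.
VOCAB = {"automobile", "auto", "mobile", "bile"}


def tokenize(text: str) -> list[str]:
    """Greedy longest-match tokenizer with a single-character fallback."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first and shrink until a vocabulary entry matches.
        for j in range(len(text), i, -1):
            if text[i:j] in VOCAB:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing matched: emit the single character as its own token.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("automobile"))                 # ['automobile']
print(tokenize("autom" + ZWSP + "obile"))     # ['auto', 'm', '\u200b', 'o', 'bile']
```

With the invisible character in place, the single whole-word token is replaced by five fragments, one of which is the Zero-Width Space itself.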

The Blindness of Artificial Intelligence in the Face of the Unforeseen

One might assume that the most advanced systems are immune to such trivial errors. In reality, deep learning is exceptionally adept at recognizing complex patterns, yet it can be surprisingly fragile in the face of minimal and unexpected perturbations. In machine-learning security, an input crafted to exploit this fragility is called an “adversarial example,” and using one is an “adversarial attack,” although in this instance it occurs entirely by accident.

Take, for example, large language models, or LLMs. Platforms like ChatGPT, or the sentiment analysis systems used by multinational corporations, are trained largely on clean, normalized text. When they encounter text contaminated by invisible characters or Unicode encoding errors resulting from anomalous keystrokes, their comprehension can degrade sharply. The automation designed to categorize your psychological profile or consumption habits stalls, because the input no longer maps onto the regions of its embedding space that it learned to associate with those words.

A Benchmark Test for Invisibility

Researchers in the fields of privacy and cybersecurity have begun studying this phenomenon with great interest. By subjecting tracking systems to rigorous benchmark tests, they reportedly found that the strategic (or accidental) insertion of these invisible typos can reduce the effectiveness of advertising profiling by more than 80%.

This is not a trivial programming flaw, but a consequence of the way computers represent text. Companies are responding with increasingly aggressive text “sanitization” filters, designed to remove non-standard characters before the text is analyzed. Stripping a single known character such as the Zero-Width Space is cheap, but the vastness of the Unicode standard, which encompasses over 140,000 characters including many look-alike letters, makes a complete cleaning operation complex and easy to get wrong.
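A minimal sketch of such a sanitization filter, using only Python's standard `unicodedata` module, might look like the following. The `sanitize` function is an illustrative assumption rather than any vendor's actual filter. It applies NFKC normalization and then drops every code point in the Unicode "format" category (`Cf`), which includes the Zero-Width Space.

```python
import unicodedata


def sanitize(text: str) -> str:
    """Apply NFKC normalization, then drop format (Cf) code points such as U+200B."""
    normalized = unicodedata.normalize("NFKC", text)
    return "".join(ch for ch in normalized if unicodedata.category(ch) != "Cf")


# The invisible character is removed and the keyword becomes matchable again.
print(sanitize("mort\u200bgage") == "mortgage")  # True

# A Cyrillic homoglyph (U+0430) is a letter, not a format character, so it survives.
print(sanitize("tr\u0430vel") == "travel")       # False
```

The second case shows why cleaning is harder than it first appears: handling homoglyphs requires a separate confusables mapping, which this sketch does not attempt. Dropping every `Cf` character is also a blunt instrument, since that category includes the zero-width joiner used in emoji sequences and the zero-width non-joiner that some scripts rely on.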

The Anatomy of Error: What Happens Behind the Scenes

But how does this error actually occur? It often happens when using multilingual keyboards on smartphones. Rapidly switching between layouts, or using voice dictation features that attempt to format text dynamically, can insert invisible formatting characters between letters. At other times, it is the result of copying and pasting from PDF documents or websites with complex formatting.

When we press “Send,” our browser transmits the entire sequence of bytes, invisible characters included. Ad servers, optimized for speed and for processing enormous volumes of requests, do not have time to perform a forensic analysis of every single word. Many simply apply standardized regular expressions (regex). If the regex looks for the word “smartphone” and finds “smart[invisible-character]phone,” the condition evaluates to false. The data is ignored. The user, for that fraction of a second and for that specific interaction, becomes a digital ghost.
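The regex behavior described above can be reproduced with Python's `re` module. The `STRICT` pattern mirrors the exact-keyword check in the text. The `tolerant_pattern` helper is a hypothetical countermeasure, included only to show what a more careful matcher would have to do, and the list of zero-width characters it tolerates is a small assumed subset.

```python
import re

# A small, assumed subset of zero-width code points: ZWSP, ZWNJ, ZWJ, WORD JOINER, BOM.
ZERO_WIDTH = "[\u200b\u200c\u200d\u2060\ufeff]"

# Exact keyword check, as a simple ad-server rule might write it.
STRICT = re.compile(r"\bsmartphone\b", re.IGNORECASE)


def tolerant_pattern(keyword: str) -> re.Pattern[str]:
    """Build a pattern that allows zero-width characters between the keyword's letters."""
    body = f"{ZERO_WIDTH}*".join(re.escape(ch) for ch in keyword)
    return re.compile(body, re.IGNORECASE)


message = "Looking for new smart\u200bphone deals"

print(STRICT.search(message) is None)                              # True: the strict rule misses it
print(tolerant_pattern("smartphone").search(message) is not None)  # True: the tolerant rule finds it
```

The tolerant version works, but it must be built per keyword and only covers the characters someone remembered to list, which illustrates why the simpler strict rule is so common.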

In Brief (TL;DR)

Inserting invisible characters, such as the zero-width space, either accidentally or intentionally, creates an obfuscation barrier (not true encryption) against keyword-based digital tracking systems.

These invisible anomalies disrupt the delicate process of tokenization, rendering keywords completely unreadable to voracious commercial profiling algorithms.

This structural limitation of machine learning significantly reduces the success of targeted advertising, allowing users to accidentally evade surveillance by data brokers.

Conclusions

The discovery that a simple—and often invisible—typo can neutralize multi-billion-dollar surveillance systems reminds us of a fundamental truth: technology, no matter how advanced, always operates within rigid logical boundaries. While the data industry continues to invest in increasingly sophisticated algorithms, the complexity and unpredictability of human interaction (and of the coding systems we have created to represent it) still offer unexpected avenues of escape.

The Zero-Width Space and similar typographic anomalies are not the definitive solution to the problem of online privacy, but they represent a fascinating modern paradox. In a world where we constantly strive to be precise and machine-readable, it is in error, imperfection, and the glitch that we reclaim our right to invisibility.

Frequently Asked Questions

What is the Zero-Width Space, and how does it affect tracking?

The Zero-Width Space is a Unicode character (U+200B) that is invisible to the human eye but is processed by computers like any other character. When inserted into a word, it splits the word at the code-point level, making it unrecognizable to advertising tracking algorithms that search exclusively for exact, predefined terms. For those systems, this interferes with the collection of personal data.

How does an invisible typo prevent targeted advertising?

When invisible characters are inserted into keywords, profiling systems are unable to recognize terms of commercial interest. Consequently, data brokers discard the text as mere background noise, and no targeted advertising is triggered for the individual in question. This creates an unintentional protective shield against digital surveillance.

Why do language models struggle with text containing invisible characters?

Modern language models use tokenization to split text into smaller units. An anomalous character interrupts this process, breaking the word into fragments that carry little semantic meaning. Automated comprehension of the affected word degrades, and the text can become unreadable to the machine. Psychological profiling is thus thwarted at the outset.

How do invisible characters end up in text by accident?

They often appear when using multilingual keyboards on smartphones, especially while rapidly switching from one layout to another, or through voice dictation systems. They can also result from copying and pasting text from complex documents, which carries along hidden formatting characters that alter the invisible structure of the typed word. Even hasty typing on touchscreens can trigger this digital anomaly.

Can tracking systems defend themselves against invisible characters?

Technology platforms are developing increasingly aggressive text-cleansing filters to remove non-standard characters prior to the analysis phase. However, with over 140,000 Unicode characters and many look-alike letters to account for, a complete cleanup is complex, and every extra processing step adds cost for ad servers that handle enormous request volumes.
