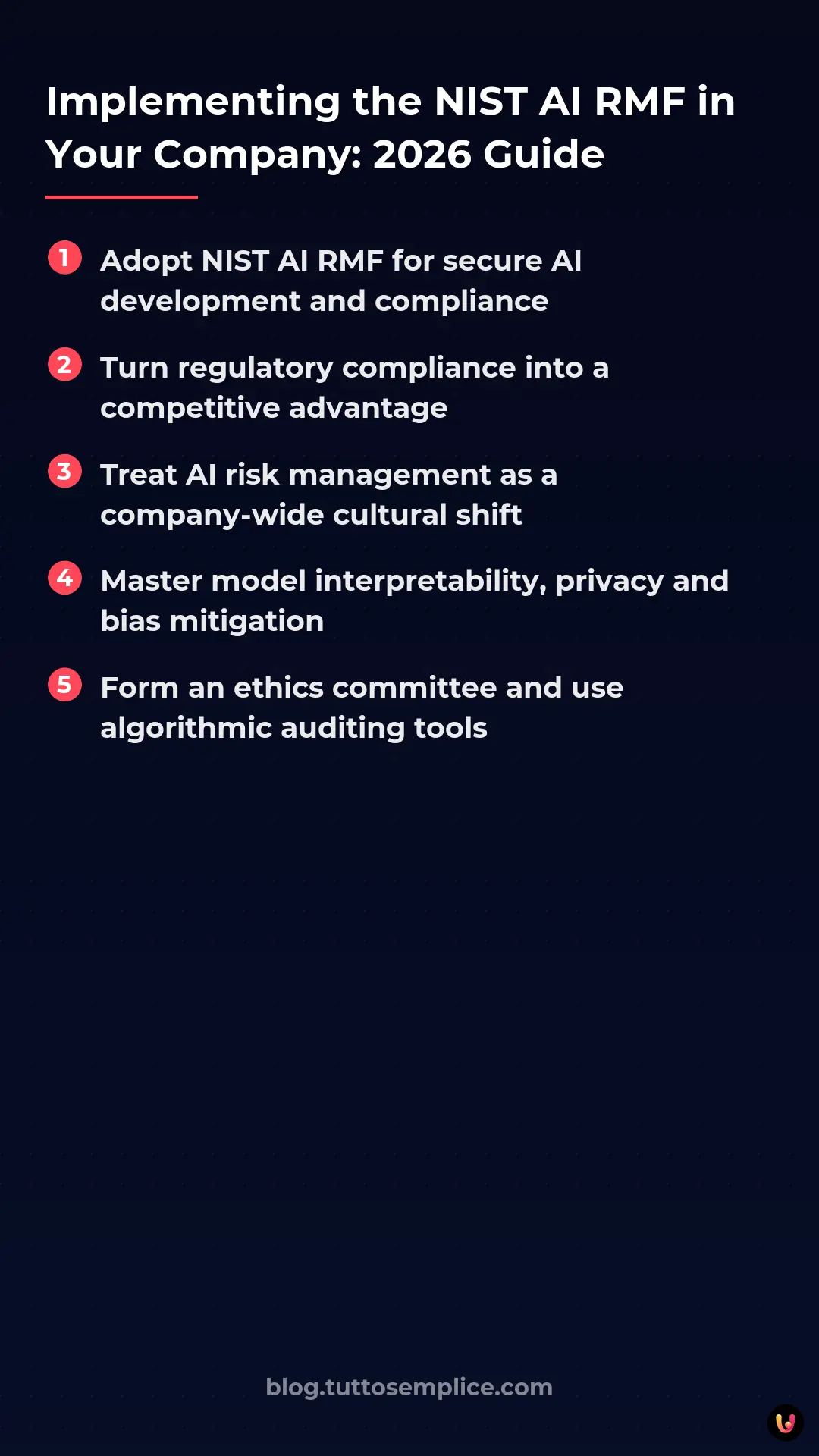

The NIST AI RMF (Artificial Intelligence Risk Management Framework) has become a fundamental reference for any organization that develops, acquires, or integrates artificial intelligence systems. In the technological context of 2026, with the EU AI Act's obligations phasing into application and complex generative models proliferating, risk management is no longer optional but a strategic imperative. This technical guide provides a structured path for adopting the framework and turning regulatory compliance into a tangible competitive advantage through robust AI governance.

Introduction to AI Risk Management

The NIST AI RMF is a voluntary framework that, by 2026, has become a widely used reference for governing artificial intelligence securely. It helps companies map, measure, and manage AI-related risks, supporting regulatory compliance and the reliability of technological systems.

According to official documentation from the National Institute of Standards and Technology, the framework is designed to be industry-agnostic and scalable. It is not a simple security checklist, but a cultural shift that involves the entire corporate structure, from the board of directors to machine learning developers. In 2026, the alignment between the traditional NIST RMF and the specificities of artificial intelligence requires a deep understanding of concepts such as model interpretability, training data privacy, and algorithmic bias mitigation.

Prerequisites and Tools for Compliance

Before implementing the NIST AI RMF, it is critical to establish robust enterprise AI governance. Prerequisites include forming a multidisciplinary ethics committee, adopting algorithmic auditing software, and mapping the existing data infrastructure.

To start the process successfully, organizations need an ecosystem of appropriate tools and skills. The “Zero-to-Hero” approach prepares the organization through the following operational steps:

- Establishment of the AI Governance Board: A cross-functional team composed of Data Scientists, Legal Counsel, CISO, and business representatives.

- AI Asset Inventory: Creation of a centralized registry (AI Registry) of all models in use, including third-party services (Shadow AI).

- Adoption of MLSecOps Tools: Integration of platforms for continuous monitoring of model performance and security in production.
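
As an illustration of the inventory step, here is a minimal sketch of an AI Registry in Python. The class names, fields, and the rule used to flag Shadow AI (third-party assets without board approval) are assumptions made for this example, not something the NIST AI RMF prescribes.

```python
from dataclasses import dataclass, field
from enum import Enum


class Origin(Enum):
    INTERNAL = "internal"
    THIRD_PARTY = "third_party"


@dataclass
class AIAsset:
    """One entry in the hypothetical AI Registry."""
    name: str
    owner: str              # accountable person or team
    origin: Origin
    purpose: str
    approved: bool = False  # False until the governance board signs off


@dataclass
class AIRegistry:
    assets: dict[str, AIAsset] = field(default_factory=dict)

    def register(self, asset: AIAsset) -> None:
        if asset.name in self.assets:
            raise ValueError(f"{asset.name} is already registered")
        self.assets[asset.name] = asset

    def shadow_ai(self) -> list[AIAsset]:
        """Assumed rule: third-party assets in use without board approval."""
        return [
            a for a in self.assets.values()
            if a.origin is Origin.THIRD_PARTY and not a.approved
        ]


registry = AIRegistry()
registry.register(
    AIAsset("mortgage-scoring", "credit-risk team", Origin.INTERNAL,
            "loan approval", approved=True)
)
registry.register(
    AIAsset("support-chatbot", "unknown", Origin.THIRD_PARTY,
            "customer service")
)
unapproved = [a.name for a in registry.shadow_ai()]
```

In a real deployment the registry would live in a database or GRC tool; the point of the sketch is that every model has a named owner and an explicit approval state.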

| Tool Category | Function in the Framework | Application Example (2026) |
|---|---|---|
| AI TRiSM Platforms | Monitoring and Trust | Real-time detection of data drift and bias. |
| GRC (Governance, Risk, Compliance) Software | Policy Management | Automatic alignment between internal policies and the requirements of the EU AI Act. |
| LLM Vulnerability Scanner | Technical Safety | Prevention of prompt injection and data leakage attacks. |
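
To make the data drift detection mentioned in the table more concrete, the sketch below computes a Population Stability Index (PSI) between a baseline and a production distribution. PSI is one common drift statistic, chosen here for illustration; the bin values and the 0.2 alert threshold are assumptions (a frequently quoted rule of thumb), not values taken from the framework or from any specific platform.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both arguments hold the proportion of samples per bin, in the same order.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical feature distribution at training time and in production.
baseline = [0.25, 0.25, 0.25, 0.25]
production = [0.10, 0.20, 0.30, 0.40]

score = psi(baseline, production)
drift_detected = score > 0.2  # assumed alert threshold
```

A monitoring job could run this per feature on a schedule and open an incident whenever `drift_detected` is true.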

The Core of the Framework: The Four Functions

The NIST AI RMF architecture is based on four interconnected functions: Govern, Map, Measure, and Manage. This circular, continuous structure keeps the artificial intelligence lifecycle under constant monitoring, reducing bias and operational vulnerabilities.

These functions are not sequential; they operate together. Implementing them requires rigorous methodologies and traceable documentation, which is essential for passing compliance audits.

Govern: Creating a Safety Culture

The Govern function of the NIST AI RMF establishes corporate policies and legal responsibilities. It is the heart of AI governance, as it aligns AI strategies with ethical values, risk tolerance, and current regulations.

In this phase, company leadership must clearly define who is responsible for each AI system. Key actions include drafting a code of ethics for AI, defining acceptable risk levels (Risk Appetite Statement), and creating transparent communication channels to report algorithmic anomalies without fear of retaliation.

Map: Identify Model Risks

Through the Map phase of the NIST AI RMF, organizations catalog each AI system in use. This step requires documenting training data, model limitations, and potential negative impacts on end users or the business.

Mapping is often the most complex phase. According to industry data, over 60% of companies are unaware of the true extent of their AI attack surface. It is necessary to document:

- The intended use and foreseeable misuse.

- The origin and quality of the training and validation datasets.

- The software supply chain (AI Bill of Materials – AIBOM).
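
The three documentation items above can be captured in a single structured record. The following is a minimal sketch, assuming a hypothetical `MapRecord` layout; it is not an official AIBOM schema, and the system, vendor, and component names are invented for the example.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Component:
    """One supply-chain element of the system (model, library, dataset)."""
    name: str
    version: str
    supplier: str


@dataclass
class MapRecord:
    system: str
    intended_use: str
    foreseeable_misuse: list[str]
    dataset_sources: list[str]
    components: list[Component] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of the documentation items that are still empty."""
        return [name for name, value in asdict(self).items() if not value]


record = MapRecord(
    system="support-chatbot",
    intended_use="answer billing questions",
    foreseeable_misuse=["requests for legal advice"],
    dataset_sources=[],  # not yet documented
    components=[Component("base-llm", "1.0", "example-vendor")],
)

gaps = record.missing_fields()
aibom_json = json.dumps(asdict(record), indent=2)  # exportable for audits
```

Here `gaps` reports that the dataset sources are still undocumented, which is the kind of finding the Map phase is meant to surface before release.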

Measure: Evaluating the Impact of Artificial Intelligence

The Measure phase of the NIST AI RMF uses quantitative and qualitative metrics to test the reliability of models. It includes stress tests, bias analysis, and robustness evaluations, ensuring that the AI operates within established safety parameters.

Measurements must be objective and repeatable. Technical teams must implement metrics for fairness, accuracy, interpretability, and resilience to adversarial attacks (Adversarial Machine Learning). By 2026, AI-specific red teaming has become a de facto standard for validating the robustness of generative models before release into production.
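
As a small example of an objective and repeatable measurement, the sketch below estimates how often a model's prediction changes when its inputs are perturbed with random noise. This is a simple robustness proxy, not a full adversarial evaluation; `toy_model`, the sample inputs, and the noise level are assumptions made for illustration.

```python
import random
from typing import Callable, Sequence


def flip_rate(
    predict: Callable[[Sequence[float]], int],
    inputs: Sequence[Sequence[float]],
    noise: float,
    seed: int = 0,
) -> float:
    """Fraction of inputs whose label changes under uniform noise in [-noise, +noise]."""
    if not inputs:
        raise ValueError("at least one input is required")
    rng = random.Random(seed)  # fixed seed keeps the measurement repeatable
    flips = 0
    for x in inputs:
        perturbed = [v + rng.uniform(-noise, noise) for v in x]
        if predict(perturbed) != predict(x):
            flips += 1
    return flips / len(inputs)


def toy_model(x: Sequence[float]) -> int:
    # Hypothetical stand-in for a real model: a fixed linear threshold.
    return int(x[0] + x[1] > 1.0)


samples = [(0.2, 0.3), (0.9, 0.8), (0.5, 0.49), (0.1, 0.95)]

rate_no_noise = flip_rate(toy_model, samples, noise=0.0)
rate_a = flip_rate(toy_model, samples, noise=0.1, seed=42)
rate_b = flip_rate(toy_model, samples, noise=0.1, seed=42)  # same seed, same result
```

Because the random generator is seeded, two runs with identical arguments return the same value, which is what makes the metric auditable.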

Manage: Mitigate Vulnerabilities

With the Manage function, the NIST AI RMF provides guidelines for responding to identified risks. Companies implement technical controls, fallback plans, and continuous monitoring to disable or correct AI systems in the event of anomalies.

Active risk management requires the creation of AI incident response playbooks. If a model starts producing discriminatory results or harmful hallucinations, circuit breaker systems must intervene automatically to degrade the service safely (graceful degradation) or hand over control to a human operator (Human-in-the-Loop).
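
A circuit breaker of this kind can be sketched in a few lines. The class below is a minimal illustration under stated assumptions: the window size, the trip threshold, the `is_harmful` detector, and the `ESCALATED_TO_HUMAN` marker are all hypothetical, and a production system would add logging, alerting, and persistence.

```python
from collections import deque
from typing import Callable

ESCALATED = "ESCALATED_TO_HUMAN"  # hypothetical marker for human handover


class ModelCircuitBreaker:
    """Routes requests to a human once too many recent outputs are flagged."""

    def __init__(self, window: int = 5, max_flagged: int = 2):
        self.recent = deque(maxlen=window)  # True means the output was flagged
        self.max_flagged = max_flagged
        self.open = False  # open circuit means the model is bypassed

    def call(
        self,
        model: Callable[[str], str],
        is_harmful: Callable[[str], bool],
        prompt: str,
    ) -> str:
        if self.open:
            return ESCALATED
        answer = model(prompt)
        flagged = is_harmful(answer)
        self.recent.append(flagged)
        if sum(self.recent) > self.max_flagged:
            self.open = True  # stays open until a human resets it
        # A flagged answer is never returned to the user.
        return ESCALATED if flagged else answer

    def reset(self) -> None:
        self.recent.clear()
        self.open = False


def bad_model(prompt: str) -> str:
    return "harmful"


def good_model(prompt: str) -> str:
    return "ok"


def detector(text: str) -> bool:
    return text == "harmful"


breaker = ModelCircuitBreaker(window=5, max_flagged=2)
results = [breaker.call(bad_model, detector, "q") for _ in range(3)]
after_trip = breaker.call(good_model, detector, "q")  # still escalated
breaker.reset()
after_reset = breaker.call(good_model, detector, "q")
```

The design choice here is that the breaker never closes on its own: a person has to call `reset()`, which keeps a human in the loop after an incident.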

Practical Examples of Corporate Adoption

Applying the NIST AI RMF in real-world scenarios demonstrates its versatility. Whether it’s an LLM-based chatbot for customer service or a financial scoring algorithm, the framework standardizes validation and release processes.

Let’s consider a banking institution that implements a new mortgage approval system based on Machine Learning. Using the framework:

- Govern: The committee determines that the model must not use sensitive demographic variables.

- Map: The model is documented as limited to loans under 100,000 euros, and the historical data used to train it is catalogued.

- Measure: Statistical tests are performed to verify the absence of bias against specific minorities (Disparate Impact Analysis).

- Manage: An automatic alert is set if the rejection rate for a given demographic group exceeds a warning threshold, triggering a human review.
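
The Measure and Manage steps of this example can be sketched as follows. The code computes approval rates per group and flags groups whose rate falls below a fraction of a reference group's rate. The 0.8 ratio (the commonly cited four-fifths rule), the group labels, and the decision data are assumptions for illustration. The group labels are used only for monitoring and are not fed to the model, consistent with the Govern decision above.

```python
from collections import Counter


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals, approved = Counter(), Counter()
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += ok
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact(rates: dict[str, float], reference: str) -> dict[str, float]:
    """Each group's approval rate divided by the reference group's rate."""
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals")
    return {g: r / rates[reference] for g, r in rates.items() if g != reference}


def groups_needing_review(
    decisions: list[tuple[str, bool]],
    reference: str,
    min_ratio: float = 0.8,  # assumed warning threshold
) -> list[str]:
    ratios = disparate_impact(approval_rates(decisions), reference)
    return sorted(g for g, ratio in ratios.items() if ratio < min_ratio)


# Hypothetical decisions: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
flagged = groups_needing_review(decisions, reference="A")
```

With this data, group B's ratio is 0.5 / 0.8 = 0.625, below the assumed threshold, so `flagged` contains `"B"` and a human review would be triggered.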

Troubleshooting Common Problems

When integrating the NIST AI RMF, companies often face obstacles such as a lack of vendor transparency or poor data quality. Solving these problems requires third-party audits and continuous updating of internal policies aligned with the NIST AI RMF.

| Problem Encountered | Strategic Solution |
|---|---|
| Opacity of third-party models (Black Box) | Contractually request Model Cards and AIBOMs from suppliers before acquisition. |
| Cultural resistance of development teams | Integrate risk controls directly into CI/CD pipelines (Shift-Left Security) so as not to slow down the release. |
| Difficulty in quantifying reputational risk | Use scenario simulation frameworks and involve external stakeholders (e.g., consumer associations) in the Measure phase. |
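
The shift-left approach in the table can be illustrated with a risk gate that a CI/CD step runs before release. This is a sketch under assumptions: the metric names and limits are hypothetical and would in practice come from the organization's Risk Appetite Statement and its evaluation tooling.

```python
import sys

# Hypothetical limits: ("min", x) means the metric must be at least x,
# ("max", x) means it must not exceed x.
THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "disparate_impact_ratio": ("min", 0.80),
    "prompt_injection_success_rate": ("max", 0.01),
}


def gate(metrics: dict[str, float]) -> list[str]:
    """Return readable violations; an empty list means the release may proceed."""
    violations = []
    for name, (kind, limit) in THRESHOLDS.items():
        if name not in metrics:
            violations.append(f"{name}: metric missing")
            continue
        value = metrics[name]
        if kind == "min" and value < limit:
            violations.append(f"{name}: {value} is below minimum {limit}")
        elif kind == "max" and value > limit:
            violations.append(f"{name}: {value} is above maximum {limit}")
    return violations


def main(metrics: dict[str, float]) -> int:
    problems = gate(metrics)
    for problem in problems:
        print(f"RISK GATE FAILED - {problem}", file=sys.stderr)
    return 1 if problems else 0  # a non-zero exit code fails the pipeline step


passing = {
    "accuracy": 0.95,
    "disparate_impact_ratio": 0.90,
    "prompt_injection_success_rate": 0.0,
}
exit_code = main(passing)
```

In a pipeline, the script would end with `sys.exit(main(metrics))` after loading the metrics produced by the evaluation job, so a missing or out-of-range metric blocks the release automatically instead of relying on a manual review.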

In Brief (TL;DR)

Adopting the NIST AI RMF in 2026 is essential for governing artificial intelligence risks and supports compliance with the EU AI Act.

To embark on this path, solid corporate governance is needed, supported by a multidisciplinary ethics committee and advanced tools for algorithmic auditing.

The core of the framework includes four synergistic functions essential for governing, mapping, measuring, and constantly managing the entire lifecycle of the models.

Conclusions

Successfully implementing the NIST AI RMF in 2026 is not just a compliance obligation, but a competitive advantage. Companies that master AI governance build trust, innovate safely, and prepare for future regulatory developments.

AI risk management is an ongoing journey, not a final destination. By adopting the Govern, Map, Measure, and Manage functions, IT organizations can maximize the value of emerging technologies while protecting their users, data, and reputation in the global marketplace. Today’s investment in structured frameworks like NIST’s is the best insurance against tomorrow’s technological uncertainties.

Frequently Asked Questions

The NIST AI RMF is a widely adopted reference for managing risks related to artificial intelligence. It allows organizations to map, measure, and govern models, supporting compliance with European regulations and improving overall technological security.

The architecture is based on four continuous and synergistic functions called Govern, Map, Measure, and Manage. These phases allow for the establishment of ethical policies, the cataloging of systems in use, the evaluation of impacts through objective metrics, and the timely mitigation of operational vulnerabilities.

To initiate the process, it is crucial to establish robust corporate governance by creating a multidisciplinary ethics committee. Subsequently, it becomes necessary to map the existing data infrastructure and adopt specific tools for continuous monitoring of model performance and security.

To overcome the lack of transparency of external suppliers, the framework suggests contractually requesting specific documentation before acquisition. Tools such as Model Cards and software bills of materials are essential for understanding the limitations and training data of opaque models.

The adoption of this framework standardizes validation and risk management processes, greatly facilitating compliance with European legal requirements. By using dedicated governance software, companies can translate internal policies into actions that comply with international directives.


