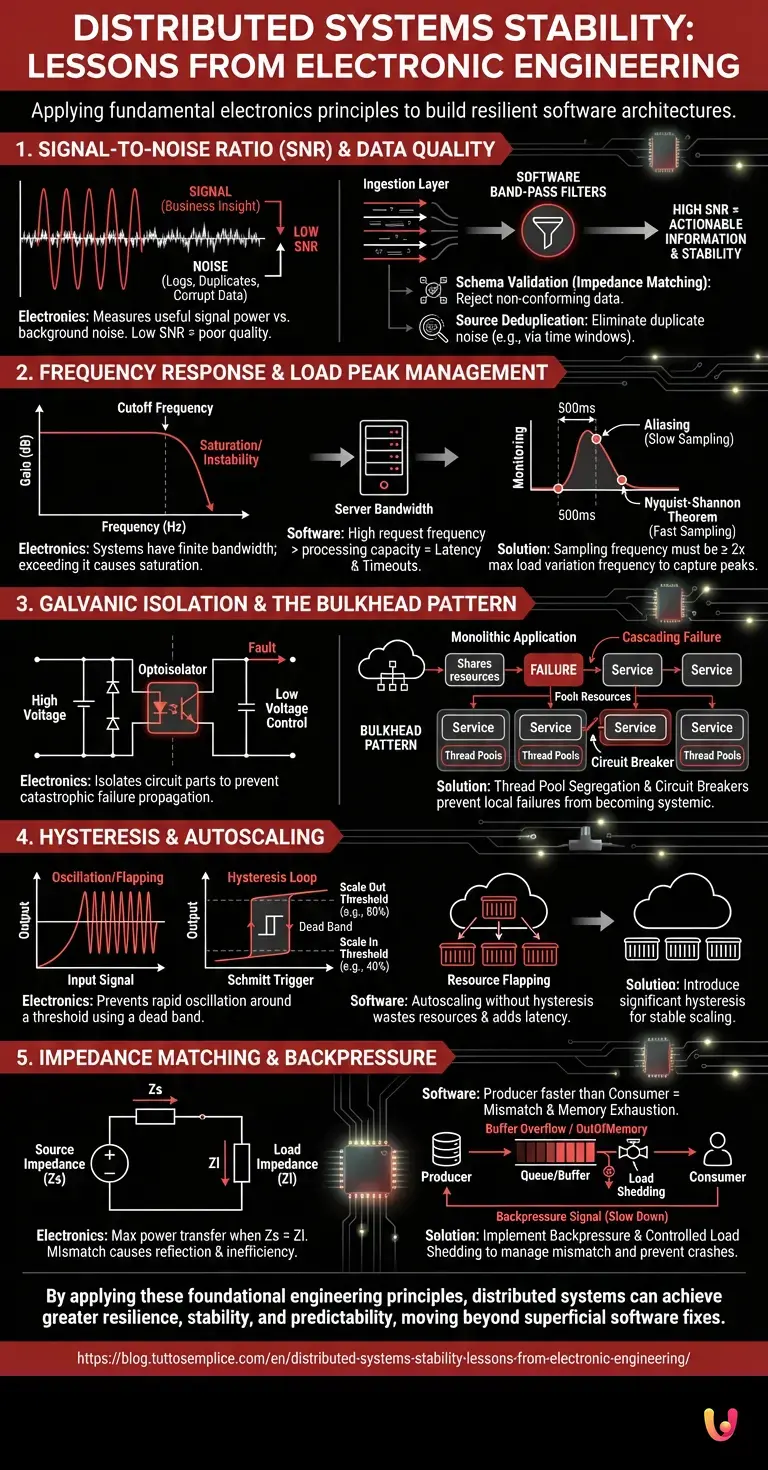

In today’s cloud computing landscape, the stability of distributed systems is often treated as a purely software problem, solvable through container orchestration or retry policies. However, a fundamental truth is often overlooked: the principles governing the resilience of a microservices architecture are the same ones that regulate the stability of analog and digital electronic circuits. In this technical guide, we will step away from software abstraction for a moment and return to engineering first principles, showing how concepts such as Signal-to-Noise Ratio (SNR), Frequency Response, and Galvanic Isolation are the true keystones for building resilient infrastructures.

1. Signal-to-Noise Ratio (SNR) and Data Quality

In electronics, the Signal-to-Noise Ratio (SNR) measures the power of a useful signal compared to the background noise corrupting it. A low SNR in an audio amplifier translates into unbearable hiss. In distributed systems, especially in data-oriented architectures (Data Lakes, Event Streaming), the concept is identical.

Defining Noise in Distributed Systems

In a Data Lake, the “signal” is actionable information (business insight), while the “noise” consists of:

- Verbose and unstructured logs.

- Duplicate events generated by poorly configured retry policies (at-least-once delivery).

- Corrupt or incomplete data due to race conditions.

As the volume of this spurious data (the noise floor) rises, so does the computational cost of extracting value (the signal): I/O and CPU are spent filtering out useless records instead of serving real work, which degrades distributed systems stability.

Practical Application: Software Band-Pass Filters

To improve SNR, we must apply the software equivalent of an electronic filter:

- Schema Validation (Input Filtering): Reject data at the ingestion layer if it does not conform to a strict schema (e.g., Avro or Protobuf), much as a band-pass filter rejects out-of-band frequencies.

- Source Deduplication: Use time windows (tumbling/sliding windows) in stream processors like Apache Flink to eliminate duplicate noise before it reaches cold storage.
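The two filters above can be sketched in a few lines of plain Python. This is only an illustration of the idea, not the Flink, Avro, or Protobuf API: the field names in `REQUIRED_FIELDS`, the 60-second window, and the `ingest` helper are all assumptions made for the example, and events are assumed to arrive in timestamp order.

```python
# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"event_id": str, "timestamp": (int, float), "payload": dict}


def is_valid(event: dict) -> bool:
    """Return True only if every required field is present with an accepted type."""
    return all(
        name in event and isinstance(event[name], expected)
        for name, expected in REQUIRED_FIELDS.items()
    )


class WindowedDeduplicator:
    """Drops events whose event_id was already accepted within the last window_s seconds."""

    def __init__(self, window_s: float) -> None:
        self.window_s = window_s
        self._last_seen = {}  # event_id -> timestamp of the accepted event

    def accept(self, event: dict) -> bool:
        now = event["timestamp"]
        # Evict ids that fell out of the window so state stays bounded.
        # (A full rebuild per event is fine for a sketch, not for production.)
        self._last_seen = {
            key: seen for key, seen in self._last_seen.items()
            if now - seen < self.window_s
        }
        if event["event_id"] in self._last_seen:
            return False  # duplicate inside the window: treat as noise
        self._last_seen[event["event_id"]] = now
        return True


def ingest(events, window_s: float = 60.0) -> list:
    """Keep only events that pass schema validation and are not recent duplicates."""
    dedup = WindowedDeduplicator(window_s)
    return [e for e in events if is_valid(e) and dedup.accept(e)]


events = [
    {"event_id": "a", "timestamp": 0.0, "payload": {}},
    {"event_id": "a", "timestamp": 10.0, "payload": {}},   # retry duplicate
    {"event_id": "b", "timestamp": 20.0},                  # missing payload
    {"event_id": "a", "timestamp": 100.0, "payload": {}},  # outside the window
]
clean = ingest(events)  # keeps the events at t=0.0 and t=100.0
```

In a real pipeline the same two steps would live in the schema registry and the stream processor's keyed state; the point here is only that both filters run before the data reaches storage.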

2. Frequency Response and Load Peak Management

Every electronic circuit has a frequency response: it reacts well up to a certain rate of signal variation, beyond which it attenuates the output or becomes unstable. A web server is no different.

Server Bandwidth Analysis

Let’s imagine a microservice as an amplifier with finite bandwidth. If requests (input signal) arrive at a frequency higher than the system’s processing capacity (cutoff frequency), a saturation phenomenon occurs. In electronics, this leads to signal clipping; in software, it leads to increased latency and request timeouts.
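A toy model makes the saturation effect concrete. The sketch below assumes a service that can complete a fixed number of requests per tick; the function name and the numbers are made up for illustration. Once arrivals exceed capacity, the backlog (and therefore latency) keeps growing until the input rate drops again.

```python
def simulate_backlog(arrivals_per_tick, capacity_per_tick):
    """Return the number of queued requests after each tick."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_tick:
        backlog += arrivals
        backlog -= min(backlog, capacity_per_tick)  # serve at most `capacity` requests
        history.append(backlog)
    return history


# Capacity is 100 requests per tick; ticks 3 to 5 exceed it.
print(simulate_backlog([80, 80, 120, 120, 120, 80], 100))
# prints [0, 0, 20, 40, 60, 40]
```

The backlog only starts to drain once arrivals fall back below capacity, which is why a short overload can keep latencies high long after the peak has passed.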

The Sampling Theorem and Monitoring

To maintain stability, the monitoring system must respect the Nyquist-Shannon sampling theorem. If traffic on your servers has peaks (transients) lasting 500 ms but your monitoring system samples the CPU every 60 seconds, you are undersampling: the peak that caused the crash will almost always fall between two samples, and whatever you do capture is aliased. To support distributed systems stability, the sampling frequency of critical metrics must be more than twice the highest frequency present in the expected load variations. As a rule of thumb for the 500 ms example, that means sampling at least every 250 ms.
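The effect is easy to reproduce with a synthetic trace. The sketch below assumes a hypothetical CPU signal with a 20% baseline and a single 500 ms spike to 100%; `cpu_load` and `observed_peak` are illustrative names, not part of any monitoring tool.

```python
def cpu_load(t_ms: int) -> float:
    """Hypothetical trace: 20% baseline with one 500 ms spike starting at t = 30.2 s."""
    return 100.0 if 30_200 <= t_ms < 30_700 else 20.0


def observed_peak(sample_interval_ms: int, duration_ms: int = 120_000) -> float:
    """Highest CPU value seen when the trace is sampled at a fixed interval."""
    return max(cpu_load(t) for t in range(0, duration_ms, sample_interval_ms))


print(observed_peak(60_000))  # prints 20.0: the spike falls between samples
print(observed_peak(250))     # prints 100.0: at least one sample lands in the spike
```

A 250 ms interval is guaranteed to place a sample inside any 500 ms window, whereas the 60-second sampler only catches the spike by luck.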

3. Galvanic Isolation and the Bulkhead Pattern

In electronic engineering, galvanic isolation (via optoisolators or transformers) is vital to separate two parts of a circuit, preventing a catastrophic failure (e.g., a high-voltage short circuit) from propagating to the low-voltage control logic. Without this isolation, a single fault destroys the entire apparatus.

From Circuit to Software: The Bulkhead Pattern

In the cloud, this principle translates to the Bulkhead pattern. Often, a monolithic or poorly distributed application shares thread pools or database connections between different features. If a slow external service blocks all threads dedicated to a secondary feature (e.g., sending emails), the entire system can lock up (Cascading Failure).
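A minimal sketch of the idea, using Python's standard `ThreadPoolExecutor`. The dependency names, pool sizes, and the `send_email` and `get_product` functions are hypothetical stand-ins; a `threading.Event` simulates a mail provider that has stopped answering.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

# Hypothetical dependencies and pool sizes, chosen only for illustration.
POOLS = {
    "email": ThreadPoolExecutor(max_workers=2, thread_name_prefix="email"),
    "catalog": ThreadPoolExecutor(max_workers=4, thread_name_prefix="catalog"),
}


def call(dependency: str, fn, *args):
    """Run fn on the pool reserved for this dependency and return a Future."""
    return POOLS[dependency].submit(fn, *args)


release_email = threading.Event()  # stands in for a slow mail provider


def send_email(recipient: str) -> str:
    release_email.wait(timeout=5)  # blocks until the "provider" answers
    return f"sent to {recipient}"


def get_product(product_id: int) -> dict:
    return {"id": product_id, "name": "demo"}


# Saturate the email pool: both workers are stuck, two more tasks are queued.
stuck = [call("email", send_email, f"user{i}@example.com") for i in range(4)]

# The catalog still answers because it owns separate threads.
product = call("catalog", get_product, 42).result(timeout=1)

release_email.set()  # let the email tasks finish
for pool in POOLS.values():
    pool.shutdown(wait=True)
```

Had both features shared one pool of two workers, the catalog call would have queued behind the blocked email tasks and timed out.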

Implementing Isolation

To achieve “software galvanic isolation”:

- Thread Pool Segregation: Assign distinct resource pools for each downstream service. If the payment service times out, it will only exhaust its own pool, leaving the rest of the application (e.g., the product catalog) intact.

- Circuit Breaker: This pattern takes its name directly from the electromechanical switch. If a service fails repeatedly, the “circuit opens,” blocking further calls and giving the system time to recover (cool-down period), just as a breaker trips to protect a circuit from overload and, unlike a fuse, can be reset afterwards.
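The circuit breaker described above can be sketched as a small class. This is a simplified illustration, not the API of any resilience library: the threshold, the cool-down value, and the injectable `clock` parameter (which lets the example run without real waiting) are assumptions, and the class is not thread-safe.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, cooldown_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.cooldown_s:
                raise CircuitOpenError("circuit open, call rejected")
            # Cool-down elapsed: half-open, let one trial call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            raise
        # Success: close the circuit and forget past failures.
        self._failures = 0
        self._opened_at = None
        return result


# Usage with a fake clock so the cool-down can be skipped instantly.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, cooldown_s=10.0, clock=lambda: now[0])


def flaky():
    raise ValueError("downstream timeout")


outcomes = []
for _ in range(3):
    try:
        breaker.call(flaky)
    except CircuitOpenError:
        outcomes.append("rejected")
    except ValueError:
        outcomes.append("failed")

now[0] = 11.0  # cool-down has passed
outcomes.append(breaker.call(lambda: "ok"))
# outcomes is now ["failed", "failed", "rejected", "ok"]
```

The third call never reaches the failing service, which is the whole point: while the circuit is open, the caller fails fast instead of tying up a thread on a dependency that is known to be down.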

4. Hysteresis and Autoscaling

A common problem in control systems is rapid oscillation around a threshold point. In electronics, a comparator without hysteresis will fluctuate wildly if the input signal is noisy and close to the reference threshold. In distributed systems, this is the number one enemy of Autoscaling.

Avoiding Resource Flapping

If you configure an autoscaler to add instances when the CPU exceeds 70% and remove them when it drops below 65%, you risk the “flapping” phenomenon: the system continuously creates and destroys containers, wasting resources and introducing startup latency. The solution is to introduce significant hysteresis (e.g., scale out at 80%, scale in at 40%), creating a dead band that stabilizes the control system, just as a Schmitt Trigger stabilizes a noisy digital signal.
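The difference between the two configurations can be replayed on a noisy CPU trace. The sketch below is a toy controller, not the algorithm of any particular autoscaler: the function names, the one-replica step, and the sample values are assumptions, and the model ignores the fact that real CPU load changes when replicas are added.

```python
def desired_replicas(cpu_pct, current, scale_out_at=80.0, scale_in_at=40.0,
                     min_replicas=1, max_replicas=10):
    """Return the replica count after one control decision."""
    if cpu_pct > scale_out_at:
        return min(current + 1, max_replicas)
    if cpu_pct < scale_in_at:
        return max(current - 1, min_replicas)
    return current  # inside the dead band: hold steady


def replay(cpu_samples, start=2, **thresholds):
    """Apply the controller to a sequence of CPU readings."""
    replicas, history = start, []
    for cpu in cpu_samples:
        replicas = desired_replicas(cpu, replicas, **thresholds)
        history.append(replicas)
    return history


noisy = [72, 64, 71, 63, 72, 64]  # CPU hovering around 68%

print(replay(noisy, scale_out_at=70, scale_in_at=65))  # prints [3, 2, 3, 2, 3, 2]
print(replay(noisy, scale_out_at=80, scale_in_at=40))  # prints [2, 2, 2, 2, 2, 2]
```

With the narrow 70/65 band every sample triggers a scaling action; with the wide 80/40 band the same noise is absorbed and the replica count stays put.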

5. Impedance Matching and Backpressure

Maximum power transfer in a circuit occurs when the load impedance matches the source impedance (strictly, its complex conjugate). On a transmission line, a mismatch reflects energy back toward the source, creating standing waves and inefficiency. In distributed systems, the equivalent mismatch occurs when a Producer generates data faster than the Consumer can process it.

Managing Mismatch with Backpressure

If unmanaged, this mismatch leads to unbounded buffer growth and, eventually, memory exhaustion. The technical solution is Backpressure: the consumer must signal the producer to slow down, or the system must introduce a correctly sized buffer (queue) to absorb transient peaks. However, just as a capacitor can store only a finite charge, queues (Kafka, RabbitMQ) also have physical limits. Distributed systems stability requires that, when a queue is full, the system discards messages in a controlled manner (Load Shedding) rather than crashing with OutOfMemory errors.
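Both strategies can be sketched with a bounded queue from Python's standard library. The `BoundedBuffer` wrapper and its method names are hypothetical; a broker such as Kafka or RabbitMQ exposes these controls through its own configuration rather than through this interface.

```python
import queue


class BoundedBuffer:
    """A fixed-size buffer offering either load shedding or blocking backpressure."""

    def __init__(self, maxsize: int) -> None:
        self._q = queue.Queue(maxsize=maxsize)
        self.shed = 0  # number of messages dropped because the buffer was full

    def offer(self, item) -> bool:
        """Load shedding: enqueue without blocking, drop and count the item if full."""
        try:
            self._q.put_nowait(item)
            return True
        except queue.Full:
            self.shed += 1
            return False

    def put_with_backpressure(self, item, timeout_s: float) -> None:
        """Backpressure: block the producer until space frees up.

        Raises queue.Full if no space appears within timeout_s.
        """
        self._q.put(item, timeout=timeout_s)

    def poll(self):
        """Remove and return the oldest item; raises queue.Empty if there is none."""
        return self._q.get_nowait()


buf = BoundedBuffer(maxsize=2)
accepted = [buf.offer(i) for i in range(5)]
# accepted is [True, True, False, False, False] and buf.shed is 3
```

Memory use stays bounded either way; the choice is whether the producer pays in waiting time (backpressure) or the system pays in dropped messages (load shedding).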

In Brief (TL;DR)

Electronic engineering principles offer an indispensable model for ensuring the resilience and stability of distributed software architectures.

Improving the signal-to-noise ratio by filtering useless data drastically reduces computational costs and preserves system performance.

Resource isolation and frequent monitoring prevent local failures from propagating and compromising the entire cloud infrastructure.

Conclusions

Designing resilient cloud systems is not a new discipline, but the application of physical and engineering laws to a virtual domain. Understanding the signal-to-noise ratio helps clean up Data Lakes; applying frequency analysis improves monitoring; implementing galvanic isolation via Bulkheads saves infrastructure from cascading failures. For a modern software architect, looking at electronic circuits is not an exercise in nostalgia, but the most rigorous method to guarantee distributed systems stability at scale.

Frequently Asked Questions

The engineering approach applies physical concepts like Signal-to-Noise Ratio and galvanic isolation to software architectures. Treating microservices like circuits allows for better resilience management, using filters for data quality and patterns like the Circuit Breaker to prevent cascading failures, ensuring a more robust and predictable infrastructure.

The Nyquist-Shannon theorem establishes that the sampling frequency of metrics must be more than twice the maximum frequency of load variations. If monitoring samples the CPU too slowly compared to the duration of transient peaks, aliasing occurs, making the real causes of crashes invisible and compromising system stability.

To avoid the continuous oscillation between creating and destroying instances, it is necessary to introduce the concept of hysteresis in control systems. By setting a significant dead band between the scale-out and scale-in thresholds, the system stabilizes itself by behaving like an electronic Schmitt Trigger, reducing resource waste and latency.

Software galvanic isolation aims to separate critical parts of an application to prevent a local failure from becoming systemic. It is achieved through the Bulkhead pattern, which segregates thread pools for different services, and the use of Circuit Breakers, preventing the blockage of a secondary feature from exhausting the resources of the entire distributed system.

When a producer generates data faster than the consumer can process it, a mismatch similar to impedance mismatch in circuits is created. Backpressure solves the problem by signaling the producer to slow down or by managing controlled queues; if the buffer fills up, Load Shedding is applied to discard the excess and avoid out-of-memory errors.
