We live in an era where generative artificial intelligence seems to have no limits. From hyperrealistic landscapes of alien worlds to portraits of people who never existed, today’s systems can synthesize almost any image with disarming precision. Yet, in this vast ocean of chromatic and compositional possibilities, there is a fascinating anomaly: a single shade that machines stubbornly refuse to reproduce in its pure form. This is not a hardware limitation of our monitors, but a bias baked into the trained networks themselves. To understand this mystery, we must delve into the intricacies of visual computation and discover what happens when mathematical logic clashes with the history of computer science.

The Paradox of Synthetic Creativity

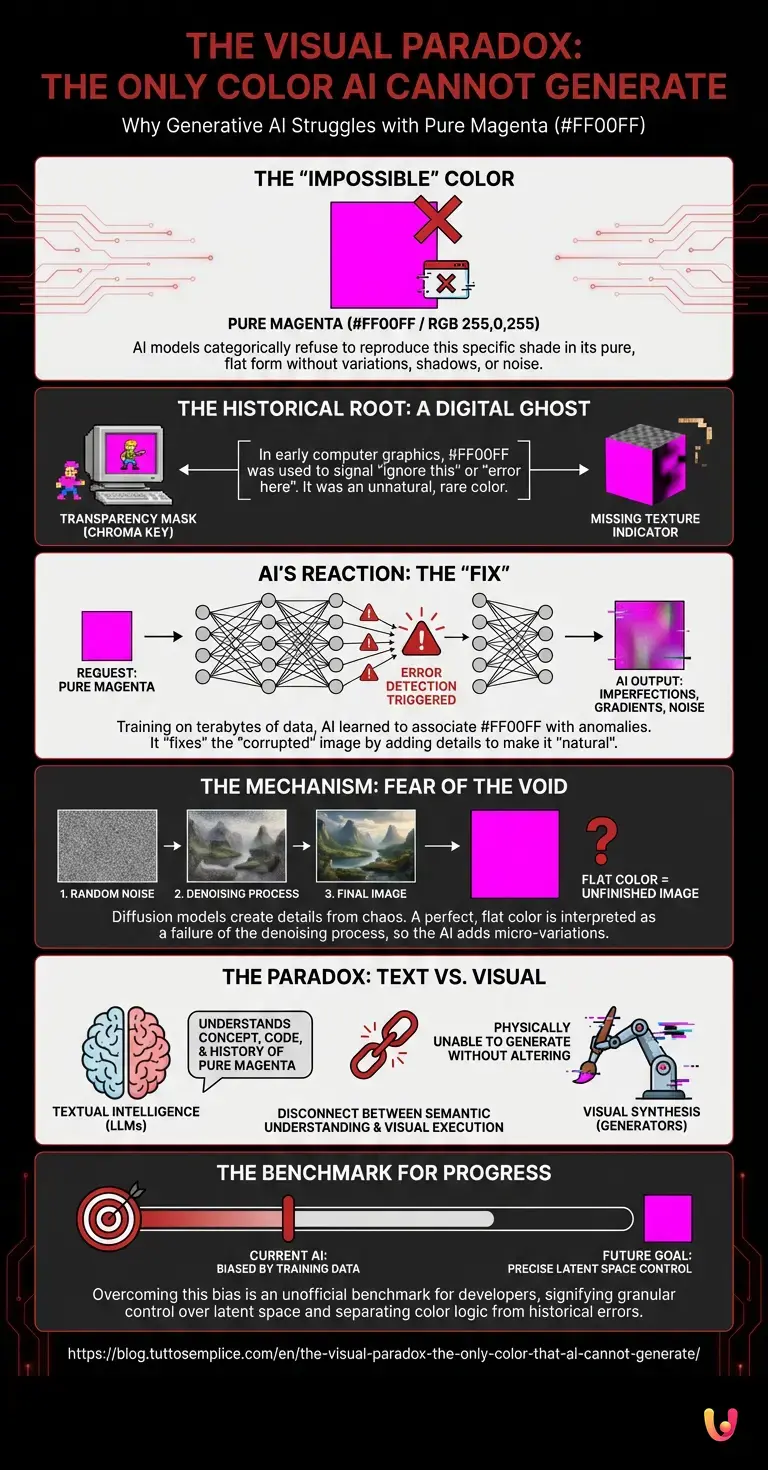

To understand why an “impossible” color exists, it helps to know how artificial intelligence imagines and creates images. Unlike a human painter who mixes pigments on a palette, generative models operate within a multidimensional mathematical space called latent space. In this space, every concept, shape, and color is represented by numerical coordinates. Through a process known as diffusion, the AI starts from random visual noise (similar to the snow effect of old televisions) and progressively refines it until the requested image emerges.

In theory, since modern monitors use the RGB (Red, Green, Blue) model to display over 16 million colors (256 × 256 × 256 = 16,777,216), an algorithm should be able to set each channel of any pixel to any value from 0 to 255. However, theory clashes with the practice of training. Machines do not learn in a vacuum; they assimilate terabytes of human-created data. And it is precisely in this data that the seed of rejection is hidden.
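The arithmetic behind the “16 million colors” figure is easy to check. The following is a minimal Python sketch; the helper name `rgb_to_hex` is an illustrative choice and does not come from any particular library.

```python
# Each RGB channel is stored in 8 bits, so it takes one of 256 values.
LEVELS_PER_CHANNEL = 2 ** 8

# Three independent channels give 256 * 256 * 256 combinations.
total_colors = LEVELS_PER_CHANNEL ** 3


def rgb_to_hex(red: int, green: int, blue: int) -> str:
    """Format an 8-bit RGB triple as a #RRGGBB string (hypothetical helper)."""
    for value in (red, green, blue):
        if not 0 <= value <= 255:
            raise ValueError("each channel must be in the range 0-255")
    return f"#{red:02X}{green:02X}{blue:02X}"


print(total_colors)             # 16777216
print(rgb_to_hex(255, 0, 255))  # #FF00FF
```

Nothing in this representation makes one combination harder to store than another, which is why the difficulty described in this article has to come from somewhere else.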

The Ghost in the Code: The Forbidden Shade

So, what is this impossible color? It’s pure magenta, known in hexadecimal as #FF00FF (or RGB 255, 0, 255). If you ask an image generator for a canvas filled exclusively and perfectly with this vibrant shade, without any variation, shadow, or noise, the system stumbles. Instead of returning a flat fill, it introduces imperfections, gradients, or unwanted textures, or slightly alters the color code, perhaps changing it to #FE00FE or adding visual artifacts.
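To make the gap between #FF00FF and a near miss such as #FE00FE concrete, here is a small Python sketch that parses both codes and compares them channel by channel. The function names are illustrative, not part of any existing tool.

```python
def hex_to_rgb(code: str) -> tuple[int, int, int]:
    """Parse a #RRGGBB string into an (R, G, B) tuple of 8-bit integers."""
    digits = code.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a six-digit hexadecimal color code")
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


def channel_difference(first: str, second: str) -> tuple[int, int, int]:
    """Absolute per-channel difference between two hex color codes."""
    a, b = hex_to_rgb(first), hex_to_rgb(second)
    return tuple(abs(x - y) for x, y in zip(a, b))


pure = hex_to_rgb("#FF00FF")
near = hex_to_rgb("#FE00FE")

print(pure)                                      # (255, 0, 255)
print(near)                                      # (254, 0, 254)
print(channel_difference("#FF00FF", "#FE00FE"))  # (1, 0, 1)
print(pure == near)                              # False
```

A difference of one step per channel is invisible to the eye, but an exact comparison still reports the two colors as different.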

But why pure magenta? The answer lies not in the physics of light (although magenta is an extraspectral color: no single wavelength in the visible spectrum corresponds to it, and our brain constructs it from a mix of red and blue light), but in the history of computer graphics. Since the early days of video game development and 3D rendering, #FF00FF has been used as a transparency mask (a color key, the same idea as chroma key in video) or as an indicator of a missing texture. It was so unnatural and rare in the real world that programmers used it to tell the computer: “Ignore this part” or “Attention, there’s an error here.”
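The transparency-mask convention can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the image is a plain nested list of RGB tuples, and `apply_color_key` is a hypothetical helper rather than a function from a real graphics library.

```python
MAGENTA = (255, 0, 255)


def apply_color_key(pixels, key=MAGENTA):
    """Convert RGB pixels to RGBA, making every pixel equal to the key fully transparent."""
    keyed = []
    for row in pixels:
        keyed_row = []
        for (r, g, b) in row:
            # Alpha 0 means "ignore this pixel"; 255 means fully opaque.
            alpha = 0 if (r, g, b) == key else 255
            keyed_row.append((r, g, b, alpha))
        keyed.append(keyed_row)
    return keyed


# A 2x2 sprite: two opaque pixels and two magenta "holes".
sprite = [
    [(200, 30, 30), MAGENTA],
    [MAGENTA, (30, 30, 200)],
]

for row in apply_color_key(sprite):
    print(row)
```

The match is exact, which is the reason a color that almost never occurs in real artwork was chosen as the key.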

How Neural Architecture Reacts to Error

When researchers began collecting billions of images to train modern deep learning models, they inadvertently included millions of screenshots, video game assets, and graphic files in which pure magenta represented a void or an error. The neural architecture of these systems then learned a deep, unconscious semantic association: the color #FF00FF is not a color to be drawn, but an anomaly to be corrected or a hole to be filled.

Consequently, when the algorithm is pushed to generate that exact color, it behaves as if an error-correction reflex had been triggered. The network treats the canvas like a corrupted image and tries to repair it by adding detail, leaving residual noise, or shifting the hue toward something more “natural” according to the statistics learned during training.

Machine Learning and the Fear of the Perfect Void

There is also a second layer of complexity related to the intrinsic functioning of machine learning applied to image generation. Diffusion models are designed to create details from chaos. Their nature is to add information. Asking these algorithms to generate a pure, flat, and mathematically perfect color is equivalent to asking them not to do their job.

The system interprets the absence of variations (flat color) as a failure of the denoising process. For the algorithm, an image without texture or gradients is an unfinished image. Therefore, it refuses to deliver a result that, according to its mathematical parameters, is incomplete, inserting micro-variations that destroy the purity of the requested color.
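What “absence of variations” means numerically can be shown with a small Python sketch. It assumes an image given as a flat list of RGB tuples, and the helper `channel_spread` is an illustrative name: a perfectly flat fill has a spread of zero on every channel, while even a couple of altered pixels make it nonzero.

```python
def channel_spread(pixels):
    """Return (max - min) for each of the R, G, B channels over a flat list of pixels."""
    if not pixels:
        raise ValueError("the image must contain at least one pixel")
    spreads = []
    for channel in range(3):
        values = [pixel[channel] for pixel in pixels]
        spreads.append(max(values) - min(values))
    return tuple(spreads)


flat_fill = [(255, 0, 255)] * 16
noisy_fill = [(255, 0, 255)] * 14 + [(254, 1, 255), (253, 0, 254)]

print(channel_spread(flat_fill))   # (0, 0, 0)
print(channel_spread(noisy_fill))  # (2, 1, 1)
```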

The Role of LLMs and Color Perception

This phenomenon becomes even more fascinating when we analyze today’s multimodal models. If we query an advanced LLM, such as the latest versions of ChatGPT with visual analysis capabilities, it is perfectly capable of explaining what pure magenta is, writing its exact code, and describing its history in computing. The language side understands the concept flawlessly.

However, when the task moves from text to pixel generation, the block manifests itself. This reveals a disconnect between semantic understanding (language) and visual synthesis (generation). The system knows what the color is and knows its name, but its “pictorial arm” is unable to trace it without altering it, a victim of its own training biases.

A Benchmark for Technological Progress

Far from being a mere amusing flaw, the inability to generate pure magenta has become an unofficial benchmark for developers. Testing how a model handles error colors or flat color fields helps engineers understand how much the algorithm is beholden to biases present in the training data.

Technological progress in the field of artificial intelligence is not only measured by the ability to create increasingly complex images, but also by the ability to unlearn incorrect associations. Being able to force a neural network to generate a perfect #FF00FF square without it trying to “fix” it means having achieved more granular and precise control over the latent space, finally separating the logic of color from the semantics of computer error.
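The article does not define how such a test would be scored, so the following Python sketch is only one possible way to quantify it. The function `purity_report` and its two metrics are assumptions made for illustration: given the pixels of a generated image as a flat list of RGB tuples, it reports the fraction that match the target exactly and the largest error on any single channel.

```python
def purity_report(pixels, target=(255, 0, 255)):
    """Summarize how closely a flat list of RGB pixels matches a target color.

    Returns the fraction of pixels that match exactly and the largest
    absolute error seen on any single channel.
    """
    if not pixels:
        raise ValueError("the image must contain at least one pixel")
    exact = sum(1 for pixel in pixels if tuple(pixel) == target)
    worst = max(
        abs(value - wanted)
        for pixel in pixels
        for value, wanted in zip(pixel, target)
    )
    return {"exact_fraction": exact / len(pixels), "max_channel_error": worst}


# Hypothetical output of a generator: three exact pixels and one near miss.
generated = [(255, 0, 255)] * 3 + [(254, 0, 254)]
print(purity_report(generated))
# {'exact_fraction': 0.75, 'max_channel_error': 1}
```

A perfect #FF00FF square would score an exact fraction of 1.0 and a maximum channel error of 0.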

In Brief (TL;DR)

Despite the infinite capabilities of generative Artificial Intelligence, there is one specific color that machines refuse to reproduce in its pure form.

Pure magenta is perceived by algorithms as a digital error, due to its historical use in computing to indicate missing textures.

Diffusion models interpret the absence of color variations as an incomplete process, inevitably adding imperfections to correct this visual anomaly.

Conclusions

The case of the impossible color reminds us that artificial intelligence, however alien and omnipotent it may seem, is deeply rooted in human history and our technological idiosyncrasies. The refusal to generate pure magenta is not an act of rebellion by the machine, but the echo of decades of programming in which that color meant “error.” As we continue to push the boundaries of what these networks can create, these small anomalies offer us a valuable window into how machines “think” and how, at times, the ghosts of computing’s past continue to haunt the code of the future.

Frequently Asked Questions

The impossible color for generative machines is pure magenta, identified by the hexadecimal code #FF00FF. If you ask software to create an image composed only of this specific hue without gradients, the system will get confused. Instead of returning a perfect canvas, the program will insert visual imperfections or alter the hue to make it more natural.

This block stems from the history of computer graphics, where pure magenta was used as a transparency mask or to indicate a missing texture. Having analyzed billions of images with this characteristic, neural networks have learned to associate this hue with a computer error that must be corrected at all costs.

Diffusion models are designed to add details starting from visual noise. Asking them to generate a flat and perfect fill is interpreted by the system as a failure of the noise removal process. For this reason, the software adds micro-variations to complete what it considers an unfinished image.

Advanced language models perfectly understand the concept of pure magenta and know its complex computer history. However, there is a clear separation between semantic text understanding and visual synthesis. The graphic generator remains stuck with the biases assimilated during the visual training phase, demonstrating how theory does not always translate into digital practice.

The inability to generate this specific hue serves as a key indicator of how much an algorithm is influenced by its training data. Successfully getting the machine to produce a perfect magenta square means achieving superior control over the latent space. This milestone allows engineers to separate color logic from the semantics of past computer errors.
